1.4.1.RELEASE

Copyright © 2008-2014 The original authors.

Table of Contents

- Preface

- I. Introduction

- II. Reference Documentation

- 4. MongoDB support

- 4.1. Getting Started

- 4.2. Examples Repository

- 4.3. Connecting to MongoDB with Spring

- 4.4. General auditing configuration

- 4.5. Introduction to MongoTemplate

- 4.6. Saving, Updating, and Removing Documents

- 4.6.1. How the '_id' field is handled in the mapping layer

- 4.6.2. Type mapping

- 4.6.3. Methods for saving and inserting documents

- 4.6.4. Updating documents in a collection

- 4.6.5. Upserting documents in a collection

- 4.6.6. Finding and Upserting documents in a collection

- 4.6.7. Methods for removing documents

- 4.7. Querying Documents

- 4.8. Map-Reduce Operations

- 4.9. Group Operations

- 4.10. Aggregation Framework Support

- 4.11. Overriding default mapping with custom converters

- 4.12. Index and Collection management

- 4.13. Executing Commands

- 4.14. Lifecycle Events

- 4.15. Exception Translation

- 4.16. Execution callbacks

- 4.17. GridFS support

- 5. MongoDB repositories

- 6. Mapping

- 7. Cross Store support

- 8. Logging support

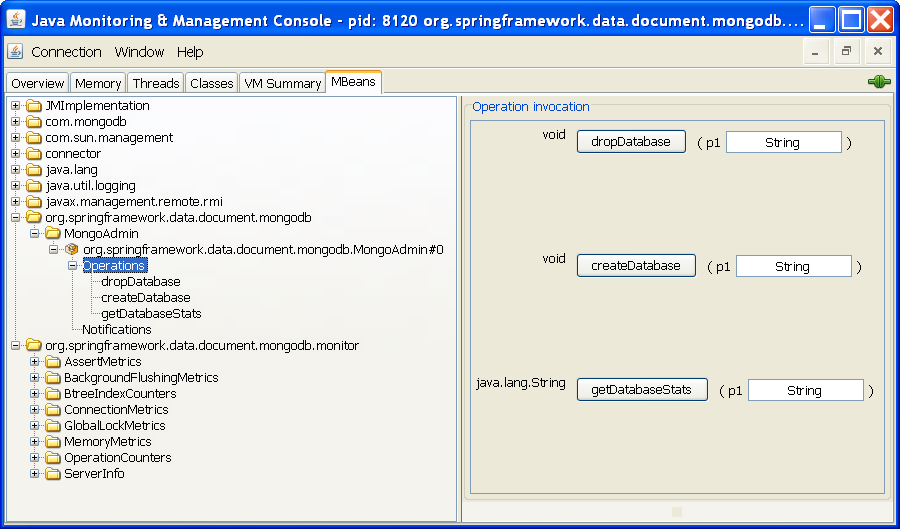

- 9. JMX support

- III. Appendix

The Spring Data MongoDB project applies core Spring concepts to the development of solutions using the MongoDB document style data store. We provide a "template" as a high-level abstraction for storing and querying documents. You will notice similarities to the JDBC support in the Spring Framework.

This document is the reference guide for Spring Data - Document Support. It explains Document module concepts and semantics and the syntax for the namespaces of the various stores.

This section provides a basic introduction to Spring and document databases. The rest of the document refers only to Spring Data Document features and assumes the user is familiar with document databases such as MongoDB and CouchDB, as well as with Spring concepts.

Spring Data uses Spring framework's core functionality, such as the IoC container, type conversion system, expression language, JMX integration, and portable DAO exception hierarchy. While it is not important to know the Spring APIs, understanding the concepts behind them is. At a minimum, the idea behind IoC should be familiar for whatever IoC container you choose to use.

The core functionality of the MongoDB and CouchDB support can be used directly, with no need to invoke the IoC services of the Spring container. This is much like JdbcTemplate, which can be used 'standalone' without any other services of the Spring container. To leverage all the features of Spring Data Document, such as the repository support, you need to configure some parts of the library using Spring.

To learn more about Spring, you can refer to the comprehensive (and sometimes disarming) documentation that explains in detail the Spring Framework. There are a lot of articles, blog entries and books on the matter - take a look at the Spring framework home page for more information.

NoSQL stores have taken the storage world by storm. It is a vast domain with a plethora of solutions, terms and patterns (to make things worse, even the term itself has multiple meanings). While some of the principles are common, it is crucial that the user is familiar to some degree with the stores supported by DATADOC. The best way to get acquainted with these solutions is to read their documentation and follow their examples. It usually doesn't take more than 5-10 minutes to go through them, and if you are coming from an RDBMS-only background, these exercises can often be an eye opener.

The jumping off ground for learning about MongoDB is www.mongodb.org. Here is a list of other useful resources.

- The manual introduces MongoDB and contains links to getting started guides, reference documentation, and tutorials.

- The online shell provides a convenient way to interact with a MongoDB instance in combination with the online tutorial.

- MongoDB Java Language Center

- Several books available for purchase

- Karl Seguin's online book: "The Little MongoDB Book"

Spring Data MongoDB 1.x binaries require JDK level 6.0 and above, and Spring Framework 3.2.x and above.

In terms of document stores, you need MongoDB, preferably version 2.4.

Learning a new framework is not always straightforward. In this section, we try to provide what we think is an easy-to-follow guide for starting with the Spring Data Document module. However, if you encounter issues or you are just looking for advice, feel free to use one of the links below:

There are a few support options available:

The Spring Data forum is a message board for all Spring Data (not just Document) users to share information and help each other. Note that registration is needed only for posting.

Professional, from-the-source support, with guaranteed response time, is available from Pivotal Software, Inc., the company behind Spring Data and Spring.

For information on the Spring Data Mongo source code repository, nightly builds and snapshot artifacts please see the Spring Data Mongo homepage.

You can help make Spring Data best serve the needs of the Spring community by interacting with developers through the Spring Community forums. To follow developer activity look for the mailing list information on the Spring Data Mongo homepage.

If you encounter a bug or want to suggest an improvement, please create a ticket on the Spring Data issue tracker.

To stay up to date with the latest news and announcements in the Spring ecosystem, subscribe to the Spring Community Portal.

Lastly, you can follow the SpringSource Data blog or the project team on Twitter (SpringData).

The goal of Spring Data repository abstraction is to significantly reduce the amount of boilerplate code required to implement data access layers for various persistence stores.

![[Important]](images/important.png) | Important |

|---|---|

Spring Data repository documentation and your module. This chapter explains the core concepts and interfaces of Spring Data repositories. The information in this chapter is pulled from the Spring Data Commons module. It uses the configuration and code samples for the Java Persistence API (JPA) module. Adapt the XML namespace declaration and the types to be extended to the equivalents of the particular module that you are using. Appendix A, Namespace reference, covers XML configuration, which is supported across all Spring Data modules supporting the repository API; Appendix B, Repository query keywords, covers the query method keywords supported by the repository abstraction in general. For detailed information on the specific features of your module, consult the chapter on that module of this document. |

The central interface in the Spring Data repository abstraction is Repository (probably not that much of a surprise). It takes the domain class to manage as well as the ID type of the domain class as type arguments. This interface acts primarily as a marker interface to capture the types to work with and to help you discover interfaces that extend this one. The CrudRepository provides sophisticated CRUD functionality for the entity class that is being managed.

Example 3.1. CrudRepository interface

```java
public interface CrudRepository<T, ID extends Serializable> extends Repository<T, ID> {

  <S extends T> S save(S entity);

  T findOne(ID primaryKey);

  Iterable<T> findAll();

  long count();

  void delete(T entity);

  boolean exists(ID primaryKey);

  // … more functionality omitted.
}
```

- save(…): Saves the given entity.

- findOne(…): Returns the entity identified by the given ID.

- findAll(): Returns all entities.

- count(): Returns the number of entities.

- delete(…): Deletes the given entity.

- exists(…): Indicates whether an entity with the given ID exists.

![[Note]](images/note.png) | Note |

|---|---|

We also provide persistence technology-specific abstractions, such as … |

On top of the CrudRepository there is a PagingAndSortingRepository abstraction that adds methods to ease paginated access to entities:

Example 3.2. PagingAndSortingRepository

```java
public interface PagingAndSortingRepository<T, ID extends Serializable>
  extends CrudRepository<T, ID> {

  Iterable<T> findAll(Sort sort);

  Page<T> findAll(Pageable pageable);
}
```

To access the second page of User with a page size of 20, you could do something like this:

```java
PagingAndSortingRepository<User, Long> repository = // … get access to a bean
Page<User> users = repository.findAll(new PageRequest(1, 20));
```
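PageRequest uses a zero-based page index, which is why new PageRequest(1, 20) addresses the second page. The arithmetic behind that can be sketched in plain Java. PageSlice is a hypothetical helper for illustration only, not a Spring Data type, and it slices an in-memory list instead of querying a store.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical helper illustrating zero-based paging arithmetic; not a Spring Data API.
final class PageSlice {

  private PageSlice() {}

  // Number of elements skipped before the requested page starts.
  static long offset(int page, int size) {
    if (page < 0 || size < 1) {
      throw new IllegalArgumentException("page must be >= 0 and size >= 1");
    }
    return (long) page * size;
  }

  // Returns the elements of the requested page from an in-memory list.
  static <T> List<T> slice(List<T> all, int page, int size) {
    long start = offset(page, size);
    if (start >= all.size()) {
      return Collections.emptyList();
    }
    int end = (int) Math.min(all.size(), start + size);
    return new ArrayList<>(all.subList((int) start, end));
  }
}
```

With 45 elements and a page size of 20, page index 1 starts at element 20, and page index 2 holds only the last 5 elements.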

Besides standard CRUD functionality, repositories usually need to run queries on the underlying datastore. With Spring Data, declaring those queries becomes a four-step process:

1. Declare an interface extending Repository or one of its subinterfaces and type it to the domain class and ID type that it will handle.

```java
public interface PersonRepository extends Repository<Person, Long> { … }
```

2. Declare query methods on the interface.

```java
List<Person> findByLastname(String lastname);
```

3. Set up Spring to create proxy instances for those interfaces, either via JavaConfig:

```java
import org.springframework.context.annotation.Configuration;
import org.springframework.data.jpa.repository.config.EnableJpaRepositories;

@Configuration
@EnableJpaRepositories
class Config {}
```

or via XML configuration:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<beans xmlns="http://www.springframework.org/schema/beans"
  xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xmlns:jpa="http://www.springframework.org/schema/data/jpa"
  xsi:schemaLocation="http://www.springframework.org/schema/beans
    http://www.springframework.org/schema/beans/spring-beans.xsd
    http://www.springframework.org/schema/data/jpa
    http://www.springframework.org/schema/data/jpa/spring-jpa.xsd">

  <jpa:repositories base-package="com.acme.repositories"/>

</beans>
```

The JPA namespace is used in this example. If you are using the repository abstraction for any other store, you need to change this to the appropriate namespace declaration of your store module, for example by exchanging jpa in favor of mongodb. Also note that the JavaConfig variant doesn't configure a package explicitly, as the package of the annotated class is used by default. To customize the package to scan, …

4. Get the repository instance injected and use it.

```java
public class SomeClient {

  @Autowired
  private PersonRepository repository;

  public void doSomething() {
    List<Person> persons = repository.findByLastname("Matthews");
  }
}
```

The sections that follow explain each step.

As a first step you define a domain class-specific repository interface. The interface must extend Repository and be typed to the domain class and an ID type. If you want to expose CRUD methods for that domain type, extend CrudRepository instead of Repository.

Typically, your repository interface will extend Repository, CrudRepository, or PagingAndSortingRepository. Alternatively, if you do not want to extend Spring Data interfaces, you can also annotate your repository interface with @RepositoryDefinition. Extending CrudRepository exposes a complete set of methods to manipulate your entities. If you prefer to be selective about the methods being exposed, simply copy the ones you want to expose from CrudRepository into your domain repository.

![[Note]](images/note.png) | Note |

|---|---|

This allows you to define your own abstractions on top of the provided Spring Data Repositories functionality. |

Example 3.3. Selectively exposing CRUD methods

```java
@NoRepositoryBean
interface MyBaseRepository<T, ID extends Serializable> extends Repository<T, ID> {

  T findOne(ID id);

  T save(T entity);
}

interface UserRepository extends MyBaseRepository<User, Long> {

  User findByEmailAddress(EmailAddress emailAddress);
}
```

In this first step you defined a common base interface for all your domain repositories and exposed findOne(…) as well as save(…). These methods will be routed into the base repository implementation of the store of your choice provided by Spring Data (for example, SimpleJpaRepository in the case of JPA), because they match the method signatures in CrudRepository. So the UserRepository will now be able to save users, find single ones by ID, and trigger a query to find Users by their email address.

![[Note]](images/note.png) | Note |

|---|---|

Note that the intermediate repository interface is annotated with … |

The repository proxy has two ways to derive a store-specific query: it can derive the query from the method name directly, or it can use a manually defined query. Available options depend on the actual store. However, there has to be a strategy that decides what actual query is created. Let's have a look at the available options.

The following strategies are available for the repository infrastructure to resolve the query. You can configure the strategy at the namespace through the query-lookup-strategy attribute in case of XML configuration, or via the queryLookupStrategy attribute of the Enable${store}Repositories annotation in case of Java config. Some strategies may not be supported for particular datastores.

- CREATE attempts to construct a store-specific query from the query method name. The general approach is to remove a given set of well-known prefixes from the method name and parse the rest of the method. Read more about query construction in the section called “Query creation”.

- USE_DECLARED_QUERY tries to find a declared query and throws an exception in case it can't find one. The query can be defined by an annotation somewhere or declared by other means. Consult the documentation of the specific store to find available options for that store. If the repository infrastructure does not find a declared query for the method at bootstrap time, it fails.

- CREATE_IF_NOT_FOUND combines CREATE and USE_DECLARED_QUERY. It looks up a declared query first, and if no declared query is found, it creates a custom method name-based query. This is the default lookup strategy and thus will be used if you do not configure anything explicitly. It allows quick query definition by method names but also custom-tuning of these queries by introducing declared queries as needed.
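The decision each strategy makes can be modeled in a few lines of plain Java. This is a hypothetical sketch, not Spring Data's implementation: LookupStrategySketch, its Key enum, and the "derived:" marker are invented here, and declared queries are represented as a simple map from method name to query string.

```java
import java.util.Map;

// Hypothetical model of the three query lookup strategies; not Spring Data code.
final class LookupStrategySketch {

  enum Key { CREATE, USE_DECLARED_QUERY, CREATE_IF_NOT_FOUND }

  private LookupStrategySketch() {}

  // Returns the query to run for a method, or fails the way a bootstrap error would.
  static String resolve(Key key, String methodName, Map<String, String> declaredQueries) {
    String declared = declaredQueries.get(methodName);
    switch (key) {
      case CREATE:
        // Always derive from the method name, ignoring any declared query.
        return "derived:" + methodName;
      case USE_DECLARED_QUERY:
        if (declared == null) {
          throw new IllegalStateException("No declared query found for " + methodName);
        }
        return declared;
      case CREATE_IF_NOT_FOUND:
      default:
        // Prefer a declared query and fall back to deriving one (the default strategy).
        return declared != null ? declared : "derived:" + methodName;
    }
  }
}
```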

The query builder mechanism built into the Spring Data repository infrastructure is useful for building constraining queries over entities of the repository. The mechanism strips the prefixes find…By, read…By, query…By, count…By, and get…By from the method and starts parsing the rest of it. The introducing clause can contain further expressions, such as Distinct to set a distinct flag on the query to be created. However, the first By acts as a delimiter to indicate the start of the actual criteria. At a very basic level you can define conditions on entity properties and concatenate them with And and Or.

Example 3.4. Query creation from method names

```java
public interface PersonRepository extends Repository<Person, Long> {

  List<Person> findByEmailAddressAndLastname(EmailAddress emailAddress, String lastname);

  // Enables the distinct flag for the query
  List<Person> findDistinctPeopleByLastnameOrFirstname(String lastname, String firstname);
  List<Person> findPeopleDistinctByLastnameOrFirstname(String lastname, String firstname);

  // Enabling ignoring case for an individual property
  List<Person> findByLastnameIgnoreCase(String lastname);
  // Enabling ignoring case for all suitable properties
  List<Person> findByLastnameAndFirstnameAllIgnoreCase(String lastname, String firstname);

  // Enabling static ORDER BY for a query
  List<Person> findByLastnameOrderByFirstnameAsc(String lastname);
  List<Person> findByLastnameOrderByFirstnameDesc(String lastname);
}
```

The actual result of parsing the method depends on the persistence store for which you create the query. However, there are some general things to notice.

- The expressions are usually property traversals combined with operators that can be concatenated. You can combine property expressions with AND and OR. You also get support for operators such as Between, LessThan, GreaterThan, and Like for the property expressions. The supported operators can vary by datastore, so consult the appropriate part of your reference documentation.

- The method parser supports setting an IgnoreCase flag for individual properties (for example, findByLastnameIgnoreCase(…)) or for all properties of a type that support ignoring case (usually Strings, for example, findByLastnameAndFirstnameAllIgnoreCase(…)). Whether ignoring case is supported may vary by store, so consult the relevant sections in the reference documentation for the store-specific query method.

- You can apply static ordering by appending an OrderBy clause to the query method that references a property and by providing a sorting direction (Asc or Desc). To create a query method that supports dynamic sorting, see the section called “Special parameter handling”.
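To make these parsing rules more concrete, here is a deliberately simplified, hypothetical parser in plain Java. It is not Spring Data's actual parser and ignores IgnoreCase, operator keywords, and property validation. It only strips the subject up to the first By, detects Distinct, cuts off a trailing OrderBy clause, and splits the criteria on Or and And where they are followed by an uppercase letter, so that property names merely containing those letters are left intact.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical, simplified model of derived-query parsing; not Spring Data's parser.
final class MethodNameSketch {

  // A known prefix, optional words (e.g. "DistinctPeople"), then the first "By".
  private static final Pattern SUBJECT =
      Pattern.compile("^(find|read|query|count|get)(.*?)By(?=\\p{Lu})");

  final boolean distinct;
  final List<List<String>> orParts; // each inner list holds properties joined by And
  final String orderBy;             // e.g. "FirstnameAsc", or null if absent

  private MethodNameSketch(boolean distinct, List<List<String>> orParts, String orderBy) {
    this.distinct = distinct;
    this.orParts = orParts;
    this.orderBy = orderBy;
  }

  static MethodNameSketch parse(String methodName) {
    Matcher m = SUBJECT.matcher(methodName);
    if (!m.find()) {
      throw new IllegalArgumentException("Unsupported method name: " + methodName);
    }
    boolean distinct = m.group(2).contains("Distinct");
    String criteria = methodName.substring(m.end());

    // Everything after OrderBy describes static sorting, not criteria.
    String orderBy = null;
    int idx = criteria.indexOf("OrderBy");
    if (idx >= 0) {
      orderBy = criteria.substring(idx + "OrderBy".length());
      criteria = criteria.substring(0, idx);
    }

    // Split on Or/And only where a new (capitalized) property name follows.
    List<List<String>> orParts = new ArrayList<>();
    for (String orPart : criteria.split("Or(?=\\p{Lu})")) {
      orParts.add(Arrays.asList(orPart.split("And(?=\\p{Lu})")));
    }
    return new MethodNameSketch(distinct, orParts, orderBy);
  }
}
```

For example, findDistinctPeopleByLastnameOrFirstname yields the distinct flag and two Or groups, Lastname and Firstname.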

Property expressions can refer only to a direct property of the managed entity, as shown in the preceding example. At query creation time you already make sure that the parsed property is a property of the managed domain class. However, you can also define constraints by traversing nested properties. Assume Persons have Addresses with ZipCodes. In that case a method name of

```java
List<Person> findByAddressZipCode(ZipCode zipCode);
```

creates the property traversal x.address.zipCode.

The resolution algorithm starts by interpreting the entire part (AddressZipCode) as the property and checks the domain class for a property with that name (uncapitalized). If the algorithm succeeds, it uses that property. If not, the algorithm splits up the source at the camel case parts from the right side into a head and a tail and tries to find the corresponding property, in our example, AddressZip and Code. If the algorithm finds a property with that head, it takes the tail and continues building the tree down from there, splitting the tail up in the way just described. If the first split does not match, the algorithm moves the split point to the left (Address, ZipCode) and continues.

Although this should work for most cases, it is possible for the algorithm to select the wrong property. Suppose the Person class has an addressZip property as well. The algorithm would match in the first split round already, choose the wrong property, and finally fail (as the type of addressZip probably has no code property). To resolve this ambiguity you can use _ inside your method name to manually define traversal points. So our method name would end up like this:

```java
List<Person> findByAddress_ZipCode(ZipCode zipCode);
```
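The resolution steps, including the ambiguity and the _ workaround, can be traced with a small hypothetical model in plain Java. PropertyPathSketch is not Spring Data's implementation. Here the domain model is represented as a map from a type name to its property names and property types, and sampleModel builds the Person and Address example above, optionally with the conflicting addressZip property.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical model of the property resolution described above; not Spring Data code.
final class PropertyPathSketch {

  private PropertyPathSketch() {}

  // Resolves e.g. "AddressZipCode" against "Person" into [address, zipCode].
  static List<String> resolve(String type, String path, Map<String, Map<String, String>> model) {
    List<String> result = new ArrayList<>();
    String current = type;
    for (String segment : path.split("_")) { // "_" marks an explicit traversal point
      current = resolveCamelCase(current, segment, model, result);
    }
    return result;
  }

  private static String resolveCamelCase(String type, String path,
      Map<String, Map<String, String>> model, List<String> result) {
    Map<String, String> properties = model.getOrDefault(type, new HashMap<>());
    String whole = uncapitalize(path);
    if (properties.containsKey(whole)) { // 1. try the entire part as one property
      result.add(whole);
      return properties.get(whole);
    }
    // 2. split at camel-case boundaries, moving the split point from right to left
    for (int i = path.length() - 1; i > 0; i--) {
      if (!Character.isUpperCase(path.charAt(i))) {
        continue;
      }
      String head = uncapitalize(path.substring(0, i));
      if (properties.containsKey(head)) {
        result.add(head);
        // No backtracking: a wrong head match fails further down the tree.
        return resolveCamelCase(properties.get(head), path.substring(i), model, result);
      }
    }
    throw new IllegalArgumentException("No property " + whole + " found on " + type);
  }

  private static String uncapitalize(String s) {
    return Character.toLowerCase(s.charAt(0)) + s.substring(1);
  }

  // Sample model: Person.address -> Address.zipCode, optionally Person.addressZip.
  static Map<String, Map<String, String>> sampleModel(boolean withAddressZip) {
    Map<String, String> person = new HashMap<>();
    person.put("address", "Address");
    if (withAddressZip) {
      person.put("addressZip", "AddressZip"); // AddressZip has no "code" property
    }
    Map<String, String> address = new HashMap<>();
    address.put("zipCode", "ZipCode");
    Map<String, Map<String, String>> model = new HashMap<>();
    model.put("Person", person);
    model.put("Address", address);
    return model;
  }
}
```

With the addressZip property present, resolving AddressZipCode fails in this model, while Address_ZipCode still resolves to address and zipCode.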

To handle parameters in your query, you simply define method parameters as already seen in the examples above. Besides that, the infrastructure recognizes certain specific types like Pageable and Sort to apply pagination and sorting to your queries dynamically.

Example 3.5. Using Pageable and Sort in query methods

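```java
// Alternative declarations: the first and the third cannot coexist in one
// interface, because their parameter lists are identical.
Page<User> findByLastname(String lastname, Pageable pageable);

List<User> findByLastname(String lastname, Sort sort);

List<User> findByLastname(String lastname, Pageable pageable);
```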

The first method allows you to pass an org.springframework.data.domain.Pageable instance to the query method to dynamically add paging to your statically defined query. Sorting options are handled through the Pageable instance too. If you only need sorting, simply add an org.springframework.data.domain.Sort parameter to your method. As you can also see, simply returning a List is possible as well. In this case the additional metadata required to build the actual Page instance is not created (which in turn means that the additional count query that would have been necessary is not issued); instead, the query is simply restricted to look up only the given range of entities.

![[Note]](images/note.png) | Note |

|---|---|

To find out how many pages you get for a query in total, you have to trigger an additional count query. By default this query will be derived from the query you actually trigger. |
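The total page count is exactly what the extra count query makes possible. As a minimal sketch, assuming zero-based page indexes as with PageRequest, the hypothetical PageCountSketch helper below (not a Spring Data type) derives page metadata from a total element count:

```java
// Hypothetical helper; shows why a Page needs the total element count.
final class PageCountSketch {

  private PageCountSketch() {}

  // Pages needed for `total` elements with `size` elements per page (ceiling division).
  static int totalPages(long total, int size) {
    if (size < 1) {
      throw new IllegalArgumentException("size must be >= 1");
    }
    return (int) ((total + size - 1) / size);
  }

  // Whether the zero-based page `page` has a following page.
  static boolean hasNext(int page, long total, int size) {
    return page + 1 < totalPages(total, size);
  }
}
```

When a query method returns a List instead of a Page, this total is never needed, so no count query has to be issued.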

In this section you create instances and bean definitions for the repository interfaces you defined. One way to do so is using the Spring namespace that is shipped with each Spring Data module that supports the repository mechanism, although we generally recommend using JavaConfig-style configuration.

Each Spring Data module includes a repositories element that allows you to simply define a base package that Spring scans for you.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<beans:beans xmlns:beans="http://www.springframework.org/schema/beans"
  xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xmlns="http://www.springframework.org/schema/data/jpa"
  xsi:schemaLocation="http://www.springframework.org/schema/beans
    http://www.springframework.org/schema/beans/spring-beans.xsd
    http://www.springframework.org/schema/data/jpa
    http://www.springframework.org/schema/data/jpa/spring-jpa.xsd">

  <repositories base-package="com.acme.repositories" />

</beans:beans>
```

In the preceding example, Spring is instructed to scan com.acme.repositories and all its subpackages for interfaces extending Repository or one of its subinterfaces. For each interface found, the infrastructure registers the persistence technology-specific FactoryBean to create the appropriate proxies that handle invocations of the query methods. Each bean is registered under a bean name that is derived from the interface name, so an interface of UserRepository would be registered under userRepository. The base-package attribute allows wildcards, so that you can define a pattern of scanned packages.
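The naming rule can be approximated with java.beans.Introspector.decapitalize, which lowercases the first character unless the first two characters are both uppercase. Treat this as a sketch under that assumption: BeanNameSketch is hypothetical, and Spring's own bean name generation is not reproduced here.

```java
import java.beans.Introspector;

// Hypothetical approximation of deriving a repository bean name from its interface name.
final class BeanNameSketch {

  private BeanNameSketch() {}

  static String beanName(String interfaceName) {
    // Drop the package: com.acme.repositories.UserRepository -> UserRepository
    String simpleName = interfaceName.substring(interfaceName.lastIndexOf('.') + 1);
    // UserRepository -> userRepository; URLRepository stays unchanged
    return Introspector.decapitalize(simpleName);
  }
}
```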

By default the infrastructure picks up every interface extending the persistence technology-specific Repository subinterface located under the configured base package and creates a bean instance for it. However, you might want more fine-grained control over which interfaces get bean instances created. To do this, you use <include-filter /> and <exclude-filter /> elements inside <repositories />. The semantics are exactly equivalent to the elements in Spring's context namespace. For details, see the Spring reference documentation on these elements.

For example, to exclude certain interfaces from instantiation as repository, you could use the following configuration:

Example 3.6. Using exclude-filter element

```xml
<repositories base-package="com.acme.repositories">
  <context:exclude-filter type="regex" expression=".*SomeRepository" />
</repositories>
```

This example excludes all interfaces ending in SomeRepository from being instantiated.
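A regex type filter is assumed here to match its expression against the entire fully qualified name of each candidate interface. Under that assumption, the following plain-Java sketch shows which candidates the filter above would keep. ExcludeFilterSketch and the candidate names are hypothetical and not part of Spring's TypeFilter API.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical sketch of an exclude filter of type "regex"; not Spring's TypeFilter API.
final class ExcludeFilterSketch {

  private ExcludeFilterSketch() {}

  // Keeps only candidates whose fully qualified name does NOT fully match the expression.
  static List<String> apply(String expression, List<String> candidates) {
    Pattern pattern = Pattern.compile(expression);
    List<String> kept = new ArrayList<>();
    for (String candidate : candidates) {
      if (!pattern.matcher(candidate).matches()) {
        kept.add(candidate);
      }
    }
    return kept;
  }
}
```

Because the whole name must match in this sketch, an interface such as SomeRepositoryCustom would not be excluded by .*SomeRepository.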

The repository infrastructure can also be triggered using a store-specific @Enable${store}Repositories annotation on a JavaConfig class. For an introduction to Java-based configuration of the Spring container, see the reference documentation.[1]

A sample configuration to enable Spring Data repositories looks something like this.

Example 3.7. Sample annotation based repository configuration

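```java
import javax.persistence.EntityManagerFactory;

import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.data.jpa.repository.config.EnableJpaRepositories;

@Configuration
@EnableJpaRepositories("com.acme.repositories")
class ApplicationConfiguration {

  @Bean
  public EntityManagerFactory entityManagerFactory() {
    // …
  }
}
```

![[Note]](images/note.png) | Note |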

|---|---|

The sample uses the JPA-specific annotation, which you would change according to the store module you actually use. The same applies to the definition of the … |

You can also use the repository infrastructure outside of a Spring container, for example in CDI environments. You still need some Spring libraries in your classpath, but generally you can set up repositories programmatically as well. The Spring Data modules that provide repository support ship a persistence technology-specific RepositoryFactory that you can use as follows.

Example 3.8. Standalone usage of repository factory

```java
RepositoryFactorySupport factory = … // Instantiate factory here
UserRepository repository = factory.getRepository(UserRepository.class);
```
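Conceptually, a repository factory hands out a proxy that implements your interface and decides how to handle each method call. The plain-Java sketch below shows only that idea, using java.lang.reflect.Proxy and an in-memory map. InMemoryRepositoryFactory and NameRepository are hypothetical, and this is not how RepositoryFactorySupport is implemented.

```java
import java.lang.reflect.Proxy;
import java.util.HashMap;
import java.util.Map;

// Hypothetical repository interface used only for this sketch.
interface NameRepository {
  void save(String id, String name);
  String findOne(String id);
  long count();
}

// Hypothetical factory: creates interface proxies backed by an in-memory map.
final class InMemoryRepositoryFactory {

  private final Map<Object, Object> store = new HashMap<>();

  <T> T getRepository(Class<T> repositoryInterface) {
    Object proxy = Proxy.newProxyInstance(
        repositoryInterface.getClassLoader(),
        new Class<?>[] { repositoryInterface },
        (p, method, args) -> {
          // Dispatch on the invoked method's name.
          switch (method.getName()) {
            case "save":
              store.put(args[0], args[1]);
              return null;
            case "findOne":
              return store.get(args[0]);
            case "count":
              return (long) store.size();
            default:
              throw new UnsupportedOperationException(method.getName());
          }
        });
    return repositoryInterface.cast(proxy);
  }
}
```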

Often it is necessary to provide a custom implementation for a few repository methods. Spring Data repositories easily allow you to provide custom repository code and integrate it with generic CRUD abstraction and query method functionality.

To enrich a repository with custom functionality, you first define an interface and an implementation for the custom functionality. Then let the repository interface you provided extend the custom interface.

Example 3.9. Interface for custom repository functionality

```java
interface UserRepositoryCustom {

  void someCustomMethod(User user);
}
```

Example 3.10. Implementation of custom repository functionality

```java
class UserRepositoryImpl implements UserRepositoryCustom {

  public void someCustomMethod(User user) {
    // Your custom implementation
  }
}
```

![[Note]](images/note.png) | Note |

|---|---|

The implementation itself does not depend on Spring Data and can be a regular Spring bean. So you can use standard dependency injection behavior to inject references to other beans like a … |

Example 3.11. Changes to your basic repository interface

```java
public interface UserRepository extends CrudRepository<User, Long>, UserRepositoryCustom {

  // Declare query methods here
}
```

Let your standard repository interface extend the custom one. Doing so combines the CRUD and custom functionality and makes it available to clients.

If you use namespace configuration, the repository infrastructure tries to autodetect custom implementations by scanning for classes below the package we found a repository in. These classes need to follow the naming convention of appending the namespace element's attribute repository-impl-postfix to the found repository interface name. This postfix defaults to Impl.

Example 3.12. Configuration example

```xml
<repositories base-package="com.acme.repository" />

<repositories base-package="com.acme.repository" repository-impl-postfix="FooBar" />
```

The first configuration example will try to look up a class com.acme.repository.UserRepositoryImpl to act as custom repository implementation, whereas the second example will try to look up com.acme.repository.UserRepositoryFooBar.
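The lookup convention boils down to appending the postfix to the interface name and accepting matching classes at or below the base package. The sketch below is a hypothetical plain-Java illustration of that convention, not Spring Data's scanning code, and it assumes the candidate class names were already collected by a classpath scan.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration of the custom-implementation naming convention.
final class CustomImplLookupSketch {

  static final String DEFAULT_POSTFIX = "Impl";

  private CustomImplLookupSketch() {}

  // UserRepository + "Impl" -> UserRepositoryImpl; a null postfix means the default.
  static String implementationSimpleName(String interfaceSimpleName, String postfix) {
    return interfaceSimpleName + (postfix == null ? DEFAULT_POSTFIX : postfix);
  }

  // Candidates are fully qualified class names from an (assumed) classpath scan.
  static List<String> matches(String basePackage, String simpleName, List<String> candidates) {
    List<String> result = new ArrayList<>();
    for (String candidate : candidates) {
      boolean belowBasePackage = candidate.startsWith(basePackage + ".");
      boolean sameSimpleName = candidate.endsWith("." + simpleName);
      if (belowBasePackage && sameSimpleName) {
        result.add(candidate);
      }
    }
    return result;
  }
}
```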

The preceding approach works well if your custom implementation uses annotation-based configuration and autowiring only, as it will be treated as any other Spring bean. If your custom implementation bean needs special wiring, you simply declare the bean and name it after the conventions just described. The infrastructure will then refer to the manually defined bean definition by name instead of creating one itself.

Example 3.13. Manual wiring of custom implementations (I)

```xml
<repositories base-package="com.acme.repository" />

<beans:bean id="userRepositoryImpl" class="…">
  <!-- further configuration -->
</beans:bean>
```

The preceding approach is not feasible when you want to add a single method to all your repository interfaces.

To add custom behavior to all repositories, you first add an intermediate interface to declare the shared behavior.

Example 3.14. An interface declaring custom shared behavior

```java
public interface MyRepository<T, ID extends Serializable>
  extends JpaRepository<T, ID> {

  void sharedCustomMethod(ID id);
}
```

Now your individual repository interfaces will extend this intermediate interface instead of the Repository interface to include the functionality declared.

Next, create an implementation of the intermediate interface that extends the persistence technology-specific repository base class. This class will then act as a custom base class for the repository proxies.

Example 3.15. Custom repository base class

```java
public class MyRepositoryImpl<T, ID extends Serializable>
  extends SimpleJpaRepository<T, ID> implements MyRepository<T, ID> {

  private EntityManager entityManager;

  // SimpleJpaRepository offers two constructors to choose from; either can be called here.
  public MyRepositoryImpl(Class<T> domainClass, EntityManager entityManager) {
    super(domainClass, entityManager);

    // This is the recommended method for accessing inherited class dependencies.
    this.entityManager = entityManager;
  }

  public void sharedCustomMethod(ID id) {
    // implementation goes here
  }
}
```

The default behavior of the Spring <repositories /> namespace is to provide an implementation for all interfaces that fall under the base-package. This means that if left in its current state, an implementation instance of MyRepository will be created by Spring. This is of course not desired, as it is just supposed to act as an intermediary between Repository and the actual repository interfaces you want to define for each entity. To exclude an interface that extends Repository from being instantiated as a repository instance, you can either annotate it with @NoRepositoryBean or move it outside of the configured base-package.

Then create a custom repository factory to replace the default RepositoryFactoryBean that will in turn produce a custom RepositoryFactory. The new repository factory will then provide your MyRepositoryImpl as the implementation of any interfaces that extend the Repository interface, replacing the SimpleJpaRepository implementation you just extended.

Example 3.16. Custom repository factory bean

```java
public class MyRepositoryFactoryBean<R extends JpaRepository<T, I>, T, I extends Serializable>
  extends JpaRepositoryFactoryBean<R, T, I> {

  protected RepositoryFactorySupport createRepositoryFactory(EntityManager entityManager) {
    return new MyRepositoryFactory(entityManager);
  }

  private static class MyRepositoryFactory<T, I extends Serializable> extends JpaRepositoryFactory {

    private EntityManager entityManager;

    public MyRepositoryFactory(EntityManager entityManager) {
      super(entityManager);
      this.entityManager = entityManager;
    }

    protected Object getTargetRepository(RepositoryMetadata metadata) {
      return new MyRepositoryImpl<T, I>((Class<T>) metadata.getDomainClass(), entityManager);
    }

    protected Class<?> getRepositoryBaseClass(RepositoryMetadata metadata) {
      // The RepositoryMetadata can be safely ignored, it is used by the JpaRepositoryFactory
      // to check for QueryDslJpaRepository's which is out of scope.
      return MyRepository.class;
    }
  }
}
```

Finally, either declare beans of the custom factory directly or use the factory-class attribute of the Spring namespace to tell the repository infrastructure to use your custom factory implementation.

Example 3.17. Using the custom factory with the namespace

```xml
<repositories base-package="com.acme.repository"
  factory-class="com.acme.MyRepositoryFactoryBean" />
```

This section documents a set of Spring Data extensions that enable Spring Data usage in a variety of contexts. Currently most of the integration is targeted towards Spring MVC.

![[Note]](images/note.png) | Note |

|---|---|

This section contains the documentation for the Spring Data web support as it is implemented as of Spring Data Commons in the 1.6 range. As the newly introduced support changes quite a lot of things, we kept the documentation of the former behavior in Section 3.4.3, “Legacy web support”. Also note that the JavaConfig support introduced in Spring Data Commons 1.6 requires Spring 3.2 due to some issues with JavaConfig and overridden methods in Spring 3.1. |

Spring Data modules ship with a variety of web support if the module supports the repository programming model. The web-related features require Spring MVC JARs on the classpath, and some of them even provide integration with Spring HATEOAS.[2]

In general, the integration support is enabled by using the @EnableSpringDataWebSupport annotation in your JavaConfig configuration class.

Example 3.18. Enabling Spring Data web support

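```java
import org.springframework.context.annotation.Configuration;
import org.springframework.data.web.config.EnableSpringDataWebSupport;
import org.springframework.web.servlet.config.annotation.EnableWebMvc;

@Configuration
@EnableWebMvc
@EnableSpringDataWebSupport
class WebConfiguration { }
```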

The @EnableSpringDataWebSupport annotation registers a few components we will discuss in a bit. It will also detect Spring HATEOAS on the classpath and register integration components for it as well if present.

Alternatively, if you are using XML configuration, register either SpringDataWebConfiguration or HateoasAwareSpringDataWebConfiguration as Spring beans:

Example 3.19. Enabling Spring Data web support in XML

```xml
<bean class="org.springframework.data.web.config.SpringDataWebConfiguration" />

<!-- If you're using Spring HATEOAS as well register this one *instead* of the former -->
<bean class="org.springframework.data.web.config.HateoasAwareSpringDataWebConfiguration" />
```

The configuration setup shown above will register a few basic components:

- A DomainClassConverter to enable Spring MVC to resolve instances of repository managed domain classes from request parameters or path variables.
- HandlerMethodArgumentResolver implementations to let Spring MVC resolve Pageable and Sort instances from request parameters.

The DomainClassConverter allows you to use domain types in your Spring MVC controller method signatures directly, so that you don't have to look up the instances manually via the repository:

Example 3.20. A Spring MVC controller using domain types in method signatures

@Controller
@RequestMapping("/users")
public class UserController {

    @RequestMapping("/{id}")
    public String showUserForm(@PathVariable("id") User user, Model model) {

        model.addAttribute("user", user);
        return "userForm";
    }
}

As you can see, the method receives a User instance directly and no further lookup is necessary. The instance can be resolved by letting Spring MVC convert the path variable into the id type of the domain class first and then access the instance by calling findOne(…) on the repository instance registered for the domain type.
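The two-step resolution just described (convert the path variable to the id type, then look the entity up) can be illustrated with a small JDK-only sketch. `User`, `IdToEntityConverter`, and the `Map` standing in for the repository are hypothetical names chosen for this example. This is not the source of Spring Data's `DomainClassConverter`. It only shows the idea behind it.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;
import java.util.function.Function;

// Hypothetical domain type used only for this sketch.
final class User {
    final Long id;
    final String name;

    User(Long id, String name) {
        this.id = id;
        this.name = name;
    }
}

// Hypothetical two-step converter: raw String -> id type -> entity lookup.
final class IdToEntityConverter<ID, T> {

    private final Function<String, ID> idParser; // step 1: convert the path variable
    private final Function<ID, T> findOne;       // step 2: stand-in for repository.findOne(id)

    IdToEntityConverter(Function<String, ID> idParser, Function<ID, T> findOne) {
        this.idParser = idParser;
        this.findOne = findOne;
    }

    Optional<T> convert(String source) {
        if (source == null || source.isEmpty()) {
            return Optional.empty(); // nothing to look up
        }
        ID id = idParser.apply(source);
        return Optional.ofNullable(findOne.apply(id)); // empty if no entity has that id
    }
}
```

If you wire this with `Long::valueOf` as the id parser and a map's `get` as the lookup, `convert("42")` returns the stored user. An unknown or empty id yields an empty `Optional`. In the real setup, the repository's `findOne(…)` plays the lookup role.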

Note: Currently the repository has to implement CrudRepository to be eligible to be discovered for conversion.

The configuration snippet above also registers a PageableHandlerMethodArgumentResolver and an instance of SortHandlerMethodArgumentResolver. This registration makes Pageable and Sort valid controller method arguments.

Example 3.21. Using Pageable as controller method argument

@Controller
@RequestMapping("/users")
public class UserController {

    @Autowired UserRepository repository;

    @RequestMapping
    public String showUsers(Model model, Pageable pageable) {

        model.addAttribute("users", repository.findAll(pageable));
        return "users";
    }
}

This method signature will cause Spring MVC to try to derive a Pageable instance from the request parameters using the following default configuration:

Table 3.1. Request parameters evaluated for Pageable instances

| Parameter | Description |
|---|---|
| page | Page you want to retrieve. |
| size | Size of the page you want to retrieve. |
| sort | Properties that should be sorted by in the format property,property(,ASC\|DESC). Default sort direction is ascending. Use multiple sort parameters if you want to switch directions, e.g. ?sort=firstname&sort=lastname,asc. |
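To make the `sort` format in Table 3.1 concrete, here is a JDK-only sketch that splits repeated `sort` values into property/direction pairs. It applies a trailing `ASC` or `DESC` token (ascending by default) to every property listed before it. `SortParameterParser` and `SortOrder` are hypothetical names, not Spring Data classes. The real `SortHandlerMethodArgumentResolver` may handle edge cases such as blank values differently.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Locale;

// Hypothetical value type: one property plus its sort direction.
final class SortOrder {
    final String property;
    final boolean ascending;

    SortOrder(String property, boolean ascending) {
        this.property = property;
        this.ascending = ascending;
    }

    @Override
    public String toString() {
        return property + (ascending ? " ASC" : " DESC");
    }
}

final class SortParameterParser {

    // Parses every value of the (possibly repeated) "sort" request parameter.
    static List<SortOrder> parse(List<String> sortValues) {
        List<SortOrder> orders = new ArrayList<>();
        for (String value : sortValues) {
            List<String> parts = new ArrayList<>(Arrays.asList(value.split(",")));
            boolean ascending = true; // default direction is ascending
            String last = parts.get(parts.size() - 1).trim().toUpperCase(Locale.ROOT);
            if (last.equals("ASC") || last.equals("DESC")) {
                ascending = last.equals("ASC");
                parts.remove(parts.size() - 1); // the direction token is not a property
            }
            for (String property : parts) {
                if (!property.trim().isEmpty()) {
                    orders.add(new SortOrder(property.trim(), ascending));
                }
            }
        }
        return orders;
    }
}
```

For example, the values `firstname` and `lastname,desc` yield `firstname ASC` followed by `lastname DESC`.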

To customize this behavior, extend either SpringDataWebConfiguration or the HATEOAS-enabled equivalent, override the pageableResolver() or sortResolver() methods, and import your customized configuration file instead of using the @Enable-annotation.

In case you need multiple Pageables or Sorts to be resolved from the request (for multiple tables, for example), you can use Spring's @Qualifier annotation to distinguish one from another. The request parameters then have to be prefixed with ${qualifier}_. So for a method signature like this:

public String showUsers(Model model, @Qualifier("foo") Pageable first, @Qualifier("bar") Pageable second) { … }

you have to populate foo_page and bar_page etc.

The default Pageable handed into the method is equivalent to a new PageRequest(0, 20) but can be customized using the @PageableDefaults annotation on the Pageable parameter.
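The defaulting and `${qualifier}_` prefixing rules above can be sketched with plain JDK code. `PageParameterResolver` and `PageSpec` are hypothetical, and `PageSpec` merely stands in for `PageRequest`. Falling back to the default on a non-numeric value is an assumption of this sketch, not documented resolver behavior.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for PageRequest(page, size).
final class PageSpec {
    final int page;
    final int size;

    PageSpec(int page, int size) {
        this.page = page;
        this.size = size;
    }
}

final class PageParameterResolver {

    static final int DEFAULT_PAGE = 0;  // mirrors new PageRequest(0, 20)
    static final int DEFAULT_SIZE = 20;

    // "page" without a qualifier, "foo_page" with qualifier "foo".
    static String parameterName(String qualifier, String name) {
        return (qualifier == null || qualifier.isEmpty()) ? name : qualifier + "_" + name;
    }

    static PageSpec resolve(Map<String, String> params, String qualifier) {
        int page = parseOrDefault(params.get(parameterName(qualifier, "page")), DEFAULT_PAGE);
        int size = parseOrDefault(params.get(parameterName(qualifier, "size")), DEFAULT_SIZE);
        return new PageSpec(page, size);
    }

    // Missing or non-numeric values fall back to the default (assumption of this sketch).
    private static int parseOrDefault(String raw, int fallback) {
        if (raw == null) {
            return fallback;
        }
        try {
            return Integer.parseInt(raw.trim());
        } catch (NumberFormatException e) {
            return fallback;
        }
    }
}
```

Suppose the request contains `foo_page=2` and `bar_size=50`. The `foo` qualifier then resolves to page 2 with the default size of 20, and `bar` resolves to page 0 with size 50.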

Spring HATEOAS ships with a representation model class PagedResources that allows enriching the content of a Page instance with the necessary Page metadata as well as links to let clients easily navigate the pages. The conversion of a Page to a PagedResources is done by an implementation of the Spring HATEOAS ResourceAssembler interface, the PagedResourcesAssembler.

Example 3.22. Using a PagedResourcesAssembler as controller method argument

@Controller
class PersonController {

    @Autowired PersonRepository repository;

    @RequestMapping(value = "/persons", method = RequestMethod.GET)
    HttpEntity<PagedResources<Person>> persons(Pageable pageable,
        PagedResourcesAssembler assembler) {

        Page<Person> persons = repository.findAll(pageable);
        return new ResponseEntity<>(assembler.toResources(persons), HttpStatus.OK);
    }
}

Enabling the configuration as shown above allows the PagedResourcesAssembler to be used as a controller method argument. Calling toResources(…) on it will cause the following:

- The content of the Page will become the content of the PagedResources instance.
- The PagedResources will get a PageMetadata instance attached, populated with information from the Page and the underlying PageRequest.
- The PagedResources gets prev and next links attached depending on the page's state. The links will point to the URI the invoked method is mapped to. The pagination parameters added to the method will match the setup of the PageableHandlerMethodArgumentResolver to make sure the links can be resolved later on.

Assume we have 30 Person instances in the database. You can now trigger a request GET http://localhost:8080/persons and you'll see something similar to this:

{ "links" : [ { "rel" : "next",
                "href" : "http://localhost:8080/persons?page=1&size=20" }
  ],
  "content" : [
     … // 20 Person instances rendered here
  ],
  "pageMetadata" : {
    "size" : 20,
    "totalElements" : 30,
    "totalPages" : 2,
    "number" : 0
  }
}

You see that the assembler produced the correct URI and also picks up the default configuration present to resolve the parameters into a Pageable for an upcoming request. This means that if you change that configuration, the links will automatically adhere to the change. By default the assembler points to the controller method it was invoked in. You can customize this by handing a custom Link, to be used as the base for building the pagination links, into overloads of the PagedResourcesAssembler.toResource(…) method.
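The metadata in that response follows from simple arithmetic. Thirty elements at a page size of 20 give 2 pages, so page 0 gets a `next` link and no `prev` link. The JDK-only sketch below reproduces that decision. `PageLinks` is a hypothetical helper, not the Spring HATEOAS implementation, and it emits plain strings instead of `Link` objects.

```java
import java.util.ArrayList;
import java.util.List;

final class PageLinks {

    // Number of pages needed for totalElements at the given page size (ceiling division).
    static int totalPages(long totalElements, int size) {
        if (size <= 0) {
            throw new IllegalArgumentException("size must be positive");
        }
        return (int) ((totalElements + size - 1) / size);
    }

    // "prev" only when not on the first page, "next" only when a later page exists.
    static List<String> links(String baseUri, int number, int size, long totalElements) {
        List<String> links = new ArrayList<>();
        int pages = totalPages(totalElements, size);
        if (number > 0) {
            links.add("prev: " + baseUri + "?page=" + (number - 1) + "&size=" + size);
        }
        if (number + 1 < pages) {
            links.add("next: " + baseUri + "?page=" + (number + 1) + "&size=" + size);
        }
        return links;
    }
}
```

Requesting page 1 of the same data would produce only a `prev` link pointing back to `page=0`.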

If you work with the Spring JDBC module, you are probably familiar with the support to populate a DataSource using SQL scripts. A similar abstraction is available on the repositories level, although it does not use SQL as the data definition language because it must be store-independent. Thus the populators support XML (through Spring's OXM abstraction) and JSON (through Jackson) to define data with which to populate the repositories.

Assume you have a file data.json with the following content:

Example 3.23. Data defined in JSON

[ { "_class" : "com.acme.Person",
    "firstname" : "Dave",
    "lastname" : "Matthews" },
  { "_class" : "com.acme.Person",
    "firstname" : "Carter",
    "lastname" : "Beauford" } ]

You can easily populate your repositories by using the populator elements of the repository namespace provided in Spring Data Commons. To populate your PersonRepository with the preceding data, do the following:

Example 3.24. Declaring a Jackson repository populator

<?xml version="1.0" encoding="UTF-8"?>
<beans xmlns="http://www.springframework.org/schema/beans"
  xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xmlns:repository="http://www.springframework.org/schema/data/repository"
  xsi:schemaLocation="http://www.springframework.org/schema/beans
    http://www.springframework.org/schema/beans/spring-beans.xsd
    http://www.springframework.org/schema/data/repository
    http://www.springframework.org/schema/data/repository/spring-repository.xsd">

  <repository:jackson-populator location="classpath:data.json" />

</beans>

This declaration causes the data.json file to be read and deserialized via a Jackson ObjectMapper. The type to which the JSON object will be unmarshalled is determined by inspecting the _class attribute of the JSON document. The infrastructure will eventually select the appropriate repository to handle the object just deserialized.
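The `_class`-based routing described above can be pictured with the following JDK-only sketch. It assumes the JSON has already been parsed into maps, whereas the real populator uses a Jackson `ObjectMapper` and creates instances of the named class. `TypeDispatchingPopulator` and its `Consumer` handlers are hypothetical stand-ins for selecting and calling the matching repository.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

final class TypeDispatchingPopulator {

    // Maps a "_class" value to its handler (stand-in for the matching repository's save).
    private final Map<String, Consumer<Map<String, Object>>> handlers = new HashMap<>();

    void register(String className, Consumer<Map<String, Object>> handler) {
        handlers.put(className, handler);
    }

    // Routes every parsed JSON object to the handler selected by its "_class" attribute.
    void populate(List<Map<String, Object>> documents) {
        for (Map<String, Object> document : documents) {
            Object type = document.get("_class");
            Consumer<Map<String, Object>> handler = handlers.get(type);
            if (handler == null) {
                throw new IllegalArgumentException("No repository registered for _class " + type);
            }
            handler.accept(document);
        }
    }
}
```

With a handler registered for `com.acme.Person`, both objects from Example 3.23 end up with that one handler.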

To use XML instead to define the data the repositories shall be populated with, you can use the unmarshaller-populator element. You configure it to use one of the XML marshaller options Spring OXM provides. See the Spring reference documentation for details.

Example 3.25. Declaring an unmarshalling repository populator (using JAXB)

<?xml version="1.0" encoding="UTF-8"?>
<beans xmlns="http://www.springframework.org/schema/beans"
  xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xmlns:repository="http://www.springframework.org/schema/data/repository"
  xmlns:oxm="http://www.springframework.org/schema/oxm"
  xsi:schemaLocation="http://www.springframework.org/schema/beans
    http://www.springframework.org/schema/beans/spring-beans.xsd
    http://www.springframework.org/schema/data/repository
    http://www.springframework.org/schema/data/repository/spring-repository.xsd
    http://www.springframework.org/schema/oxm
    http://www.springframework.org/schema/oxm/spring-oxm.xsd">

  <repository:unmarshaller-populator location="classpath:data.xml"
    unmarshaller-ref="unmarshaller" />

  <oxm:jaxb2-marshaller id="unmarshaller" contextPath="com.acme" />

</beans>

Given you are developing a Spring MVC web application, you typically have to resolve domain class ids from URLs. By default, your task is to transform that request parameter or URL part into the domain class instance and then either hand it to the layers below or execute business logic on the entity directly. This would look something like this:

@Controller
@RequestMapping("/users")
public class UserController {

    private final UserRepository userRepository;

    @Autowired
    public UserController(UserRepository userRepository) {
        Assert.notNull(userRepository, "Repository must not be null!");
        this.userRepository = userRepository;
    }

    @RequestMapping("/{id}")
    public String showUserForm(@PathVariable("id") Long id, Model model) {

        // Do null check for id
        User user = userRepository.findOne(id);
        // Do null check for user

        model.addAttribute("user", user);
        return "user";
    }
}

First you declare a repository dependency for each controller to look up the entity managed by the controller or repository, respectively. Looking up the entity is boilerplate as well, as it's always a findOne(…) call. Fortunately, Spring provides means to register custom components that allow conversion from a String value to an arbitrary type.

For Spring versions before 3.0, simple Java PropertyEditors had to be used. To integrate with that, Spring Data offers a DomainClassPropertyEditorRegistrar, which looks up all Spring Data repositories registered in the ApplicationContext and registers a custom PropertyEditor for the managed domain class.
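Conceptually, each editor registered this way turns the textual id into the managed domain instance by performing a repository lookup in `setAsText`. The JDK-only sketch below shows that pattern with `java.beans.PropertyEditorSupport`. `Customer`, `CustomerEditor`, and the `Map` standing in for the repository are hypothetical, and this is not the code of `DomainClassPropertyEditorRegistrar`.

```java
import java.beans.PropertyEditorSupport;
import java.util.HashMap;
import java.util.Map;

// Hypothetical domain type for the sketch.
final class Customer {
    final Long id;

    Customer(Long id) {
        this.id = id;
    }
}

// Hypothetical editor: converts the textual id into the managed domain instance.
final class CustomerEditor extends PropertyEditorSupport {

    private final Map<Long, Customer> store; // stand-in for the repository

    CustomerEditor(Map<Long, Customer> store) {
        this.store = store;
    }

    @Override
    public void setAsText(String text) {
        if (text == null || text.trim().isEmpty()) {
            setValue(null); // no id, no entity
            return;
        }
        setValue(store.get(Long.valueOf(text.trim()))); // equivalent of findOne(id)
    }
}
```

Because a `PropertyEditor` holds the converted value as state, a separate instance is needed per binding. This is one of the drawbacks the stateless conversion infrastructure described next avoids.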

<bean class="….web.servlet.mvc.annotation.AnnotationMethodHandlerAdapter">
  <property name="webBindingInitializer">
    <bean class="….web.bind.support.ConfigurableWebBindingInitializer">
      <property name="propertyEditorRegistrars">
        <bean class="org.springframework.data.repository.support.DomainClassPropertyEditorRegistrar" />
      </property>
    </bean>
  </property>
</bean>

If you have configured Spring MVC as in the preceding example, you can configure your controller as follows, which reduces a lot of the clutter and boilerplate.

@Controller
@RequestMapping("/users")
public class UserController {

    @RequestMapping("/{id}")
    public String showUserForm(@PathVariable("id") User user, Model model) {

        model.addAttribute("user", user);
        return "userForm";
    }
}

In Spring 3.0 and later, the PropertyEditor support is superseded by a new conversion infrastructure that eliminates the drawbacks of PropertyEditors and uses a stateless X to Y conversion approach. Spring Data now ships with a DomainClassConverter that mimics the behavior of DomainClassPropertyEditorRegistrar. To configure it, simply declare a bean instance and pipe the ConversionService being used into its constructor:

<mvc:annotation-driven conversion-service="conversionService" />

<bean class="org.springframework.data.repository.support.DomainClassConverter">
  <constructor-arg ref="conversionService" />
</bean>

If you are using JavaConfig, you can simply extend Spring MVC's WebMvcConfigurationSupport and hand the FormattingConversionService that the configuration superclass provides into the DomainClassConverter instance you create.

class WebConfiguration extends WebMvcConfigurationSupport {

    // Other configuration omitted

    @Bean
    public DomainClassConverter<?> domainClassConverter() {
        return new DomainClassConverter<FormattingConversionService>(mvcConversionService());
    }
}

When working with pagination in the web layer, you usually have to write a lot of boilerplate code yourself to extract the necessary metadata from the request. The less desirable approach shown in the example below requires the method to contain an HttpServletRequest parameter that has to be parsed manually. This example also omits appropriate failure handling, which would make the code even more verbose.

@Controller
@RequestMapping("/users")
public class UserController {

    // DI code omitted

    @RequestMapping
    public String showUsers(Model model, HttpServletRequest request) {

        int page = Integer.parseInt(request.getParameter("page"));
        int pageSize = Integer.parseInt(request.getParameter("pageSize"));

        Pageable pageable = new PageRequest(page, pageSize);

        model.addAttribute("users", userService.getUsers(pageable));
        return "users";
    }
}

The bottom line is that the controller should not have to handle the functionality of extracting pagination information from the request. So Spring Data ships with a PageableHandlerArgumentResolver that will do the work for you. The Spring MVC JavaConfig support exposes a WebMvcConfigurationSupport helper class to customize the configuration as follows:

@Configuration
public class WebConfig extends WebMvcConfigurationSupport {

    @Override
    protected void addArgumentResolvers(List<HandlerMethodArgumentResolver> argumentResolvers) {
        argumentResolvers.add(new PageableHandlerArgumentResolver());
    }
}

If you're stuck with XML configuration, you can register the resolver as follows:

<bean class="….web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter">
  <property name="customArgumentResolvers">
    <list>
      <bean class="org.springframework.data.web.PageableHandlerArgumentResolver" />
    </list>
  </property>
</bean>

When using Spring 3.0.x versions, use the PageableArgumentResolver instead. Once you've configured the resolver with Spring MVC, it allows you to simplify controllers down to something like this:

@Controller
@RequestMapping("/users")
public class UserController {

    @RequestMapping
    public String showUsers(Model model, Pageable pageable) {

        model.addAttribute("users", userRepository.findAll(pageable));
        return "users";
    }
}

The PageableArgumentResolver automatically resolves request parameters to build a PageRequest instance. By default it expects the following structure for the request parameters.

Table 3.2. Request parameters evaluated by PageableArgumentResolver

| Parameter | Description |
|---|---|
| page | Page you want to retrieve. |
| page.size | Size of the page you want to retrieve. |
| page.sort | Property that should be sorted by. |
| page.sort.dir | Direction that should be used for sorting. |

In case you need multiple Pageables to be resolved from the request (for multiple tables, for example), you can use Spring's @Qualifier annotation to distinguish one from another. The request parameters then have to be prefixed with ${qualifier}_. So for a method signature like this:

public String showUsers(Model model, @Qualifier("foo") Pageable first, @Qualifier("bar") Pageable second) { … }

you have to populate foo_page and bar_page and the related subproperties.

The PageableArgumentResolver will use a PageRequest with the first page and a page size of 10 by default. It will use that value if it cannot resolve a PageRequest from the request (because of missing parameters, for example). You can configure a global default on the bean declaration directly. If you need controller method specific defaults for the Pageable, annotate the method parameter with @PageableDefaults and specify page (through pageNumber), page size (through value), sort (list of properties to sort by), and sortDir (the direction to sort by) as annotation attributes:

public String showUsers(Model model, @PageableDefaults(pageNumber = 0, value = 30) Pageable pageable) { … }

[1] JavaConfig in the Spring reference documentation - http://static.springsource.org/spring/docs/3.1.x/spring-framework-reference/html/beans.html#beans-java

[2] Spring HATEOAS - https://github.com/SpringSource/spring-hateoas

This part of the reference documentation explains the core functionality offered by Spring Data Document.

Chapter 4, MongoDB support introduces the MongoDB module feature set.

Chapter 5, MongoDB repositories introduces the repository support for MongoDB.

The MongoDB support contains a wide range of features, which are summarized below.

- Spring configuration support using Java based @Configuration classes or an XML namespace for a Mongo driver instance and replica sets
- MongoTemplate helper class that increases productivity performing common Mongo operations. Includes integrated object mapping between documents and POJOs.
- Exception translation into Spring's portable Data Access Exception hierarchy
- Feature Rich Object Mapping integrated with Spring's Conversion Service
- Annotation based mapping metadata but extensible to support other metadata formats
- Persistence and mapping lifecycle events
- Java based Query, Criteria, and Update DSLs
- Automatic implementation of Repository interfaces including support for custom finder methods.
- QueryDSL integration to support type-safe queries.
- Cross-store persistence - support for JPA Entities with fields transparently persisted/retrieved using MongoDB
- Log4j log appender
- GeoSpatial integration

For most tasks you will find yourself using MongoTemplate or the Repository support, both of which leverage the rich mapping functionality. MongoTemplate is the place to look for accessing functionality such as incrementing counters or ad-hoc CRUD operations. MongoTemplate also provides callback methods so that it is easy for you to get hold of the low-level API artifacts such as com.mongodb.DB to communicate directly with MongoDB. The goal with naming conventions on various API artifacts is to copy those in the base MongoDB Java driver so you can easily map your existing knowledge onto the Spring APIs.

Spring MongoDB support requires MongoDB 1.4 or higher and Java SE 5 or higher. The latest production release (2.4.9 as of this writing) is recommended. An easy way to bootstrap setting up a working environment is to create a Spring based project in STS.

First you need to set up a running MongoDB server. Refer to the MongoDB Quick Start guide for an explanation on how to start up a MongoDB instance. Once installed, starting MongoDB is typically a matter of executing the following command:

MONGO_HOME/bin/mongod

To create a Spring project in STS go to File -> New -> Spring Template Project -> Simple Spring Utility Project -> press Yes when prompted. Then enter a project and a package name such as org.spring.mongodb.example.

Then add the following to pom.xml dependencies section.

<dependencies>

  <!-- other dependency elements omitted -->

  <dependency>
    <groupId>org.springframework.data</groupId>
    <artifactId>spring-data-mongodb</artifactId>
    <version>1.4.1.RELEASE</version>
  </dependency>

</dependencies>

Also change the version of Spring in the pom.xml to be

<spring.framework.version>3.2.8.RELEASE</spring.framework.version>

You will also need to add the location of the Spring Milestone repository for Maven to your pom.xml, at the same level as your <dependencies/> element:

<repositories>
  <repository>
    <id>spring-milestone</id>
    <name>Spring Maven MILESTONE Repository</name>
    <url>http://repo.spring.io/libs-milestone</url>
  </repository>
</repositories>

The repository is also browseable here.

You may also want to set the logging level to DEBUG to see some additional information. Edit the log4j.properties file to have:

log4j.category.org.springframework.data.mongodb=DEBUG
log4j.appender.stdout.layout.ConversionPattern=%d{ABSOLUTE} %5p %40.40c:%4L - %m%n

Create a simple Person class to persist:

package org.spring.mongodb.example;

public class Person {

    private String id;
    private String name;
    private int age;

    public Person(String name, int age) {
        this.name = name;
        this.age = age;
    }

    public String getId() {
        return id;
    }

    public String getName() {
        return name;
    }

    public int getAge() {
        return age;
    }

    @Override
    public String toString() {
        return "Person [id=" + id + ", name=" + name + ", age=" + age + "]";
    }
}

And a main application to run

package org.spring.mongodb.example;

import static org.springframework.data.mongodb.core.query.Criteria.where;

import org.apache.commons.logging.Log;
import org.apache.commons.logging.LogFactory;
import org.springframework.data.mongodb.core.MongoOperations;
import org.springframework.data.mongodb.core.MongoTemplate;
import org.springframework.data.mongodb.core.query.Query;

import com.mongodb.Mongo;

public class MongoApp {

    private static final Log log = LogFactory.getLog(MongoApp.class);

    public static void main(String[] args) throws Exception {

        MongoOperations mongoOps = new MongoTemplate(new Mongo(), "database");

        mongoOps.insert(new Person("Joe", 34));

        log.info(mongoOps.findOne(new Query(where("name").is("Joe")), Person.class));

        mongoOps.dropCollection("person");
    }
}

This will produce the following output

10:01:32,062 DEBUG apping.MongoPersistentEntityIndexCreator: 80 - Analyzing class class org.spring.example.Person for index information.
10:01:32,265 DEBUG ramework.data.mongodb.core.MongoTemplate: 631 - insert DBObject containing fields: [_class, age, name] in collection: Person
10:01:32,765 DEBUG ramework.data.mongodb.core.MongoTemplate:1243 - findOne using query: { "name" : "Joe"} in db.collection: database.Person
10:01:32,953 INFO org.spring.mongodb.example.MongoApp: 25 - Person [id=4ddbba3c0be56b7e1b210166, name=Joe, age=34]
10:01:32,984 DEBUG ramework.data.mongodb.core.MongoTemplate: 375 - Dropped collection [database.person]

Even in this simple example, there are a few things to take note of:

- You can instantiate the central helper class of Spring Mongo, MongoTemplate, using the standard com.mongodb.Mongo object and the name of the database to use.
- The mapper works against standard POJO objects without the need for any additional metadata (though you can optionally provide that information; see Chapter 6, Mapping).
- Conventions are used for handling the id field, converting it to an ObjectId when stored in the database.
- Mapping conventions can use field access. Notice the Person class has only getters.
- If the constructor argument names match the field names of the stored document, they will be used to instantiate the object.
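The string form of an ObjectId is 24 hexadecimal characters, like the id printed in the output above. The actual conversion rules belong to the mapping layer and the MongoDB Java driver. The JDK-only sketch below only mirrors that shape check so the convention is easy to see. `ObjectIdConvention` is a hypothetical helper, not part of Spring Data or the driver.

```java
final class ObjectIdConvention {

    // True if the string has the shape of an ObjectId: exactly 24 hexadecimal characters.
    static boolean looksLikeObjectId(String id) {
        if (id == null || id.length() != 24) {
            return false;
        }
        for (int i = 0; i < id.length(); i++) {
            char c = id.charAt(i);
            boolean hex = (c >= '0' && c <= '9')
                || (c >= 'a' && c <= 'f')
                || (c >= 'A' && c <= 'F');
            if (!hex) {
                return false;
            }
        }
        return true;
    }
}
```

The id from the log output, `4ddbba3c0be56b7e1b210166`, passes this check, while an arbitrary string such as `joe` does not.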

There is a GitHub repository with several examples that you can download and play around with to get a feel for how the library works.

One of the first tasks when using MongoDB and Spring is to create a com.mongodb.Mongo object using the IoC container. There are two main ways to do this: using either Java based bean metadata or XML based bean metadata. These are discussed in the following sections.

Note: For those not familiar with how to configure the Spring container using Java based bean metadata instead of XML based metadata, see the high level introduction in the reference docs here as well as the detailed documentation here.

An example of using Java based bean metadata to register an instance of a com.mongodb.Mongo is shown below:

Example 4.1. Registering a com.mongodb.Mongo object using Java based bean metadata

@Configuration
public class AppConfig {

    /*
     * Use the standard Mongo driver API to create a com.mongodb.Mongo instance.
     */
    public @Bean Mongo mongo() throws UnknownHostException {
        return new Mongo("localhost");
    }
}

This approach allows you to use the standard com.mongodb.Mongo API that you may already be familiar with, but it also pollutes the code with the UnknownHostException checked exception. The use of the checked exception is not desirable, as Java based bean metadata uses methods as a means to set object dependencies, making the calling code cluttered.

An alternative is to register an instance of com.mongodb.Mongo with the container using Spring's MongoFactoryBean. Compared to instantiating a com.mongodb.Mongo instance directly, the FactoryBean approach does not throw a checked exception. It also has the added advantage of providing the container with an ExceptionTranslator implementation that translates MongoDB exceptions to exceptions in Spring's portable DataAccessException hierarchy for data access classes annotated with the @Repository annotation. This hierarchy and use of @Repository is described in Spring's DAO support features.

An example of a Java based bean metadata that supports exception translation on @Repository annotated classes is shown below:

Example 4.2. Registering a com.mongodb.Mongo object using Spring's MongoFactoryBean and enabling Spring's exception translation support

@Configuration
public class AppConfig {

    /*
     * Factory bean that creates the com.mongodb.Mongo instance
     */
    public @Bean MongoFactoryBean mongo() {
        MongoFactoryBean mongo = new MongoFactoryBean();
        mongo.setHost("localhost");
        return mongo;
    }
}

To access the com.mongodb.Mongo object created by the MongoFactoryBean in other @Configuration or your own classes, use a "private @Autowired Mongo mongo;" field.

While you can use Spring's traditional <beans/> XML namespace to register an instance of com.mongodb.Mongo with the container, the XML can be quite verbose, as it is general purpose. XML namespaces are a better alternative for configuring commonly used objects such as the Mongo instance. The mongo namespace allows you to create a Mongo instance and configure its server location, replica sets, and options.

To use the Mongo namespace elements you will need to reference the Mongo schema:

Example 4.3. XML schema to configure MongoDB

<?xml version="1.0" encoding="UTF-8"?>
<beans xmlns="http://www.springframework.org/schema/beans"
    xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
    xmlns:context="http://www.springframework.org/schema/context"
    xmlns:mongo="http://www.springframework.org/schema/data/mongo"
    xsi:schemaLocation=
      "http://www.springframework.org/schema/context
       http://www.springframework.org/schema/context/spring-context-3.0.xsd
       http://www.springframework.org/schema/data/mongo
       http://www.springframework.org/schema/data/mongo/spring-mongo-1.0.xsd
       http://www.springframework.org/schema/beans
       http://www.springframework.org/schema/beans/spring-beans-3.0.xsd">

    <!-- Default bean name is 'mongo' -->
    <mongo:mongo host="localhost" port="27017"/>

</beans>

A more advanced configuration with MongoOptions is shown below (note these are not recommended values)

Example 4.4. XML schema to configure a com.mongodb.Mongo object with MongoOptions

<beans>

  <mongo:mongo host="localhost" port="27017">
    <mongo:options connections-per-host="8"
                   threads-allowed-to-block-for-connection-multiplier="4"
                   connect-timeout="1000"
                   max-wait-time="1500"
                   auto-connect-retry="true"
                   socket-keep-alive="true"
                   socket-timeout="1500"
                   slave-ok="true"
                   write-number="1"
                   write-timeout="0"
                   write-fsync="true"/>
  </mongo:mongo>

</beans>

A configuration using replica sets is shown below.

Example 4.5. XML schema to configure com.mongodb.Mongo object with Replica Sets

<mongo:mongo id="replicaSetMongo" replica-set="127.0.0.1:27017,localhost:27018"/>
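The `replica-set` attribute takes a comma-separated list of `host:port` seed addresses. The JDK-only sketch below splits such a value into unresolved socket addresses. `ReplicaSetSeeds` is hypothetical, and the namespace support performs its own conversion. Falling back to MongoDB's default port 27017 when a port is missing is an assumption of this sketch, and IPv6 literals are not handled.

```java
import java.net.InetSocketAddress;
import java.util.ArrayList;
import java.util.List;

final class ReplicaSetSeeds {

    // Splits "host:port,host:port" into unresolved socket addresses (no DNS lookup).
    static List<InetSocketAddress> parse(String replicaSet) {
        List<InetSocketAddress> seeds = new ArrayList<>();
        for (String entry : replicaSet.split(",")) {
            String trimmed = entry.trim();
            if (trimmed.isEmpty()) {
                continue;
            }
            int colon = trimmed.lastIndexOf(':');
            if (colon < 0) {
                // Assumed fallback: MongoDB's default port.
                seeds.add(InetSocketAddress.createUnresolved(trimmed, 27017));
            } else {
                String host = trimmed.substring(0, colon);
                int port = Integer.parseInt(trimmed.substring(colon + 1));
                seeds.add(InetSocketAddress.createUnresolved(host, port));
            }
        }
        return seeds;
    }
}
```

For the value used in Example 4.5, the result is two seeds: `127.0.0.1` on port 27017 and `localhost` on port 27018.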

While com.mongodb.Mongo is the entry point to the MongoDB driver API, connecting to a specific MongoDB database instance requires additional information such as the database name and an optional username and password. With that information you can obtain a com.mongodb.DB object and access all the functionality of a specific MongoDB database instance. Spring provides the org.springframework.data.mongodb.core.MongoDbFactory interface shown below to bootstrap connectivity to the database.

public interface MongoDbFactory {

    DB getDb() throws DataAccessException;

    DB getDb(String dbName) throws DataAccessException;
}

The following sections show how you can use the container with either Java or the XML based metadata to configure an instance of the MongoDbFactory interface. In turn, you can use the MongoDbFactory instance to configure MongoTemplate.

The class org.springframework.data.mongodb.core.SimpleMongoDbFactory implements the MongoDbFactory interface and is created with a standard com.mongodb.Mongo instance, the database name, and an optional org.springframework.data.authentication.UserCredentials constructor argument.

Instead of using the IoC container to create an instance of MongoTemplate, you can just use these classes in standard Java code as shown below.

public class MongoApp {

    private static final Log log = LogFactory.getLog(MongoApp.class);

    public static void main(String[] args) throws Exception {

        MongoOperations mongoOps = new MongoTemplate(new SimpleMongoDbFactory(new Mongo(), "database"));

        mongoOps.insert(new Person("Joe", 34));

        log.info(mongoOps.findOne(new Query(where("name").is("Joe")), Person.class));

        mongoOps.dropCollection("person");
    }
}

The use of SimpleMongoDbFactory is the only difference from the listing shown in the getting started section.

To register a MongoDbFactory instance with the container, you write code much like that in the previous code listing. A simple example is shown below:

@Configuration
public class MongoConfiguration {

    public @Bean MongoDbFactory mongoDbFactory() throws Exception {
        return new SimpleMongoDbFactory(new Mongo(), "database");
    }
}

To define the username and password, create an instance of org.springframework.data.authentication.UserCredentials and pass it into the constructor as shown below. This listing also shows how to use MongoDbFactory to register an instance of MongoTemplate with the container.

@Configuration
public class MongoConfiguration {

    public @Bean MongoDbFactory mongoDbFactory() throws Exception {
        UserCredentials userCredentials = new UserCredentials("joe", "secret");
        return new SimpleMongoDbFactory(new Mongo(), "database", userCredentials);
    }

    public @Bean MongoTemplate mongoTemplate() throws Exception {
        return new MongoTemplate(mongoDbFactory());
    }
}

The mongo namespace provides a convenient way to create a SimpleMongoDbFactory, compared to using the <beans/> namespace. Simple usage is shown below:

<mongo:db-factory dbname="database"/>

In the above example, a com.mongodb.Mongo instance is created using the default host and port number. The SimpleMongoDbFactory registered with the container is identified by the id 'mongoDbFactory' unless a value for the id attribute is specified.

You can also provide the host and port for the underlying com.mongodb.Mongo instance as shown below, in addition to the username and password for the database.

<mongo:db-factory id="anotherMongoDbFactory" host="localhost" port="27017" dbname="database" username="joe" password="secret"/>

If you need to configure additional options on the com.mongodb.Mongo instance that is used to create a SimpleMongoDbFactory, you can refer to an existing bean using the mongo-ref attribute as shown below. To show another common usage pattern, this listing shows the use of a property placeholder to parameterize the configuration and the creation of a MongoTemplate.

<context:property-placeholder location="classpath:/com/myapp/mongodb/config/mongo.properties"/>

<mongo:mongo host="${mongo.host}" port="${mongo.port}">
  <mongo:options
     connections-per-host="${mongo.connectionsPerHost}"
     threads-allowed-to-block-for-connection-multiplier="${mongo.threadsAllowedToBlockForConnectionMultiplier}"
     connect-timeout="${mongo.connectTimeout}"
     max-wait-time="${mongo.maxWaitTime}"
     auto-connect-retry="${mongo.autoConnectRetry}"
     socket-keep-alive="${mongo.socketKeepAlive}"
     socket-timeout="${mongo.socketTimeout}"
     slave-ok="${mongo.slaveOk}"
     write-number="1"
     write-timeout="0"
     write-fsync="true"/>
</mongo:mongo>

<mongo:db-factory dbname="database" mongo-ref="mongo"/>

<bean id="anotherMongoTemplate" class="org.springframework.data.mongodb.core.MongoTemplate">
  <constructor-arg name="mongoDbFactory" ref="mongoDbFactory"/>
</bean>
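The `<context:property-placeholder>` element replaces each `${…}` token with the matching entry from the referenced mongo.properties file before the beans are created. A minimal JDK-only sketch of that substitution follows. `PlaceholderResolver` is hypothetical, and Spring's own placeholder support offers more than this sketch does, such as default values.

```java
import java.util.Properties;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class PlaceholderResolver {

    // Matches ${key} and captures "key".
    private static final Pattern PLACEHOLDER = Pattern.compile("\\$\\{([^}]+)\\}");

    // Replaces each ${key} with its value from props; an unknown key fails fast.
    static String resolve(String text, Properties props) {
        Matcher matcher = PLACEHOLDER.matcher(text);
        StringBuffer out = new StringBuffer();
        while (matcher.find()) {
            String key = matcher.group(1);
            String value = props.getProperty(key);
            if (value == null) {
                throw new IllegalArgumentException("Could not resolve placeholder '" + key + "'");
            }
            matcher.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        matcher.appendTail(out);
        return out.toString();
    }
}
```

With `mongo.host=localhost` and `mongo.port=27017` defined, the string `${mongo.host}:${mongo.port}` resolves to `localhost:27017`.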

Activating auditing functionality is just a matter of adding the Spring Data Mongo auditing namespace element to your configuration:

Example 4.6. Activating auditing using XML configuration

<mongo:auditing mapping-context-ref="customMappingContext" auditor-aware-ref="yourAuditorAwareImpl"/>

Since Spring Data MongoDB 1.4, auditing can be enabled by annotating a configuration class with the @EnableMongoAuditing annotation.

Example 4.7. Activating auditing using JavaConfig

@Configuration
@EnableMongoAuditing
class Config {
  @Bean
  public AuditorAware<AuditableUser> myAuditorProvider() {
    return new AuditorAwareImpl();
  }
}

If you expose a bean of type AuditorAware to the ApplicationContext, the auditing infrastructure will pick it up automatically and use it to determine the current user to be set on domain types. If you have multiple implementations registered in the ApplicationContext, you can select the one to be used by explicitly setting the auditorAwareRef attribute of @EnableMongoAuditing.
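As a minimal sketch, an AuditorAware implementation such as the AuditorAwareImpl referenced in Example 4.7 can simply read the current user from wherever the application keeps it. The example below assumes a hypothetical ThreadLocal-based CurrentUserHolder and a hypothetical AuditableUser class standing in for your own security context and user type. So that it compiles without Spring on the classpath, it declares a stand-in AuditorAware interface with the single T getCurrentAuditor() method found in Spring Data Commons 1.x; in a real project, delete that interface and implement org.springframework.data.domain.AuditorAware instead.

```java
// Stand-in for org.springframework.data.domain.AuditorAware (Spring Data Commons 1.x),
// declared here only so this sketch compiles on its own.
interface AuditorAware<T> {
    T getCurrentAuditor();
}

// Hypothetical user type matching the AuditableUser placeholder in Example 4.7.
class AuditableUser {
    private final String username;

    AuditableUser(String username) {
        this.username = username;
    }

    String getUsername() {
        return username;
    }
}

// Hypothetical holder: the application binds the logged-in user to the current thread.
final class CurrentUserHolder {
    private static final ThreadLocal<AuditableUser> CURRENT = new ThreadLocal<AuditableUser>();

    private CurrentUserHolder() {
    }

    static void set(AuditableUser user) {
        CURRENT.set(user);
    }

    static AuditableUser get() {
        return CURRENT.get();
    }

    static void clear() {
        CURRENT.remove();
    }
}

// Returns the user bound to the calling thread, or null when nobody is logged in.
class AuditorAwareImpl implements AuditorAware<AuditableUser> {
    @Override
    public AuditableUser getCurrentAuditor() {
        return CurrentUserHolder.get();
    }
}
```

Keeping the lookup behind AuditorAware means the auditing infrastructure never needs to know where the user comes from. If you use a ThreadLocal like this, clear it at the end of each request so that pooled threads do not carry a user over to the next request.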

The class MongoTemplate, located in the package org.springframework.data.mongodb.core, is the central class of Spring's MongoDB support, providing a rich feature set to interact with the database. The template offers convenience operations to create, update, delete and query for MongoDB documents and provides a mapping between your domain objects and MongoDB documents.

Note: Once configured,

The mapping between MongoDB documents and domain classes is done by delegating to an implementation of the interface MongoConverter. Spring provides two implementations, SimpleMappingConverter and MappingMongoConverter, but you can also write your own converter. Please refer to the section on MongoConverters for more detailed information.

The MongoTemplate class implements the interface MongoOperations. As much as possible, the methods on MongoOperations are named after methods available on the MongoDB driver DBCollection object, so as to make the API familiar to existing MongoDB developers who are used to the driver API. For example, you will find methods such as "find", "findAndModify", "findOne", "insert", "remove", "save", "update" and "updateMulti". The design goal was to make it as easy as possible to transition between the use of the base MongoDB driver and MongoOperations. A major difference between the two APIs is that MongoOperations can be passed domain objects instead of DBObject, and there are fluent APIs for Query, Criteria, and Update operations instead of populating a DBObject to specify the parameters for those operations.

Note: The preferred way to reference the operations on

The default converter implementation used by MongoTemplate is MappingMongoConverter. While MappingMongoConverter can make use of additional metadata to specify the mapping of objects to documents, it is also capable of converting objects that contain no additional metadata by using some conventions for the mapping of IDs and collection names. These conventions, as well as the use of mapping annotations, are explained in the Mapping chapter.
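As a rough illustration of the collection-name convention, the sketch below derives a collection name by lower-casing the first character of the class's simple name. This matches the person collection that appears in the log output for the Person class later in this chapter, but it is an approximation written for this guide, not Spring's own code: the CollectionNaming class and its defaultCollectionName method are hypothetical, and the mapping annotations described in the Mapping chapter can override the result.

```java
// Approximates the default convention: the collection name is the entity's
// simple class name with its first character lower-cased.
final class CollectionNaming {

    private CollectionNaming() {
    }

    static String defaultCollectionName(Class<?> entityType) {
        String simpleName = entityType.getSimpleName();
        if (simpleName.isEmpty()) {
            // Anonymous classes have no simple name, so no name can be derived.
            throw new IllegalArgumentException("Cannot derive a collection name for " + entityType);
        }
        return Character.toLowerCase(simpleName.charAt(0)) + simpleName.substring(1);
    }

    // Minimal domain classes used only to demonstrate the convention.
    static class Person {
    }

    static class AuditLogEntry {
    }
}
```

Under this convention, Person is stored in person and AuditLogEntry in auditLogEntry; only the first letter changes, so the rest of the camel-cased name is preserved.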

Note: In the M2 release

Another central feature of MongoTemplate is exception translation of exceptions thrown in the MongoDB Java driver into Spring's portable Data Access Exception hierarchy. Refer to the section on exception translation for more information.

While there are many convenience methods on MongoTemplate to help you easily perform common tasks, you may need to access the MongoDB driver API directly to use functionality not explicitly exposed by MongoTemplate. In that case, you can use one of several execute callback methods to access the underlying driver APIs. The execute callbacks give you a reference to either a com.mongodb.DBCollection or a com.mongodb.DB object. Please see the section Execution Callbacks for more information.

Now let's look at some examples of how to work with the MongoTemplate in the context of the Spring container.

You can use Java to create and register an instance of MongoTemplate as shown below.

Example 4.8. Registering a com.mongodb.Mongo object and enabling Spring's exception translation support

@Configuration
public class AppConfig {
  public @Bean Mongo mongo() throws Exception {
    return new Mongo("localhost");
  }
  public @Bean MongoTemplate mongoTemplate() throws Exception {
    return new MongoTemplate(mongo(), "mydatabase");
  }
}

MongoTemplate has several overloaded constructors:

- MongoTemplate(Mongo mongo, String databaseName): takes the com.mongodb.Mongo object and the default database name to operate against.

- MongoTemplate(Mongo mongo, String databaseName, UserCredentials userCredentials): adds the username and password for authenticating with the database.

- MongoTemplate(MongoDbFactory mongoDbFactory): takes a MongoDbFactory object that encapsulates the com.mongodb.Mongo object, database name, and username and password.

- MongoTemplate(MongoDbFactory mongoDbFactory, MongoConverter mongoConverter): adds a MongoConverter to use for mapping.

You can also configure a MongoTemplate using Spring's XML <beans/> schema.

<mongo:mongo host="localhost" port="27017"/>
<bean id="mongoTemplate" class="org.springframework.data.mongodb.core.MongoTemplate">
  <constructor-arg ref="mongo"/>
  <constructor-arg name="databaseName" value="geospatial"/>
</bean>

Other optional properties that you might like to set when creating a MongoTemplate are the default WriteResultChecking policy, WriteConcern, and ReadPreference.


During development it is very handy to either log or throw an exception if the com.mongodb.WriteResult returned from any MongoDB operation contains an error. It is quite common to forget to do this during development and then end up with an application that looks like it runs successfully when in fact the database was not modified according to your expectations. Set MongoTemplate's WriteResultChecking property to one of the enum values LOG, EXCEPTION, or NONE to log the error, throw an exception, or do nothing, respectively. The default is to use a WriteResultChecking value of NONE.
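The three settings can be pictured with the simplified model below. It is a sketch written for this guide rather than MongoTemplate's actual code: the error is passed in as a plain String (standing in for the error carried by com.mongodb.WriteResult), the enum mirrors the LOG, EXCEPTION and NONE values described above, and the WriteResultCheckingModel class with its handleWriteError method is hypothetical. In a real application you only set MongoTemplate's WriteResultChecking property and the template applies the policy for you.

```java
import java.util.logging.Logger;

// Mirrors the three values of the WriteResultChecking policy.
enum WriteResultChecking {
    NONE, LOG, EXCEPTION
}

final class WriteResultCheckingModel {

    private static final Logger LOG = Logger.getLogger(WriteResultCheckingModel.class.getName());

    private WriteResultCheckingModel() {
    }

    /**
     * Applies the policy to the error reported for a write operation.
     * A null error means the write succeeded, so nothing happens.
     * Returns true if the error was logged.
     */
    static boolean handleWriteError(WriteResultChecking policy, String error) {
        if (error == null) {
            return false;
        }
        switch (policy) {
            case LOG:
                LOG.severe("Write operation failed: " + error);
                return true;
            case EXCEPTION:
                throw new IllegalStateException("Write operation failed: " + error);
            default:
                // NONE (the default): the error is silently ignored.
                return false;
        }
    }
}
```

The model makes the risk of the default visible: with NONE, a failed write returns exactly like a successful one, which is why LOG or EXCEPTION is worth enabling during development.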

You can set the com.mongodb.WriteConcern property that MongoTemplate will use for write operations if it has not yet been specified via the driver at a higher level, such as com.mongodb.Mongo. If MongoTemplate's WriteConcern property is not set, it will default to the one set in the MongoDB driver's DB or Collection setting.

For more advanced cases where you want to set different WriteConcern values on a per-operation basis (for remove, update, insert and save operations), a strategy interface called WriteConcernResolver can be configured on MongoTemplate. Since MongoTemplate is used to persist POJOs, the WriteConcernResolver lets you create a policy that can map a specific POJO class to a WriteConcern value. The WriteConcernResolver interface is shown below:

public interface WriteConcernResolver { WriteConcern resolve(MongoAction action); }

The passed-in argument, MongoAction, is what you use to determine the WriteConcern value to be used, or to fall back to the value of the template itself as a default. MongoAction contains the collection name being written to, the java.lang.Class of the POJO, the converted DBObject, the operation as an enumeration (MongoActionOperation: REMOVE, UPDATE, INSERT, INSERT_LIST, SAVE), and a few other pieces of contextual information.

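For example:

class MyAppWriteConcernResolver implements WriteConcernResolver {
  @Override
  public WriteConcern resolve(MongoAction action) {
    Class<?> entityClass = action.getEntityClass();
    // The entity class may be null, e.g. when an operation only specifies a collection name.
    if (entityClass != null) {
      String name = entityClass.getSimpleName();
      if (name.contains("Audit")) {
        // Audit entries: fire-and-forget, no acknowledgement from the server.
        return WriteConcern.NONE;
      } else if (name.contains("Metadata")) {
        // Metadata: wait until the write has been committed to the journal.
        return WriteConcern.JOURNAL_SAFE;
      }
    }
    // Everything else: fall back to the template's default WriteConcern.
    return action.getDefaultWriteConcern();
  }
}

MongoTemplate provides a simple way for you to save, update, and delete your domain objects and map those objects to documents stored in MongoDB.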

Given a simple class such as Person:

public class Person {
  private String id;
  private String name;
  private int age;
  public Person(String name, int age) {
    this.name = name;
    this.age = age;
  }
  public String getId() {
    return id;
  }
  public String getName() {
    return name;
  }
  public int getAge() {
    return age;
  }
  @Override
  public String toString() {
    return "Person [id=" + id + ", name=" + name + ", age=" + age + "]";
  }
}

You can save, update and delete the object as shown below.


package org.spring.example;
import static org.springframework.data.mongodb.core.query.Criteria.where;
import static org.springframework.data.mongodb.core.query.Update.update;
import static org.springframework.data.mongodb.core.query.Query.query;
import java.util.List;
import org.apache.commons.logging.Log;
import org.apache.commons.logging.LogFactory;
import org.springframework.data.mongodb.core.MongoOperations;
import org.springframework.data.mongodb.core.MongoTemplate;
import org.springframework.data.mongodb.core.SimpleMongoDbFactory;
import com.mongodb.Mongo;
public class MongoApp {
  private static final Log log = LogFactory.getLog(MongoApp.class);
  public static void main(String[] args) throws Exception {
    MongoOperations mongoOps = new MongoTemplate(new SimpleMongoDbFactory(new Mongo(), "database"));
    Person p = new Person("Joe", 34);
    // Insert is used to initially store the object into the database.
    mongoOps.insert(p);
    log.info("Insert: " + p);
    // Find
    p = mongoOps.findById(p.getId(), Person.class);
    log.info("Found: " + p);
    // Update
    mongoOps.updateFirst(query(where("name").is("Joe")), update("age", 35), Person.class);
    p = mongoOps.findOne(query(where("name").is("Joe")), Person.class);
    log.info("Updated: " + p);
    // Delete
    mongoOps.remove(p);
    // Check that deletion worked
    List<Person> people = mongoOps.findAll(Person.class);
    log.info("Number of people = : " + people.size());
    mongoOps.dropCollection(Person.class);
  }
}

This would produce the following log output (including debug messages from MongoTemplate itself):

DEBUG apping.MongoPersistentEntityIndexCreator: 80 - Analyzing class class org.spring.example.Person for index information.
DEBUG work.data.mongodb.core.MongoTemplate: 632 - insert DBObject containing fields: [_class, age, name] in collection: person
INFO org.spring.example.MongoApp: 30 - Insert: Person [id=4ddc6e784ce5b1eba3ceaf5c, name=Joe, age=34]
DEBUG work.data.mongodb.core.MongoTemplate:1246 - findOne using query: { "_id" : { "$oid" : "4ddc6e784ce5b1eba3ceaf5c"}} in db.collection: database.person
INFO org.spring.example.MongoApp: 34 - Found: Person [id=4ddc6e784ce5b1eba3ceaf5c, name=Joe, age=34]
DEBUG work.data.mongodb.core.MongoTemplate: 778 - calling update using query: { "name" : "Joe"} and update: { "$set" : { "age" : 35}} in collection: person