Welcome to Spring Boot for Apache Geode & VMware Tanzu GemFire.

Spring Boot for Apache Geode & VMware Tanzu GemFire provides the convenience of Spring Boot’s convention over configuration approach using auto-configuration with the Spring Framework’s powerful abstractions and highly consistent programming model to truly simplify the development of Apache Geode or VMware Tanzu GemFire applications in a Spring context.

Secondarily, Spring Boot for Apache Geode & VMware Tanzu GemFire aims to provide developers with a consistent experience whether building and running Spring Boot, Apache Geode & VMware Tanzu GemFire applications locally or in a managed environment, such as with Pivotal CloudFoundry (PCF).

This project is a continuation and logical extension of Spring Data for Apache Geode & VMware Tanzu GemFire’s Annotation-based configuration model and the goals set forth in that model: to enable application developers to get up and running as quickly and as easily as possible. In fact, Spring Boot for Apache Geode & VMware Tanzu GemFire builds on this very foundation, cemented in Spring Data for Apache Geode & VMware Tanzu GemFire (SDG [1]) since the Spring Data Kay Release Train.

1. Introduction

Spring Boot for Apache Geode & VMware Tanzu GemFire automatically applies auto-configuration to several key application concerns (Use Cases) including, but not limited to:

- Look-Aside, [Async] Inline, Near and Multi-Site Caching, using Apache Geode as a caching provider in Spring’s Cache Abstraction.

- System of Record (SOR), persisting application state reliably in Apache Geode using Spring Data Repositories.

- Transactions, managing application state consistently with Spring Transaction Management and SDG[1] with support for both Local Cache and Global JTA Transactions.

- Distributed Computations, run with Apache Geode’s Function Execution framework, and conveniently implemented and executed with SDG[1] POJO-based, annotation support for Functions.

- Continuous Queries, expressing interest in a stream of events, allowing applications to react to and process changes to data in near real-time with Apache Geode’s Continuous Query (CQ). Handlers are defined as simple Message-Driven POJOs (MDP) using Spring’s Message Listener Container, which has been extended in SDG[1] with its configurable CQ support.

- Data Serialization with Apache Geode PDX, including first-class configuration and support in SDG[1].

- Logging, to quickly and conveniently enable or adjust Apache Geode log levels in your Spring Boot application and gain insight into the runtime operations of the app as they occur.

- Security, including Authentication & Authorization as well as Transport Layer Security (TLS) using Apache Geode Secure Socket Layer (SSL). Once more, SDG[1] includes first-class support for configuring Auth and SSL.

- HTTP Session state management, by including Spring Session for Apache Geode on your application’s classpath.

While Spring Data for Apache Geode & VMware Tanzu GemFire offers a simple, consistent, convenient and declarative approach to configure all these powerful Apache Geode features, Spring Boot for Apache Geode & VMware Tanzu GemFire makes it even easier to do, as we will explore throughout this reference documentation.

1.1. Goals

While the SBDG project has many goals and objectives, the primary goals of this project center on three key principles:

- From Open Source (Apache Geode) to Commercial (VMware Tanzu GemFire)

- From Non-Managed (self-managed/hosted or on-premise installations) to Managed (VMware Tanzu GemFire for VMs, VMware Tanzu GemFire) environments

- With little to no code or configuration changes necessary

It is also possible to go in the reverse direction, from Managed back to a Non-Managed environment and even from Commercial back to the Open Source offering, again, with little to no code or configuration changes; it simply just works!

| SBDG’s promise is to deliver on these principles as much as is technically possible and is technically allowed by Apache Geode. |

2. Getting Started

In order to be immediately productive and as effective as possible using Spring Boot for Apache Geode & VMware Tanzu GemFire, it is helpful to understand the foundation on which this project was built.

Of course, our story begins with the Spring Framework and the core technologies and concepts built into the Spring container.

Then, our journey continues with the extensions built into Spring Data for Apache Geode & VMware Tanzu GemFire (SDG[1]) to truly simplify the development of Apache Geode & VMware Tanzu GemFire applications in a Spring context, using Spring’s powerful abstractions and highly consistent programming model. This part of the story was greatly enhanced in Spring Data Kay, with the SDG[1] Annotation-based configuration model. Though this new configuration approach using annotations provides sensible defaults out-of-the-box, its use is also very explicit and assumes nothing. If any part of the configuration is ambiguous, SDG will fail fast. SDG gives you "choice", so you still must tell SDG[1] what you want.

Next, we venture into Spring Boot and all of its wonderfully expressive and highly opinionated "convention over configuration" approach for getting the most out of your Spring, Apache Geode/VMware Tanzu GemFire based applications in the easiest, quickest and most reliable way possible. We accomplish this by combining Spring Data for Apache Geode & VMware Tanzu GemFire’s Annotation-based configuration with Spring Boot’s auto-configuration to get you up and running even faster and more reliably so that you are productive from the start.

As such, it would be pertinent to begin your Spring Boot education here.

Finally, we arrive at Spring Boot for Apache Geode & VMware Tanzu GemFire (SBDG).

3. Using Spring Boot for Apache Geode

To use Spring Boot for Apache Geode, simply declare the spring-geode-starter on your Spring Boot application classpath:

Maven

    <dependencies>
        <dependency>
            <groupId>org.springframework.geode</groupId>
            <artifactId>spring-geode-starter</artifactId>
            <version>1.3.12.RELEASE</version>
        </dependency>
    </dependencies>

Gradle

    dependencies {
        implementation 'org.springframework.geode:spring-geode-starter:1.3.12.RELEASE'
    }

3.1. Maven BOM

If you anticipate using more than 1 Spring Boot for Apache Geode (SBDG) module in your Spring Boot application, then you can also use the new org.springframework.geode:spring-geode-bom Maven BOM in your application Maven POM.

Your application use case(s) may require more than 1 module if, for example, you need (HTTP) Session state management and replication (e.g. spring-geode-starter-session), or you need to enable Spring Boot Actuator endpoints for Apache Geode (e.g. spring-geode-starter-actuator), or perhaps you need assistance writing complex Unit and (distributed) Integration Tests using Spring Test for Apache Geode (STDG) (e.g. spring-geode-starter-test).

You can declare (include) and use any 1 of the SBDG modules:

- spring-geode-starter

- spring-geode-starter-actuator

- spring-geode-starter-logging

- spring-geode-starter-session

- spring-geode-starter-test

When more than 1 SBDG module is in play, then it makes sense to use the spring-geode-bom to manage all the dependencies so that the versions and transitive dependencies necessarily align properly.

Your Spring Boot application Maven POM using the spring-geode-bom along with 2 or more module dependencies might appear as follows:

    <project xmlns="http://maven.apache.org/POM/4.0.0">

        <modelVersion>4.0.0</modelVersion>

        <parent>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-parent</artifactId>
            <version>2.3.12.RELEASE</version>
        </parent>

        <artifactId>my-spring-boot-application</artifactId>

        <properties>
            <spring-geode.version>1.3.12.RELEASE</spring-geode.version>
        </properties>

        <dependencyManagement>
            <dependencies>
                <dependency>
                    <groupId>org.springframework.geode</groupId>
                    <artifactId>spring-geode-bom</artifactId>
                    <version>${spring-geode.version}</version>
                    <scope>import</scope>
                    <type>pom</type>
                </dependency>
            </dependencies>
        </dependencyManagement>

        <dependencies>
            <dependency>
                <groupId>org.springframework.geode</groupId>
                <artifactId>spring-geode-starter</artifactId>
            </dependency>
            <dependency>
                <groupId>org.springframework.geode</groupId>
                <artifactId>spring-geode-starter-session</artifactId>
            </dependency>
            <dependency>
                <groupId>org.springframework.geode</groupId>
                <artifactId>spring-geode-starter-test</artifactId>
                <scope>test</scope>
            </dependency>
        </dependencies>

    </project>

Notice that 1) the Spring Boot application Maven POM (pom.xml) contains a <dependencyManagement> section declaring the org.springframework.geode:spring-geode-bom and that 2) none of the spring-geode-starter[-xyz] dependencies explicitly specify a <version>, which is 3) managed by the spring-geode.version property, making it easy to switch between versions of SBDG as needed, applied evenly to all the SBDG modules declared and used in your application Maven POM.

If you change the version of SBDG, make sure to change the org.springframework.boot:spring-boot-starter-parent POM version to match. SBDG is always 1 major version behind, but matches on minor version and patch version (and version qualifier, e.g. SNAPSHOT, M#, RC#, or RELEASE, if applicable).

For example, SBDG 1.4.0 is based on Spring Boot 2.4.0. SBDG 1.3.5.RELEASE is based on Spring Boot 2.3.5.RELEASE and so on. It is important that the versions align.
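As a minimal sketch of the versioning rule above, the following Java helper derives the Spring Boot version that matches a given SBDG version by incrementing the major version and keeping everything else. The class and method names (SbdgVersionAlignment, springBootVersionFor) are hypothetical and not part of SBDG, and the sketch assumes versions of the form major.minor.patch followed by an optional qualifier.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper for illustration only; it is not part of SBDG or Spring Boot.
final class SbdgVersionAlignment {

    // major.minor.patch followed by an optional qualifier (e.g. ".RELEASE" or "-SNAPSHOT").
    private static final Pattern VERSION = Pattern.compile("(\\d+)\\.(\\d+)\\.(\\d+)(.*)");

    // Returns the Spring Boot version that the given SBDG version is based on.
    static String springBootVersionFor(String sbdgVersion) {
        Matcher matcher = VERSION.matcher(sbdgVersion.trim());
        if (!matcher.matches()) {
            throw new IllegalArgumentException("Unrecognized version: " + sbdgVersion);
        }
        int sbdgMajor = Integer.parseInt(matcher.group(1));
        // Spring Boot is 1 major version ahead; minor, patch and qualifier are unchanged.
        return (sbdgMajor + 1) + "." + matcher.group(2) + "." + matcher.group(3) + matcher.group(4);
    }

    public static void main(String[] args) {
        System.out.println(springBootVersionFor("1.3.5.RELEASE"));
        System.out.println(springBootVersionFor("1.4.0"));
    }
}
```

A check like this could run in a build script or a test to catch a mismatched spring-boot-starter-parent version early.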

Of course, all of these concerns are handled for you when you go to start.spring.io and add the "Spring for Apache Geode" dependency.

Click on this link to get started!

3.2. Gradle Dependency Management

The user experience when using Gradle is similar to that of Maven.

Again, if you will be declaring and using more than 1 SBDG module in your Spring Boot application, for example, the spring-geode-starter along with the spring-geode-starter-actuator dependency, then using the spring-geode-bom inside your application Gradle build file will help.

Your application Gradle build file configuration will roughly appear as follows:

    plugins {
        id 'org.springframework.boot' version '2.3.12.RELEASE'
        id 'io.spring.dependency-management' version '1.0.10.RELEASE'
        id 'java'
    }

    // ...

    ext {
        set('springGeodeVersion', "1.3.12.RELEASE")
    }

    dependencies {
        implementation 'org.springframework.geode:spring-geode-starter'
        implementation 'org.springframework.geode:spring-geode-starter-actuator'
        testImplementation 'org.springframework.boot:spring-boot-starter-test'
    }

    dependencyManagement {
        imports {
            mavenBom "org.springframework.geode:spring-geode-bom:${springGeodeVersion}"
        }
    }

A combination of the Spring Boot Gradle Plugin and the Spring Dependency Management Gradle Plugin manages the application dependencies for you.

In a nutshell, the Spring Dependency Management Gradle Plugin provides dependency management capabilities for Gradle much like Maven. The Spring Boot Gradle Plugin defines a curated and tested set of versions for many 3rd party Java libraries. Together they make adding dependencies and managing (compatible) versions easier!

Again, you don’t need to explicitly declare the version when adding a dependency, including a new SBDG module dependency (e.g. spring-geode-starter-session), since this has already been determined for you. You can simply declare the dependency:

    implementation 'org.springframework.geode:spring-geode-starter-session'

The version of SBDG is controlled by the extension property (springGeodeVersion) in the application Gradle build file.

To use a different version of SBDG, simply set the springGeodeVersion property to the desired version (e.g. 1.3.5.RELEASE). Of course, make sure the version of Spring Boot matches!

SBDG is always 1 major version behind, but matches on minor version and patch version (and version qualifier, e.g. SNAPSHOT, M#, RC#, or RELEASE, if applicable). For example, SBDG 1.4.0 is based on Spring Boot 2.4.0. SBDG 1.3.5.RELEASE is based on Spring Boot 2.3.5.RELEASE and so on. It is important that the versions align.

Of course, all of these concerns are handled for you when you go to start.spring.io and add the "Spring for Apache Geode" dependency.

Click on this link to get started!

4. Building ClientCache Applications

The first, opinionated option provided to you by Spring Boot for Apache Geode (SBDG) out-of-the-box is a ClientCache instance, simply by declaring Spring Boot for Apache Geode (SBDG) on your application classpath.

It is assumed that most application developers using Spring Boot to build applications backed by Apache Geode will be building cache client applications deployed in an Apache Geode Client/Server topology. A client/server topology is the most common and traditional architecture employed by enterprise applications.

For example, you can begin building a Spring Boot, Apache Geode ClientCache application with either the spring-geode-starter or spring-gemfire-starter on your application’s classpath:

    <dependency>
        <groupId>org.springframework.geode</groupId>
        <artifactId>spring-geode-starter</artifactId>
    </dependency>

Then, you configure and bootstrap your Spring Boot, Apache Geode ClientCache application with the following main application class:

ClientCache Application

    @SpringBootApplication
    public class SpringBootApacheGeodeClientCacheApplication {

        public static void main(String[] args) {
            SpringApplication.run(SpringBootApacheGeodeClientCacheApplication.class, args);
        }
    }

Your application now has a ClientCache instance, which is able to connect to an Apache Geode server running on localhost, listening on the default CacheServer port, 40404.

By default, an Apache Geode server (i.e. CacheServer) must be running in order to use the ClientCache instance. However, it is perfectly valid to create a ClientCache instance and perform data access operations using LOCAL Regions. This is very useful during development.

| To develop with LOCAL Regions, you only need to define your cache Regions with the ClientRegionShortcut.LOCAL data management policy. |

When you are ready to switch from your local development environment (IDE) to a client/server architecture in a managed environment, you simply change the data management policy of the client Region from LOCAL back to the default, PROXY, or even to CACHING_PROXY, which causes data to be sent to and received from 1 or more servers.

| Compare and contrast the above configuration with Spring Data for Apache Geode’s approach. |

It is uncommon to need a direct reference to the ClientCache instance provided by SBDG injected into your application components (e.g. @Service or @Repository beans defined in a Spring ApplicationContext), whether you are configuring additional GemFire/Geode objects (e.g. Regions, Indexes, etc) or simply using those objects indirectly in your applications. However, it is possible to do so if and when needed.

For example, perhaps you want to perform some additional ClientCache initialization in a Spring Boot ApplicationRunner on startup:

GemFireCache reference

    import org.apache.geode.cache.GemFireCache;
    import org.apache.geode.cache.client.ClientCache;
    import org.springframework.boot.ApplicationRunner;
    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.context.annotation.Bean;

    @SpringBootApplication
    public class SpringBootApacheGeodeClientCacheApplication {

        public static void main(String[] args) {
            SpringApplication.run(SpringBootApacheGeodeClientCacheApplication.class, args);
        }

        // The cache bean provided by SBDG is injected as a method parameter.
        @Bean
        ApplicationRunner runAdditionalClientCacheInitialization(GemFireCache gemfireCache) {

            return args -> {

                ClientCache clientCache = (ClientCache) gemfireCache;

                // perform additional ClientCache initialization as needed
            };
        }
    }

4.1. Building Embedded (Peer & Server) Cache Applications

What if you want to build an embedded, peer Cache application instead?

Perhaps you need an actual peer cache member, configured and bootstrapped with Spring Boot, along with the ability to join this member to an existing cluster (of data servers) as a peer node. Well, you can do that too.

Remember the 2nd goal in Spring Boot’s documentation:

Be opinionated out of the box but get out of the way quickly as requirements start to diverge from the defaults.

It is the 2nd part, "get out of the way quickly as requirements start to diverge from the defaults" that we refer to here.

If your application requirements demand you use Spring Boot to configure and bootstrap an embedded, peer Cache Apache Geode application, then simply declare your intention with either SDG’s @PeerCacheApplication annotation, or alternatively, if you need to enable connections from ClientCache apps as well, use the SDG @CacheServerApplication annotation:

    @SpringBootApplication
    @CacheServerApplication(name = "MySpringBootApacheGeodeCacheServerApplication")
    public class SpringBootApacheGeodeCacheServerApplication {

        public static void main(String[] args) {
            SpringApplication.run(SpringBootApacheGeodeCacheServerApplication.class, args);
        }
    }

| An Apache Geode "server" is not necessarily a “CacheServer” capable of serving cache clients. It is merely a peer member node in a GemFire/Geode cluster (a.k.a. distributed system) that stores and manages data. |

By explicitly declaring the @CacheServerApplication annotation, you are telling Spring Boot that you do not want the default, ClientCache instance, but rather an embedded, peer Cache instance with a CacheServer component, which enables connections from ClientCache apps.

You can also enable 2 other GemFire/Geode services, an embedded Locator, which allows clients or even other peers to "locate" servers in a cluster, as well as an embedded Manager, which allows the GemFire/Geode application process to be managed and monitored using Gfsh, GemFire/Geode’s shell tool:

CacheServer Application with Locator and Manager services enabled

    @SpringBootApplication
    @CacheServerApplication(name = "SpringBootApacheGeodeCacheServerApplication")
    @EnableLocator
    @EnableManager
    public class SpringBootApacheGeodeCacheServerApplication {

        public static void main(String[] args) {
            SpringApplication.run(SpringBootApacheGeodeCacheServerApplication.class, args);
        }
    }

Then, you can use Gfsh to connect to and manage this server:

$ echo $GEMFIRE

/Users/jblum/pivdev/apache-geode-1.2.1

$ gfsh

_________________________ __

/ _____/ ______/ ______/ /____/ /

/ / __/ /___ /_____ / _____ /

/ /__/ / ____/ _____/ / / / /

/______/_/ /______/_/ /_/ 1.2.1

Monitor and Manage Apache Geode

gfsh>connect

Connecting to Locator at [host=localhost, port=10334] ..

Connecting to Manager at [host=10.0.0.121, port=1099] ..

Successfully connected to: [host=10.0.0.121, port=1099]

gfsh>list members

Name | Id

------------------------------------------- | --------------------------------------------------------------------------

SpringBootApacheGeodeCacheServerApplication | 10.0.0.121(SpringBootApacheGeodeCacheServerApplication:29798)<ec><v0>:1024

gfsh>

gfsh>describe member --name=SpringBootApacheGeodeCacheServerApplication

Name : SpringBootApacheGeodeCacheServerApplication

Id : 10.0.0.121(SpringBootApacheGeodeCacheServerApplication:29798)<ec><v0>:1024

Host : 10.0.0.121

Regions :

PID : 29798

Groups :

Used Heap : 168M

Max Heap : 3641M

Working Dir : /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build

Log file : /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build

Locators : localhost[10334]

Cache Server Information

Server Bind :

Server Port : 40404

Running : true

Client Connections : 0

You can even start additional servers in Gfsh, which will connect to your Spring Boot configured and bootstrapped Apache Geode CacheServer application. These additional servers started in Gfsh know about the Spring Boot, Apache Geode server because of the embedded Locator service, which is running on localhost, listening on the default Locator port, 10334:

gfsh>start server --name=GfshServer --log-level=config --disable-default-server

Starting a Geode Server in /Users/jblum/pivdev/lab/GfshServer...

...

Server in /Users/jblum/pivdev/lab/GfshServer on 10.0.0.121 as GfshServer is currently online.

Process ID: 30031

Uptime: 3 seconds

Geode Version: 1.2.1

Java Version: 1.8.0_152

Log File: /Users/jblum/pivdev/lab/GfshServer/GfshServer.log

JVM Arguments: -Dgemfire.default.locators=10.0.0.121:127.0.0.1[10334] -Dgemfire.use-cluster-configuration=true -Dgemfire.start-dev-rest-api=false -Dgemfire.log-level=config -XX:OnOutOfMemoryError=kill -KILL %p -Dgemfire.launcher.registerSignalHandlers=true -Djava.awt.headless=true -Dsun.rmi.dgc.server.gcInterval=9223372036854775806

Class-Path: /Users/jblum/pivdev/apache-geode-1.2.1/lib/geode-core-1.2.1.jar:/Users/jblum/pivdev/apache-geode-1.2.1/lib/geode-dependencies.jar

gfsh>list members

Name | Id

------------------------------------------- | --------------------------------------------------------------------------

SpringBootApacheGeodeCacheServerApplication | 10.0.0.121(SpringBootApacheGeodeCacheServerApplication:29798)<ec><v0>:1024

GfshServer | 10.0.0.121(GfshServer:30031)<v1>:1025

Perhaps you want to start the other way around. As a developer, you may need to connect your Spring Boot configured and bootstrapped GemFire/Geode server application to an existing cluster. You can start the cluster in Gfsh by executing the following commands:

gfsh>start locator --name=GfshLocator --port=11235 --log-level=config

Starting a Geode Locator in /Users/jblum/pivdev/lab/GfshLocator...

...

Locator in /Users/jblum/pivdev/lab/GfshLocator on 10.0.0.121[11235] as GfshLocator is currently online.

Process ID: 30245

Uptime: 3 seconds

Geode Version: 1.2.1

Java Version: 1.8.0_152

Log File: /Users/jblum/pivdev/lab/GfshLocator/GfshLocator.log

JVM Arguments: -Dgemfire.log-level=config -Dgemfire.enable-cluster-configuration=true -Dgemfire.load-cluster-configuration-from-dir=false -Dgemfire.launcher.registerSignalHandlers=true -Djava.awt.headless=true -Dsun.rmi.dgc.server.gcInterval=9223372036854775806

Class-Path: /Users/jblum/pivdev/apache-geode-1.2.1/lib/geode-core-1.2.1.jar:/Users/jblum/pivdev/apache-geode-1.2.1/lib/geode-dependencies.jar

Successfully connected to: JMX Manager [host=10.0.0.121, port=1099]

Cluster configuration service is up and running.

gfsh>start server --name=GfshServer --log-level=config --disable-default-server

Starting a Geode Server in /Users/jblum/pivdev/lab/GfshServer...

....

Server in /Users/jblum/pivdev/lab/GfshServer on 10.0.0.121 as GfshServer is currently online.

Process ID: 30270

Uptime: 4 seconds

Geode Version: 1.2.1

Java Version: 1.8.0_152

Log File: /Users/jblum/pivdev/lab/GfshServer/GfshServer.log

JVM Arguments: -Dgemfire.default.locators=10.0.0.121[11235] -Dgemfire.use-cluster-configuration=true -Dgemfire.start-dev-rest-api=false -Dgemfire.log-level=config -XX:OnOutOfMemoryError=kill -KILL %p -Dgemfire.launcher.registerSignalHandlers=true -Djava.awt.headless=true -Dsun.rmi.dgc.server.gcInterval=9223372036854775806

Class-Path: /Users/jblum/pivdev/apache-geode-1.2.1/lib/geode-core-1.2.1.jar:/Users/jblum/pivdev/apache-geode-1.2.1/lib/geode-dependencies.jar

gfsh>list members

Name | Id

----------- | --------------------------------------------------

GfshLocator | 10.0.0.121(GfshLocator:30245:locator)<ec><v0>:1024

GfshServer | 10.0.0.121(GfshServer:30270)<v1>:1025

Then, modify the SpringBootApacheGeodeCacheServerApplication class to connect to the existing cluster, like so:

CacheServer Application connecting to an existing cluster

    @SpringBootApplication
    @CacheServerApplication(name = "SpringBootApacheGeodeCacheServerApplication", locators = "localhost[11235]")
    public class SpringBootApacheGeodeCacheServerApplication {

        public static void main(String[] args) {
            SpringApplication.run(SpringBootApacheGeodeCacheServerApplication.class, args);
        }
    }

| Notice that the locators property of the @CacheServerApplication annotation on the SpringBootApacheGeodeCacheServerApplication class is set to the host and port (i.e. "localhost[11235]") on which the Locator was started using Gfsh. |
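The locators property uses the host[port] notation that also appears in the Gfsh output (for example, localhost[11235]), and several Locators can be listed separated by commas. The following sketch is purely illustrative: it uses a hypothetical LocatorEndpoints class rather than any SDG or Apache Geode API, and parses such a string into host and port pairs so that a typo can be caught before the application starts. It handles only the plain host[port] form.

```java
import java.net.InetSocketAddress;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper for illustration only; it is not part of SDG, SBDG or Apache Geode.
final class LocatorEndpoints {

    // A single endpoint in host[port] notation, optionally surrounded by whitespace.
    private static final Pattern HOST_PORT = Pattern.compile("\\s*([^\\[\\],\\s]+)\\[(\\d+)\\]\\s*");

    // Parses a comma-separated list such as "localhost[10334],localhost[11235]".
    static List<InetSocketAddress> parse(String locators) {
        List<InetSocketAddress> endpoints = new ArrayList<>();
        for (String element : locators.split(",")) {
            Matcher matcher = HOST_PORT.matcher(element);
            if (!matcher.matches()) {
                throw new IllegalArgumentException("Expected host[port] but was: " + element);
            }
            // createUnresolved avoids a DNS lookup and rejects ports outside 0-65535.
            endpoints.add(InetSocketAddress.createUnresolved(
                matcher.group(1), Integer.parseInt(matcher.group(2))));
        }
        return endpoints;
    }

    public static void main(String[] args) {
        for (InetSocketAddress endpoint : parse("localhost[10334], localhost[11235]")) {
            System.out.println(endpoint.getHostString() + " listens on " + endpoint.getPort());
        }
    }
}
```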

After running your Spring Boot, Apache Geode CacheServer application again, and then running list members in Gfsh, you should see:

gfsh>list members

Name | Id

------------------------------------------- | ----------------------------------------------------------------------

GfshLocator | 10.0.0.121(GfshLocator:30245:locator)<ec><v0>:1024

GfshServer | 10.0.0.121(GfshServer:30270)<v1>:1025

SpringBootApacheGeodeCacheServerApplication | 10.0.0.121(SpringBootApacheGeodeCacheServerApplication:30279)<v2>:1026

gfsh>describe member --name=SpringBootApacheGeodeCacheServerApplication

Name : SpringBootApacheGeodeCacheServerApplication

Id : 10.0.0.121(SpringBootApacheGeodeCacheServerApplication:30279)<v2>:1026

Host : 10.0.0.121

Regions :

PID : 30279

Groups :

Used Heap : 165M

Max Heap : 3641M

Working Dir : /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build

Log file : /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build

Locators : localhost[11235]

Cache Server Information

Server Bind :

Server Port : 40404

Running : true

Client Connections : 0

In both scenarios, the Spring Boot configured and bootstrapped Apache Geode server and the Gfsh Locator and Server formed a cluster.

While you can use either approach and Spring does not care, it is far more convenient to use Spring Boot and your IDE to form a small cluster while developing. By leveraging Spring profiles, it is far simpler and much faster to configure and start a small cluster.

Plus, this is useful for rapidly prototyping, testing and debugging your entire, end-to-end application and system architecture, all right from the comfort and familiarity of your IDE of choice. No additional tooling (e.g. Gfsh) or knowledge is required to get started quickly and easily.

Just build and run!

| Be careful to vary your port numbers for the embedded services, like the CacheServer, Locators and Manager, especially if you start multiple instances, otherwise you will run into a java.net.BindException due to port conflicts. |

| See the Appendix, Running an Apache Geode cluster using Spring Boot from your IDE for more details. |
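The port conflict described above is easy to reproduce with nothing but the JDK. The following sketch does not use any Apache Geode or Spring API, and the PortConflictDemo class and its method names are hypothetical, chosen only for illustration. It shows a second listener failing with a java.net.BindException on a port that is already bound, and one way to ask the operating system for an unused port.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.BindException;
import java.net.ServerSocket;

// Hypothetical, JDK-only illustration; embedded Geode services are not involved here.
final class PortConflictDemo {

    // Asks the operating system for a currently unused port by binding to port 0.
    static int findFreePort() {
        try (ServerSocket socket = new ServerSocket(0)) {
            return socket.getLocalPort();
        } catch (IOException cause) {
            throw new UncheckedIOException(cause);
        }
    }

    // Returns true if binding a second listener to an already bound port is rejected.
    static boolean secondBindFails() {
        try (ServerSocket first = new ServerSocket(0)) {
            try (ServerSocket second = new ServerSocket(first.getLocalPort())) {
                return false;
            } catch (BindException expected) {
                // Same failure an embedded service reports when its port is taken.
                return true;
            }
        } catch (IOException cause) {
            throw new UncheckedIOException(cause);
        }
    }

    public static void main(String[] args) {
        System.out.println("Second bind rejected: " + secondBindFails());
        System.out.println("Unused port: " + findFreePort());
    }
}
```

A port found this way could be handed to an embedded service through its port property. Keep in mind that another process may still claim the port between the lookup and the actual bind.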

4.2. Building Locator Applications

In addition to ClientCache, CacheServer and peer Cache applications, SDG, and by extension SBDG, now supports Locator-based, Spring Boot applications.

An Apache Geode Locator is a location-based service, or alternatively and more typically, a standalone process enabling clients to "locate" a cluster of Apache Geode servers to manage data. Many cache clients can connect to the same cluster in order to share data. Running multiple clients is common in a Microservices architecture where you need to scale-up the number of app instances to satisfy the demand.

A Locator is also used by joining members of an existing cluster to scale-out and increase capacity of the logically pooled system resources (i.e. Memory, CPU and Disk). A Locator maintains metadata that is sent to the clients to enable capabilities like single-hop data access, routing data access operations to the data node in the cluster maintaining the data of interest. A Locator also maintains load information for servers in the cluster, which enables the load to be uniformly distributed across the cluster while also providing fail-over services to a redundant member if the primary fails. A Locator provides many more benefits and you are encouraged to read the documentation for more details.

As shown above, a Locator service can be embedded within either a peer Cache or CacheServer, Spring Boot application using the SDG @EnableLocator annotation:

    @SpringBootApplication
    @CacheServerApplication
    @EnableLocator
    class SpringBootCacheServerWithEmbeddedLocatorApplication {
        // ...
    }

However, it is more common to start standalone Locator JVM processes. This is useful when you want to increase the resiliency of your cluster in the face of network and process failures, which are bound to happen. If a Locator JVM process crashes or gets severed from the cluster due to a network failure, then having multiple Locators provides a higher degree of availability (HA) through redundancy.

Not to worry though, if all Locators in the cluster go down, then the cluster will still remain intact. You simply won’t be able to add more peer members (i.e. scale-up the number of data nodes in the cluster) or connect any more clients. If all the Locators in the cluster go down, then it is safe to simply restart them only after a thorough diagnosis.

| Once a client receives metadata about the cluster of servers, then all data access operations are sent directly to servers in the cluster, not a Locator. Therefore, existing, connected clients will remain connected and operable. |

To configure and bootstrap Locator-based, Spring Boot applications as standalone JVM processes, use the following configuration:

    @SpringBootApplication
    @LocatorApplication
    class SpringBootApacheGeodeLocatorApplication {
        // ...
    }

Instead of using the @EnableLocator annotation, you now use the @LocatorApplication annotation.

The @LocatorApplication annotation works in the same way as the @PeerCacheApplication and @CacheServerApplication annotations, bootstrapping an Apache Geode process, overriding the default ClientCache instance provided by SBDG out-of-the-box.

| If your @SpringBootApplication class is annotated with @LocatorApplication, then it can only be a Locator and not a ClientCache, CacheServer or peer Cache application. If you need the application to function as a peer Cache, perhaps with an embedded CacheServer component and embedded Locator, then you need to follow the approach shown above using the @EnableLocator annotation with either the @PeerCacheApplication or @CacheServerApplication annotation. |

With our Spring Boot, Apache Geode Locator application, we can connect both Spring Boot configured and bootstrapped peer members (peer Cache, CacheServer and Locator applications) as well as Gfsh started Locators and Servers.

First, let’s start up 2 Locators using our Apache Geode Locator, Spring Boot application class.

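    import java.util.Scanner;

    import org.springframework.boot.WebApplicationType;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.boot.builder.SpringApplicationBuilder;
    import org.springframework.context.annotation.Configuration;
    import org.springframework.context.annotation.Profile;
    import org.springframework.data.gemfire.config.annotation.EnableManager;
    import org.springframework.data.gemfire.config.annotation.LocatorApplication;
    import org.springframework.geode.config.annotation.UseLocators;

    @UseLocators
    @SpringBootApplication
    @LocatorApplication(name = "SpringBootApacheGeodeLocatorApplication")
    public class SpringBootApacheGeodeLocatorApplication {

        public static void main(String[] args) {

            new SpringApplicationBuilder(SpringBootApacheGeodeLocatorApplication.class)
                .web(WebApplicationType.NONE)
                .build()
                .run(args);

            // Block until <enter> is pressed so the Locator process stays up.
            System.err.println("Press <enter> to exit!");

            new Scanner(System.in).nextLine();
        }

        // Only applied when the "manager" Spring profile is active.
        @Configuration
        @EnableManager(start = true)
        @Profile("manager")
        @SuppressWarnings("unused")
        static class ManagerConfiguration { }

    }

We also need to vary the configuration for each Locator app instance.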

Apache Geode requires each peer member in the cluster to be uniquely named. We can set the name of the Locator by using the spring.data.gemfire.locator.name SDG property set as a JVM System Property in your IDE’s Run Configuration Profile for the application main class like so: -Dspring.data.gemfire.locator.name=SpringLocatorOne. We name the second Locator app instance, "SpringLocatorTwo".

Additionally, we must vary the port numbers that the Locators use to listen for connections. By default, an Apache Geode Locator listens on port 10334. We can set the Locator port using the spring.data.gemfire.locator.port SDG property.

For our first Locator app instance (i.e. "SpringLocatorOne"), we also enable the "manager" Profile so that we can connect to the Locator using Gfsh.

Our IDE Run Configuration Profile for our first Locator app instance appears as:

-server -ea -Dspring.profiles.active=manager -Dspring.data.gemfire.locator.name=SpringLocatorOne -Dlogback.log.level=INFO

And our IDE Run Configuration Profile for our second Locator app instance appears as:

-server -ea -Dspring.profiles.active= -Dspring.data.gemfire.locator.name=SpringLocatorTwo -Dspring.data.gemfire.locator.port=11235 -Dlogback.log.level=INFO

You should see log output similar to the following when you start a Locator app instance:

. ____ _ __ _ _

/\\ / ___'_ __ _ _(_)_ __ __ _ \ \ \ \

( ( )\___ | '_ | '_| | '_ \/ _` | \ \ \ \

\\/ ___)| |_)| | | | | || (_| | ) ) ) )

' |____| .__|_| |_|_| |_\__, | / / / /

=========|_|==============|___/=/_/_/_/

:: Spring Boot :: (v2.2.0.BUILD-SNAPSHOT)

2019-09-01 11:02:48,707 INFO .SpringBootApacheGeodeLocatorApplication: 55 - Starting SpringBootApacheGeodeLocatorApplication on jblum-mbpro-2.local with PID 30077 (/Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/out/production/classes started by jblum in /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build)

2019-09-01 11:02:48,711 INFO .SpringBootApacheGeodeLocatorApplication: 651 - No active profile set, falling back to default profiles: default

2019-09-01 11:02:49,374 INFO xt.annotation.ConfigurationClassEnhancer: 355 - @Bean method LocatorApplicationConfiguration.exclusiveLocatorApplicationBeanFactoryPostProcessor is non-static and returns an object assignable to Spring's BeanFactoryPostProcessor interface. This will result in a failure to process annotations such as @Autowired, @Resource and @PostConstruct within the method's declaring @Configuration class. Add the 'static' modifier to this method to avoid these container lifecycle issues; see @Bean javadoc for complete details.

2019-09-01 11:02:49,919 INFO ode.distributed.internal.InternalLocator: 530 - Starting peer location for Distribution Locator on 10.99.199.24[11235]

2019-09-01 11:02:49,925 INFO ode.distributed.internal.InternalLocator: 498 - Starting Distribution Locator on 10.99.199.24[11235]

2019-09-01 11:02:49,926 INFO distributed.internal.tcpserver.TcpServer: 242 - Locator was created at Sun Sep 01 11:02:49 PDT 2019

2019-09-01 11:02:49,927 INFO distributed.internal.tcpserver.TcpServer: 243 - Listening on port 11235 bound on address 0.0.0.0/0.0.0.0

2019-09-01 11:02:49,928 INFO ternal.membership.gms.locator.GMSLocator: 162 - GemFire peer location service starting. Other locators: localhost[10334] Locators preferred as coordinators: true Network partition detection enabled: true View persistence file: /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build/locator11235view.dat

2019-09-01 11:02:49,928 INFO ternal.membership.gms.locator.GMSLocator: 416 - Peer locator attempting to recover from localhost/127.0.0.1:10334

2019-09-01 11:02:49,963 INFO ternal.membership.gms.locator.GMSLocator: 422 - Peer locator recovered initial membership of View[10.99.199.24(SpringLocatorOne:30043:locator)<ec><v0>:41000|0] members: [10.99.199.24(SpringLocatorOne:30043:locator)<ec><v0>:41000]

2019-09-01 11:02:49,963 INFO ternal.membership.gms.locator.GMSLocator: 407 - Peer locator recovered state from LocatorAddress [socketInetAddress=localhost/127.0.0.1:10334, hostname=localhost, isIpString=false]

2019-09-01 11:02:49,965 INFO ode.distributed.internal.InternalLocator: 644 - Starting distributed system

2019-09-01 11:02:50,007 INFO he.geode.internal.logging.LoggingSession: 82 -

---------------------------------------------------------------------------

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with this

work for additional information regarding copyright ownership.

The ASF licenses this file to You under the Apache License, Version 2.0

(the "License"); you may not use this file except in compliance with the

License. You may obtain a copy of the License at

https://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS, WITHOUT

WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the

License for the specific language governing permissions and limitations

under the License.

---------------------------------------------------------------------------

Build-Date: 2019-04-19 11:49:13 -0700

Build-Id: onichols 0

Build-Java-Version: 1.8.0_192

Build-Platform: Mac OS X 10.14.4 x86_64

Product-Name: Apache Geode

Product-Version: 1.9.0

Source-Date: 2019-04-19 11:11:31 -0700

Source-Repository: release/1.9.0

Source-Revision: c0a73d1cb84986d432003bd12e70175520e63597

Native version: native code unavailable

Running on: 10.99.199.24/10.99.199.24, 8 cpu(s), x86_64 Mac OS X 10.13.6

Communications version: 100

Process ID: 30077

User: jblum

Current dir: /Users/jblum/pivdev/spring-boot-data-geode/spring-geode-docs/build

Home dir: /Users/jblum

Command Line Parameters:

-ea

-Dspring.profiles.active=

-Dspring.data.gemfire.locator.name=SpringLocatorTwo

-Dspring.data.gemfire.locator.port=11235

-Dlogback.log.level=INFO

-javaagent:/Applications/IntelliJ IDEA 19 CE.app/Contents/lib/idea_rt.jar=51961:/Applications/IntelliJ IDEA 19 CE.app/Contents/bin

-Dfile.encoding=UTF-8

Class Path:

...

..

.

2019-09-01 11:02:54,112 INFO ode.distributed.internal.InternalLocator: 661 - Locator started on 10.99.199.24[11235]

2019-09-01 11:02:54,113 INFO ode.distributed.internal.InternalLocator: 769 - Starting server location for Distribution Locator on 10.99.199.24[11235]

2019-09-01 11:02:54,134 INFO nt.internal.locator.wan.LocatorDiscovery: 138 - Locator discovery task exchanged locator information 10.99.199.24[11235] with localhost[10334]: {-1=[10.99.199.24[10334]]}.

2019-09-01 11:02:54,242 INFO .SpringBootApacheGeodeLocatorApplication: 61 - Started SpringBootApacheGeodeLocatorApplication in 6.137470354 seconds (JVM running for 6.667)

Press <enter> to exit!

Next, start up the second Locator app instance (you should see log output similar to above). Then, connect to the cluster of Locators using Gfsh:

$ echo $GEMFIRE

/Users/jblum/pivdev/apache-geode-1.9.0

$ gfsh

_________________________ __

/ _____/ ______/ ______/ /____/ /

/ / __/ /___ /_____ / _____ /

/ /__/ / ____/ _____/ / / / /

/______/_/ /______/_/ /_/ 1.9.0

Monitor and Manage Apache Geode

gfsh>connect

Connecting to Locator at [host=localhost, port=10334] ..

Connecting to Manager at [host=10.99.199.24, port=1099] ..

Successfully connected to: [host=10.99.199.24, port=1099]

gfsh>list members

Name | Id

---------------- | ------------------------------------------------------------------------

SpringLocatorOne | 10.99.199.24(SpringLocatorOne:30043:locator)<ec><v0>:41000 [Coordinator]

SpringLocatorTwo | 10.99.199.24(SpringLocatorTwo:30077:locator)<ec><v1>:41001

Using our SpringBootApacheGeodeCacheServerApplication main class from the previous section, we can configure

and bootstrap an Apache Geode CacheServer application with Spring Boot and connect it to our cluster of Locators.

@SpringBootApplication

@CacheServerApplication(name = "SpringBootApacheGeodeCacheServerApplication")

@SuppressWarnings("unused")

public class SpringBootApacheGeodeCacheServerApplication {

public static void main(String[] args) {

new SpringApplicationBuilder(SpringBootApacheGeodeCacheServerApplication.class)

.web(WebApplicationType.NONE)

.build()

.run(args);

}

@Configuration

@UseLocators

@Profile("clustered")

static class ClusteredConfiguration { }

@Configuration

@EnableLocator

@EnableManager(start = true)

@Profile("!clustered")

static class LonerConfiguration { }

}

Simply enable the "clustered" Profile by using an IDE Run Profile Configuration similar to:

-server -ea -Dspring.profiles.active=clustered -Dspring.data.gemfire.name=SpringServer -Dspring.data.gemfire.cache.server.port=41414 -Dlogback.log.level=INFO

After the server starts up, you should see the new peer member in the cluster:

gfsh>list members

Name | Id

---------------- | ------------------------------------------------------------------------

SpringLocatorOne | 10.99.199.24(SpringLocatorOne:30043:locator)<ec><v0>:41000 [Coordinator]

SpringLocatorTwo | 10.99.199.24(SpringLocatorTwo:30077:locator)<ec><v1>:41001

SpringServer | 10.99.199.24(SpringServer:30216)<v2>:41002

Finally, we can even start additional Locators and Servers connected to this cluster using Gfsh:

gfsh>start locator --name=GfshLocator --port=12345 --log-level=config

Starting a Geode Locator in /Users/jblum/pivdev/lab/GfshLocator...

......

Locator in /Users/jblum/pivdev/lab/GfshLocator on 10.99.199.24[12345] as GfshLocator is currently online.

Process ID: 30259

Uptime: 5 seconds

Geode Version: 1.9.0

Java Version: 1.8.0_192

Log File: /Users/jblum/pivdev/lab/GfshLocator/GfshLocator.log

JVM Arguments: -Dgemfire.default.locators=10.99.199.24[11235],10.99.199.24[10334] -Dgemfire.enable-cluster-configuration=true -Dgemfire.load-cluster-configuration-from-dir=false -Dgemfire.log-level=config -Dgemfire.launcher.registerSignalHandlers=true -Djava.awt.headless=true -Dsun.rmi.dgc.server.gcInterval=9223372036854775806

Class-Path: /Users/jblum/pivdev/apache-geode-1.9.0/lib/geode-core-1.9.0.jar:/Users/jblum/pivdev/apache-geode-1.9.0/lib/geode-dependencies.jar

gfsh>start server --name=GfshServer --server-port=45454 --log-level=config

Starting a Geode Server in /Users/jblum/pivdev/lab/GfshServer...

...

Server in /Users/jblum/pivdev/lab/GfshServer on 10.99.199.24[45454] as GfshServer is currently online.

Process ID: 30295

Uptime: 2 seconds

Geode Version: 1.9.0

Java Version: 1.8.0_192

Log File: /Users/jblum/pivdev/lab/GfshServer/GfshServer.log

JVM Arguments: -Dgemfire.default.locators=10.99.199.24[11235],10.99.199.24[12345],10.99.199.24[10334] -Dgemfire.start-dev-rest-api=false -Dgemfire.use-cluster-configuration=true -Dgemfire.log-level=config -XX:OnOutOfMemoryError=kill -KILL %p -Dgemfire.launcher.registerSignalHandlers=true -Djava.awt.headless=true -Dsun.rmi.dgc.server.gcInterval=9223372036854775806

Class-Path: /Users/jblum/pivdev/apache-geode-1.9.0/lib/geode-core-1.9.0.jar:/Users/jblum/pivdev/apache-geode-1.9.0/lib/geode-dependencies.jar

gfsh>list members

Name | Id

---------------- | ------------------------------------------------------------------------

SpringLocatorOne | 10.99.199.24(SpringLocatorOne:30043:locator)<ec><v0>:41000 [Coordinator]

SpringLocatorTwo | 10.99.199.24(SpringLocatorTwo:30077:locator)<ec><v1>:41001

SpringServer | 10.99.199.24(SpringServer:30216)<v2>:41002

GfshLocator | 10.99.199.24(GfshLocator:30259:locator)<ec><v3>:41003

GfshServer | 10.99.199.24(GfshServer:30295)<v4>:41004

You must be careful to vary the ports and name your peer members appropriately. With Spring, and Spring Boot for Apache Geode (SBDG) in particular, it really is that easy!

4.3. Building Manager Applications

As discussed in the previous sections above, it is possible to enable a Spring Boot configured and bootstrapped Apache Geode peer member node in the cluster to function as a Manager.

An Apache Geode Manager is a peer member node in the cluster running the Management Service, allowing the cluster to be managed and monitored using JMX based tools, like Gfsh, JConsole or JVisualVM, for instance. Any tool that uses the JMX API can connect to and manage the GemFire/Geode cluster for whatever purpose.
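Since the Manager exposes the cluster through JMX, a plain JDK JMX client can connect to it. The sketch below assumes the Manager’s JMX connector is reachable through the conventional JMX-over-RMI URL form on port 1099, the Manager port Gfsh reported when connecting earlier. The ManagerJmxClient class is hypothetical, the URL form is an assumption rather than something this guide confirms, and a secured cluster would additionally need credentials, which are omitted here.

```java
import java.io.IOException;
import javax.management.MBeanServerConnection;
import javax.management.remote.JMXConnector;
import javax.management.remote.JMXConnectorFactory;
import javax.management.remote.JMXServiceURL;

// Hypothetical JDK-only JMX client; not part of SBDG, SDG or Apache Geode.
class ManagerJmxClient {

    // Builds the conventional JMX-over-RMI service URL (assumed form) for a Manager.
    static String managerUrl(String host, int port) {
        return String.format("service:jmx:rmi:///jndi/rmi://%s:%d/jmxrmi", host, port);
    }

    // Connects to the Manager and returns the number of MBeans it has registered.
    static int countMBeans(String host, int port) throws IOException {
        JMXServiceURL url = new JMXServiceURL(managerUrl(host, port));
        try (JMXConnector connector = JMXConnectorFactory.connect(url)) {
            MBeanServerConnection connection = connector.getMBeanServerConnection();
            return connection.getMBeanCount();
        }
    }

    public static void main(String[] args) throws IOException {
        // Requires a running Manager, e.g. a Locator started with the "manager" Profile.
        System.out.println("MBeans registered: " + countMBeans("localhost", 1099));
    }
}
```

Tools such as JConsole or JVisualVM perform the same kind of connection interactively; this sketch only shows that nothing Geode-specific is needed on the client side to reach the Management Service.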

The cluster may have more than 1 Manager for redundancy. Only server-side, peer member nodes in the cluster

may function as a Manager. Therefore, a ClientCache application cannot be a Manager.

To create a Manager, you use the SDG @EnableManager annotation.

The 3 primary uses of the @EnableManager annotation to create a Manager are:

@SpringBootApplication

@CacheServerApplication(name = "CacheServerManagerApplication")

@EnableManager(start = true)

class CacheServerManagerApplication {

// ...

}

@SpringBootApplication

@PeerCacheApplication(name = "PeerCacheManagerApplication")

@EnableManager(start = true)

class SpringBootPeerCacheManagerApplication {

// ...

}

@SpringBootApplication

@LocatorApplication(name = "LocatorManagerApplication")

@EnableManager(start = true)

class LocatorManagerApplication {

// ...

}

#1 creates a peer Cache instance with a CacheServer component accepting client connections along with

an embedded Manager enabling JMX clients to connect.

#2 creates only a peer Cache instance along with an embedded Manager. As a peer Cache with NO CacheServer

component, clients are not able to connect to this node. It is merely a server managing data.

#3 creates a Locator instance with an embedded Manager.

In all configuration arrangements, the Manager was configured to start immediately.

See the @EnableManager annotation Javadoc for additional configuration options.

As of Apache Geode 1.11.0, you must now include additional Geode dependencies on your Spring Boot application classpath to make your application a proper Apache Geode Manager in the cluster, particularly if you are also enabling the embedded HTTP service in the Manager.

The required dependencies are:

runtime "org.apache.geode:geode-http-service"

runtime "org.apache.geode:geode-web"

runtime "org.springframework.boot:spring-boot-starter-jetty"

The embedded HTTP service (implemented with the Eclipse Jetty Servlet Container) runs the Management (Admin) REST API, which is used by tooling, such as Gfsh, to connect to the cluster over HTTP. It also runs the Pulse Monitoring Tool.

Even if you do not start the embedded HTTP service (Jetty Servlet Container), a Manager still requires

the geode-http-service, geode-web and spring-boot-starter-jetty dependencies.

5. Auto-configuration

The following Spring Framework, Spring Data for Apache Geode & VMware Tanzu GemFire (SDG) and Spring Session for Apache Geode and VMware Tanzu GemFire (SSDG) Annotations are implicitly declared by Spring Boot for Apache Geode & VMware Tanzu GemFire’s (SBDG) Auto-configuration.

- @ClientCacheApplication

- @EnableGemfireCaching (or alternatively, Spring Framework’s @EnableCaching)

- @EnableContinuousQueries

- @EnableGemfireFunctionExecutions

- @EnableGemfireFunctions

- @EnableGemfireRepositories

- @EnableLogging

- @EnablePdx

- @EnableSecurity

- @EnableSsl

- @EnableGemFireHttpSession

This means you DO NOT need to explicitly declare any of these Annotations on your @SpringBootApplication class

since they are provided by SBDG already. The only reason you would explicitly declare any of these Annotations is if

you wanted to "override" Spring Boot’s, and in particular, SBDG’s Auto-configuration. Otherwise, it is unnecessary!

| You should read the chapter in Spring Boot’s Reference Documentation on Auto-configuration. |

| You should review the chapter in Spring Data for Apache Geode and VMware Tanzu GemFire’s (SDG) Reference Documentation on Annotation-based Configuration. For a quick reference, or an overview of Annotation-based Configuration, see here. |

| Refer to the corresponding Sample Guide and Code to see Spring Boot Auto-configuration for Apache Geode in action! |

5.1. Customizing Auto-configuration

You might ask, "How can I customize the Auto-configuration provided by SBDG if I do not explicitly declare the annotation?"

For example, you may want to customize the member’s "name". You know that the

@ClientCacheApplication annotation

provides the name attribute

so you can set the client member’s "name". But SBDG has already implicitly declared the @ClientCacheApplication

annotation via Auto-configuration on your behalf. What do you do?

Well, SBDG supplies a few very useful Annotations in this case.

For example, to set the (client or peer) member’s name, you can use the @UseMemberName annotation, like so:

@SpringBootApplication

@UseMemberName("MyMemberName")

class SpringBootClientCacheApplication {

// ...

}

Alternatively, you could set the spring.application.name or the spring.data.gemfire.name property in Spring Boot application.properties:

# Spring Boot application.properties

spring.application.name = MyMemberName

Or:

# Spring Boot application.properties

spring.data.gemfire.cache.name = MyMemberName

In general, there are 3 ways to customize configuration, even in the context of SBDG’s Auto-configuration:

- Using Annotations provided by SBDG for common and popular concerns (e.g. naming client or peer members with @UseMemberName, or enabling durable clients with @EnableDurableClient).

- Using well-known and documented Properties (e.g. spring.application.name, or spring.data.gemfire.name, or spring.data.gemfire.cache.name).

- Using Configurers (e.g. ClientCacheConfigurer).

| For the complete list of documented Properties, see here. |

5.2. Disabling Auto-configuration

Disabling Spring Boot Auto-configuration is explained in detail in Spring Boot’s Reference Guide.

Disabling SBDG Auto-configuration was also explained in detail.

In a nutshell, if you want to disable any Auto-configuration provided by either Spring Boot or SBDG,

then you can declare your intent in the @SpringBootApplication annotation, like so:

@SpringBootApplication(

exclude = { DataSourceAutoConfiguration.class, PdxAutoConfiguration.class }

)

class SpringBootClientCacheApplication {

// ...

}

| Make sure you understand what you are doing when you are "disabling" Auto-configuration. |

5.3. Overriding Auto-configuration

Overriding SBDG Auto-configuration was explained in detail as well.

In a nutshell, if you want to override the default Auto-configuration provided by SBDG then you must annotate

your @SpringBootApplication class with your intent.

For example, say you want to configure and bootstrap an Apache Geode CacheServer application (a peer;

not a client), then you would:

@SpringBootApplication

@CacheServerApplication

class SpringBootCacheServerApplication {

// ...

}

Even when you explicitly declare the @ClientCacheApplication annotation on your @SpringBootApplication class, like so:

@SpringBootApplication

@ClientCacheApplication

class SpringBootClientCacheApplication {

// ...

}

you are overriding SBDG’s Auto-configuration of the ClientCache instance. As a result, you have also implicitly consented to being responsible for other aspects of the configuration (e.g. Security)! Why?

This is because certain aspects of the configuration, such as Security (e.g. SSL), must be configured before the cache instance is created. And Spring Boot always applies user configuration before Auto-configuration, partly to determine what needs to be auto-configured in the first place.

| Especially make sure you understand what you are doing when you are "overriding" Auto-configuration. |

5.4. Replacing Auto-configuration

We will simply refer you to the Spring Boot Reference Guide on replacing Auto-configuration. See here.

5.5. Auto-configuration Explained

This section covers the SBDG provided Auto-configuration classes corresponding to the SDG Annotations in more detail.

To review the complete list of SBDG Auto-configuration classes, see here.

5.5.1. @ClientCacheApplication

The ClientCacheAutoConfiguration class

corresponds to the @ClientCacheApplication annotation.

SBDG starts with the opinion that application developers will primarily be building Apache Geode client applications using Spring Boot.

Technically, this means building Spring Boot applications with an Apache Geode ClientCache instance connected

to a dedicated cluster of Apache Geode servers that manage the data as part of a

client/server topology.

By way of example, this means you do not need to explicitly declare and annotate your @SpringBootApplication class

with SDG’s @ClientCacheApplication annotation, like so:

@SpringBootApplication

@ClientCacheApplication

class SpringBootClientCacheApplication {

// ...

}

This is because SBDG’s provided Auto-configuration class is already meta-annotated with SDG’s

@ClientCacheApplication annotation. Therefore, you simply need:

@SpringBootApplication

class SpringBootClientCacheApplication {

// ...

}

| Refer to SDG’s Reference Documentation for more details on Apache Geode cache applications, and client/server applications in particular. |

5.5.2. @EnableGemfireCaching

The CachingProviderAutoConfiguration class

corresponds to the @EnableGemfireCaching annotation.

If you simply used the core Spring Framework to configure Apache Geode as a caching provider in Spring’s Cache Abstraction, you would need to do this:

@SpringBootApplication

@EnableCaching

class CachingUsingApacheGeodeConfiguration {

@Bean

GemfireCacheManager cacheManager(GemFireCache cache) {

GemfireCacheManager cacheManager = new GemfireCacheManager();

cacheManager.setCache(cache);

return cacheManager;

}

}

If you were using Spring Data for Apache Geode’s @EnableGemfireCaching annotation, then the above configuration

could be simplified to:

@SpringBootApplication

@EnableGemfireCaching

class CachingUsingApacheGeodeConfiguration {

}

And, if you use SBDG, then you only need to do this:

@SpringBootApplication

class CachingUsingApacheGeodeConfiguration {

}

This allows you to focus on the areas in your application that would benefit from caching without having to enable the plumbing. Simply demarcate the service methods in your application that are good candidates for caching:

@Service

class CustomerService {

@Cacheable("CustomersByName")

Customer findBy(String name) {

// ...

}

}

| Refer to the documentation for more details. |

5.5.3. @EnableContinuousQueries

The ContinuousQueryAutoConfiguration class

corresponds to the @EnableContinuousQueries annotation.

Without having to enable anything, you simply annotate your application (POJO) component method(s) with the SDG @ContinuousQuery annotation to register a CQ and start receiving events. The method acts as a CqEvent handler or, in Apache Geode’s terms, as an implementation of CqListener.

@Component

class MyCustomerApplicationContinuousQueries {

@ContinuousQuery("SELECT customer.* FROM /Customers customer"

+ " WHERE customer.getSentiment().name().equalsIgnoreCase('UNHAPPY')")

public void handleUnhappyCustomers(CqEvent event) {

// ...

}

}

As shown above, you define the events you are interested in receiving by using an OQL query with a finely tuned query predicate describing the events of interest, and you implement the handler method to process the events (e.g. apply a credit to the customer’s account and follow up in email).

| Refer to the documentation for more details. |

5.5.4. @EnableGemfireFunctionExecutions & @EnableGemfireFunctions

The FunctionExecutionAutoConfiguration class

corresponds to both the @EnableGemfireFunctionExecutions

and @EnableGemfireFunctions annotations.

Whether you need to execute a Function

or implement a Function, SBDG will detect the Function

definition and auto-configure it appropriately for use in your Spring Boot application. You only need to define

the Function execution or implementation in a package below the main @SpringBootApplication class.

package example.app.functions;

@OnRegion(region = "Accounts")

interface MyCustomerApplicationFunctions {

void applyCredit(Customer customer);

}

Then you can inject the Function execution into any application component and use it:

package example.app.service;

@Service

class CustomerService {

@Autowired

private MyCustomerApplicationFunctions customerFunctions;

void analyzeCustomerSentiment(Customer customer) {

// ...

this.customerFunctions.applyCredit(customer);

// ...

}

}

The same pattern basically applies to Function implementations, except in the implementation case, SBDG "registers" the Function implementation for use (i.e. to be called by a Function execution).

The point is, you are simply focusing on defining the logic required by your application, and not worrying about how Functions are registered, called, etc. SBDG is handling this concern for you!

| Function implementations are typically defined and registered on the server-side. |

| Refer to the documentation for more details. |

5.5.5. @EnableGemfireRepositories

The GemFireRepositoriesAutoConfigurationRegistrar class

corresponds to the @EnableGemfireRepositories annotation.

As with Functions, you only need to be concerned with the data access operations (e.g. basic CRUD and simple Queries) required by your application to carry out its functions, not with how to create and perform them (e.g. Region.get(key) and Region.put(key, obj)) or execute them (e.g. Query.execute(arguments)).

Simply define your Spring Data Repository:

package example.app.repo;

interface CustomerRepository extends CrudRepository<Customer, Long> {

List<Customer> findBySentimentEqualTo(Sentiment sentiment);

}

And use it:

package example.app.service;

@Service

class CustomerService {

@Autowired

private CustomerRepository repository;

public void processCustomersWithSentiment(Sentiment sentiment) {

this.repository.findBySentimentEqualTo(sentiment).forEach(customer -> { /* ... */ });

// ...

}

}

Your application-specific Repository simply needs to be declared in a package below the main @SpringBootApplication

class. Again, you are only focusing on the data access operations and queries required to carry out the functions

of your application, nothing more.

| Refer to the documentation for more details. |

5.5.6. @EnableLogging

The LoggingAutoConfiguration class

corresponds to the @EnableLogging annotation.

Logging is an essential application concern for understanding what is happening in the system, along with when and where each event occurred. As such, SBDG auto-configures logging for Apache Geode by default, using the default log-level, "config".

If you wish to change an aspect of logging, such as the log-level, you would typically do this in Spring Boot

application.properties:

# Spring Boot application.properties

spring.data.gemfire.cache.log-level=debug

Other aspects may be configured as well, such as the log file size and disk space limits for the file system location used to store the Apache Geode log files at runtime.

Under the hood, Apache Geode’s logging is based on Log4j. Therefore, you can configure Apache Geode logging using

any logging provider (e.g. Logback) and configuration metadata appropriate for that logging provider so long as you

supply the necessary adapter between Log4j and whatever logging system you are using. For instance, if you include

org.springframework.boot:spring-boot-starter-logging then you will be using Logback and you will need the

org.apache.logging.log4j:log4j-to-slf4j adapter.

5.5.7. @EnablePdx

The PdxSerializationAutoConfiguration class

corresponds to the @EnablePdx annotation.

Anytime you need to send an object over the network, or overflow or persist an object to disk, your application domain object must be serializable. It would be painful to have to implement java.io.Serializable in every one of your application domain objects (e.g. Customer) that would potentially need to be serialized.

Furthermore, using Java Serialization may not be ideal (e.g. the most portable or efficient) in all cases, or even possible in other cases (e.g. when you are using a 3rd party library over which you have no control).

In these situations, you need to be able to send your object anywhere without unduly requiring the class type to be serializable or to exist on the classpath in every place it is sent. Indeed, the final destination may not even be a Java application! This is where Apache Geode PDX Serialization steps in to help.
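To make the Java Serialization limitation concrete, the following JDK-only sketch tries to serialize a domain object that does not implement java.io.Serializable. The JavaSerializationLimits class and its nested Customer type are hypothetical stand-ins for your own domain types; no Apache Geode or PDX API is used here, so this shows only the problem PDX is meant to address, not PDX itself.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.NotSerializableException;
import java.io.ObjectOutputStream;
import java.io.UncheckedIOException;

// Hypothetical demonstration class; not part of SBDG, SDG or Apache Geode.
class JavaSerializationLimits {

    // A plain domain type that deliberately does NOT implement java.io.Serializable.
    static class Customer {

        final Long id;
        final String name;

        Customer(Long id, String name) {
            this.id = id;
            this.name = name;
        }
    }

    // Returns true only if standard Java Serialization can write the given object.
    static boolean canJavaSerialize(Object value) {
        try (ObjectOutputStream out = new ObjectOutputStream(new ByteArrayOutputStream())) {
            out.writeObject(value);
            return true;
        } catch (NotSerializableException cause) {
            return false;
        } catch (IOException cause) {
            throw new UncheckedIOException(cause);
        }
    }

    public static void main(String[] args) {
        System.out.println("String: " + canJavaSerialize("Jon Doe"));
        System.out.println("Customer: " + canJavaSerialize(new Customer(1L, "Jon Doe")));
    }
}
```

Every type in the object graph must opt in to Java Serialization, which is exactly the burden described above for types you do not control.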

However, you don’t have to figure out how to configure PDX to identify the application class types that will need to be serialized. You simply define your class type:

@Region("Customers")

class Customer {

@Id

private Long id;

@Indexed

private String name;

// ...

}

And, SBDG’s Auto-configuration will handle the rest!

| Refer to the documentation for more details. |

5.5.8. @EnableSecurity

The ClientSecurityAutoConfiguration class and PeerSecurityAutoConfiguration class correspond to the @EnableSecurity annotation, but apply Security, and specifically Authentication/Authorization configuration, for both clients and servers.

Configuring your Spring Boot, Apache Geode ClientCache application to properly authenticate with a cluster of

secure Apache Geode servers is as simple as setting a username and password in Spring Boot

application.properties:

# Spring Boot application.properties

spring.data.gemfire.security.username=Batman

spring.data.gemfire.security.password=r0b!n5ucks

| Authentication is even easier to configure in a managed environment like PCF when using PCC; you don’t have to do anything! |

Authorization is configured on the server-side and is made simple with SBDG and the help of Apache Shiro. Of course, this assumes you are using SBDG to configure and bootstrap your Apache Geode cluster in the first place, which is possible, and made even easier with SBDG.

| Refer to the documentation for more details. |

5.5.9. @EnableSsl

The SslAutoConfiguration class

corresponds to the @EnableSsl annotation.

Configuring SSL for secure transport (TLS) between your Spring Boot, Apache Geode ClientCache application and the cluster can be a problematic task, especially to get correct from the start. So, it is something that SBDG makes simple to do out-of-the-box.

Simply supply a trusted.keystore file containing the certificates in a well-known location (e.g. root of your application classpath) and SBDG’s Auto-configuration will kick in and handle the rest.

This is useful during development, but we highly recommend using a more secure procedure (e.g. integrating with a secure credential store like LDAP, CredHub or Vault) when deploying your Spring Boot application to production.
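If SSL does not appear to take effect during development, one quick thing to rule out is whether trusted.keystore is actually visible at the root of the classpath. The following JDK-only sketch performs that check; the TrustedKeystoreCheck class is hypothetical and says nothing about how SBDG itself locates the file or about any other locations it may search.

```java
import java.net.URL;

// Hypothetical pre-flight check; not part of SBDG.
class TrustedKeystoreCheck {

    // Returns true if the named resource is visible from the root of the application classpath.
    static boolean isOnClasspathRoot(String resourceName) {
        URL location = TrustedKeystoreCheck.class.getClassLoader().getResource(resourceName);
        return location != null;
    }

    public static void main(String[] args) {
        String name = "trusted.keystore";
        String status = isOnClasspathRoot(name) ? "found" : "NOT found";
        System.out.println(name + " " + status + " at the classpath root");
    }
}
```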

| Refer to the documentation for more details. |

5.5.10. @EnableGemFireHttpSession

The SpringSessionAutoConfiguration class

corresponds to the @EnableGemFireHttpSession annotation.

Configuring Apache Geode to serve as the (HTTP) Session state caching provider using Spring Session is as simple

as including the correct starter, e.g. spring-geode-starter-session.

<dependency>

<groupId>org.springframework.geode</groupId>

<artifactId>spring-geode-starter-session</artifactId>

<version>1.3.12.RELEASE</version>

</dependency>

With Spring Session, and specifically Spring Session for Apache Geode (SSDG), on the classpath of your Spring

Boot, Apache Geode ClientCache Web application, you can manage your (HTTP) Session state with Apache Geode.

No further configuration is needed. SBDG Auto-configuration detects Spring Session on the application classpath

and does the right thing.

| Refer to the documentation for more details. |

5.5.11. RegionTemplateAutoConfiguration

The SBDG RegionTemplateAutoConfiguration class has no corresponding SDG Annotation. Nevertheless, SBDG auto-configures a GemfireTemplate for every Apache Geode Region defined and declared in your Spring Boot application.

For example, you can define a Region using:

@Configuration

class GeodeConfiguration {

@Bean("Customers")

ClientRegionFactoryBean<Long, Customer> customersRegion(GemFireCache cache) {

ClientRegionFactoryBean<Long, Customer> customersRegion =

new ClientRegionFactoryBean<>();

customersRegion.setCache(cache);

customersRegion.setShortcut(ClientRegionShortcut.PROXY);

return customersRegion;

}

}

Alternatively, you could define the "Customers" Region using:

@Configuration

@EnableEntityDefinedRegions(basePackageClasses = Customer.class)

class GeodeConfiguration {

}

Then, SBDG will supply a GemfireTemplate instance that you can use to perform low-level, data access operations

(indirectly) on the "Customers" Region:

@Repository

class CustomersDao {

@Autowired

@Qualifier("customersTemplate")

private GemfireTemplate customersTemplate;

Customer findById(Long id) {

return this.customerTemplate.get(id);

}

}You do not need to explicitly configure GemfireTemplates for each Region you need to have low-level data access to

(e.g. such as when you are not using the Spring Data Repository abstraction).

Be careful to "qualify" the GemfireTemplate for the Region you need data access to, especially given that you will

probably have more than one Region defined in your Spring Boot application.
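
In the example above, the template for the "Customers" Region is injected by the bean name "customersTemplate". Assuming that convention holds in general (the Region name with its first letter lower-cased, followed by "Template"), the qualifier for any Region could be derived as in the following sketch. The TemplateNames class is hypothetical and is not part of SBDG; if in doubt, check the bean names registered in your application context.

```java
// Hypothetical helper: mirrors the naming seen in the example above, not SBDG's source code.
class TemplateNames {

    // Lower-cases the first character of the Region name and appends "Template".
    static String templateBeanNameFor(String regionName) {

        if (regionName == null || regionName.isEmpty()) {
            throw new IllegalArgumentException("Region name is required");
        }

        return Character.toLowerCase(regionName.charAt(0)) + regionName.substring(1) + "Template";
    }

    public static void main(String[] args) {
        System.out.println(templateBeanNameFor("Customers")); // prints "customersTemplate"
        System.out.println(templateBeanNameFor("Orders"));    // prints "ordersTemplate"
    }
}
```

The resulting name is the value you would pass to @Qualifier when more than one GemfireTemplate bean is present.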

| Refer to the documentation for more details. |

6. Declarative Configuration

The primary purpose of any software development framework is to help you be productive as quickly and as easily as possible, and to do so in a reliable manner.

As application developers, we want a framework to provide constructs that are both intuitive and familiar so that their behaviors are boringly predictable. This provided convenience not only helps you hit the ground running in the right direction sooner but increases your focus on the application domain so you are able to better understand the problem you are trying to solve in the first place. Once the problem domain is well understood, you are more apt to make informed decisions about the design, which leads to better outcomes, faster.

This is exactly what Spring Boot’s auto-configuration provides for you: it enables features, services and supporting infrastructure for Spring applications in a loosely integrated way by using conventions (e.g. classpath) that ultimately help you keep your attention and focus on solving the problem at hand and not on the plumbing.

For example, if you are building a Web application, simply include the org.springframework.boot:spring-boot-starter-web

dependency on your application classpath. Not only will Spring Boot enable you to build Spring Web MVC Controllers

appropriate to your application use case (your responsibility), but it will also bootstrap your Web app in an embedded Servlet

Container on startup (Boot’s responsibility).

This saves you from having to handle many low-level, repetitive and tedious development tasks that are highly error-prone when you are simply trying to solve problems. You don’t have to care how the plumbing works until you do. And, when you do, you will be better informed and prepared to do so.

It is equally essential that frameworks, like Spring Boot, get out of the way quickly when application requirements diverge from the provided defaults. This is the beautiful and powerful thing about Spring Boot and why it is second to none in its class.

Still, auto-configuration does not solve every problem all the time. Therefore, you will need to use declarative configuration in some cases, whether expressed as bean definitions, in properties or by some other means. This is so frameworks don’t leave things to chance, especially when they are ambiguous. The framework simply gives you a choice.

Now that we have explained the motivation behind this chapter, let’s outline what we will discuss:

-

Refer you to the SDG Annotations covered by SBDG’s Auto-configuration

-

List all SDG Annotations not covered by SBDG’s Auto-configuration

-

Cover the SBDG, SSDG and SDG Annotations that must be declared explicitly and that provide the most value and productivity when getting started using Apache Geode in Spring [Boot] applications.

| SDG refers to Spring Data for Apache Geode. SSDG refers to Spring Session for Apache Geode and SBDG refers to Spring Boot for Apache Geode, this project. |

| The list of SDG Annotations covered by SBDG’s Auto-configuration is discussed in detail in the Appendix, in the section, Auto-configuration vs. Annotation-based configuration. |

To be absolutely clear about which SDG Annotations we are referring to, we mean the SDG Annotations in the package: org.springframework.data.gemfire.config.annotation.

Additionally, in subsequent sections, we will cover which Annotations are added by SBDG.

6.1. Auto-configuration

Auto-configuration was explained in complete detail in the chapter, "Auto-configuration".

6.2. Annotations not covered by Auto-configuration

The following SDG Annotations are not implicitly applied by SBDG’s Auto-configuration:

-

@EnableAutoRegionLookup

-

@EnableBeanFactoryLocator

-

@EnableCacheServer(s)

-

@EnableCachingDefinedRegions

-

@EnableClusterConfiguration

-

@EnableClusterDefinedRegions

-

@EnableCompression

-

@EnableDiskStore(s)

-

@EnableEntityDefinedRegions

-

@EnableEviction

-

@EnableExpiration

-

@EnableGatewayReceiver

-

@EnableGatewaySender(s)

-

@EnableGemFireAsLastResource

-

@EnableGemFireMockObjects

-

@EnableHttpService

-

@EnableIndexing

-

@EnableOffHeap

-

@EnableLocator

-

@EnableManager

-

@EnableMemcachedServer

-

@EnablePool(s)

-

@EnableRedisServer

-

@EnableStatistics

-

@UseGemFireProperties

| This was also covered here. |

Part of the reason is that several of the Annotations are server-specific:

-

@EnableCacheServer(s)

-

@EnableGatewayReceiver

-

@EnableGatewaySender(s)

-

@EnableHttpService

-

@EnableLocator

-

@EnableManager

-

@EnableMemcachedServer

-

@EnableRedisServer

And, we already stated that SBDG is opinionated about providing a ClientCache

instance out-of-the-box.

Other Annotations are driven by need, for example:

-

@EnableAutoRegionLookup & @EnableBeanFactoryLocator - really only useful when mixing configuration metadata formats, e.g. Spring config with GemFire cache.xml. This is usually only the case if you have legacy cache.xml config to begin with; otherwise, don’t do this!

-

@EnableCompression - requires the Snappy Compression Library on your application classpath.

-

@EnableDiskStore(s) - only used for overflow and persistence.

-

@EnableOffHeap - enables data to be stored in main memory outside the JVM heap, which is only useful when your application data (i.e. Objects stored in GemFire/Geode) are generally uniform in size.

-

@EnableGemFireAsLastResource - only needed in the context of JTA Transactions.

-

@EnableStatistics - useful if you need runtime metrics; however, enabling statistics gathering does consume considerable system resources (e.g. CPU & Memory).

Still other Annotations require more careful planning, for example:

-

@EnableEviction

-

@EnableExpiration

-

@EnableIndexing

One in particular is used exclusively for Unit Testing:

-

@EnableGemFireMockObjects

The bottom line is that a framework should not Auto-configure every possible feature, especially when the features consume additional system resources or require more careful planning as determined by the use case.

Still, all of these Annotations are available for the application developer to use when needed.

6.3. Productivity Annotations

This section calls out the Annotations we believe to be most beneficial for your application development purposes when using Apache Geode in Spring Boot applications.

6.3.1. @EnableClusterAware (SBDG)

The @EnableClusterAware annotation is arguably the most powerful and valuable Annotation in the set of Annotations!

When you annotate your main @SpringBootApplication class with @EnableClusterAware:

@SpringBootApplication
@EnableClusterAware
class SpringBootApacheGeodeClientCacheApplication { }

Your Spring Boot, Apache Geode ClientCache application is able to seamlessly switch between client/server

and local-only topologies with no code or configuration changes.

When a cluster of Apache Geode servers is detected, the client application will send and receive data to and from

the cluster. If a cluster is not available, then the client automatically switches to storing data locally

on the client using LOCAL Regions.
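
The switch described above can be pictured with a small, plain-Java sketch. It is not SBDG's implementation: the ClusterAwareSketch class, its methods and the RegionKind enum are hypothetical, and treating "a TCP connection to a known endpoint succeeds" as "a cluster is available" is a simplifying assumption made only for illustration.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical illustration only; not SBDG's actual implementation.
class ClusterAwareSketch {

    // Names mirror the PROXY and LOCAL client Region types mentioned in this guide.
    enum RegionKind { PROXY, LOCAL }

    // Returns true if a TCP connection to the given endpoint succeeds within the timeout.
    static boolean isClusterAvailable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        }
        catch (IOException cause) {
            return false;
        }
    }

    // Chooses where data lives: sent to the cluster (PROXY) or kept on the client (LOCAL).
    static RegionKind chooseRegionKind(boolean clusterAvailable) {
        return clusterAvailable ? RegionKind.PROXY : RegionKind.LOCAL;
    }

    public static void main(String[] args) {
        // 10334 is the default Apache Geode Locator port; the host is a placeholder.
        boolean available = isClusterAvailable("localhost", 10334, 500);
        System.out.println("Client Regions would be: " + chooseRegionKind(available));
    }
}
```

The point of the sketch is that the decision is made once, at startup, from the environment rather than from code or configuration, which is why the same application runs unchanged in your IDE and against a cluster.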

Additionally, the @EnableClusterAware annotation is meta-annotated with SDG’s

@EnableClusterConfiguration annotation.

The @EnableClusterConfiguration annotation enables configuration metadata defined on the client (e.g. Region and Index

definitions), as needed by the application based on requirements and use cases, to be sent to the cluster of servers.

If those schema objects are not already present, they will be created by the servers in the cluster in such a way that

the servers will remember the configuration on a restart as well as provide the configuration to new servers joining

the cluster when scaling out. This feature is careful not to stomp on any existing Region or Index objects already

present on the servers, particularly since you may already have data stored in the Regions.

The primary motivation behind the @EnableClusterAware annotation is to allow you to switch environments with very

little effort. It is a very common development practice to debug and test your application locally, in your IDE,

then push up to a production-like environment for more rigorous integration testing.

By default, the configuration metadata is sent to the cluster using a non-secure HTTP connection. You can configure the use of HTTPS, the host and port, and the data management policy used by the servers when creating Regions.
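
As a rough sketch of what such configuration might look like, the following declares SDG's @EnableClusterConfiguration annotation explicitly. The attribute names are given as we understand them from the SDG annotation and should be verified against the SDG version you use; the host, port and Region shortcut values, as well as the application class name, are placeholders chosen for illustration.

```java
import org.apache.geode.cache.RegionShortcut;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.data.gemfire.config.annotation.EnableClusterConfiguration;

// Sketch only: host, port and shortcut values are placeholders for illustration.
@SpringBootApplication
@EnableClusterConfiguration(
    useHttp = true,                                  // send the configuration metadata over HTTP(S)
    requireHttps = true,                             // insist on a secure connection
    host = "manager.example.com",                    // hypothetical host to push the configuration to
    port = 7070,                                     // hypothetical port on that host
    serverRegionShortcut = RegionShortcut.REPLICATE  // data management policy for Regions created on the servers
)
class SecureClusterConfigurationApplication { }
```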

| Refer to the section in the SDG Reference Guide on Configuring Cluster Configuration Push for more details. |

6.3.2. @EnableCachingDefinedRegions, @EnableClusterDefinedRegions & @EnableEntityDefinedRegions (SDG)

These Annotations are used to create Regions in the cache to manage your application data.

Of course, you can create Regions using Java configuration and the Spring API as follows:

@Bean("Customers")
ClientRegionFactoryBean<Long, Customer> customersRegion(GemFireCache cache) {

    ClientRegionFactoryBean<Long, Customer> customers = new ClientRegionFactoryBean<>();

    customers.setCache(cache);
    customers.setShortcut(ClientRegionShortcut.PROXY);

    return customers;
}

Or XML:

<gfe:client-region id="Customers" shortcut="PROXY"/>

However, using the provided Annotations is far easier, especially during development when the complete Region configuration may be unknown and you simply want to create a Region to persist your application data and move on.

@EnableCachingDefinedRegions

The @EnableCachingDefinedRegions annotation is used when you have application components registered in the Spring

Container that are annotated with Spring or JSR-107 (JCache) caching annotations.

Caches identified by name in the caching annotations are used to create Regions holding the data you want cached.

For example, given:

@Service
class CustomerService {

    @Cacheable(cacheNames = "CustomersByAccountNumber", key = "#account.number")
    Customer findBy(Account account) {
        // ...
    }
}

When your main @SpringBootApplication class is annotated with @EnableCachingDefinedRegions:

@SpringBootApplication

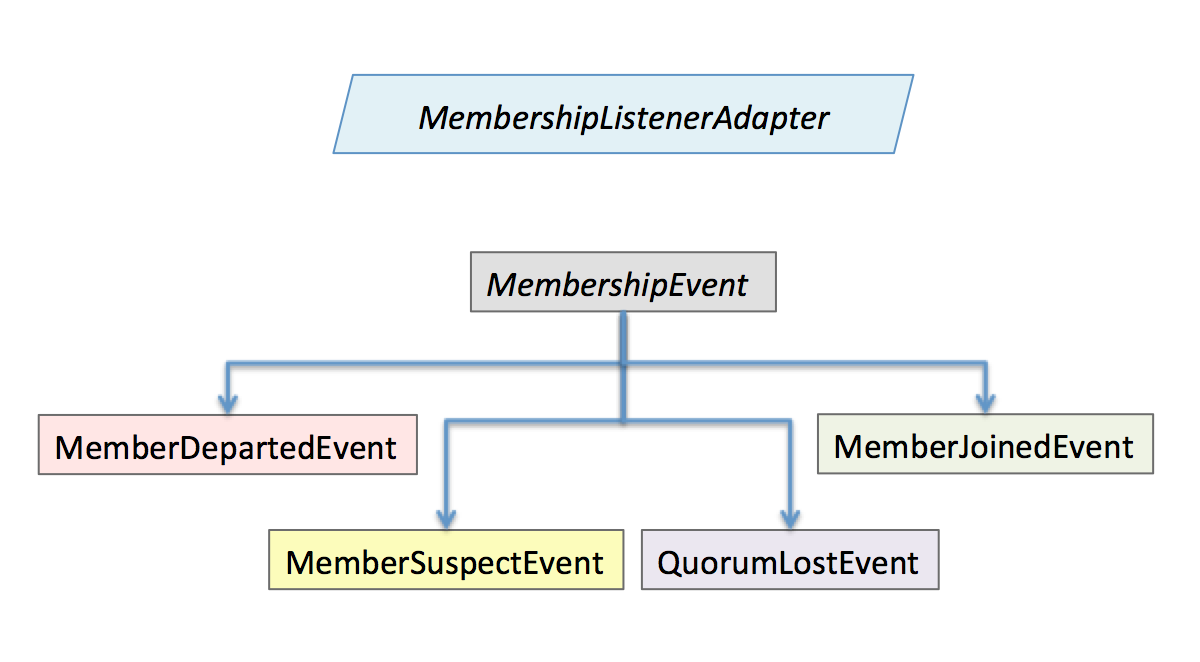

@EnableCachingDefinedRegions