Version 1.7.1.BUILD-SNAPSHOT

© 2012-2018 Pivotal Software, Inc.

Copies of this document may be made for your own use and for distribution to others, provided that you do not charge any fee for such copies and further provided that each copy contains this Copyright Notice, whether distributed in print or electronically.

Getting Started

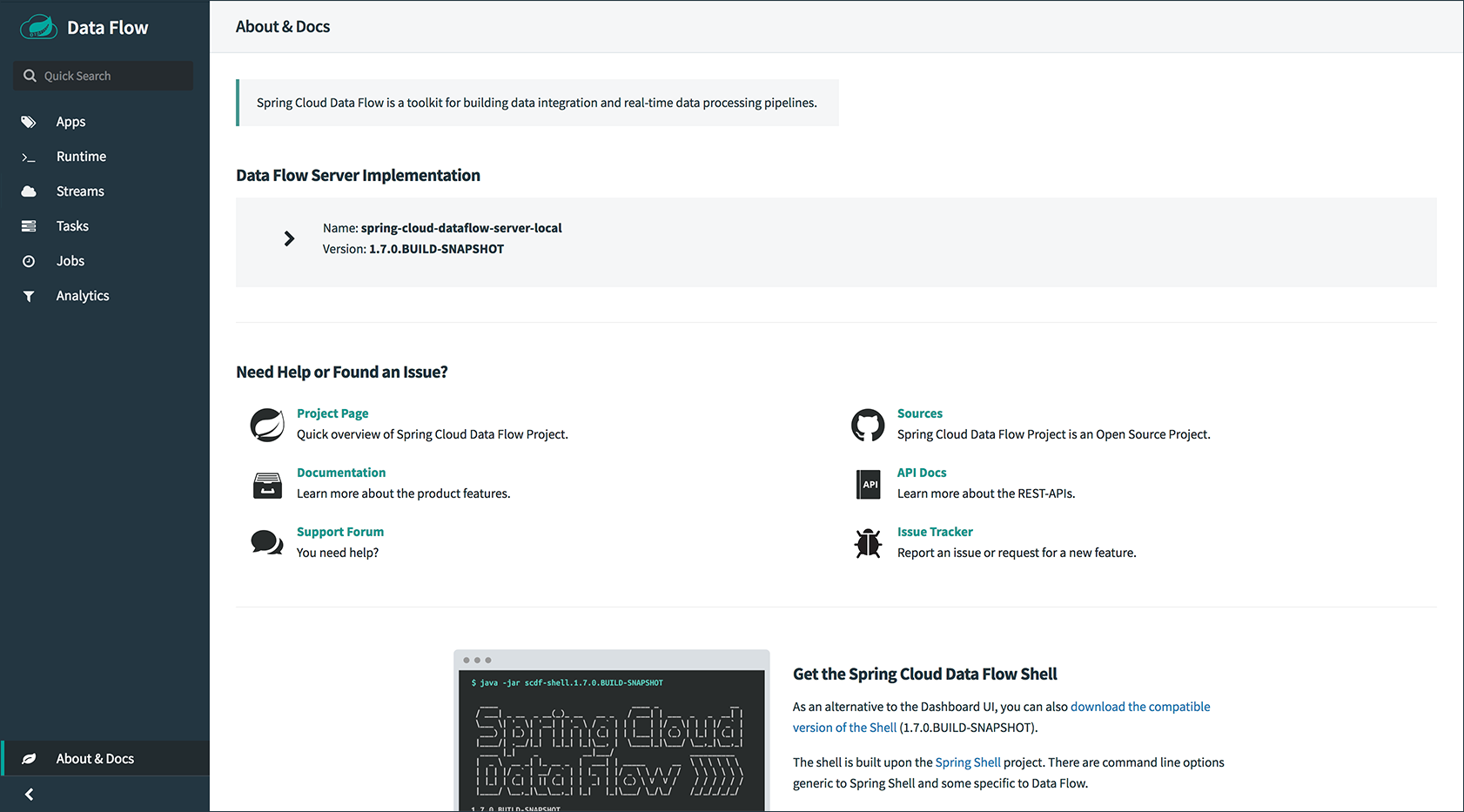

Spring Cloud Data Flow is a toolkit for building data integration and real-time data-processing pipelines.

Pipelines consist of Spring Boot apps, built with the Spring Cloud Stream or Spring Cloud Task microservice frameworks. This makes Spring Cloud Data Flow suitable for a range of data-processing use cases, from import-export to event-streaming and predictive analytics.

This project provides support for using Spring Cloud Data Flow with Kubernetes as the runtime for these pipelines, with applications packaged as Docker images.

1. Installation

This section covers how to install the Spring Cloud Data Flow Server on a Kubernetes cluster. Spring Cloud Data Flow depends on a few services and their availability. For example, we need an RDBMS service for the application registry, stream and task repositories, and task management. For streaming pipelines, we also need a messaging middleware option, such as Apache Kafka or RabbitMQ. In addition, if the analytics features are in use, we need a Redis service.

| This guide describes setting up an environment for testing Spring Cloud Data Flow on Google Kubernetes Engine and is not meant to be a definitive guide for setting up a production environment. Feel free to adjust the suggestions to fit your test setup. Remember that a production environment requires much more consideration for persistent storage of message queues, high availability, security, and other concerns. |

|

Currently, only applications registered with a However, any application registered with a Maven, HTTP, or File resource for the executable jar (by using a |

1.1. Kubernetes Compatibility

The Spring Cloud Data Flow implementation for Kubernetes uses the Spring Cloud Deployer Kubernetes library for orchestration. Before you begin setting up a Kubernetes cluster, refer to the compatibility matrix to learn more about deployer and server compatibility against Kubernetes release versions.

1.2. Create a Kubernetes Cluster

The Kubernetes Picking the Right Solution guide lets you choose among many options, so you can pick the one that you are most comfortable using.

All our testing is done with the Google Kubernetes Engine that is part of the Google Cloud Platform. That is also the target platform for this section. We have also successfully deployed with Minikube. We note where you need to adjust for deploying on Minikube.

| When starting Minikube, you should allocate some extra resources, since we deploy several services. We start with minikube start --cpus=4 --memory=4096. Note that the memory and CPU allocated to the Minikube VM are shared among the applications deployed in a stream or task. The more applications you add, the more VM resources are required. |

The rest of this getting started guide assumes that you have a working Kubernetes cluster and a kubectl command line utility. See the docs for installation instructions: Installing and Setting up kubectl.

1.3. Deploying with kubectl

To deploy with kubectl:

- Get the Kubernetes configuration files.

You can use the sample deployment and service YAML files in the https://github.com/spring-cloud/spring-cloud-dataflow-server-kubernetes repository as a starting point. They have the required metadata set for service discovery by the different applications and services deployed. To check out the code, enter the following commands:

$ git clone https://github.com/spring-cloud/spring-cloud-dataflow-server-kubernetes
$ cd spring-cloud-dataflow-server-kubernetes
$ git checkout v1.7.1.BUILD-SNAPSHOT

1.3.1. Choosing a Message Broker

For deployed applications to communicate with each other, you need to select a message broker. The sample deployment and service YAML files provide configurations for RabbitMQ and Kafka. You need to configure only one message broker.

- RabbitMQ

Run the following command to start the RabbitMQ service:

$ kubectl create -f src/kubernetes/rabbitmq/

You can use kubectl get all -l app=rabbitmq to verify that the deployment, pod, and service resources are running. You can use kubectl delete all -l app=rabbitmq to clean up afterwards.

- Kafka

Run the following command to start the Kafka service:

$ kubectl create -f src/kubernetes/kafka/

You can use kubectl get all -l app=kafka to verify that the deployment, pod, and service resources are running. You can use kubectl delete all -l app=kafka to clean up afterwards.
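Because you configure only one message broker, it can help to capture the choice in one place and reuse it. The following is a minimal sketch, assuming the repository layout described above. The broker_manifest_dir function is a hypothetical helper and is not part of the project.

```shell
#!/bin/sh
# Hypothetical helper (not part of the repository): map a broker name to the
# directory that holds its sample deployment and service YAML files.
broker_manifest_dir() {
  case "$1" in
    rabbitmq) echo "src/kubernetes/rabbitmq/" ;;
    kafka)    echo "src/kubernetes/kafka/" ;;
    *)        echo "unsupported broker: $1" >&2; return 1 ;;
  esac
}

# Example (run from the repository root, against a working cluster):
#   kubectl create -f "$(broker_manifest_dir kafka)"
```

The function only prints a path, so you can inspect the result before passing it to kubectl.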

1.3.2. Deploy Services and Data Flow

You must deploy a number of services and the Data Flow server. To do so:

- Deploy MySQL.

We use MySQL for this guide, but you could use a Postgres or H2 database instead. We include JDBC drivers for all three of these databases. To use a database other than MySQL, you must adjust the database URL and driver class name settings.

You can modify the password in the src/kubernetes/mysql/mysql-deployment.yaml file if you prefer to be more secure. If you do modify the password, you must also provide it as a base64-encoded string in the src/kubernetes/mysql/mysql-secrets.yaml file.

Run the following command to start the MySQL service:

$ kubectl create -f src/kubernetes/mysql/

You can use kubectl get all -l app=mysql to verify that the deployment, pod, and service resources are running. You can use kubectl delete all,pvc,secrets -l app=mysql to clean up afterwards.

- Deploy Redis.

The Redis service is used for the analytics functionality. Run the following command to start the Redis service:

$ kubectl create -f src/kubernetes/redis/

If you do not need the analytics functionality, you can turn this feature off by setting SPRING_CLOUD_DATAFLOW_FEATURES_ANALYTICS_ENABLED to false in the src/kubernetes/server/server-deployment.yaml file. If you do not install the Redis service, you should also remove the Redis configuration settings from one of the following files, depending on your message broker:

- RabbitMQ: src/kubernetes/server/server-config-rabbit.yaml

- Kafka: src/kubernetes/server/server-config-kafka.yaml

You can use kubectl get all -l app=redis to verify that the deployment, pod, and service resources are running. You can use kubectl delete all -l app=redis to clean up afterwards.

- Deploy the Metrics Collector.

The Metrics Collector provides message rates for all deployed stream applications. These message rates are visible in the Dashboard UI. Run one of the following commands (depending on your message broker) to start the Metrics Collector:

- RabbitMQ:

$ kubectl create -f src/kubernetes/metrics/metrics-deployment-rabbit.yaml

- Kafka:

$ kubectl create -f src/kubernetes/metrics/metrics-deployment-kafka.yaml

Create the metrics service:

$ kubectl create -f src/kubernetes/metrics/metrics-svc.yaml

You can use kubectl get all -l app=metrics to verify that the deployment, pod, and service resources are running. You can use kubectl delete all -l app=metrics to clean up afterwards.

- Deploy Skipper (optional, recommended)

Optionally, you can deploy Skipper to leverage the features of upgrading and rolling back Streams, since Data Flow delegates to Skipper for those features. For a complete overview, see the Spring Cloud Skipper Reference Guide.

Specify the version of Skipper that you want to deploy. The deployment is defined in the src/kubernetes/skipper/skipper-deployment.yaml file. To control what version of Skipper gets deployed, modify the tag used for the Docker image in the container specification, as the following example shows:

spec:
  containers:
  - name: skipper
    image: springcloud/spring-cloud-skipper-server:1.1.2.RELEASE (1)
    imagePullPolicy: Always

(1) You may change the version as you like.

Skipper includes the concept of platforms, so it is important to define the “accounts” based on the project preferences. In the preceding YAML file, the accounts map to minikube as the platform. You can modify this, and you can have any number of platform definitions. More details are in the Spring Cloud Skipper Reference Guide.

If you want to orchestrate stream processing pipelines with Apache Kafka as the messaging middleware by using Skipper, you must change the SPRING_APPLICATION_JSON environment variable value in the src/kubernetes/skipper/skipper-deployment.yaml file as follows:

"{\"spring.cloud.skipper.server.enableLocalPlatform\" : false, \"spring.cloud.skipper.server.platform.kubernetes.accounts.minikube.environmentVariables\": \"SPRING_CLOUD_STREAM_KAFKA_BINDER_BROKERS=${KAFKA_SERVICE_HOST}:${KAFKA_SERVICE_PORT}, SPRING_CLOUD_STREAM_KAFKA_BINDER_ZK_NODES=${KAFKA_ZK_SERVICE_HOST}:${KAFKA_ZK_SERVICE_PORT}\",\"spring.cloud.skipper.server.platform.kubernetes.accounts.minikube.memory\" : \"1024Mi\"}"

Additionally, if you want to use the Apache Kafka Streams Binder, configure the SPRING_APPLICATION_JSON environment variable in src/kubernetes/skipper/skipper-deployment.yaml as follows:

"{\"spring.cloud.skipper.server.enableLocalPlatform\" : false, \"spring.cloud.skipper.server.platform.kubernetes.accounts.minikube.environmentVariables\": \"SPRING_CLOUD_STREAM_KAFKA_BINDER_BROKERS=${KAFKA_SERVICE_HOST}:${KAFKA_SERVICE_PORT}, SPRING_CLOUD_STREAM_KAFKA_BINDER_ZK_NODES=${KAFKA_ZK_SERVICE_HOST}:${KAFKA_ZK_SERVICE_PORT}, SPRING_CLOUD_STREAM_KAFKA_STREAMS_BINDER_BROKERS=${KAFKA_SERVICE_HOST}:${KAFKA_SERVICE_PORT}, SPRING_CLOUD_STREAM_KAFKA_STREAMS_BINDER_ZK_NODES=${KAFKA_ZK_SERVICE_HOST}:${KAFKA_ZK_SERVICE_PORT}\",\"spring.cloud.skipper.server.platform.kubernetes.accounts.minikube.memory\" : \"1024Mi\"}"

Run the following commands to start Skipper as the companion server for Spring Cloud Data Flow:

$ kubectl create -f src/kubernetes/skipper/skipper-deployment.yaml
$ kubectl create -f src/kubernetes/skipper/skipper-svc.yaml

You can use kubectl get all -l app=skipper to verify that the deployment, pod, and service resources are running. You can use kubectl delete all -l app=skipper to clean up afterwards.

- Deploy the Data Flow Server.

Specify the version of Spring Cloud Data Flow server that you want to deploy. The deployment is defined in the src/kubernetes/server/server-deployment.yaml file. To control which version of Spring Cloud Data Flow server gets deployed, modify the tag used for the Docker image in the container specification, as follows:

spec:
  containers:
  - name: scdf-server
    image: springcloud/spring-cloud-dataflow-server-kubernetes:1.7.1.RELEASE (1)
    imagePullPolicy: Always

(1) Change the version as you like. This document is based on the 1.7.1.BUILD-SNAPSHOT release. The docker tag latest can be used for BUILD-SNAPSHOT releases.

To use Skipper, you must uncomment the following properties in src/kubernetes/server/server-deployment.yaml, under the env: section:

- name: SPRING_CLOUD_SKIPPER_CLIENT_SERVER_URI
  value: 'http://${SKIPPER_SERVICE_HOST}/api'
- name: SPRING_CLOUD_DATAFLOW_FEATURES_SKIPPER_ENABLED
  value: 'true'

The Data Flow Server uses the Fabric8 Java client library to connect to the Kubernetes cluster. We use environment variables to set the values needed when deploying the Data Flow server to Kubernetes. We also use the Fabric8 Spring Cloud integration with Kubernetes library to access the Kubernetes ConfigMap and Secrets settings. The ConfigMap settings for RabbitMQ are specified in the src/kubernetes/server/server-config-rabbit.yaml file and for Kafka in the src/kubernetes/server/server-config-kafka.yaml file. MySQL secrets are located in the src/kubernetes/mysql/mysql-secrets.yaml file. If you modified the password for MySQL, you should change it in the src/kubernetes/mysql/mysql-secrets.yaml file. Any secrets have to be provided in base64 encoding.

We now configure the Data Flow server with file-based security, and the default user is 'user' with a password of 'password'. You should change these values in src/kubernetes/server/server-config-rabbit.yaml for RabbitMQ or src/kubernetes/server/server-config-kafka.yaml for Kafka.

The default memory for the pods is 1024Mi. If you expect most of your applications to require more memory, update the value in the src/kubernetes/server/server-deployment.yaml file.

Since version 1.9, Kubernetes releases have enabled RBAC on the api-server. If your target platform has RBAC enabled, you must ask a cluster-admin to create the roles and role-bindings for you before deploying the Data Flow server. They associate the Data Flow service account with the roles it needs to run.

To create the roles, role bindings, and service account, run the following commands:

$ kubectl create -f src/kubernetes/server/server-roles.yaml
$ kubectl create -f src/kubernetes/server/server-rolebinding.yaml
$ kubectl create -f src/kubernetes/server/service-account.yaml

Create the ConfigMap for your message broker by running one of the following commands:

- RabbitMQ:

$ kubectl create -f src/kubernetes/server/server-config-rabbit.yaml

- Kafka:

$ kubectl create -f src/kubernetes/server/server-config-kafka.yaml

To create a server deployment:

$ kubectl create -f src/kubernetes/server/server-svc.yaml
$ kubectl create -f src/kubernetes/server/server-deployment.yaml

You can use kubectl get all -l app=scdf-server to verify that the deployment, pod, and service resources are running. You can use kubectl delete all,cm -l app=scdf-server to clean up afterwards. To clean up roles, bindings, and the service account, use the following commands:

$ kubectl delete role scdf-role
$ kubectl delete rolebinding scdf-rb
$ kubectl delete serviceaccount scdf-sa

You can use the kubectl get svc scdf-server command to locate the EXTERNAL-IP address assigned to scdf-server. We use that later to connect from the shell. The following example (with output) shows how to do so:

$ kubectl get svc scdf-server
NAME          CLUSTER-IP      EXTERNAL-IP       PORT(S)   AGE
scdf-server   10.103.246.82   130.211.203.246   80/TCP    4m

The URL you need to use in this case is http://130.211.203.246/.

If you use Minikube, you do not have an external load balancer and the EXTERNAL-IP shows as <pending>. You need to use the NodePort assigned for the scdf-server service. You can use the following command to look up the URL to use:

$ minikube service --url scdf-server
http://192.168.99.100:31991
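Several of the preceding steps require secret values in base64 encoding. The following is a minimal sketch of how such a value could be produced and checked, assuming a base64 utility is available on your machine. The encode_secret and decode_secret function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: base64-encode a value for use in a Kubernetes Secret file.
# printf avoids encoding a trailing newline, and tr removes any line wrapping
# that base64 may add to long values.
encode_secret() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Hypothetical helper: decode a value to double-check what a Secret file contains.
decode_secret() {
  printf '%s' "$1" | base64 --decode
}

# Example:
#   encode_secret 'password'       # cGFzc3dvcmQ=
#   decode_secret 'cGFzc3dvcmQ='   # password
```

Using echo instead of printf is a common mistake here, because echo appends a newline that then becomes part of the encoded password.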

2. Helm Installation

Spring Cloud Data Flow offers a Helm Chart for deploying the Spring Cloud Data Flow server and its required services to a Kubernetes Cluster.

| The Helm chart is available since the 1.2 GA release of Spring Cloud Data Flow for Kubernetes. |

The following instructions cover how to initialize Helm and install Spring Cloud Data Flow on a Kubernetes cluster.

- Installing Helm

Helm comprises two components: the client (Helm) and the server (Tiller). The Helm client runs on your local machine and can be installed by following the Helm installation instructions. If Tiller has not been installed on your cluster, run the following Helm client command:

$ helm init

To verify that the Tiller pod is running, use the following command:

$ kubectl get pod --namespace kube-system

You should see the Tiller pod running.

- Installing the Spring Cloud Data Flow Server and required services

Update the Helm repository and install the chart:

$ helm repo update
$ helm install --name my-release stable/spring-cloud-data-flow

|

As of Spring Cloud Data Flow 1.7.0, the |

|

If you run on a Kubernetes cluster without a load balancer, such as in Minikube, you should override the service type to use |

|

If you run on a Kubernetes cluster without RBAC, such as in Minikube, you should override |

If you wish to specify a version of Spring Cloud Data Flow other than the current GA release, you can set the server.version, as follows (replacing stable with incubator if needed):

$ helm install --name my-release stable/spring-cloud-data-flow --set server.version=<version-you-want>

| To see all of the settings that can be configured on the Spring Cloud Data Flow chart, view the README. |

|

The following listing shows Spring Cloud Data Flow’s Kubernetes version compatibility with the respective Helm Chart releases: |

You should see the following output:

NAME: my-release

LAST DEPLOYED: Sat Mar 10 11:33:29 2018

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Secret

NAME TYPE DATA AGE

my-release-mysql Opaque 2 1s

my-release-data-flow Opaque 2 1s

my-release-redis Opaque 1 1s

my-release-rabbitmq Opaque 2 1s

==> v1/ConfigMap

NAME DATA AGE

my-release-data-flow-server 1 1s

my-release-data-flow-skipper 1 1s

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESSMODES STORAGECLASS AGE

my-release-rabbitmq Bound pvc-e9ed7f55-2499-11e8-886f-08002799df04 8Gi RWO standard 1s

my-release-mysql Pending standard 1s

my-release-redis Pending standard 1s

==> v1/ServiceAccount

NAME SECRETS AGE

my-release-data-flow 1 1s

==> v1/Service

NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE

my-release-mysql 10.110.98.253 <none> 3306/TCP 1s

my-release-data-flow-server 10.105.216.155 <pending> 80:32626/TCP 1s

my-release-redis 10.111.63.33 <none> 6379/TCP 1s

my-release-data-flow-metrics 10.107.157.1 <none> 80/TCP 1s

my-release-rabbitmq 10.106.76.215 <none> 4369/TCP,5672/TCP,25672/TCP,15672/TCP 1s

my-release-data-flow-skipper 10.100.28.64 <none> 80/TCP 1s

==> v1beta1/Deployment

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

my-release-mysql 1 1 1 0 1s

my-release-rabbitmq 1 1 1 0 1s

my-release-data-flow-metrics 1 1 1 0 1s

my-release-data-flow-skipper 1 1 1 0 1s

my-release-redis 1 1 1 0 1s

my-release-data-flow-server 1 1 1 0 1s

NOTES:

1. Get the application URL by running these commands:

NOTE: It may take a few minutes for the LoadBalancer IP to be available.

You can watch the status of the server by running 'kubectl get svc -w my-release-data-flow-server'

export SERVICE_IP=$(kubectl get svc --namespace default my-release-data-flow-server -o jsonpath='{.status.loadBalancer.ingress[0].ip}')

echo http://$SERVICE_IP:80

You have just created a new release in the default namespace of your Kubernetes cluster. The NOTES section gives instructions for connecting to the newly installed server. It takes a couple of minutes for the application and its required services to start up. You can check on the status by issuing a kubectl get pod -w command. Wait for the READY column to show 1/1 for all pods. Once that is done, you can connect to the Data Flow server with the external IP listed by the kubectl get svc my-release-data-flow-server command. The default username is user, and its password is password.
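Instead of watching the READY column by hand, you can script the check. The following is a minimal sketch, assuming the default kubectl get pods column layout (NAME, READY, STATUS, RESTARTS, AGE). The all_pods_ready function is a hypothetical helper, not something the chart provides.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get pods --no-headers` output on stdin and
# succeed only if at least one pod is listed and every pod reports all of its
# containers ready (for example, 1/1) in the second column.
all_pods_ready() {
  awk '
    { split($2, ready, "/"); seen = 1 }
    ready[1] != ready[2] { not_ready = 1 }
    END { exit (seen && !not_ready) ? 0 : 1 }
  '
}

# Example (against a working cluster):
#   until kubectl get pods --no-headers | all_pods_ready; do sleep 5; done
```

The helper treats empty input as not ready, so the loop keeps waiting while the pods have not been created yet.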

|

If you run on Minikube, you can use the following command to get the URL for the server: |

To see what Helm releases you have running, you can use the helm list command. When it is time to delete the release, run helm delete my-release. This removes any resources created for the release but keeps release information so that you can roll back any changes by using a helm rollback my-release 1 command. To completely delete the release and purge any release metadata, use helm delete my-release --purge.

|

There is an issue with generated secrets used for the required services getting rotated on chart upgrades. To avoid this issue, set the password for these services when installing the chart. You can use the following command: |

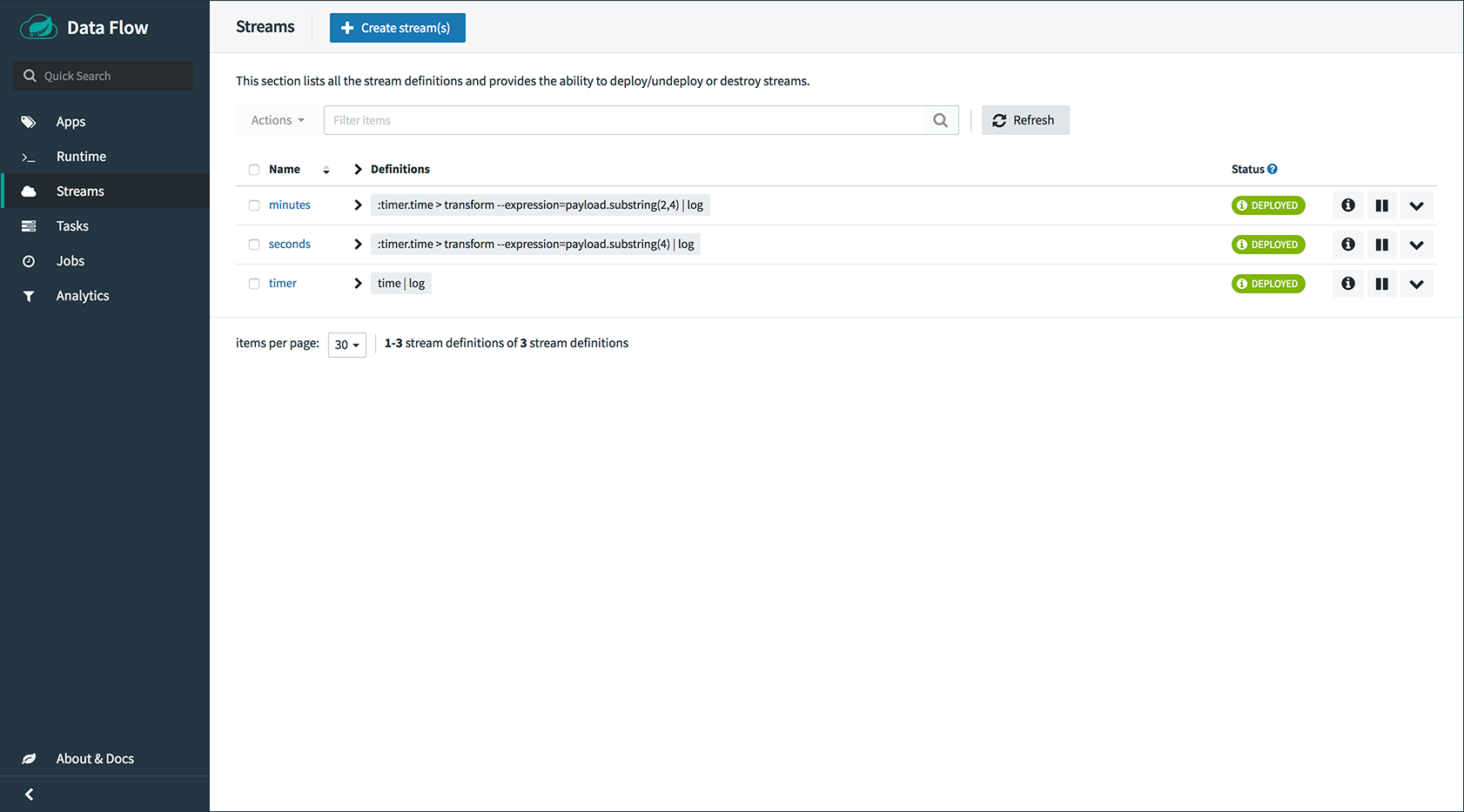

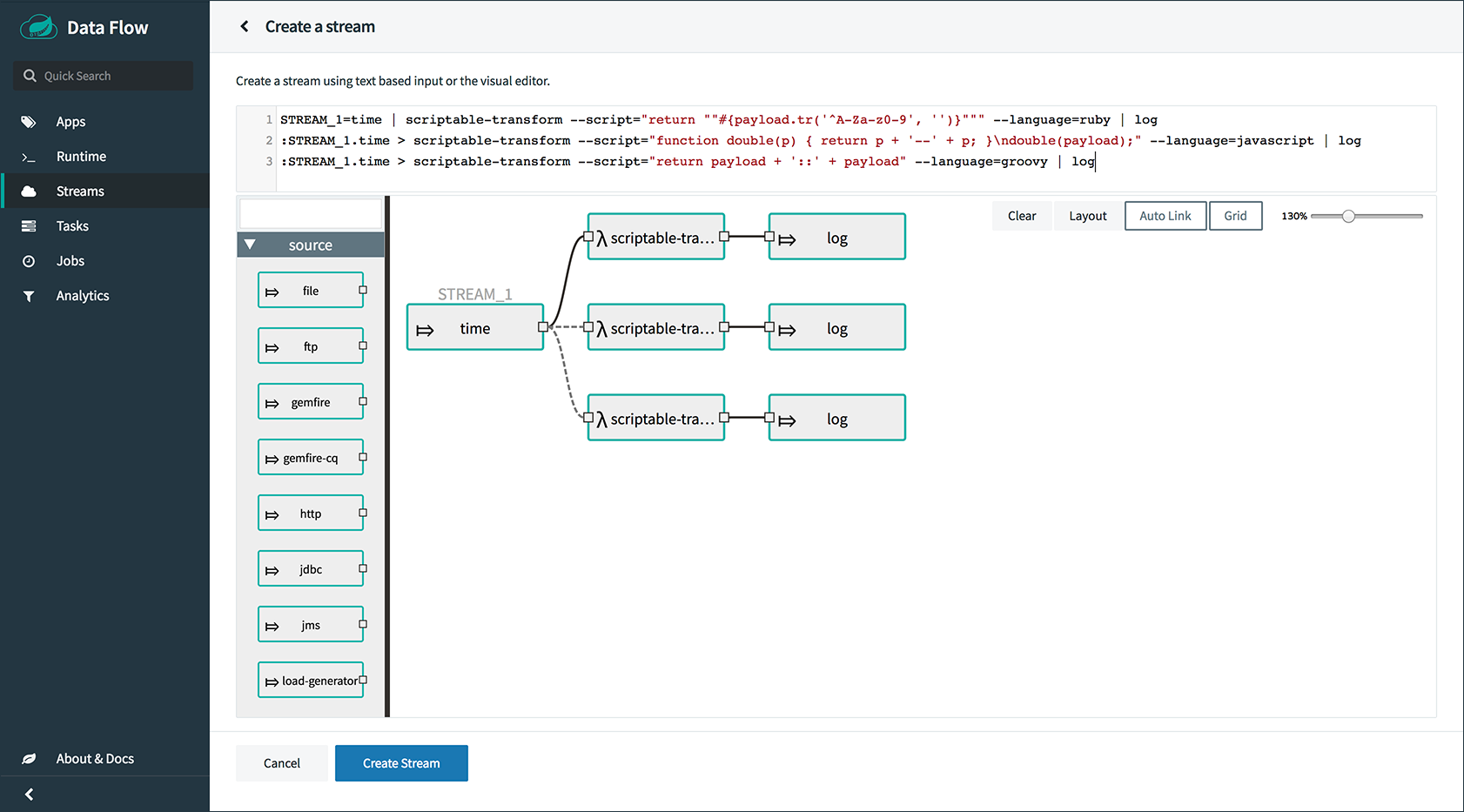

3. Deploying Streams

This section covers how to deploy streams with Spring Cloud Data Flow. Your choice at this point is whether to use Skipper, which lets you discover applications and manage their lifecycles. For more about Skipper, see cloud.spring.io/spring-cloud-skipper/.

If you want to use Skipper, see Create Streams with Skipper. If not, continue reading.

3.1. Create Streams without Skipper

This section describes how to create streams without using Skipper. To do so:

- Download and run the Spring Cloud Data Flow shell.

$ wget http://repo.spring.io/release/org/springframework/cloud/spring-cloud-dataflow-shell/1.7.2.RELEASE/spring-cloud-dataflow-shell-1.7.2.RELEASE.jar
$ java -jar spring-cloud-dataflow-shell-1.7.2.RELEASE.jar

You should see the following startup message from the shell:

  ____                              ____ _                __
 / ___| _ __  _ __(_)_ __   __ _   / ___| | ___  _   _  __| |
 \___ \| '_ \| '__| | '_ \ / _` | | |   | |/ _ \| | | |/ _` |
  ___) | |_) | |  | | | | | (_| | | |___| | (_) | |_| | (_| |
 |____/| .__/|_|  |_|_| |_|\__, |  \____|_|\___/ \__,_|\__,_|
  ____ |_|    _          __|___/                 __________
 |  _ \  __ _| |_ __ _  |  ___| | _____      __  \ \ \ \ \ \
 | | | |/ _` | __/ _` | | |_  | |/ _ \ \ /\ / /   \ \ \ \ \ \
 | |_| | (_| | || (_| | |  _| | | (_) \ V  V /    / / / / / /
 |____/ \__,_|\__\__,_| |_|   |_|\___/ \_/\_/    /_/_/_/_/_/

1.7.2.RELEASE

Welcome to the Spring Cloud Data Flow shell. For assistance hit TAB or type "help".
server-unknown:>

- Configure the Data Flow server URI with the following command (use the URL determined in the previous step and the default user name and password):

server-unknown:>dataflow config server --username user --password password --uri http://130.211.203.246/
Successfully targeted http://130.211.203.246/
dataflow:>

- Register the Docker images of the Rabbit or Kafka binder-based time and log apps by using the shell.

RabbitMQ:

dataflow:>app register --type source --name time --uri docker://springcloudstream/time-source-rabbit:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.stream.app:time-source-rabbit:jar:metadata:1.3.1.RELEASE
dataflow:>app register --type sink --name log --uri docker://springcloudstream/log-sink-rabbit:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.stream.app:log-sink-rabbit:jar:metadata:1.3.1.RELEASE

Kafka:

dataflow:>app register --type source --name time --uri docker://springcloudstream/time-source-kafka-10:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.stream.app:time-source-kafka-10:jar:metadata:1.3.1.RELEASE
dataflow:>app register --type sink --name log --uri docker://springcloudstream/log-sink-kafka-10:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.stream.app:log-sink-kafka-10:jar:metadata:1.3.1.RELEASE

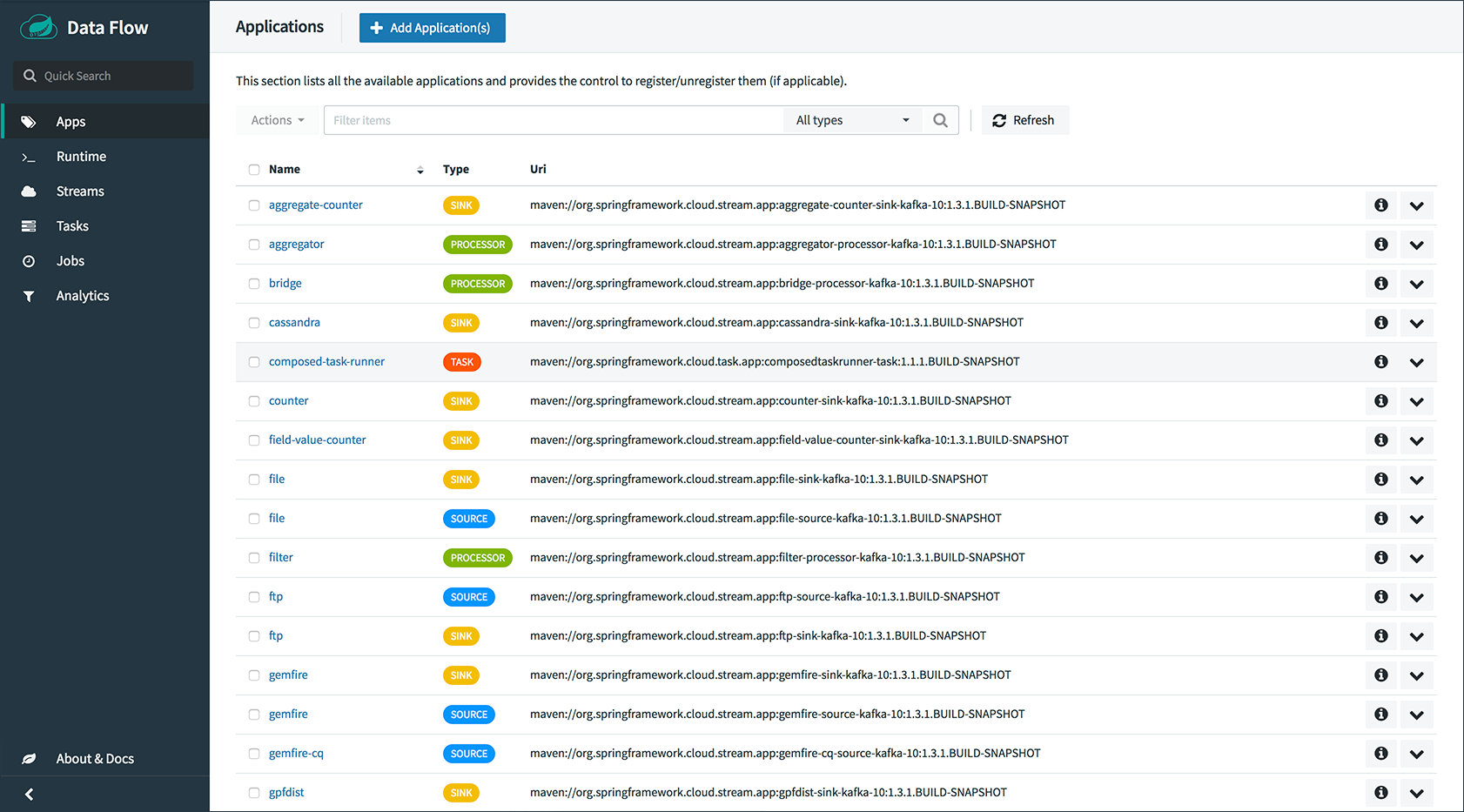

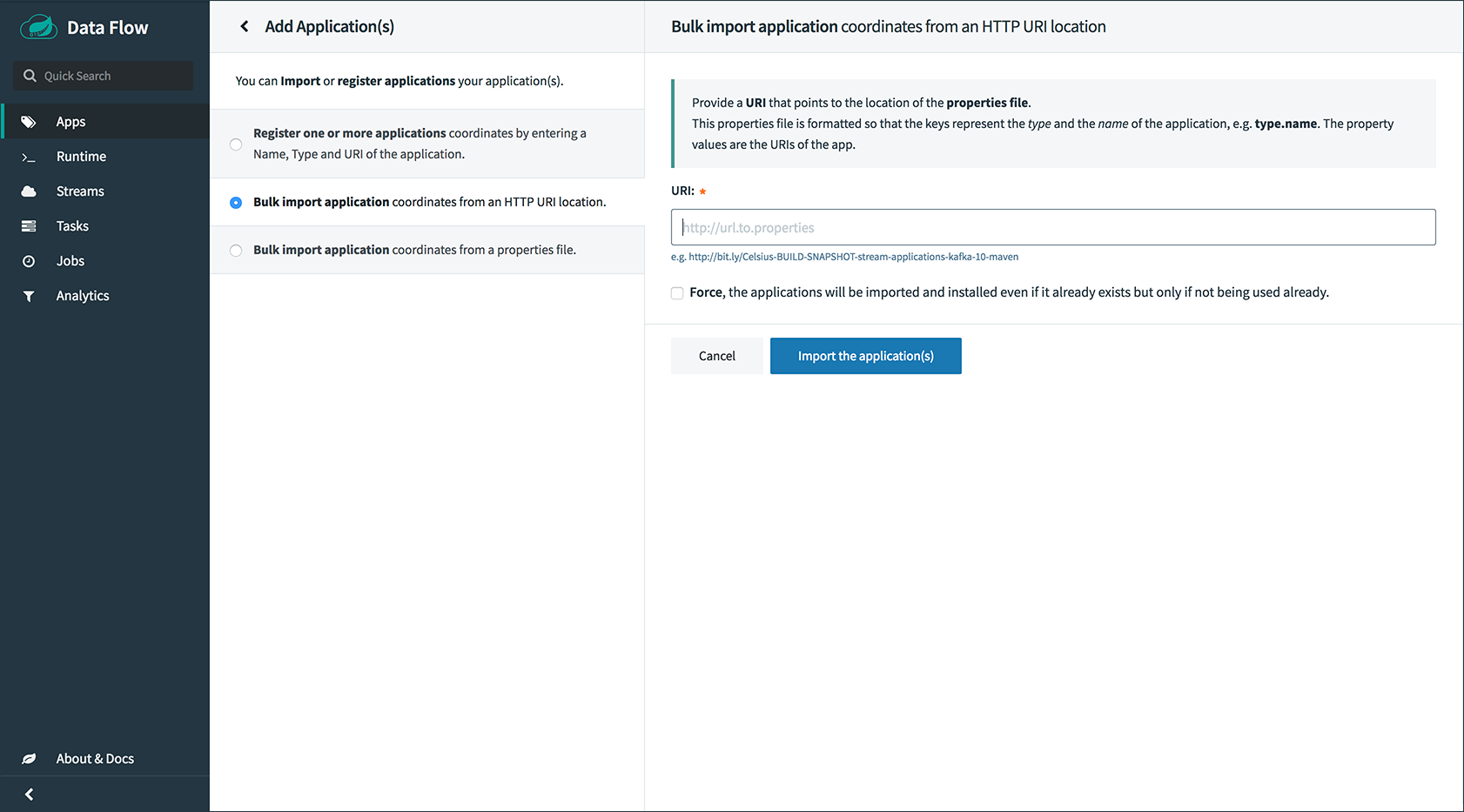

Alternatively, if you want to register all out-of-the-box stream applications for a particular binder in bulk, you can use one of the following commands:

- RabbitMQ:

dataflow:>app import --uri bit.ly/Celsius-SR3-stream-applications-rabbit-docker

- Kafka:

dataflow:>app import --uri bit.ly/Celsius-SR3-stream-applications-kafka-10-docker

For more details, review how to register applications.

- Deploy a simple stream in the shell, by running the following command:

dataflow:>stream create --name ticktock --definition "time | log" --deploy

You can use kubectl get pods to check on the state of the pods that correspond to this stream. You can run this from the shell by adding a "!" before the command (which makes a command run as an OS command).

dataflow:>! kubectl get pods -l role=spring-app
command is:kubectl get pods -l role=spring-app
NAME                   READY   STATUS    RESTARTS   AGE
ticktock-log-0-qnk72   1/1     Running   0          2m
ticktock-time-r65cn    1/1     Running   0          2m

Now you can view the logs for the pod deployed for the log sink by using the following command:

dataflow:>! kubectl logs ticktock-log-0-qnk72
command is:kubectl logs ticktock-log-0-qnk72
...
2017-07-20 04:34:37.369 INFO 1 --- [time.ticktock-1] log-sink : 07/20/17 04:34:37
2017-07-20 04:34:38.371 INFO 1 --- [time.ticktock-1] log-sink : 07/20/17 04:34:38
2017-07-20 04:34:39.373 INFO 1 --- [time.ticktock-1] log-sink : 07/20/17 04:34:39
2017-07-20 04:34:40.380 INFO 1 --- [time.ticktock-1] log-sink : 07/20/17 04:34:40
2017-07-20 04:34:41.381 INFO 1 --- [time.ticktock-1] log-sink : 07/20/17 04:34:41

- Destroy the stream, by using the following command:

dataflow:>stream destroy --name ticktock

To troubleshoot issues such as a container that has a fatal error starting up, add the --previous option to the kubectl logs command to view the last terminated container log. You can also get more detailed information about the pods by using kubectl describe, as the following example shows:

$ kubectl describe pods/ticktock-log-0-qnk72

If you need to specify any of the application-specific configuration properties, you might use the “long form” of them by including the application-specific prefix (for example, --jdbc.tableName=TEST_DATA). If you did not register the --metadata-uri for the Docker based starter applications, this form is required. In this case, you also do not see the configuration properties listed when using the app info command or in the Dashboard GUI.
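The two troubleshooting commands mentioned above are often run together. The following is a minimal sketch that wraps them in one function, assuming kubectl is configured for your cluster. The debug_pod name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: collect troubleshooting output for one pod.
debug_pod() {
  pod="$1"
  # Log of the last terminated container, useful after a crash at startup.
  kubectl logs --previous "$pod"
  # Events, restart counts, and container state for the pod.
  kubectl describe "pods/$pod"
}

# Example (against a working cluster):
#   debug_pod ticktock-log-0-qnk72
```

Note that kubectl logs --previous reports an error when the container has never been restarted, so the describe output may be the only useful part for a pod that is still on its first start.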

3.2. Create Streams with Skipper

You can create a stream with Spring Cloud Skipper. Skipper lets you discover applications and manage their lifecycles. For more about Skipper, see cloud.spring.io/spring-cloud-skipper/. For more about using Skipper with streams, see Deploying Streams by Using Skipper.

3.3. Accessing an Application from outside the Cluster

If you need to connect from outside of the Kubernetes cluster to an application that you deploy (such as the http-source), you need either an external load balancer for the incoming connections or a NodePort configuration that exposes a proxy port on each Kubernetes node. If your cluster does not support external load balancers (as is the case with Minikube), you must use the NodePort approach. You can use deployment properties to configure the access. To have a load balancer with an external IP address created for your application’s service, use deployer.http.kubernetes.createLoadBalancer=true for the application. For the NodePort configuration, use deployer.http.kubernetes.createNodePort=<port>, where <port> is a number between 30000 and 32767.
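A port outside the allowed range is an easy mistake to make, so it can be worth checking the value before you deploy. The following is a minimal sketch of such a check. The valid_node_port and node_port_property functions are hypothetical helpers written for this example.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the argument is an integer in the NodePort
# range given above (30000-32767).
valid_node_port() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
  esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Hypothetical helper: print the deployment property for an app name and port.
node_port_property() {
  valid_node_port "$2" || { echo "port $2 is outside 30000-32767" >&2; return 1; }
  echo "deployer.$1.kubernetes.createNodePort=$2"
}

# Example:
#   node_port_property http 32123
```

The printed property can then be passed to the stream deploy command through its --properties option.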

- Register the http-source by using one of the following commands:

RabbitMQ:

dataflow:>app register --type source --name http --uri docker://springcloudstream/http-source-rabbit:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.stream.app:http-source-rabbit:jar:metadata:1.3.1.RELEASE

Kafka:

dataflow:>app register --type source --name http --uri docker://springcloudstream/http-source-kafka:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.stream.app:http-source-kafka:jar:metadata:1.3.1.RELEASE

- Create the http | log stream without deploying it by using the following command:

dataflow:>stream create --name test --definition "http | log"

If your cluster supports an External LoadBalancer for the http-source, you can use the following command to deploy the stream:

dataflow:>stream deploy test --properties "deployer.http.kubernetes.createLoadBalancer=true"

- Check whether the pods have started by using the following command:

dataflow:>! kubectl get pods -l role=spring-app
command is:kubectl get pods -l role=spring-app
NAME               READY   STATUS    RESTARTS   AGE
test-http-2bqx7    1/1     Running   0          3m
test-log-0-tg1m4   1/1     Running   0          3m

Pods that are ready show 1/1 in the READY column. Now you can look up the external IP address for the http application (it can sometimes take a minute or two for the external IP to get assigned) by using the following command:

dataflow:>! kubectl get service test-http
command is:kubectl get service test-http
NAME        CLUSTER-IP       EXTERNAL-IP      PORT(S)    AGE
test-http   10.103.251.157   130.211.200.96   8080/TCP   58s

If you use Minikube or any cluster that does not support an external load balancer, you should deploy the stream with a NodePort in the range of 30000-32767. You can use the following command to deploy it:

dataflow:>stream deploy test --properties "deployer.http.kubernetes.createNodePort=32123"

- Check whether the pods have started by using the following command:

dataflow:>! kubectl get pods -l role=spring-app
command is:kubectl get pods -l role=spring-app
NAME               READY   STATUS    RESTARTS   AGE
test-http-9obkq    1/1     Running   0          3m
test-log-0-ysiz3   1/1     Running   0          3m

Pods that are ready show 1/1 in the READY column. Now you can look up the URL to use with the following command:

dataflow:>! minikube service --url test-http
command is:minikube service --url test-http
http://192.168.99.100:32123

- Post some data to the test-http application, either by using the EXTERNAL-IP address (mentioned earlier) with port 8080 or by using the URL provided by the preceding Minikube command. The following example uses the external IP address:

dataflow:>http post --target http://130.211.200.96:8080 --data "Hello"

- View the logs for the test-log pod, by using the following commands:

dataflow:>! kubectl get pods -l role=spring-app
command is:kubectl get pods -l role=spring-app
NAME               READY   STATUS    RESTARTS   AGE
test-http-9obkq    1/1     Running   0          2m
test-log-0-ysiz3   1/1     Running   0          2m
dataflow:>! kubectl logs test-log-0-ysiz3
command is:kubectl logs test-log-0-ysiz3
...
2016-04-27 16:54:29.789 INFO 1 --- [ main] o.s.c.s.b.k.KafkaMessageChannelBinder$3 : started inbound.test.http.test
2016-04-27 16:54:29.799 INFO 1 --- [ main] o.s.c.support.DefaultLifecycleProcessor : Starting beans in phase 0
2016-04-27 16:54:29.799 INFO 1 --- [ main] o.s.c.support.DefaultLifecycleProcessor : Starting beans in phase 2147482647
2016-04-27 16:54:29.895 INFO 1 --- [ main] s.b.c.e.t.TomcatEmbeddedServletContainer : Tomcat started on port(s): 8080 (http)
2016-04-27 16:54:29.896 INFO 1 --- [ kafka-binder-] log.sink : Hello

- Destroy the stream, by using the following command:

dataflow:>stream destroy --name test

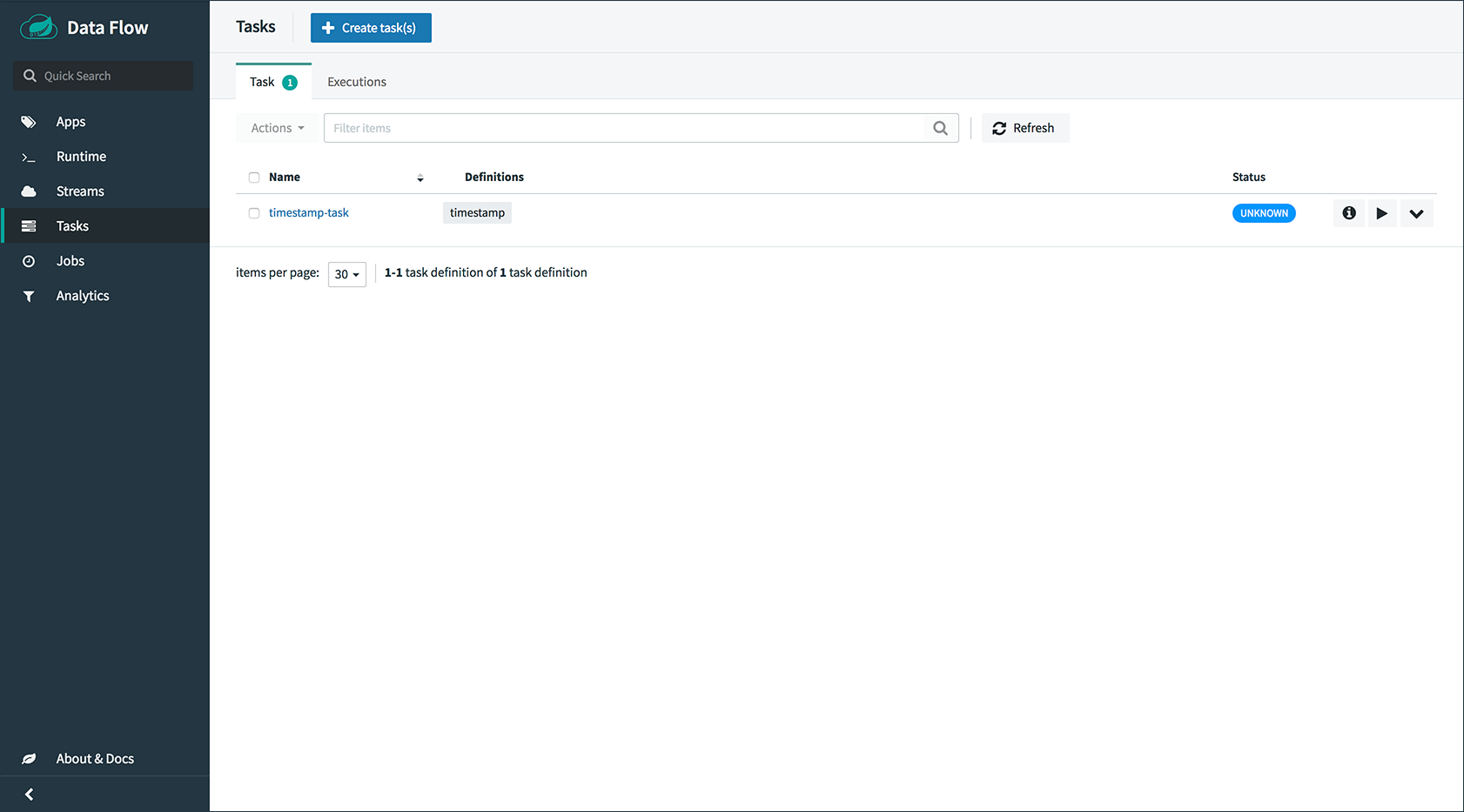

4. Deploying Tasks

This section covers how to deploy tasks. To do so:

-

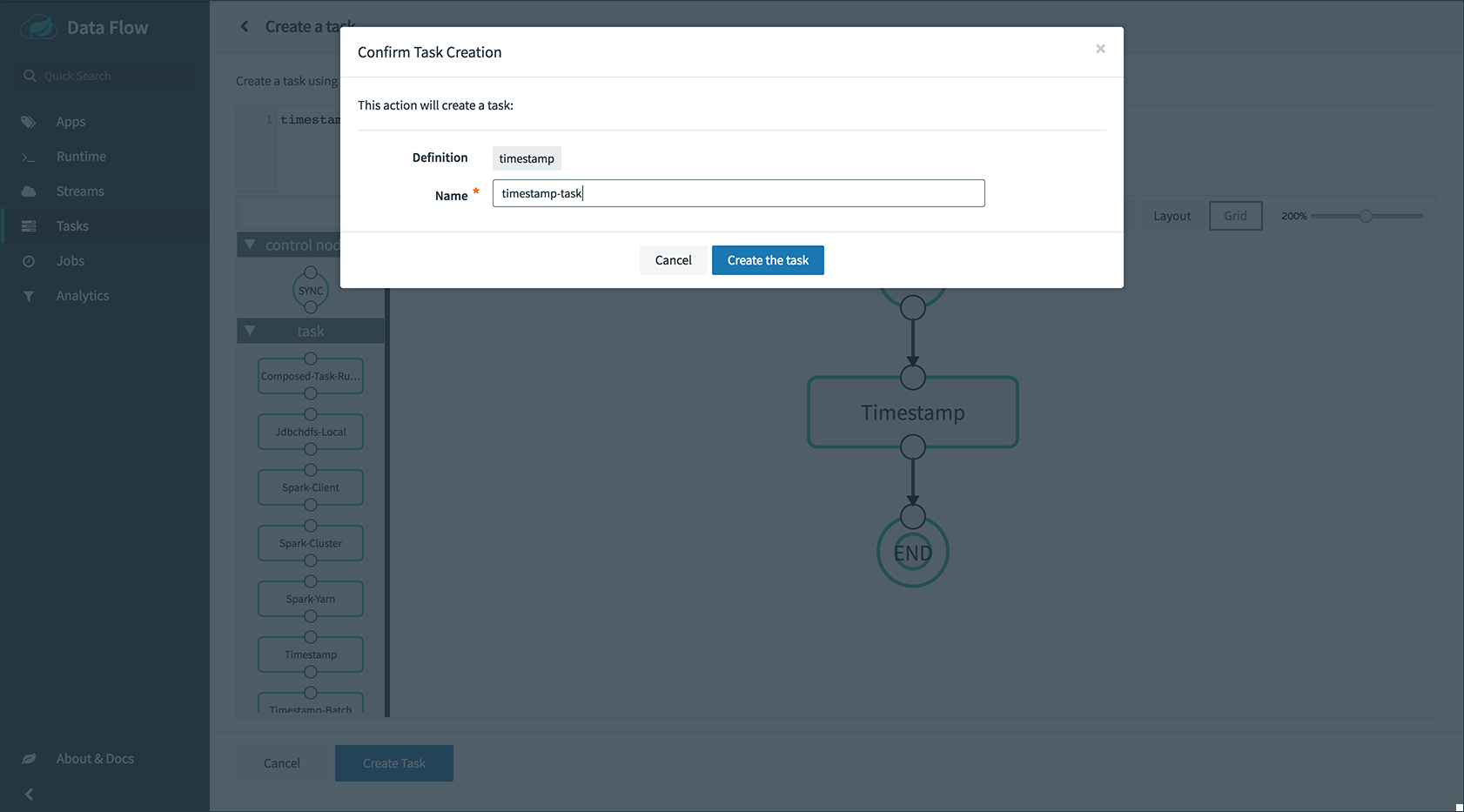

Create a task and launch it. To do so, register the

timestamptask app and create a simple task definition and launch it, as follows:dataflow:>app register --type task --name timestamp --uri docker:springcloudtask/timestamp-task:1.3.1.RELEASE --metadata-uri maven://org.springframework.cloud.task.app:timestamp-task:jar:metadata:1.3.1.RELEASE dataflow:>task create task1 --definition "timestamp" dataflow:>task launch task1You can now list the tasks and executions byusing the following commands:

dataflow:>task list ╔═════════╤═══════════════╤═══════════╗ ║Task Name│Task Definition│Task Status║ ╠═════════╪═══════════════╪═══════════╣ ║task1 │timestamp │running ║ ╚═════════╧═══════════════╧═══════════╝ dataflow:>task execution list ╔═════════╤══╤════════════════════════════╤════════════════════════════╤═════════╗ ║Task Name│ID│ Start Time │ End Time │Exit Code║ ╠═════════╪══╪════════════════════════════╪════════════════════════════╪═════════╣ ║task1 │1 │Fri May 05 18:12:05 EDT 2017│Fri May 05 18:12:05 EDT 2017│0 ║ ╚═════════╧══╧════════════════════════════╧════════════════════════════╧═════════╝ -

Destroy the task, by using the following command:

dataflow:>task destroy --name task1

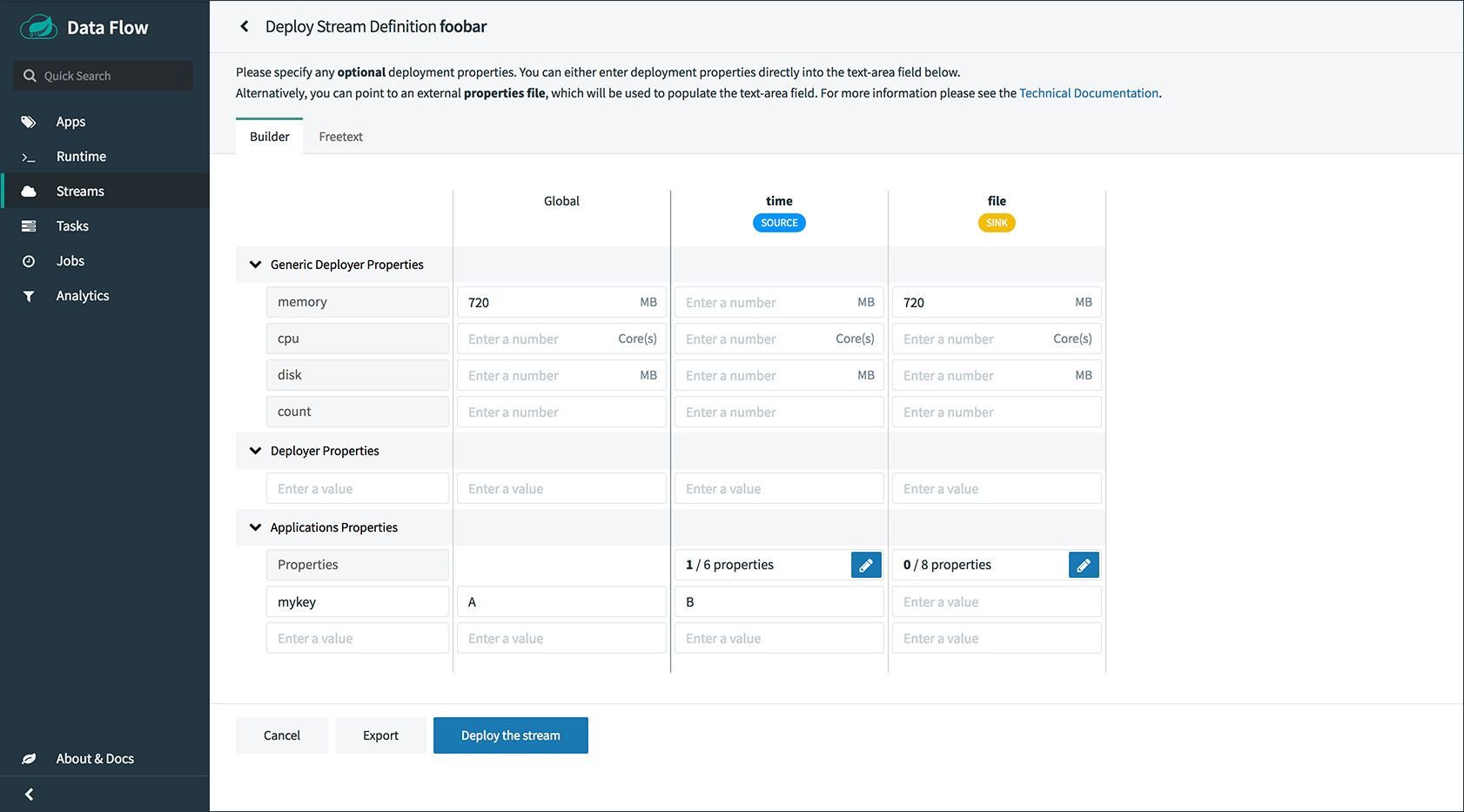

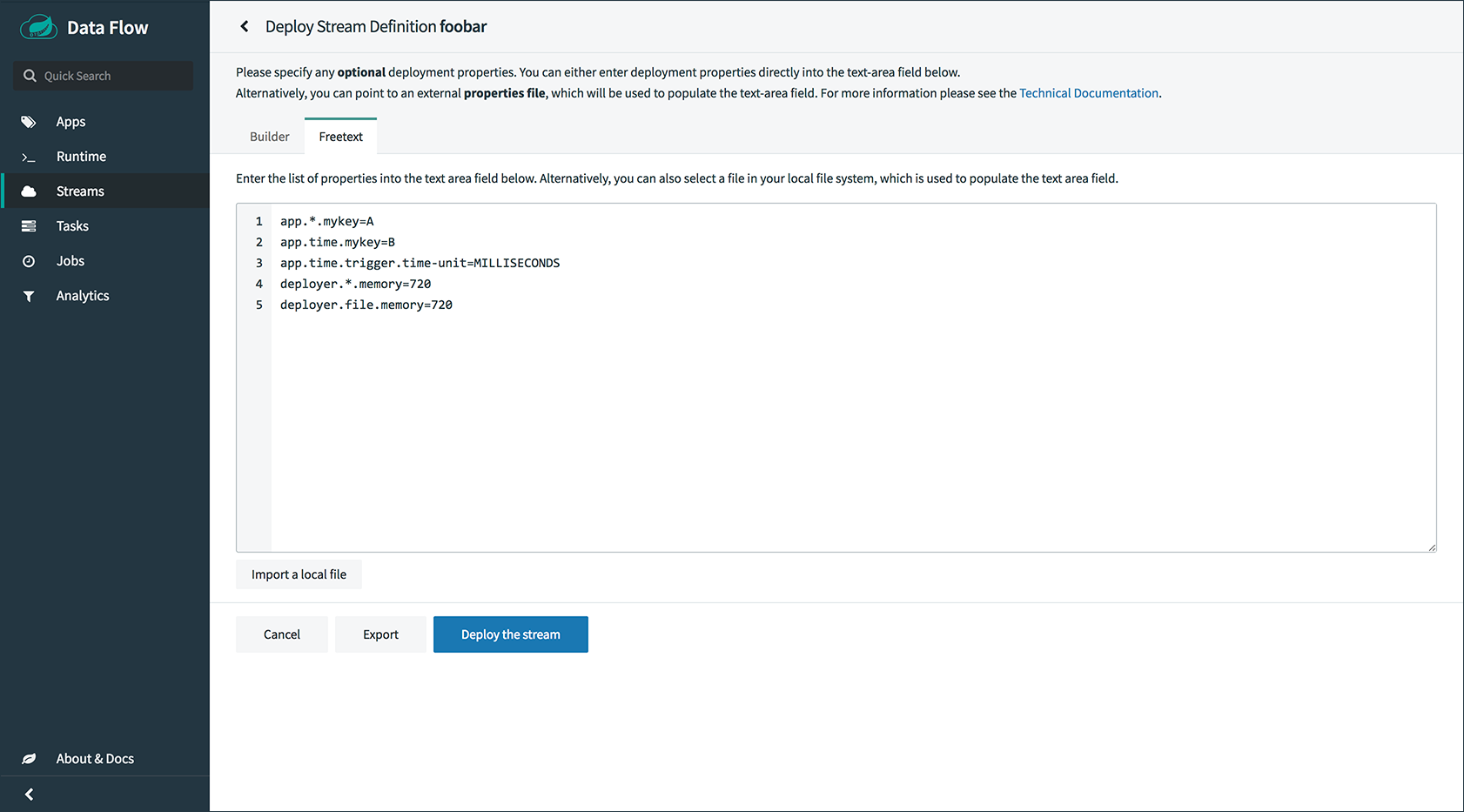

5. Application and Server Properties

This section covers how you can customize the deployment of your applications. You can use a number of properties to influence settings for the applications that are deployed. Properties can be applied on a per-application basis or in the server configuration for all deployed applications.

| Properties set on a per-application basis always take precedence over properties set as the server configuration. This arrangement allows for the ability to override global server level properties on a per-application basis. |

See KubernetesDeployerProperties for more on the supported options.

5.1. Using Deployments

The deployer uses ReplicationController by default. To use deployments instead, you can set the following option as part of the container env section in a deployment YAML file:

env:
- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_CREATE_DEPLOYMENT
  value: 'true'

This is now the preferred setting and will be the default in future releases of the deployer.

5.2. Memory and CPU Settings

The apps are deployed by default with the following Limits and Requests settings:

Limits:

cpu: 500m

memory: 512Mi

Requests:

cpu: 500m

memory: 512MiYou might find that the 512Mi memory limit is too low. To increase it, you can provide a common spring.cloud.deployer.memory deployer property, as the following example shows (replace <app> with the name of the app for which you want to set the memory):

deployer.<app>.memory=640m

This property affects both the Requests and Limits memory values set for the container.

If you want to set the Requests and Limits values separately, you can use the deployer properties that are specific to the Kubernetes deployer. The following example shows how to set Limits to 1000m for CPU and 1024Mi for memory and Requests to 800m for CPU and 640Mi for memory:

deployer.<app>.kubernetes.limits.cpu=1000m

deployer.<app>.kubernetes.limits.memory=1024Mi

deployer.<app>.kubernetes.requests.cpu=800m

deployer.<app>.kubernetes.requests.memory=640Mi

Those values result in the following container settings being used:

Limits:

  cpu: 1

  memory: 1Gi

Requests:

  cpu: 800m

  memory: 640Mi

| When using the common memory property, you should use an m suffix for the value. When using the Kubernetes-specific properties, you should use the Kubernetes Mi style suffix. |

You can also control the default values to which to set the cpu and memory requirements for the pods that are created as part of application deployments. You can declare the following as part of the container env section in a deployment YAML file:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_CPU

  value: 500m

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_MEMORY

  value: 640Mi

The settings we have used so far only affect the settings for the container. They do not affect the memory setting for the JVM process in the container. If you would like to set JVM memory settings, you can provide an environment variable to do so. See the next section for details.

5.3. Environment Variables

To influence the environment settings for a given application, you can take advantage of the spring.cloud.deployer.kubernetes.environmentVariables deployer property.

For example, a common requirement in production settings is to influence the JVM memory arguments.

You can achieve this by using the JAVA_TOOL_OPTIONS environment variable, as the following example shows:

deployer.<app>.kubernetes.environmentVariables=JAVA_TOOL_OPTIONS=-Xmx1024m

| The environmentVariables property accepts a comma-delimited string. If an environment variable contains a value that is also a comma-delimited string, it must be enclosed in single quotation marks, for example: spring.cloud.deployer.kubernetes.environmentVariables=spring.cloud.stream.kafka.binder.brokers='somehost:9092,anotherhost:9093' |

This overrides the JVM memory setting for the desired <app> (replace <app> with the name of your application).
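The quoting rule for comma-delimited values can be sketched in a few lines of Java. This is only an illustration of how such a property value might be assembled before it is passed to the shell; the EnvironmentVariablesBuilder class and its build method are hypothetical helpers and are not part of the deployer.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

public class EnvironmentVariablesBuilder {

    // Joins entries as NAME=value pairs separated by commas.
    // A value that itself contains a comma is wrapped in single quotation marks.
    public static String build(Map<String, String> variables) {
        StringJoiner joiner = new StringJoiner(",");
        for (Map.Entry<String, String> entry : variables.entrySet()) {
            String value = entry.getValue();
            if (value.contains(",")) {
                value = "'" + value + "'";
            }
            joiner.add(entry.getKey() + "=" + value);
        }
        return joiner.toString();
    }

    public static void main(String[] args) {
        Map<String, String> variables = new LinkedHashMap<>();
        variables.put("JAVA_TOOL_OPTIONS", "-Xmx1024m");
        variables.put("spring.cloud.stream.kafka.binder.brokers", "somehost:9092,anotherhost:9093");
        // Prints the complete deployer property line for the hypothetical <app>.
        System.out.println("deployer.<app>.kubernetes.environmentVariables=" + build(variables));
    }
}
```

The sketch does not handle values that already contain single quotation marks.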

5.4. Liveness and Readiness Probes

By default, the liveness probe uses the /health path and the readiness probe uses the /info path. Both probes use a delay of 10 seconds, with a period of 60 seconds for liveness and 10 seconds for readiness. You can change these defaults when you deploy the stream by using deployer properties.

The following example changes the liveness probe (replace <app> with the name of your application) by setting deployer properties:

deployer.<app>.kubernetes.livenessProbePath=/health

deployer.<app>.kubernetes.livenessProbeDelay=120

deployer.<app>.kubernetes.livenessProbePeriod=20

The same can be declared as part of the container env section in a deployment YAML file:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_LIVENESS_PROBE_PATH

  value: '/health'

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_LIVENESS_PROBE_DELAY

  value: '120'

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_LIVENESS_PROBE_PERIOD

  value: '20'

Similarly, you can swap liveness for readiness to override the default readiness settings.

By default, port 8080 is used as the probe port. You can change the defaults for both liveness and readiness probe ports by using deployer properties, as the following example shows:

deployer.<app>.kubernetes.readinessProbePort=7000

deployer.<app>.kubernetes.livenessProbePort=7000

You can also set the port values in the container env section of a deployment YAML file:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_READINESS_PROBE_PORT

  value: '7000'

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_LIVENESS_PROBE_PORT

  value: '7000'

| If you intend to use Spring Boot 2.x+, note that all Actuator endpoints in Spring Boot 2.x have been moved under /actuator by default, so the liveness and readiness probe paths need to be adjusted accordingly. See the Spring Boot 2.0 Migration Guide for more information and how to restore the Spring Boot 1.x base path behavior. |

You can access secured probe endpoints by using credentials stored in a Kubernetes secret. You can use an existing secret, provided the credentials are contained under the credentials key name of the secret’s data block. You can configure probe authentication on a per-application basis. When enabled, it is applied to both the liveness and readiness probe endpoints by using the same credentials and authentication type. Currently, only Basic authentication is supported.

To create a new secret:

-

First, generate the base64 string with the credentials used to access the secured probe endpoints. Basic authentication encodes a username and password as a base64 string in the format of username:password. The following example (which includes output and in which you should replace user and pass with your values) shows how to generate a base64 string:

$ echo -n "user:pass" | base64

dXNlcjpwYXNz

-

With the encoded credentials, create a file (for example, myprobesecret.yml) with the following contents:

apiVersion: v1

kind: Secret

metadata:

  name: myprobesecret

type: Opaque

data:

  credentials: GENERATED_BASE64_STRING

-

Replace GENERATED_BASE64_STRING with the base64-encoded value generated earlier.

-

Create the secret by using kubectl, as the following example shows:

$ kubectl create -f ./myprobesecret.yml

secret "myprobesecret" created

-

Set the following deployer properties to use authentication when accessing probe endpoints, as the following example shows:

deployer.<app>.kubernetes.probeCredentialsSecret=myprobesecret

Replace <app> with the name of the application to which to apply authentication.
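If you prefer to generate the encoded credentials from code rather than from the shell, the same encoding can be sketched with the JDK's java.util.Base64 class. The ProbeCredentials class name is only illustrative, and user and pass are the placeholder values from the shell example.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

public class ProbeCredentials {

    // Encodes "username:password" as base64, the form that Basic authentication uses.
    public static String encode(String username, String password) {
        String raw = username + ":" + password;
        return Base64.getEncoder().encodeToString(raw.getBytes(StandardCharsets.UTF_8));
    }

    public static void main(String[] args) {
        // Same input as the shell example, so the output matches it: dXNlcjpwYXNz
        System.out.println(encode("user", "pass"));
    }
}
```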

5.5. Using SPRING_APPLICATION_JSON

You can use a SPRING_APPLICATION_JSON environment variable to set Data Flow server properties (including the configuration of maven repository settings) that are common across all of the Data Flow server implementations. These settings go at the server level in the container env section of a deployment YAML. The following example shows how to do so:

env:

- name: SPRING_APPLICATION_JSON

  value: "{ \"maven\": { \"local-repository\": null, \"remote-repositories\": { \"repo1\": { \"url\": \"https://repo.spring.io/libs-snapshot\"} } } }"

5.6. Private Docker Registry

You can pull Docker images from a private registry on a per-application basis. First, you must create a secret in the cluster. Follow the Pull an Image from a Private Registry guide to create the secret.

Once you have created the secret, use the imagePullSecret property to set the secret to use, as the following example shows:

deployer.<app>.kubernetes.imagePullSecret=mysecret

Replace <app> with the name of your application and mysecret with the name of the secret you created earlier.

You can also configure the image pull secret at the server level in the container env section of a deployment YAML, as the following example shows:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_IMAGE_PULL_SECRET

  value: mysecret

Replace mysecret with the name of the secret you created earlier.

5.7. Annotations

You can add annotations to Kubernetes objects on a per-application basis. The supported object types are pod Deployment, Service, and Job. Annotations are defined in a key:value format, with multiple annotations separated by commas. For more information and use cases on annotations, see Annotations.

The following example shows how you can configure applications to use annotations:

deployer.<app>.kubernetes.podAnnotations=annotationName:annotationValue

deployer.<app>.kubernetes.serviceAnnotations=annotationName:annotationValue,annotationName2:annotationValue2

deployer.<app>.kubernetes.jobAnnotations=annotationName:annotationValue

Replace <app> with the name of your application and the value of your annotations.
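To make the key:value format concrete, the following sketch splits such a property value into individual annotations. It is a simplified illustration under the assumption that neither keys nor values contain commas; the AnnotationParser class is hypothetical and does not reflect how the deployer itself parses the property.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class AnnotationParser {

    // Splits "name:value,name2:value2" into an ordered map of annotations.
    // Each pair is split at its first colon, so values may contain further colons.
    public static Map<String, String> parse(String property) {
        Map<String, String> annotations = new LinkedHashMap<>();
        for (String pair : property.split(",")) {
            int separator = pair.indexOf(':');
            if (separator < 0) {
                throw new IllegalArgumentException("Expected key:value but got: " + pair);
            }
            annotations.put(pair.substring(0, separator).trim(), pair.substring(separator + 1).trim());
        }
        return annotations;
    }

    public static void main(String[] args) {
        System.out.println(parse("annotationName:annotationValue,annotationName2:annotationValue2"));
    }
}
```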

5.8. Entry Point Style

An Entry Point Style affects how application properties are passed to the container to be deployed. Currently, three styles are supported:

-

exec: (default) Passes all application properties and command line arguments in the deployment request as container args. Application properties are transformed into the format of --key=value.

-

shell: Passes all application properties as environment variables. Command line arguments from the deployment request are not converted into environment variables nor set on the container. Application properties are transformed into an uppercase string and . characters are replaced with _.

-

boot: Creates an environment variable called SPRING_APPLICATION_JSON that contains a JSON representation of all application properties. Command line arguments from the deployment request are set as container args.

| In all cases, environment variables defined at the server level configuration and on a per-application basis are set onto the container as-is. |
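The three transformations described above can be sketched as follows. This is an approximation written from the descriptions in this section, not the deployer's implementation; the EntryPointStyles class is hypothetical, and the JSON output is deliberately simplified (only backslashes and double quotation marks are escaped).

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.StringJoiner;

public class EntryPointStyles {

    // exec: each application property becomes a --key=value container argument.
    public static List<String> execArgs(Map<String, String> properties) {
        List<String> args = new ArrayList<>();
        properties.forEach((key, value) -> args.add("--" + key + "=" + value));
        return args;
    }

    // shell: each property becomes an environment variable whose name is
    // uppercased, with '.' characters replaced by '_'.
    public static Map<String, String> shellEnv(Map<String, String> properties) {
        Map<String, String> env = new LinkedHashMap<>();
        properties.forEach((key, value) ->
                env.put(key.replace('.', '_').toUpperCase(Locale.ROOT), value));
        return env;
    }

    // boot: all properties are folded into a single JSON object that would be
    // set as the SPRING_APPLICATION_JSON environment variable.
    public static String bootJson(Map<String, String> properties) {
        StringJoiner json = new StringJoiner(",", "{", "}");
        properties.forEach((key, value) ->
                json.add("\"" + escape(key) + "\":\"" + escape(value) + "\""));
        return json.toString();
    }

    private static String escape(String text) {
        return text.replace("\\", "\\\\").replace("\"", "\\\"");
    }

    public static void main(String[] args) {
        Map<String, String> properties = new LinkedHashMap<>();
        properties.put("server.port", "8080");
        System.out.println(execArgs(properties)); // [--server.port=8080]
        System.out.println(shellEnv(properties)); // {SERVER_PORT=8080}
        System.out.println(bootJson(properties)); // {"server.port":"8080"}
    }
}
```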

You can configure applications as follows:

deployer.<app>.kubernetes.entryPointStyle=<Entry Point Style>

Replace <app> with the name of your application and <Entry Point Style> with your desired Entry Point Style.

You can also configure the Entry Point Style at the server level in the container env section of a deployment YAML, as the following example shows:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_ENTRY_POINT_STYLE

  value: entryPointStyle

Replace entryPointStyle with the desired Entry Point Style.

You should choose an Entry Point Style of either exec or shell, to correspond to how the ENTRYPOINT syntax is defined in the container’s Dockerfile. For more information and use cases on exec versus shell, see the ENTRYPOINT section of the Docker documentation.

Using the boot Entry Point Style corresponds to using the exec style ENTRYPOINT. Command line arguments from the deployment request are passed to the container, with the addition of application properties mapped into the SPRING_APPLICATION_JSON environment variable rather than command line arguments.

| When you use the boot Entry Point Style, the deployer.<app>.kubernetes.environmentVariables property must not contain SPRING_APPLICATION_JSON. |

5.9. Deployment Service Account

You can configure a custom service account for application deployments through properties. You can use an existing service account or create a new one. One way to create a service account is by using kubectl, as the following example shows:

$ kubectl create serviceaccount myserviceaccountname

serviceaccount "myserviceaccountname" created

Then you can configure individual applications as follows:

deployer.<app>.kubernetes.deploymentServiceAccountName=myserviceaccountname

Replace <app> with the name of your application and myserviceaccountname with your service account name.

You can also configure the service account name at the server level in the container env section of a deployment YAML, as the following example shows:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_DEPLOYMENT_SERVICE_ACCOUNT_NAME

  value: myserviceaccountname

Replace myserviceaccountname with the service account name to be applied to all deployments.

5.10. Image Pull Policy

An image pull policy defines when a Docker image should be pulled to the local registry. Currently, three policies are supported:

-

IfNotPresent: (default) Do not pull an image if it already exists.

-

Always: Always pull the image regardless of whether it already exists.

-

Never: Never pull an image. Use only an image that already exists.

The following example shows how you can individually configure applications:

deployer.<app>.kubernetes.imagePullPolicy=Always

Replace <app> with the name of your application and Always with your desired image pull policy.

You can configure an image pull policy at the server level in the container env section of a deployment YAML, as the following example shows:

env:

- name: SPRING_CLOUD_DEPLOYER_KUBERNETES_IMAGE_PULL_POLICY

  value: Always

Replace Always with your desired image pull policy.

5.11. Deployment Labels

You can set custom labels on Deployment-related objects. See Labels for more information on labels. Labels are specified in key:value format.

The following example shows how you can individually configure applications:

deployer.<app>.kubernetes.deploymentLabels=myLabelName:myLabelValue

Replace <app> with the name of your application, myLabelName with your label name, and myLabelValue with the value of your label.

Additionally, multiple labels can be applied, for example:

deployer.<app>.kubernetes.deploymentLabels=myLabelName:myLabelValue,myLabelName2:myLabelValue2

Applications

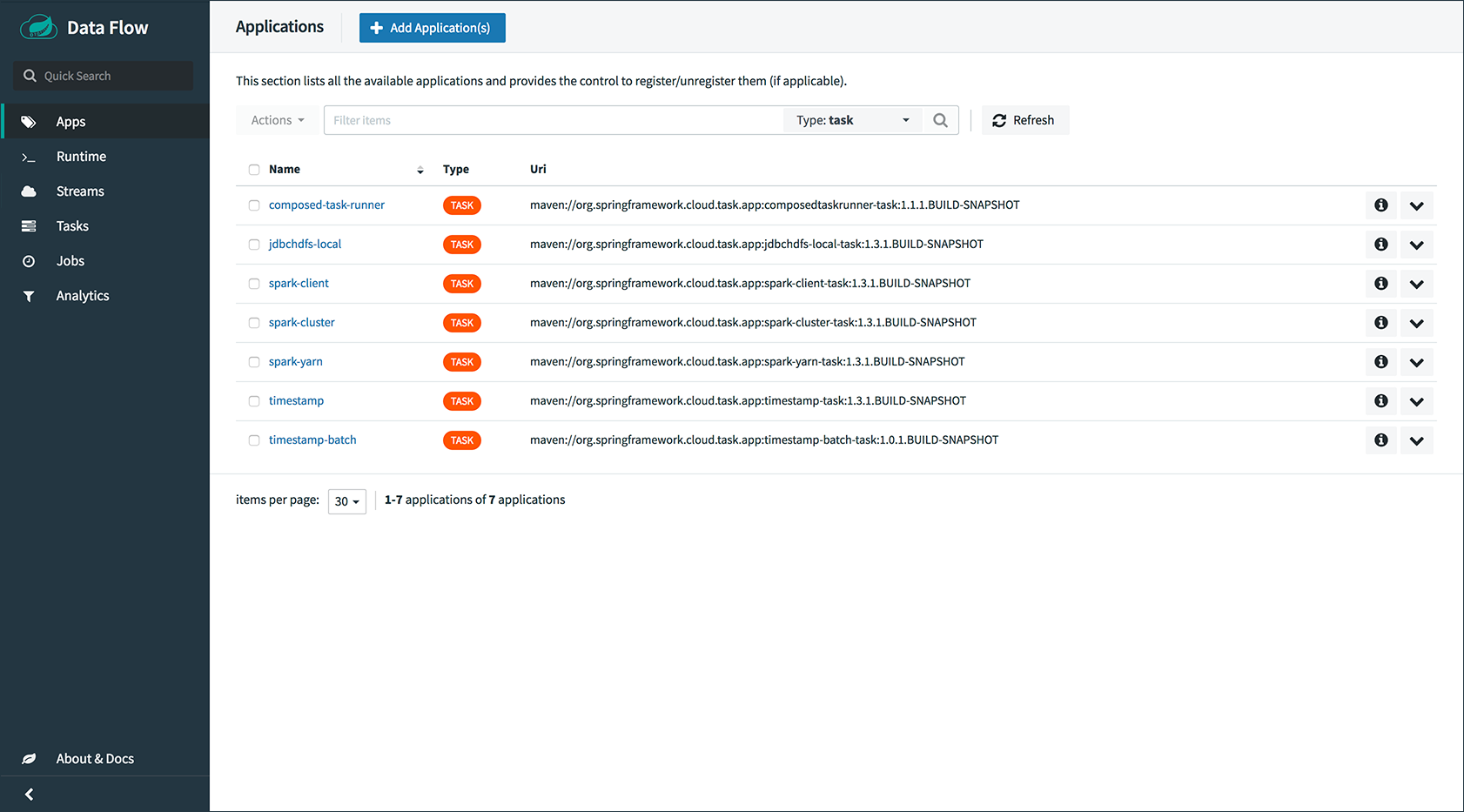

A selection of pre-built stream and task/batch starter apps is available for various data integration and processing scenarios, to facilitate learning and experimentation. The table below includes the pre-built applications at a glance. For more details, review how to register supported applications.

6. Available Applications

| Source | Processor | Sink | Task |
|---|---|---|---|
| loggregator | tasklaunchrequest-transform | task-launcher-yarn | |
| | | task-launcher-local | |
| | | task-launcher-cloudfoundry | |

Architecture

7. Introduction

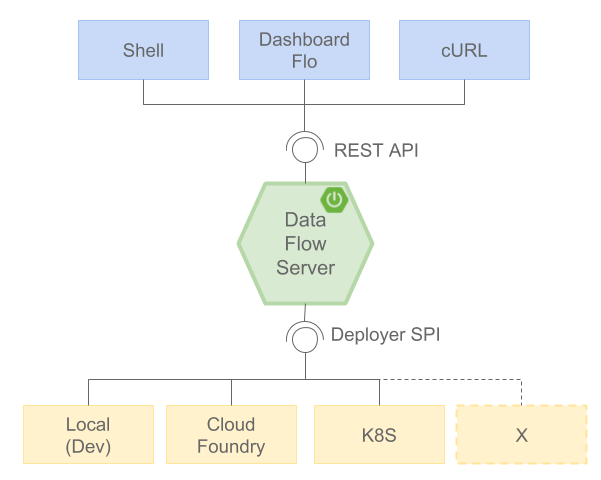

Spring Cloud Data Flow simplifies the development and deployment of applications focused on data processing use cases. The major concepts of the architecture are Applications, the Data Flow Server, and the target runtime.

Applications come in two flavors:

-

Long-lived Stream applications where an unbounded amount of data is consumed or produced through messaging middleware.

-

Short-lived Task applications that process a finite set of data and then terminate.

Depending on the runtime, applications can be packaged in two ways:

-

Spring Boot uber-jar that is hosted in a Maven repository, on the file system, or at an HTTP(S) location.

-

Docker image.

The runtime is the place where applications execute. The target runtimes for applications are platforms that you may already be using for other application deployments.

The supported platforms are:

-

Cloud Foundry

-

Kubernetes

-

Local Server

| The local server is supported in production for Task deployment as a replacement for the Spring Batch Admin project. The local server is not supported in production for Stream deployments. |

There is a deployer Service Provider Interface (SPI) that lets you extend Data Flow to deploy onto other runtimes. There are community implementations

The Apache YARN implementation has reached end-of-life status. Let us know at Gitter if you are interested in forking the project to continue developing and maintaining it.

There are two mutually exclusive options that determine how long-lived streaming applications are deployed to the platform:

-

Select a Spring Cloud Data Flow Server executable jar that targets a single platform.

-

Enable the Spring Cloud Data Flow Server to delegate the deployment and runtime status of applications to the Spring Cloud Skipper Server, which has the capability to deploy to multiple platforms.

Selecting the Spring Cloud Skipper option also lets you update and roll back applications in a Stream at runtime.

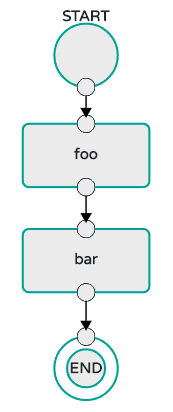

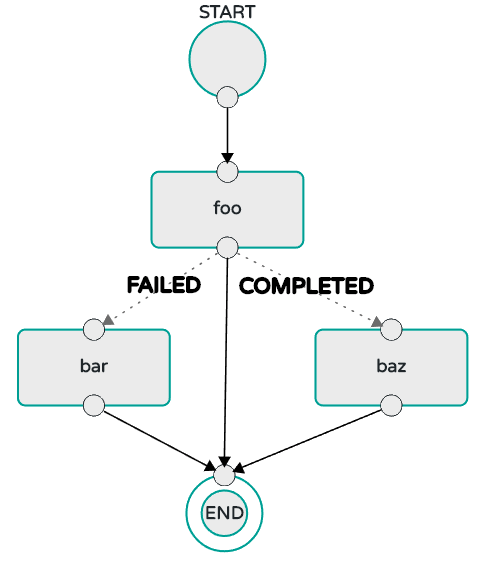

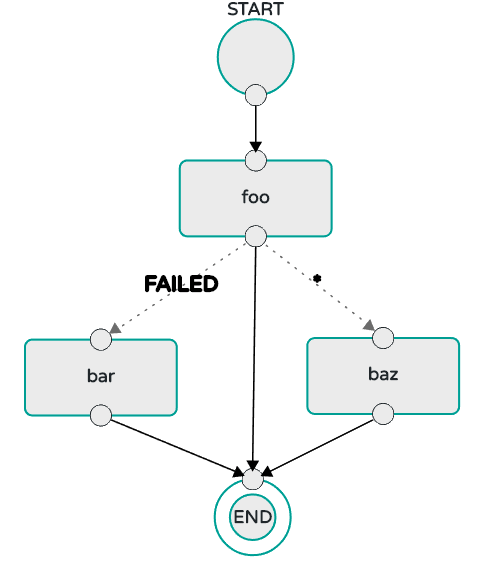

The Data Flow server is also responsible for:

-

Interpreting and executing a stream DSL that describes the logical flow of data through multiple long-lived applications.

-

Launching a short-lived task application.

-

Interpreting and executing a composed task DSL that describes the logical flow of data through multiple short-lived applications.

-

Applying a deployment manifest that describes the mapping of applications onto the runtime - for example, to set the initial number of instances, memory requirements, and data partitioning.

-

Providing the runtime status of deployed applications.

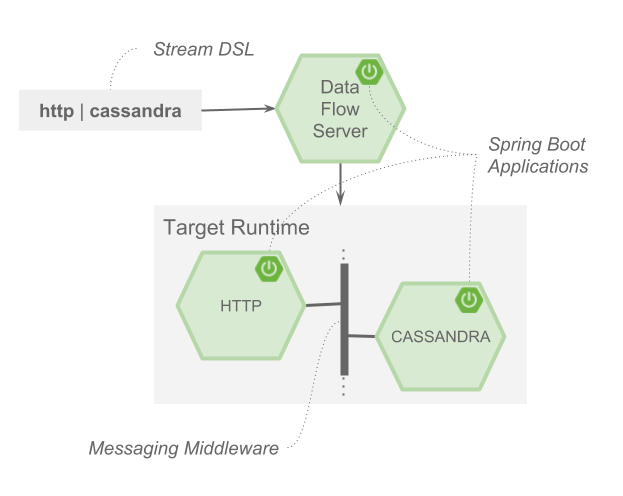

As an example, the stream DSL to describe the flow of data from an HTTP source to an Apache Cassandra sink would be written by using a Unix pipes-and-filters syntax: http | cassandra. Each name in the DSL is mapped to an application that can be hosted in Maven or Docker repositories. You can also register an application at an HTTP location. Many source, processor, and sink applications for common use cases (such as JDBC, HDFS, HTTP, and router) are provided by the Spring Cloud Data Flow team. The pipe symbol represents the communication between the two applications through messaging middleware. The two messaging middleware brokers that are supported are:

-

Apache Kafka

-

RabbitMQ

In the case of Kafka, when deploying the stream, the Data Flow server is responsible for creating the topics that correspond to each pipe symbol and configure each application to produce or consume from the topics so the desired flow of data is achieved. Similarly for RabbitMQ, exchanges and queues are created as needed to achieve the desired flow.
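The relationship between pipe symbols and destinations can be illustrated with a short sketch that derives one destination name for each pipe in a definition. The naming scheme used here (stream name, a dot, and the name of the producing application) is an assumption made for illustration, as is the PipeDestinations class. The naive split on the pipe character also ignores application options, labels, and named destinations, which a real DSL parser has to handle.

```java
import java.util.ArrayList;
import java.util.List;

public class PipeDestinations {

    // Returns one destination name per pipe symbol in the definition.
    // Assumed naming scheme: <streamName>.<name of the app left of the pipe>.
    public static List<String> destinations(String streamName, String definition) {
        String[] apps = definition.split("\\|");
        List<String> names = new ArrayList<>();
        // There is one pipe between each pair of adjacent applications.
        for (int i = 0; i < apps.length - 1; i++) {
            names.add(streamName + "." + apps[i].trim());
        }
        return names;
    }

    public static void main(String[] args) {
        // A two-application stream has one pipe and therefore one destination.
        System.out.println(destinations("ingest", "http | cassandra"));
    }
}
```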

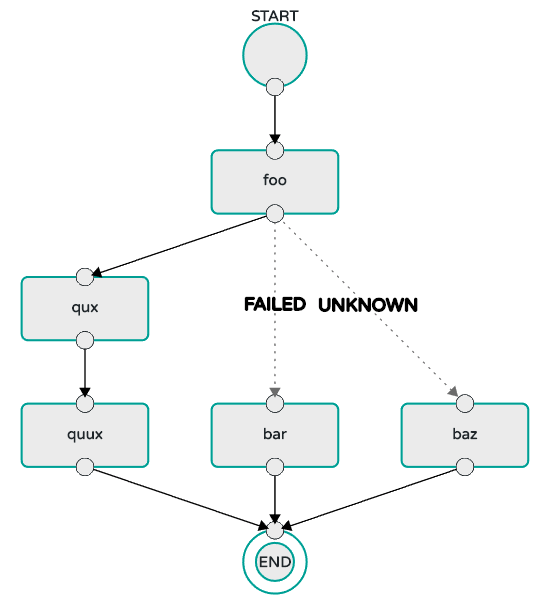

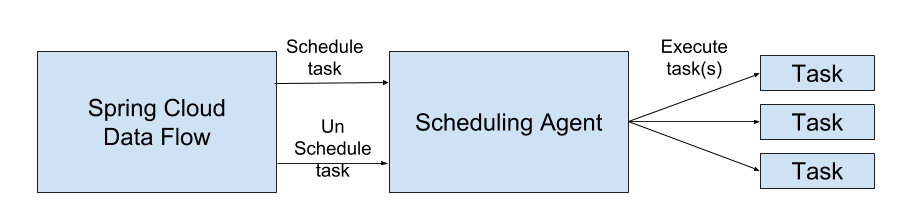

The interaction of the main components is shown in the following image:

In the preceding diagram, a DSL description of a stream is POSTed to the Data Flow Server. Based on the mapping of DSL application names to Maven and Docker artifacts, the http-source and cassandra-sink applications are deployed on the target runtime. Data that is posted to the HTTP application will then be stored in Cassandra. The Samples Repository shows this use case in full detail.

8. Microservice Architectural Style

The Data Flow Server deploys applications onto the target runtime that conform to the microservice architectural style. For example, a stream represents a high-level application that consists of multiple small microservice applications, each running in its own process. Each microservice application can be scaled up or down independently of the others, and each has its own versioning lifecycle. Using Data Flow with Skipper lets you independently upgrade or roll back each application at runtime.

Both Streaming and Task-based microservice applications build upon Spring Boot as the foundational library. This gives all microservice applications functionality such as health checks, security, configurable logging, monitoring, and management functionality, as well as executable JAR packaging.

It is important to emphasize that these microservice applications are 'just apps' that you can run by yourself by using java -jar and passing in appropriate configuration properties. We provide many common microservice applications for common operations so you need not start from scratch when addressing common use cases that build upon the rich ecosystem of Spring Projects, such as Spring Integration, Spring Data, and Spring Batch. Creating your own microservice application is similar to creating other Spring Boot applications. You can start by using the Spring Initializr web site to create the basic scaffolding of either a Stream or Task-based microservice.

In addition to passing the appropriate application properties to each application, the Data Flow server is responsible for preparing the target platform’s infrastructure so that the applications can be deployed. For example, in Cloud Foundry, it would bind specified services to the applications and execute the cf push command for each application. For Kubernetes, it would create the replication controller, service, and load balancer.

The Data Flow Server helps simplify the deployment of multiple related applications onto a target runtime, setting up necessary input and output topics, partitions, and metrics functionality. However, you could also opt to deploy each of the microservice applications manually and not use Data Flow at all. This approach might be more appropriate to start out with for small-scale deployments, gradually adopting the convenience and consistency of Data Flow as you develop more applications. Manual deployment of Stream- and Task-based microservices is also a useful educational exercise that can help you better understand some of the automatic application configuration and platform targeting steps that the Data Flow Server provides.

8.1. Comparison to Other Platform Architectures

Spring Cloud Data Flow’s architectural style is different from other Stream and Batch processing platforms. For example, in Apache Spark, Apache Flink, and Google Cloud Dataflow, applications run on a dedicated compute engine cluster. The nature of the compute engine gives these platforms a richer environment for performing complex calculations on the data as compared to Spring Cloud Data Flow, but it introduces the complexity of another execution environment that is often not needed when creating data-centric applications. That does not mean you cannot do real-time data computations when using Spring Cloud Data Flow. Refer to the section Analytics, which describes the integration of Redis to handle common counting-based use cases. Spring Cloud Stream also supports using Reactive APIs such as Project Reactor and RxJava, which can be useful for creating functional style applications that contain time-sliding-window and moving-average functionality. Similarly, Spring Cloud Stream also supports the development of applications that use the Kafka Streams API.

Apache Storm, Hortonworks DataFlow, and Spring Cloud Data Flow’s predecessor, Spring XD, use a dedicated application execution cluster, unique to each product, that determines where your code should run on the cluster and performs health checks to ensure that long-lived applications are restarted if they fail. Often, framework-specific interfaces are required in order to correctly “plug in” to the cluster’s execution framework.

As we discovered during the evolution of Spring XD, the rise of multiple container frameworks in 2015 made creating our own runtime a duplication of effort. There is no reason to build your own resource management mechanics when there are multiple runtime platforms that offer this functionality already. Taking these considerations into account is what made us shift to the current architecture, where we delegate the execution to popular runtimes, which you may already be using for other purposes. This is an advantage in that it reduces the cognitive distance for creating and managing data-centric applications as many of the same skills used for deploying other end-user/web applications are applicable.

9. Data Flow Server

The Data Flow Server provides the following functionality:

9.1. Endpoints

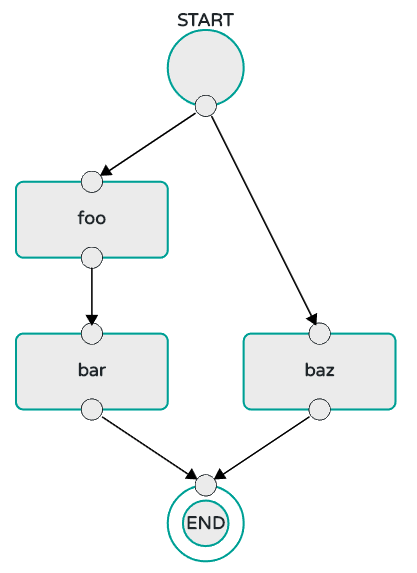

The Data Flow Server uses an embedded servlet container and exposes REST endpoints for creating, deploying, undeploying, and destroying streams and tasks, querying runtime state, analytics, and the like. The Data Flow Server is implemented by using Spring’s MVC framework and the Spring HATEOAS library to create REST representations that follow the HATEOAS principle, as shown in the following image:

[NOTE] The Data Flow Server that deploys applications to the local machine is not intended to be used in production for streaming use cases but for the development and testing of stream-based applications. The local Data Flow Server is intended to be used in production for batch use cases as a replacement for the Spring Batch Admin project. Both streaming and batch use cases are intended to be used in production when deploying to Cloud Foundry or Kubernetes.

9.2. Security

The Data Flow Server executable jars support basic HTTP, LDAP(S), File-based, and OAuth 2.0 authentication to access its endpoints. Refer to the security section for more information.

10. Streams

10.1. Topologies

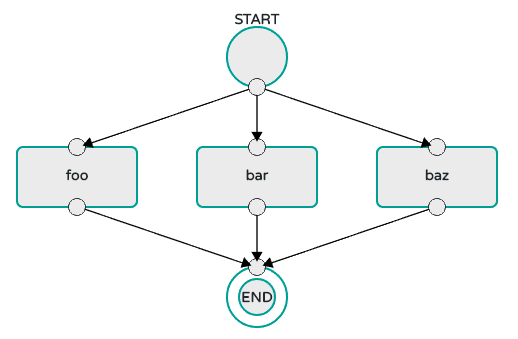

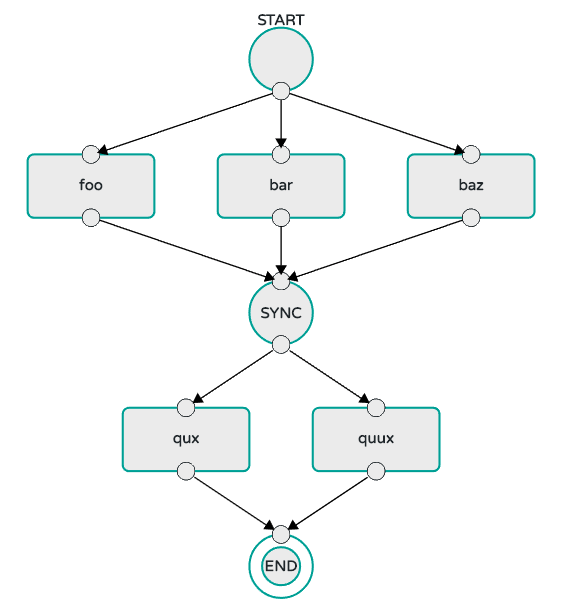

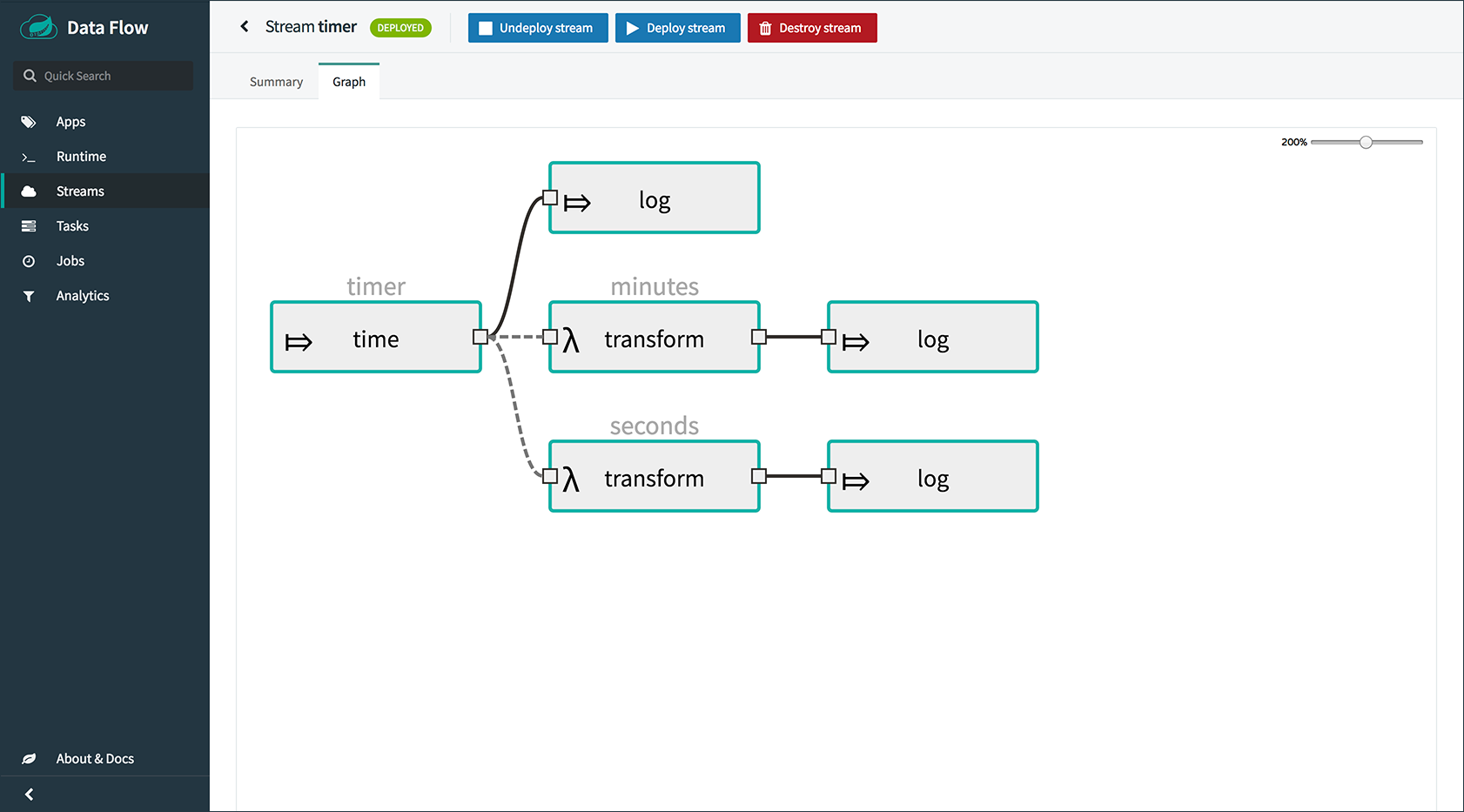

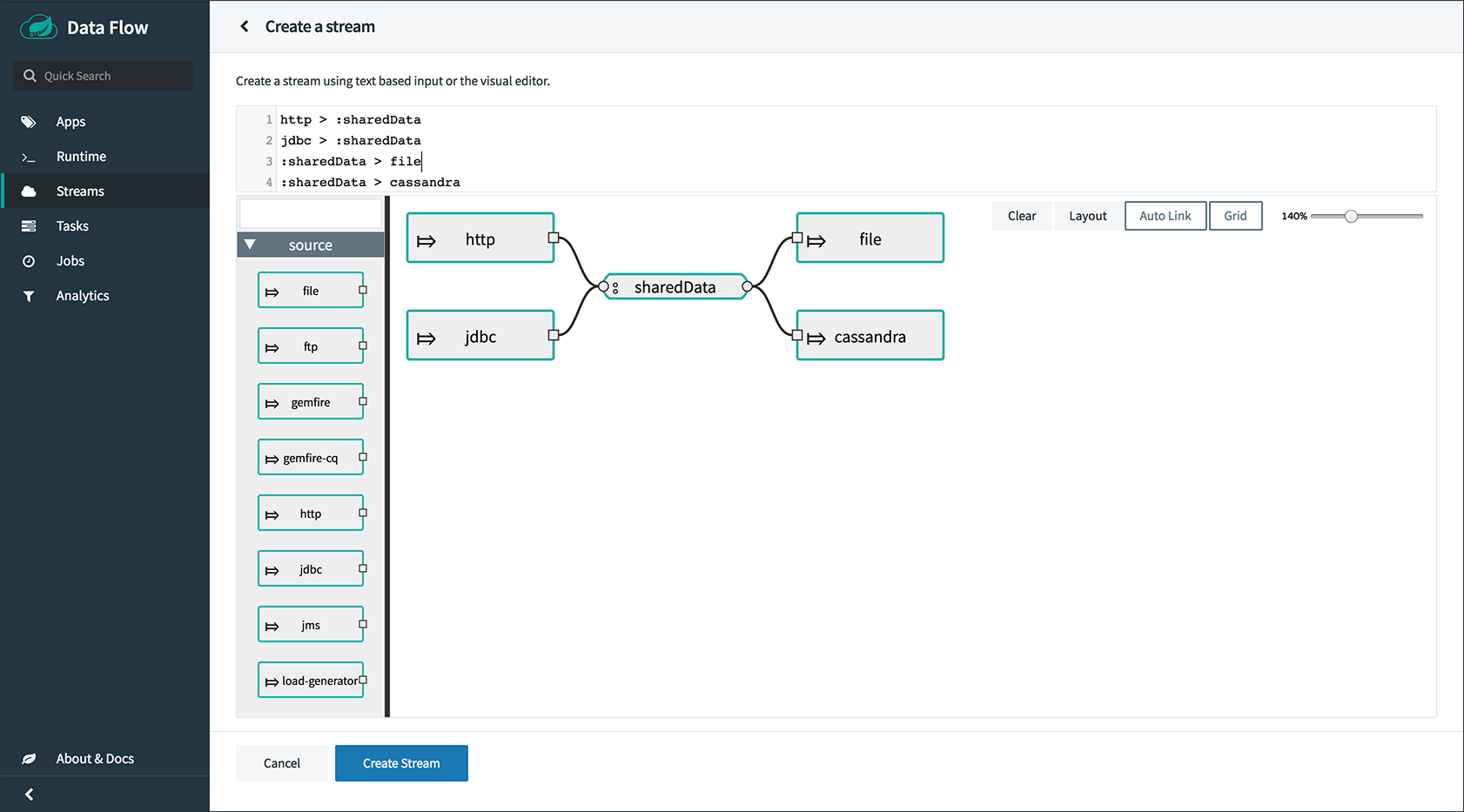

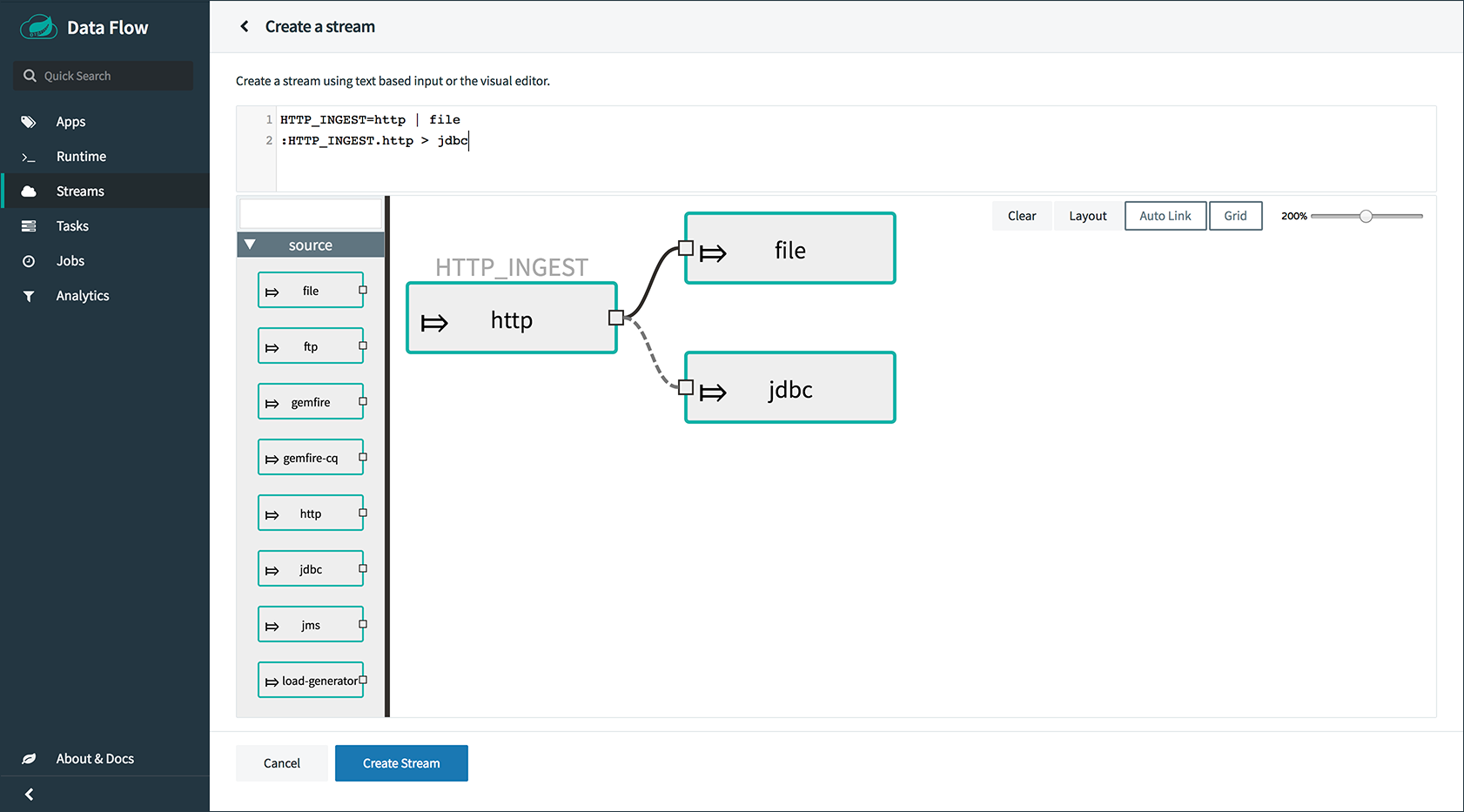

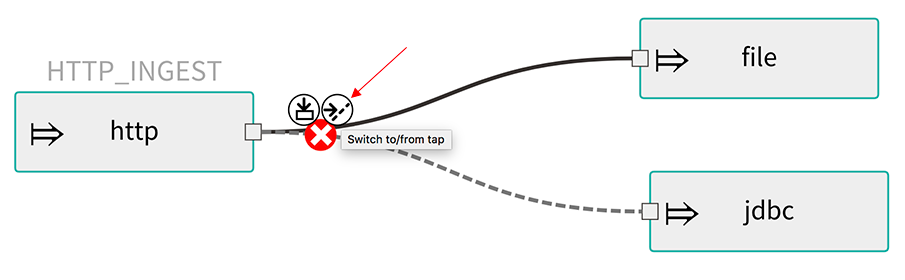

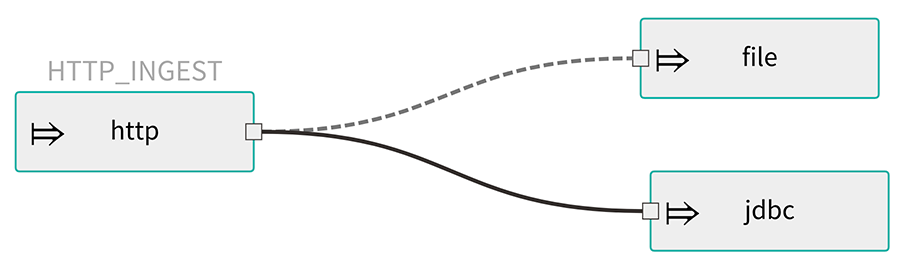

The Stream DSL describes linear sequences of data flowing through the system. For example, in the stream definition http | transformer | cassandra, each pipe symbol connects the application on the left to the one on the right. Named channels can be used for routing and to fan in/fan out data to multiple messaging destinations.

The concept of a tap can be used to ‘listen’ to the data that is flowing across any of the pipe symbols. "Taps" are just other streams that use as input any one of the "pipes" in a target stream and have an independent life cycle from the target stream.

10.2. Concurrency

For an application that consumes events, Spring Cloud Stream exposes a concurrency setting that controls the size of a thread pool used for dispatching incoming messages. See the Consumer properties section of the Spring Cloud Stream documentation for more information.

10.3. Partitioning

A common pattern in stream processing is to partition the data as it moves from one application to the next. Partitioning is a critical concept in stateful processing, for either performance or consistency reasons, to ensure that all related data is processed together. For example, in a time-windowed average calculation example, it is important that all measurements from any given sensor are processed by the same application instance. Alternatively, you may want to cache some data related to the incoming events so that it can be enriched without making a remote procedure call to retrieve the related data.

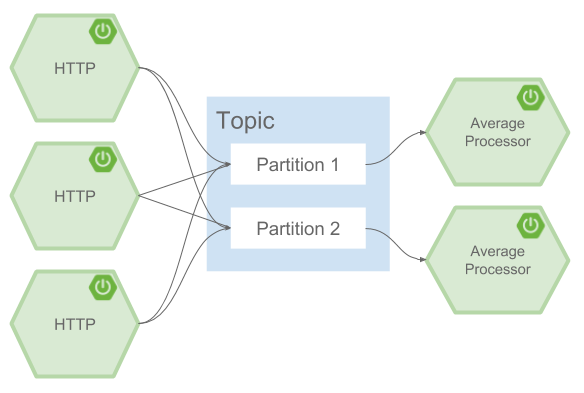

Spring Cloud Data Flow supports partitioning by configuring Spring Cloud Stream’s output and input bindings. Spring Cloud Stream provides a common abstraction for implementing partitioned processing use cases in a uniform fashion across different types of middleware. Partitioning can thus be used whether the broker itself is naturally partitioned (for example, Kafka topics) or not (RabbitMQ). The following image shows how data could be partitioned into two buckets, such that each instance of the average processor application consumes a unique set of data.

To use a simple partitioning strategy in Spring Cloud Data Flow, you need only set the instance count for each application in the stream and a partitionKeyExpression producer property when deploying the stream. The partitionKeyExpression identifies what part of the message is used as the key to partition data in the underlying middleware. An ingest stream can be defined as http | averageprocessor | cassandra. (Note that the Cassandra sink is not shown in the diagram above.) Suppose the payload being sent to the HTTP source was in JSON format and had a field called sensorId. For example, consider the case of deploying the stream with the shell command stream deploy ingest --propertiesFile ingestStream.properties where the contents of the ingestStream.properties file are as follows:

deployer.http.count=3

deployer.averageprocessor.count=2

app.http.producer.partitionKeyExpression=payload.sensorId

The result is that the stream is deployed so that all the input and output destinations are configured for data to flow through the applications and a unique set of data is always delivered to each averageprocessor instance. In this case, the default algorithm is to evaluate payload.sensorId % partitionCount, where the partitionCount is the application count in the case of RabbitMQ and the partition count of the topic in the case of Kafka.
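The modulo-based selection described above can be sketched as follows. This is an illustration of the idea rather than the code Spring Cloud Stream runs: the PartitionSelector class is hypothetical, and using the key's hash code with Math.floorMod is an assumption made here so that non-numeric and negative keys also map to a valid partition index.

```java
public class PartitionSelector {

    // Maps a partition key (for example, the value of payload.sensorId) to a
    // partition index in the range [0, partitionCount).
    public static int selectPartition(Object key, int partitionCount) {
        if (partitionCount <= 0) {
            throw new IllegalArgumentException("partitionCount must be positive");
        }
        // For an Integer key, hashCode() is the integer itself, so this is key % partitionCount.
        return Math.floorMod(key.hashCode(), partitionCount);
    }

    public static void main(String[] args) {
        int partitionCount = 2; // matches deployer.averageprocessor.count=2
        for (int sensorId = 1; sensorId <= 4; sensorId++) {
            System.out.println("sensorId " + sensorId + " -> partition "
                    + selectPartition(sensorId, partitionCount));
        }
    }
}
```

Because the result depends only on the key and the partition count, every message from a given sensor lands on the same averageprocessor instance for as long as the partition count stays the same.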

Refer to Passing Stream Partition Properties for additional strategies to partition streams during deployment and how they map onto the underlying Spring Cloud Stream Partitioning properties.

Also note that you cannot currently scale partitioned streams. Read Scaling at Runtime for more information.

10.4. Message Delivery Guarantees

Streams are composed of applications that use the Spring Cloud Stream library as the basis for communicating with the underlying messaging middleware product. Spring Cloud Stream also provides an opinionated configuration of middleware from several vendors, in particular providing persistent publish-subscribe semantics.

The Binder abstraction in Spring Cloud Stream is what connects the application to the middleware. There are several configuration properties of the binder that are portable across all binder implementations and some that are specific to the middleware.

For consumer applications, there is a retry policy for exceptions generated during message handling. The retry policy is configured by using the common consumer properties maxAttempts, backOffInitialInterval, backOffMaxInterval, and backOffMultiplier. The default values of these properties retry the callback method invocation 3 times and wait one second for the first retry. A backoff multiplier of 2 is used for the second and third attempts.
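To see how these four properties interact, the following sketch computes the waits between attempts for an exponential backoff. It is a simplified model, not the retry implementation itself: the RetryBackoff class is hypothetical, maxAttempts is assumed to include the first invocation, and the 10000 ms maximum interval used in main is an assumed value rather than one stated in this section.

```java
import java.util.ArrayList;
import java.util.List;

public class RetryBackoff {

    // Returns the wait (in milliseconds) before each retry.
    // With maxAttempts total invocations there are maxAttempts - 1 waits.
    public static List<Long> waits(int maxAttempts, long initialInterval,
                                   double multiplier, long maxInterval) {
        List<Long> waits = new ArrayList<>();
        double interval = initialInterval;
        for (int attempt = 1; attempt < maxAttempts; attempt++) {
            // Each wait is capped at maxInterval.
            waits.add(Math.min((long) interval, maxInterval));
            interval *= multiplier;
        }
        return waits;
    }

    public static void main(String[] args) {
        // Three attempts, one second initial interval, multiplier of 2.
        System.out.println(waits(3, 1000L, 2.0, 10000L)); // [1000, 2000]
    }
}
```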

When the number of retry attempts has exceeded the maxAttempts value, the exception and the failed message become the payload of a message and are sent to the application’s error channel. By default, the default message handler for this error channel logs the message. You can change the default behavior in your application by creating your own message handler that subscribes to the error channel.

Spring Cloud Stream also supports a configuration option for both Kafka and RabbitMQ binder implementations that sends the failed message and stack trace to a dead letter queue. The dead letter queue is a destination and its nature depends on the messaging middleware (for example, in the case of Kafka, it is a dedicated topic). To enable this for RabbitMQ, set the republishToDlq and autoBindDlq consumer properties and the autoBindDlq producer property to true when deploying the stream. To always apply these producer and consumer properties when deploying streams, configure them as common application properties when starting the Data Flow server.

Additional messaging delivery guarantees are those provided by the underlying messaging middleware that is chosen for the application for both producing and consuming applications. Refer to the Kafka Consumer and Producer and Rabbit Consumer and Producer documentation for more details. You can find extensive declarative support for all the native QoS options.

11. Stream Programming Models

While Spring Boot provides the foundation for creating DevOps-friendly microservice applications, other libraries in the Spring ecosystem help create Stream-based microservice applications. The most important of these is Spring Cloud Stream.

The essence of the Spring Cloud Stream programming model is to provide an easy way to describe multiple inputs and outputs of an application that communicate over messaging middleware. These inputs and outputs map onto Kafka topics or Rabbit exchanges and queues as well as the KStream/KTable programming model. Common application configuration for a Source that generates data, a Processor that consumes and produces data, and a Sink that consumes data is provided as part of the library.

11.1. Imperative Programming Model

Spring Cloud Stream is most closely integrated with Spring Integration’s imperative "one event at a time" programming model. This means you write code that handles a single event callback, as shown in the following example:

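import org.springframework.cloud.stream.annotation.EnableBinding;

import org.springframework.cloud.stream.annotation.StreamListener;

import org.springframework.cloud.stream.messaging.Sink;

// Binds the application to the single input channel defined by Sink.

@EnableBinding(Sink.class)

public class LoggingSink {

    // Invoked once for each message that arrives on the input channel.

    @StreamListener(Sink.INPUT)

    public void log(String message) {

        System.out.println(message);

    }

}

In this case, the String payload of a message coming on the input channel is handed to the log method. The @EnableBinding annotation is used to tie the input channel to the external middleware.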

11.2. Functional Programming Model

Spring Cloud Stream also supports other programming styles, such as reactive APIs, in which incoming and outgoing data is handled as continuous data flows and you define how each individual message should be handled. With many reactive APIs, you can also use operators that describe functional transformations from inbound to outbound data flows. The following example converts each payload to upper case:

```java
@EnableBinding(Processor.class)
public static class UppercaseTransformer {

    @StreamListener
    @Output(Processor.OUTPUT)
    public Flux<String> receive(@Input(Processor.INPUT) Flux<String> input) {
        return input.map(s -> s.toUpperCase());
    }
}
```

12. Application Versioning

Application versioning within a Stream is now supported when using Data Flow together with Skipper. You can update application and deployment properties as well as the version of the application. Rolling back to a previous application version is also supported.

13. Task Programming Model

The Spring Cloud Task programming model provides:

- Persistence of the Task’s lifecycle events and exit code status.
- Lifecycle hooks to execute code before or after a task execution.
- The ability to emit task events to a stream (as a source) during the task lifecycle.
- Integration with Spring Batch Jobs.

See the Tasks section for more information.

14. Analytics

Spring Cloud Data Flow is aware of certain Sink applications that write counter data to Redis and provides a REST endpoint to read counter data. The types of counters supported are as follows:

- Counter: Counts the number of messages it receives, optionally storing counts in a separate store such as Redis.
- Field Value Counter: Counts occurrences of unique values for a named field in a message payload.
- Aggregate Counter: Stores total counts but also retains the total count values for each minute, hour, day, and month.

Note that the timestamp used in the aggregate counter can come from a field in the message itself so that out-of-order messages are properly accounted.
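To make the aggregate counter idea concrete, here is a minimal in-memory sketch. It is not the Redis-backed implementation that the analytics sinks use. The class name, the key formats, and the use of UTC are assumptions chosen for illustration. Each event increments the total and one bucket per resolution, and the bucket is chosen from the event's own timestamp, so a late-arriving message still lands in the correct minute, hour, day, and month.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.ZonedDateTime;
import java.time.format.DateTimeFormatter;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class AggregateCounterSketch {

    // One key pattern per resolution: month, day, hour, and minute (hypothetical key formats).
    private static final List<DateTimeFormatter> RESOLUTIONS = Arrays.asList(
            DateTimeFormatter.ofPattern("yyyy-MM"),
            DateTimeFormatter.ofPattern("yyyy-MM-dd"),
            DateTimeFormatter.ofPattern("yyyy-MM-dd'T'HH"),
            DateTimeFormatter.ofPattern("yyyy-MM-dd'T'HH:mm"));

    private final Map<String, Long> buckets = new HashMap<>();
    private long total;

    /** Records one event, using the timestamp carried by the message rather than the arrival time. */
    void increment(Instant eventTime) {
        ZonedDateTime utc = eventTime.atZone(ZoneOffset.UTC);
        total++;
        for (DateTimeFormatter resolution : RESOLUTIONS) {
            buckets.merge(resolution.format(utc), 1L, Long::sum);
        }
    }

    long total() {
        return total;
    }

    /** Returns the count for a bucket key such as "2018-03-01T10" or "2018-03". */
    long count(String bucketKey) {
        return buckets.getOrDefault(bucketKey, 0L);
    }

    public static void main(String[] args) {
        AggregateCounterSketch counter = new AggregateCounterSketch();
        counter.increment(Instant.parse("2018-03-01T10:15:30Z"));
        counter.increment(Instant.parse("2018-03-01T09:59:00Z")); // out-of-order event
        System.out.println(counter.count("2018-03-01T09") + " event(s) in the 09:00 hour");
    }
}
```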

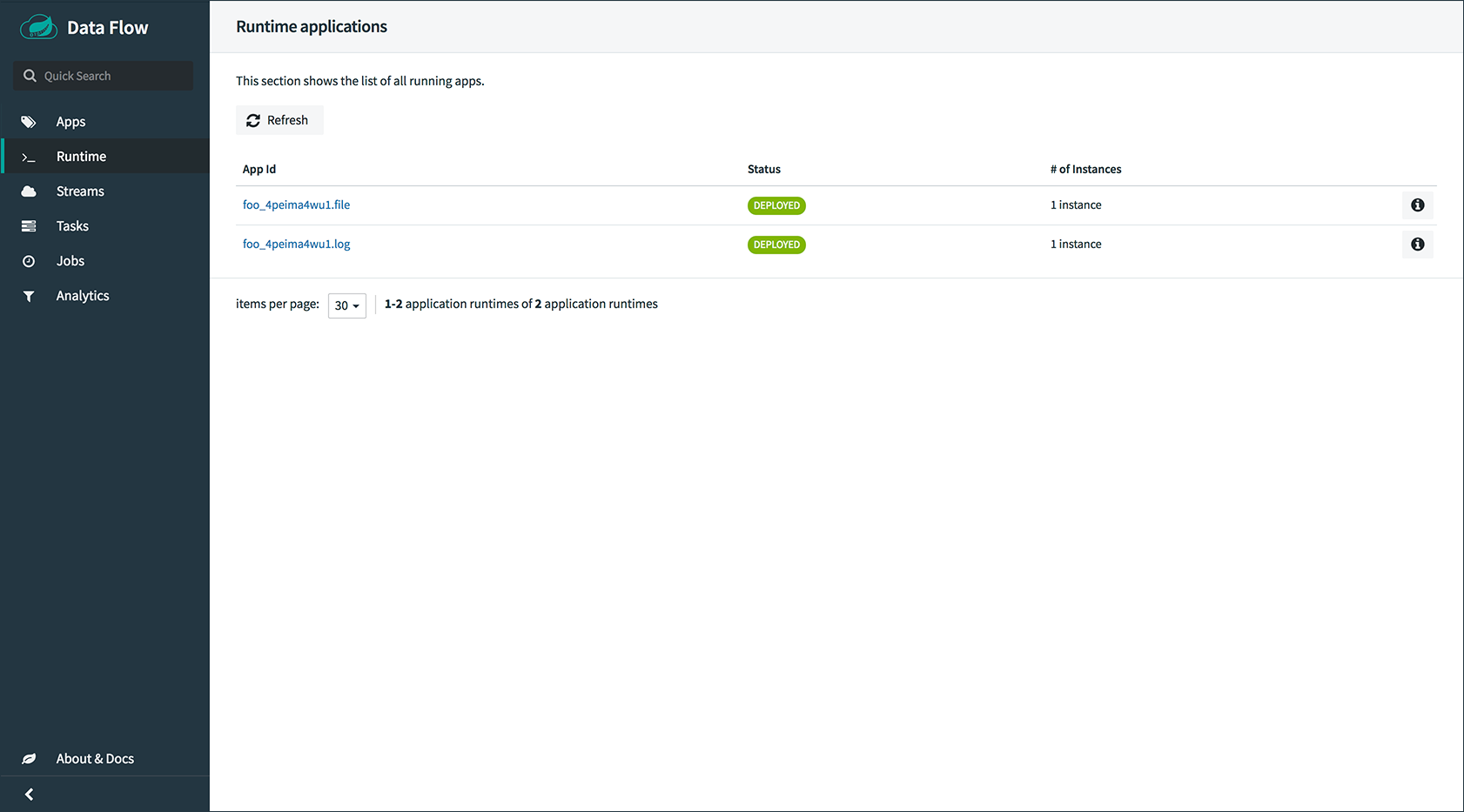

15. Runtime

The Data Flow Server relies on the target platform for the following runtime functionality:

15.1. Fault Tolerance

The target runtimes supported by Data Flow all have the ability to restart a long-lived application. Spring Cloud Data Flow sets up the health probes that the runtime environment requires when deploying the application. You can also customize the health probes.

The collective state of all applications that make up the stream is used to determine the state of the stream. If an application fails, the state of the stream changes from ‘deployed’ to ‘partial’.
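The following sketch illustrates the idea of deriving a stream state from the states of its applications. It is a simplified illustration, not the server's actual implementation: the enum, the method name, and the rule that a stream whose applications have all failed is reported as 'failed' are assumptions. Only the 'deployed' to 'partial' transition is taken from the description above.

```java
import java.util.Arrays;
import java.util.List;

final class StreamStateSketch {

    /** Simplified application states (hypothetical; real runtimes report more states). */
    enum AppState { DEPLOYED, FAILED }

    /** Derives a stream state from the states of all applications in the stream. */
    static String aggregate(List<AppState> appStates) {
        if (appStates.isEmpty()) {
            throw new IllegalArgumentException("a stream needs at least one application");
        }
        boolean anyDeployed = appStates.contains(AppState.DEPLOYED);
        boolean anyFailed = appStates.contains(AppState.FAILED);
        if (anyDeployed && anyFailed) {
            return "partial"; // some applications run, at least one has failed
        }
        if (anyFailed) {
            return "failed"; // assumption: nothing is running any more
        }
        return "deployed";
    }

    public static void main(String[] args) {
        System.out.println(aggregate(Arrays.asList(AppState.DEPLOYED, AppState.FAILED)));
    }
}
```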

15.2. Resource Management

Each target runtime lets you control the amount of memory, disk, and CPU allocated to each application. These are passed as properties in the deployment manifest by using key names that are unique to each runtime. Refer to each platform’s server documentation for more information.

15.3. Scaling at Runtime

When deploying a stream, you can set the instance count for each individual application that makes up the stream. Once the stream is deployed, each target runtime lets you control the target number of instances for each individual application. Using the APIs, UIs, or command line tools for each runtime, you can scale up or down the number of instances as required.

Currently, scaling at runtime is not supported with the Kafka binder or with partitioned streams. In both cases, the suggested workaround is to redeploy the stream with an updated number of instances, because both require a static consumer to be set up, based on information about the total instance count and the current instance index.
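The following sketch shows why a static consumer setup depends on the instance count and instance index. It is an illustrative model rather than the binder's code: the class and method names and the modulo-based assignment are assumptions. Each instance derives the partitions it owns from its own index and the total instance count, so changing the count at runtime would leave the running instances with a stale view of who owns what.

```java
import java.util.ArrayList;
import java.util.List;

final class StaticPartitionAssignment {

    /** Chooses a partition for a message key (illustrative hashing, not the binder's exact formula). */
    static int partitionFor(Object key, int partitionCount) {
        return Math.floorMod(key.hashCode(), partitionCount);
    }

    /** Lists the partitions owned by one instance, given its index and the total instance count. */
    static List<Integer> partitionsFor(int instanceIndex, int instanceCount, int partitionCount) {
        List<Integer> owned = new ArrayList<>();
        for (int partition = instanceIndex; partition < partitionCount; partition += instanceCount) {
            owned.add(partition);
        }
        return owned;
    }

    public static void main(String[] args) {
        // With two instances, instance 0 owns a different set than it would with three instances.
        System.out.println("2 instances, index 0: " + partitionsFor(0, 2, 4));
        System.out.println("3 instances, index 0: " + partitionsFor(0, 3, 4));
    }
}
```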

Server Configuration

16. Feature Toggles

The Data Flow server offers a specific set of features that can be enabled or disabled at launch. These features include all the lifecycle operations and REST endpoints (server and client implementations, including the shell and the UI) for:

-

Streams

-

Tasks

-

Analytics

-

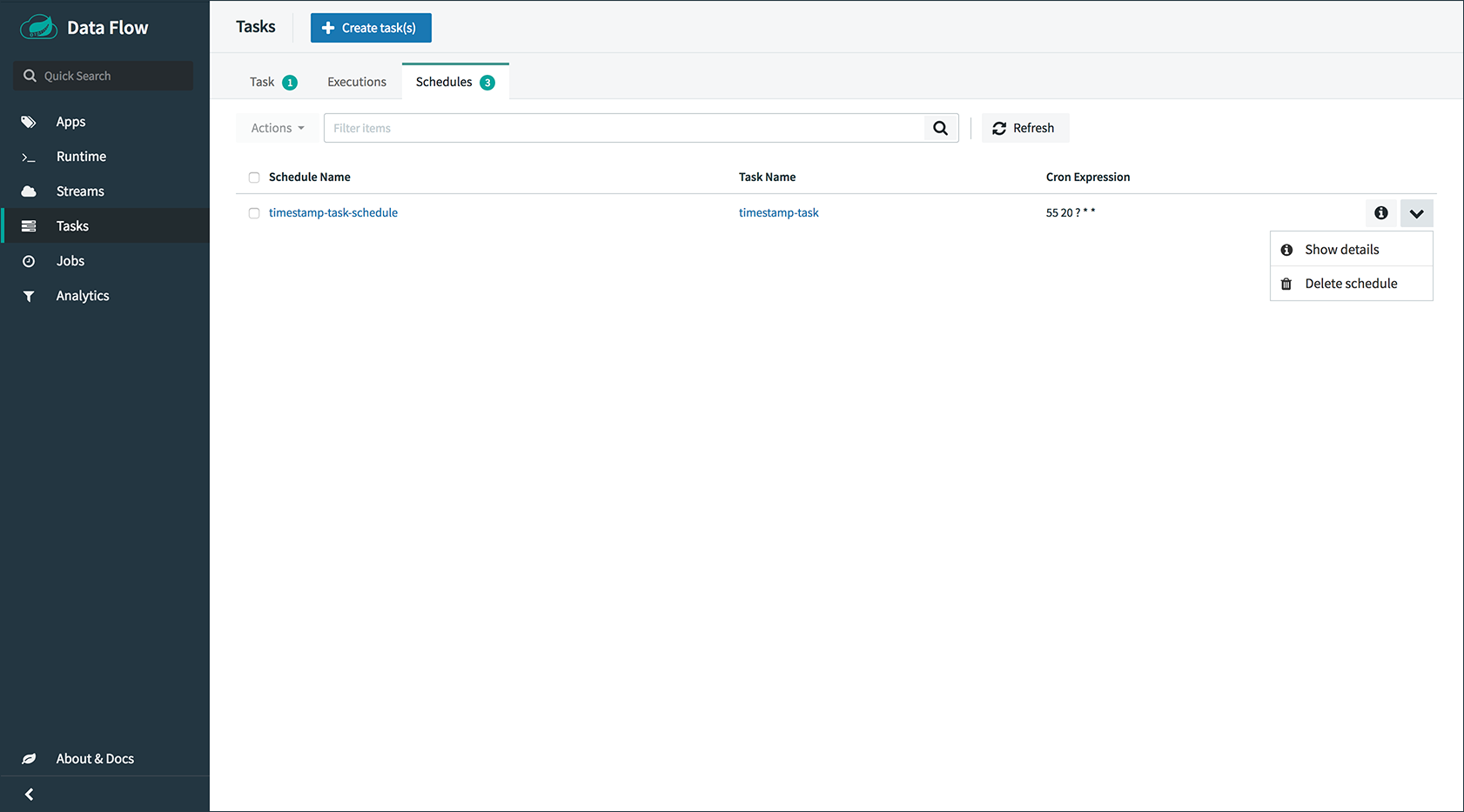

Schedules

You can enable or disable these features by setting the following boolean environment variables when launching the Data Flow server:

- SPRING_CLOUD_DATAFLOW_FEATURES_STREAMS_ENABLED
- SPRING_CLOUD_DATAFLOW_FEATURES_TASKS_ENABLED
- SPRING_CLOUD_DATAFLOW_FEATURES_ANALYTICS_ENABLED
- SPRING_CLOUD_DATAFLOW_FEATURES_SCHEDULES_ENABLED

By default, all the features are enabled.
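The "enabled unless explicitly disabled" behavior can be modeled with a small sketch. This is not how the server binds its configuration (Spring Boot handles that internally). The class and method names are hypothetical, and the example only shows how the four environment variables map to feature flags with a default of true.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class FeatureToggleSketch {

    private static final String PREFIX = "SPRING_CLOUD_DATAFLOW_FEATURES_";
    private static final String[] FEATURES = {"STREAMS", "TASKS", "ANALYTICS", "SCHEDULES"};

    /** Resolves each feature flag from the given environment, defaulting to enabled when unset. */
    static Map<String, Boolean> resolve(Map<String, String> env) {
        Map<String, Boolean> enabled = new LinkedHashMap<>();
        for (String feature : FEATURES) {
            String raw = env.get(PREFIX + feature + "_ENABLED");
            enabled.put(feature, raw == null || Boolean.parseBoolean(raw));
        }
        return enabled;
    }

    public static void main(String[] args) {
        // Prints the flags as they would resolve for the current process environment.
        System.out.println(resolve(System.getenv()));
    }
}
```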

Because the analytics feature is enabled by default and the default implementation of analytics is based on Redis, the Data Flow server expects a valid Redis store to be available as the analytics repository. This also means that the Data Flow server’s health depends on the availability of the Redis store. If you do not want to enable HTTP endpoints to read analytics data written to Redis, you can disable the analytics feature by setting the SPRING_CLOUD_DATAFLOW_FEATURES_ANALYTICS_ENABLED environment variable to false.

The /features REST endpoint provides information on the features that have been enabled and disabled.

17. General Configuration

The Spring Cloud Data Flow server for Kubernetes uses the spring-cloud-kubernetes module to process both the ConfigMap and the secrets settings. To enable the ConfigMap support, pass in an environment variable of SPRING_CLOUD_KUBERNETES_CONFIG_NAME and set it to the name of the ConfigMap. The same is true for the secrets, where the environment variable is SPRING_CLOUD_KUBERNETES_SECRETS_NAME. To use the secrets, you also need to set SPRING_CLOUD_KUBERNETES_SECRETS_ENABLE_API to true.

The following example shows a snippet from a deployment script that sets these environment variables:

```yaml
env:
- name: SPRING_CLOUD_KUBERNETES_SECRETS_ENABLE_API
  value: 'true'
- name: SPRING_CLOUD_KUBERNETES_SECRETS_NAME
  value: mysql
- name: SPRING_CLOUD_KUBERNETES_CONFIG_NAME
  value: scdf-server
```

17.1. Using ConfigMap and Secrets

You can pass configuration properties to the Data Flow Server by using Kubernetes ConfigMap and secrets.

The following example shows one possible configuration, which enables RabbitMQ, MySQL and Redis as well as basic security settings for the server:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: scdf-server
  labels:
    app: scdf-server
data:
  application.yaml: |-
    security:
      basic:
        enabled: true
        realm: Spring Cloud Data Flow
    spring:
      cloud:
        dataflow:
          security:
            authentication:
              file:
                enabled: true
                users:
                  admin: admin, ROLE_MANAGE, ROLE_VIEW
                  user: password, ROLE_VIEW, ROLE_CREATE
        deployer:
          kubernetes:
            environmentVariables: 'SPRING_RABBITMQ_HOST=${RABBITMQ_SERVICE_HOST},SPRING_RABBITMQ_PORT=${RABBITMQ_SERVICE_PORT},SPRING_REDIS_HOST=${REDIS_SERVICE_HOST},SPRING_REDIS_PORT=${REDIS_SERVICE_PORT}'
      datasource:
        url: jdbc:mysql://${MYSQL_SERVICE_HOST}:${MYSQL_SERVICE_PORT}/mysql
        username: root
        password: ${mysql-root-password}
        driverClassName: org.mariadb.jdbc.Driver
        testOnBorrow: true
        validationQuery: "SELECT 1"
      redis:
        host: ${REDIS_SERVICE_HOST}
        port: ${REDIS_SERVICE_PORT}
```

The preceding example assumes that RabbitMQ is deployed with rabbitmq as the service name. For MySQL, it assumes that the service name is mysql. For Redis, it assumes that the service name is redis. Kubernetes publishes the host and port values of these services as environment variables that we can use when configuring the apps we deploy.
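The relationship between a service name and the environment variables that Kubernetes publishes for it can be sketched as follows. Kubernetes upper-cases the service name and converts dashes to underscores before appending _SERVICE_HOST and _SERVICE_PORT. The class and method names here are hypothetical, and the JDBC URL helper simply mirrors the placeholder substitution that the datasource URL in the ConfigMap relies on.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;

final class ServiceEnvSketch {

    /** Converts a Kubernetes service name into the prefix of its environment variables. */
    static String envPrefix(String serviceName) {
        return serviceName.toUpperCase(Locale.ROOT).replace('-', '_');
    }

    /** Builds a MySQL JDBC URL from the host and port variables published for the given service. */
    static String jdbcUrl(String serviceName, String database, Map<String, String> env) {
        String prefix = envPrefix(serviceName);
        String host = env.get(prefix + "_SERVICE_HOST");
        String port = env.get(prefix + "_SERVICE_PORT");
        if (host == null || port == null) {
            throw new IllegalStateException("No service variables found for " + serviceName);
        }
        return "jdbc:mysql://" + host + ":" + port + "/" + database;
    }

    public static void main(String[] args) {
        // Example values only; in a pod these variables are set by Kubernetes.
        Map<String, String> env = new HashMap<>();
        env.put("MYSQL_SERVICE_HOST", "10.0.0.5");
        env.put("MYSQL_SERVICE_PORT", "3306");
        System.out.println(jdbcUrl("mysql", "mysql", env));
    }
}
```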

We prefer to provide the MySQL connection password in a Secrets file, as the following example shows:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: mysql
  labels:
    app: mysql
data:
  mysql-root-password: eW91cnBhc3N3b3Jk
```

The password is a base64-encoded value.
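If you want to produce or check such a value programmatically, the following sketch shows the encoding with the JDK's Base64 class. The class name is hypothetical. The sample value in the Secret above decodes to the placeholder yourpassword, so replace it with your own encoded password.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

final class SecretValueSketch {

    /** Encodes a plain-text value the way a Kubernetes Secret's data field expects it. */
    static String encode(String plainText) {
        return Base64.getEncoder().encodeToString(plainText.getBytes(StandardCharsets.UTF_8));
    }

    /** Decodes a value taken from a Secret's data field. */
    static String decode(String encoded) {
        return new String(Base64.getDecoder().decode(encoded), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        System.out.println(encode("yourpassword")); // prints eW91cnBhc3N3b3Jk
    }
}
```

Keep in mind that base64 is an encoding, not encryption, so anyone who can read the Secret can recover the password.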

18. Database Configuration

Spring Cloud Data Flow provides schemas for H2, HSQLDB, MySQL, Oracle, PostgreSQL, DB2, and SQL Server. The appropriate schema is automatically created when the server starts, provided that the right database driver is on the classpath and appropriate credentials are configured.

The JDBC drivers for MySQL (via MariaDB driver), HSQLDB, PostgreSQL, and embedded H2 are available out of the box. If you use any other database, you need to put the corresponding JDBC driver jar on the classpath of the server.

For instance, if you use MySQL, then in addition to providing the password in the secrets file, you could provide the following properties in the ConfigMap:

```yaml
data:
  application.yaml: |-
    spring:
      datasource:
        url: jdbc:mysql://${MYSQL_SERVICE_HOST}:${MYSQL_SERVICE_PORT}/mysql
        username: root
        password: ${mysql-root-password}
        driverClassName: org.mariadb.jdbc.Driver
```

For PostgreSQL, you could use the following configuration:

```yaml
data:
  application.yaml: |-
    spring:
      datasource:
        url: jdbc:postgresql://${PGSQL_SERVICE_HOST}:${PGSQL_SERVICE_PORT}/database
        username: root
        password: ${postgres-password}
        driverClassName: org.postgresql.Driver
```

For HSQLDB, you could use the following configuration:

```yaml
data:
  application.yaml: |-
    spring:
      datasource:
        url: jdbc:hsqldb:hsql://${HSQLDB_SERVICE_HOST}:${HSQLDB_SERVICE_PORT}/database
        username: sa
        driverClassName: org.hsqldb.jdbc.JDBCDriver
```

You can find migration scripts for specific database types in the spring-cloud-task repo.

19. Security

This section covers how to secure the server application by using the basic configuration settings in the sample configuration files used in Getting Started. See the core security documentation for more detailed coverage of the security configuration options for the Spring Cloud Data Flow server and shell.

When using RabbitMQ as the transport, the security settings are located in the src/kubernetes/server/server-config-rabbit.yaml file. For Kafka, the settings are located in the src/kubernetes/server/server-config-kafka.yaml file. The following example shows a security configuration in YAML:

```yaml
security:
  basic:
    enabled: true                                      # (1)
    realm: Spring Cloud Data Flow                      # (2)
spring:
  cloud:
    dataflow:
      security:
        authentication:
          file:
            enabled: true
            users:
              admin: admin, ROLE_MANAGE, ROLE_VIEW     # (3)
              user: password, ROLE_VIEW, ROLE_CREATE   # (4)
```

| Callout | Description |
|---|---|
| 1 | Enable security |
| 2 | Optionally set the realm (defaults to Spring) |
| 3 | Create an 'admin' user with its password set to 'admin'. It can view applications, streams, and tasks and can also view management endpoints. |
| 4 | Create a 'user' user with its password set to 'password'. It can register applications, create streams and tasks, and view them. |

Feel free to change the user names and passwords to suit your needs and to move the definition of user passwords to a Kubernetes secret.

20. Monitoring and Management

We recommend using the kubectl command for troubleshooting streams and tasks.

You can list all artifacts and resources in use with the following command:

```shell
kubectl get all,cm,secrets,pvc
```

You can list all resources used by a specific application or service by using a label to select resources. The following command lists all resources used by the mysql service:

```shell
kubectl get all -l app=mysql
```

You can get the logs for a specific pod by issuing the following command:

```shell
kubectl logs <pod-name>
```

If the pod is continuously getting restarted, you can add -p as an option to see the previous log, as follows:

```shell
kubectl logs -p <pod-name>
```

You can also tail or follow a log by adding an -f option, as follows:

```shell
kubectl logs -f <pod-name>
```

The describe command is useful for troubleshooting issues such as a container that has a fatal error when starting up, as the following example shows:

```shell
kubectl describe pod ticktock-log-0-qnk72
```

20.1. Inspecting Server Logs

You can access the server logs by using the following command:

```shell
kubectl get pod -l app=scdf-server
kubectl logs <scdf-server-pod-name>
```

20.2. Streams

Stream applications are deployed with the stream name followed by the name of the application. For processors and sinks, an instance index is also appended.

To see all the pods that are deployed by the Spring Cloud Data Flow server, you can specify the role=spring-app label, as follows:

```shell
kubectl get pod -l role=spring-app
```

To see details for a specific application deployment, you can use the following command:

```shell
kubectl describe pod <app-pod-name>
```

To view the application logs, you can use the following command:

```shell
kubectl logs <app-pod-name>
```

If you would like to tail a log, you can use the following command:

```shell
kubectl logs -f <app-pod-name>
```

20.3. Tasks

Tasks are launched as bare pods without a replication controller. The pods remain after the tasks complete, which gives you an opportunity to review the logs.

To see all pods for a specific task, use the following command:

```shell
kubectl get pod -l task-name=<task-name>
```

To review the task logs, use the following command:

```shell
kubectl logs <task-pod-name>
```

You have two options to delete completed pods. You can delete them manually once they are no longer needed, or you can use the Data Flow shell task execution cleanup command to remove the completed pod for a task execution.

To delete the task pod manually, use the following command:

```shell
kubectl delete pod <task-pod-name>
```

To use the task execution cleanup command, you must first determine the ID for the task execution. To do so, use the task execution list command, as the following example (with output) shows:

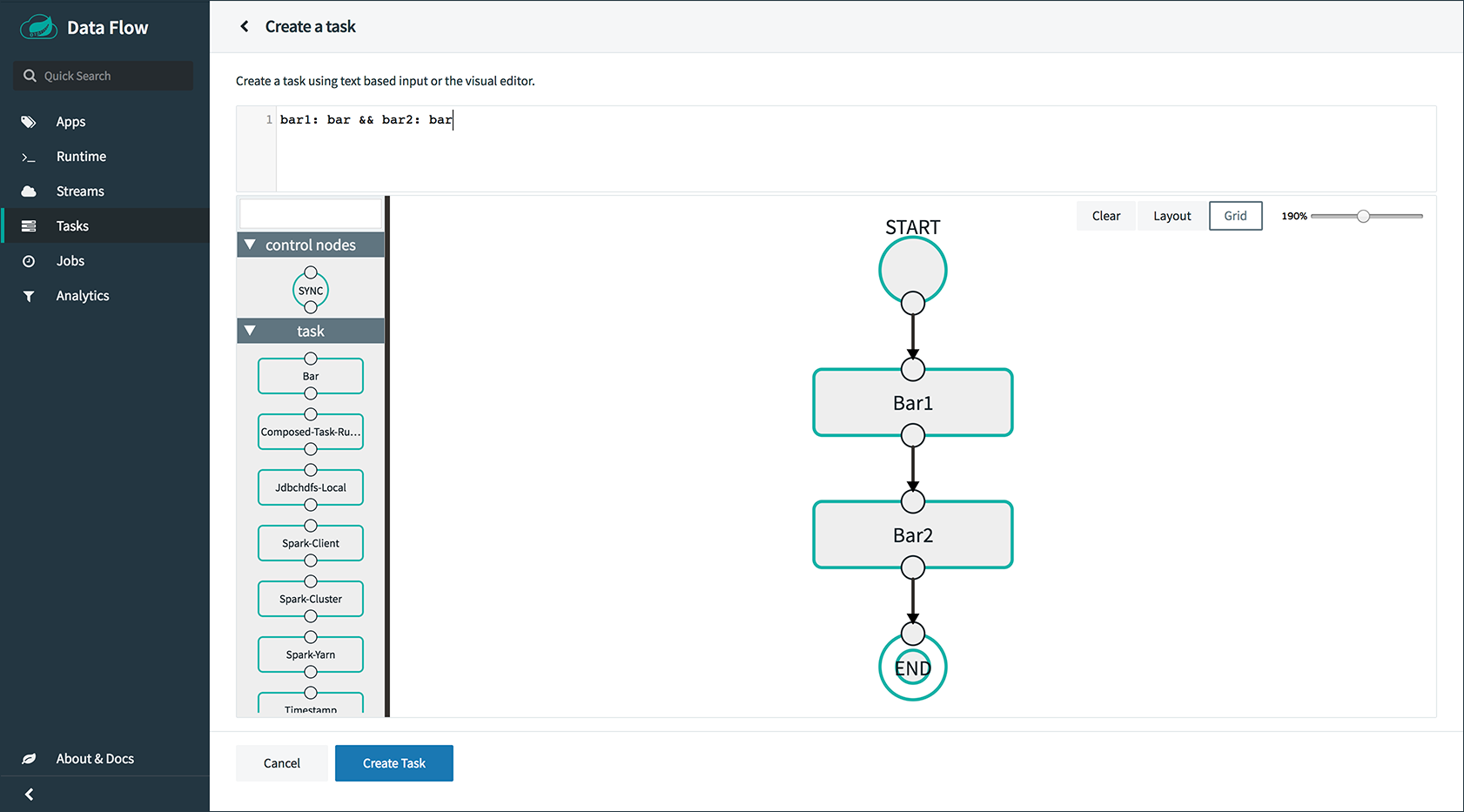

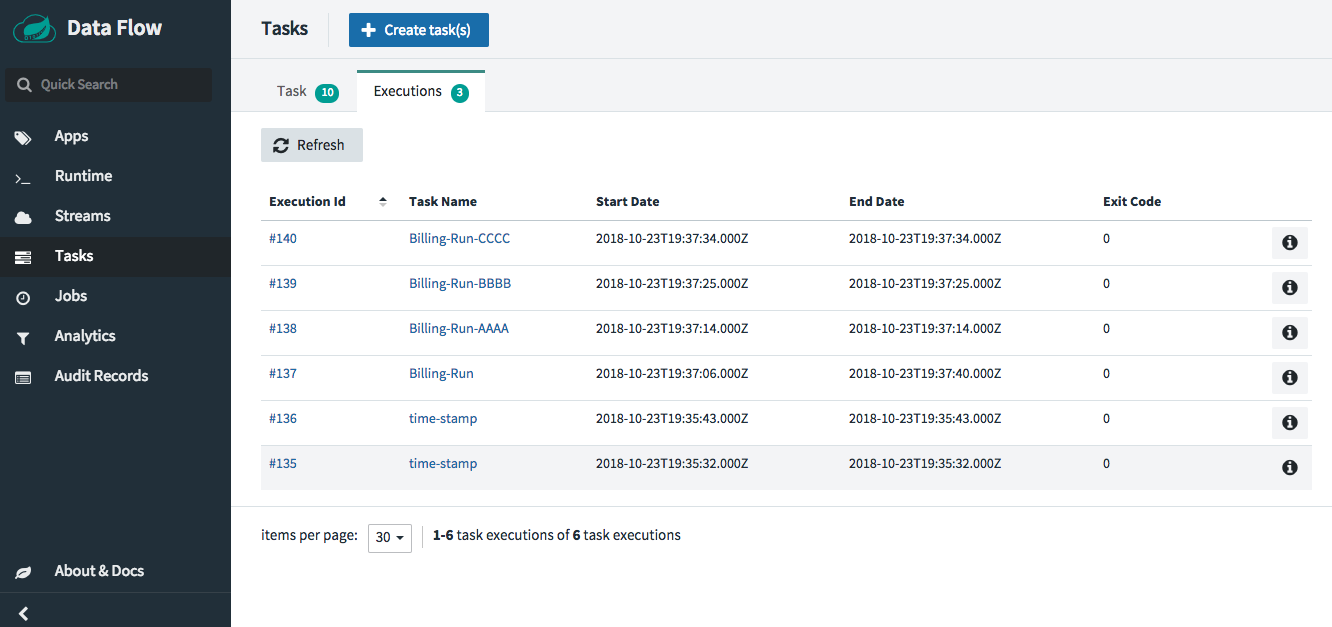

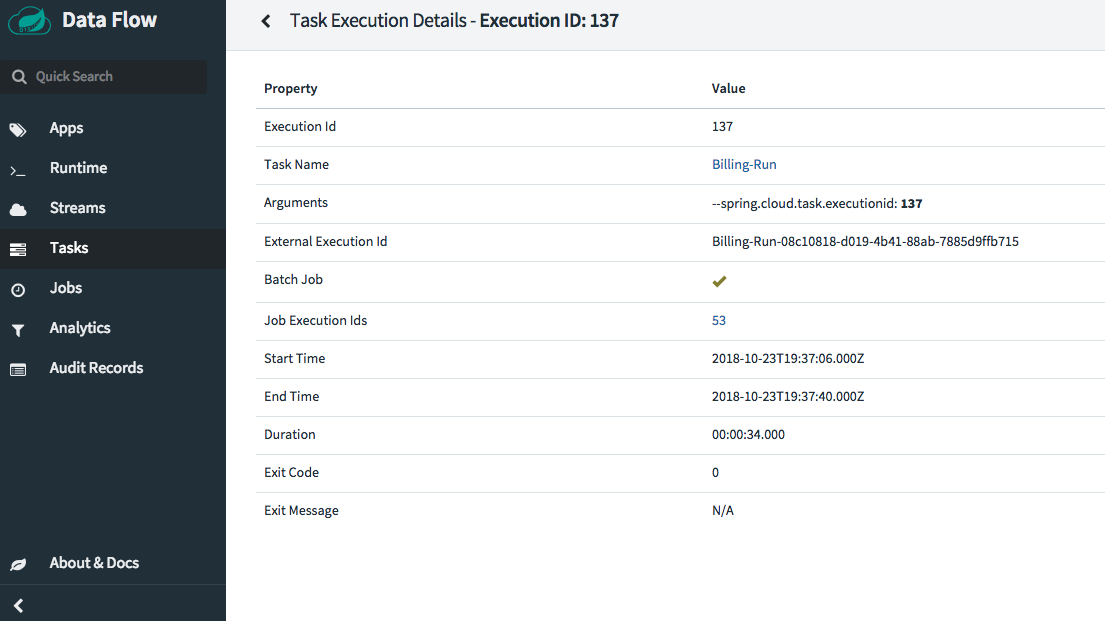

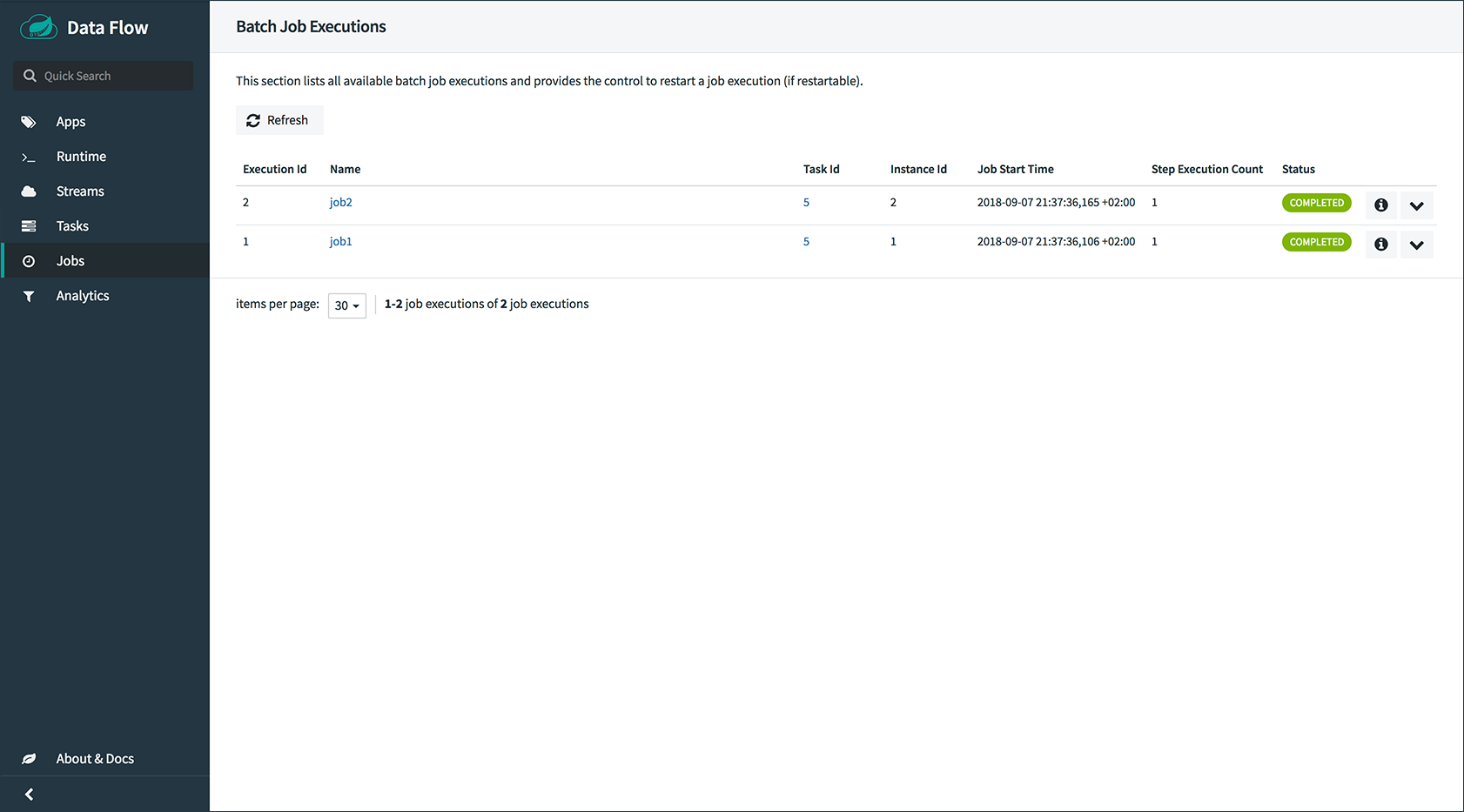

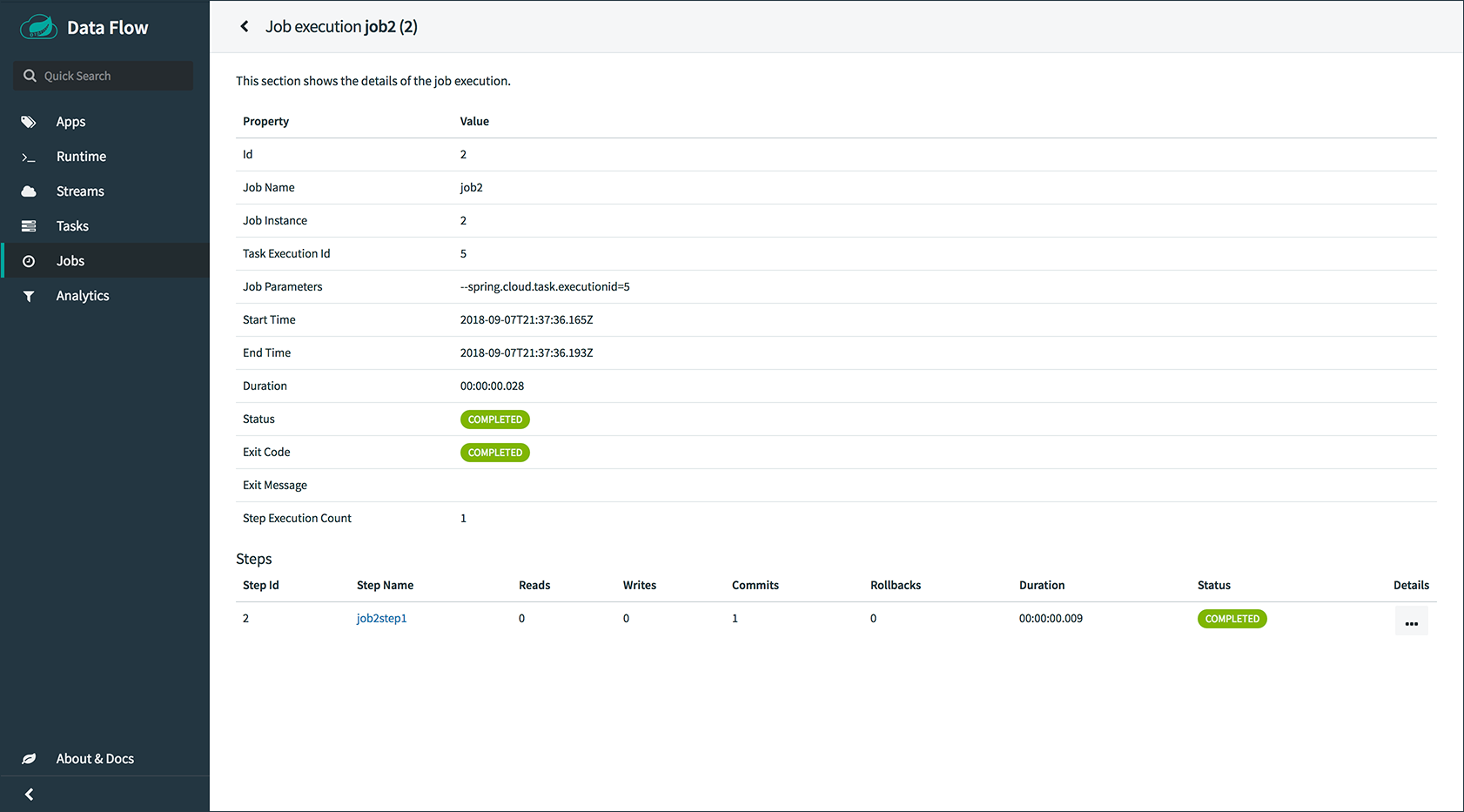

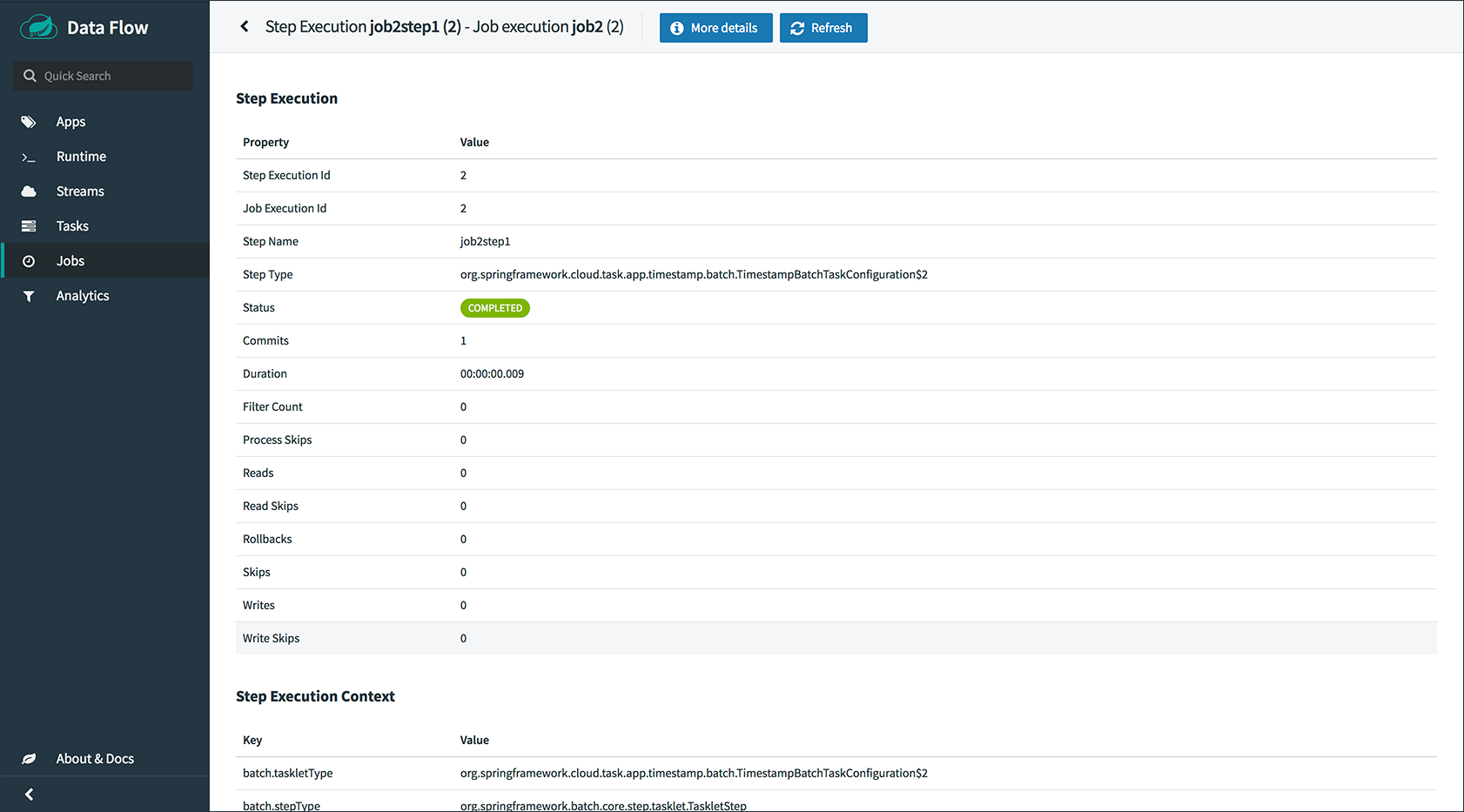

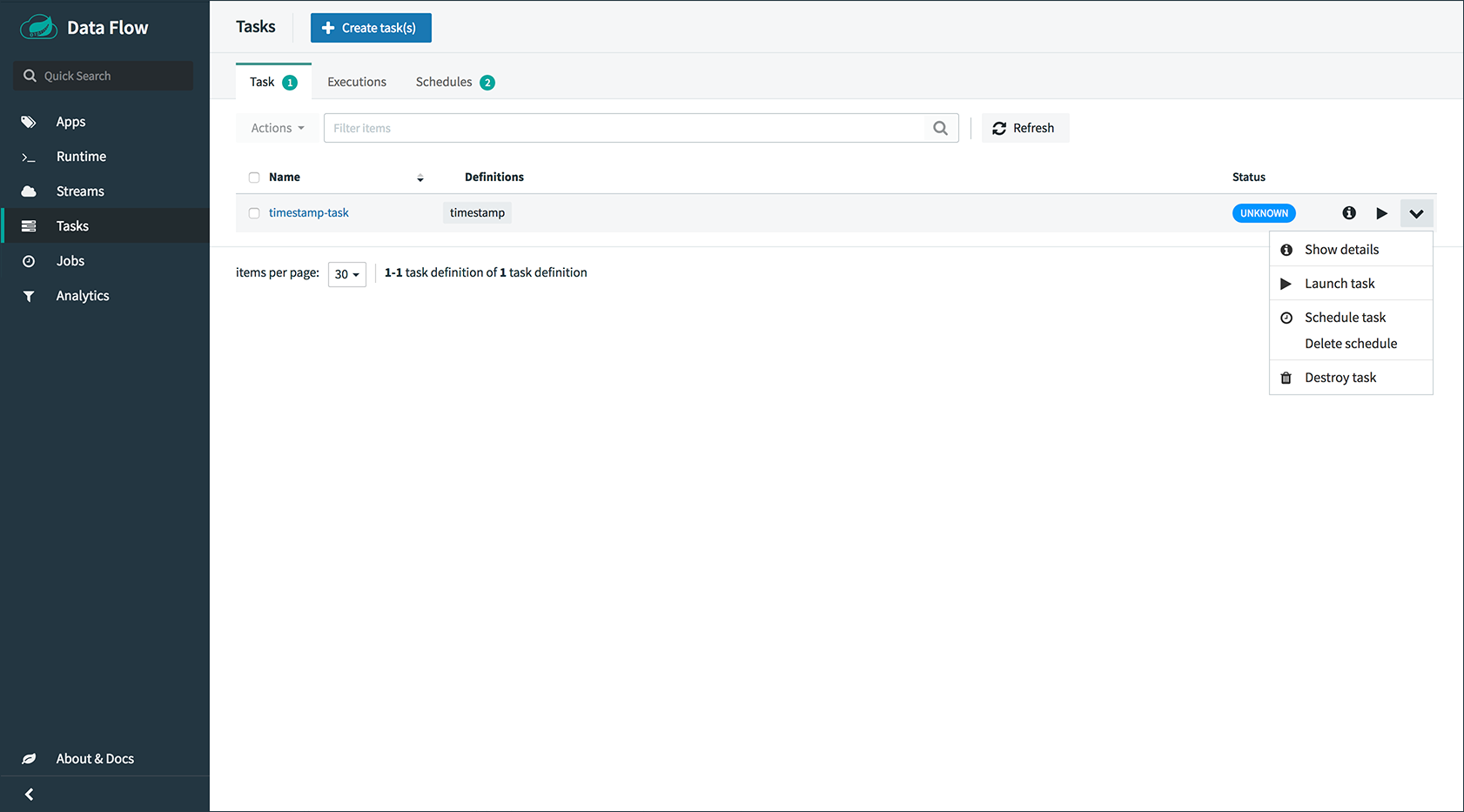

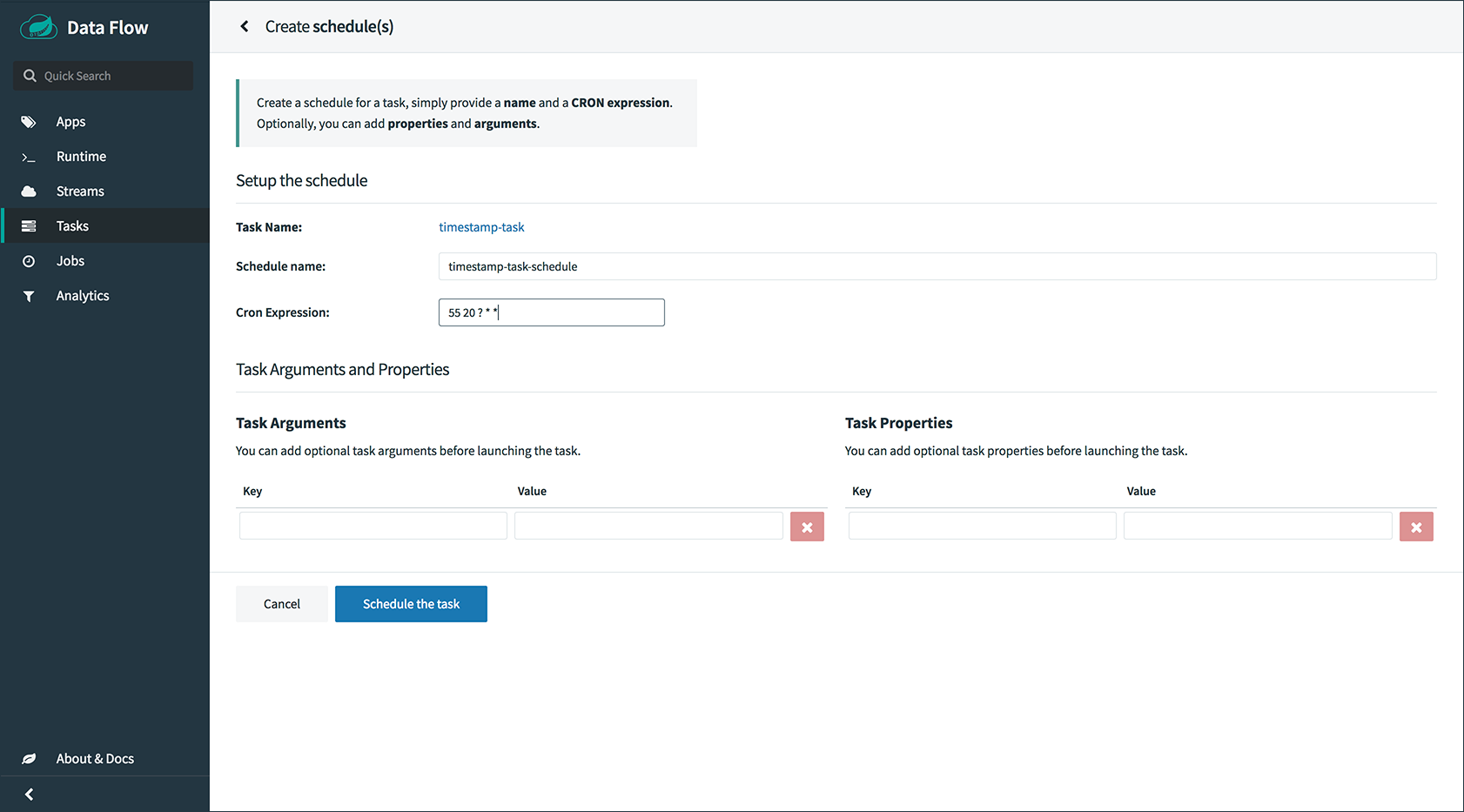

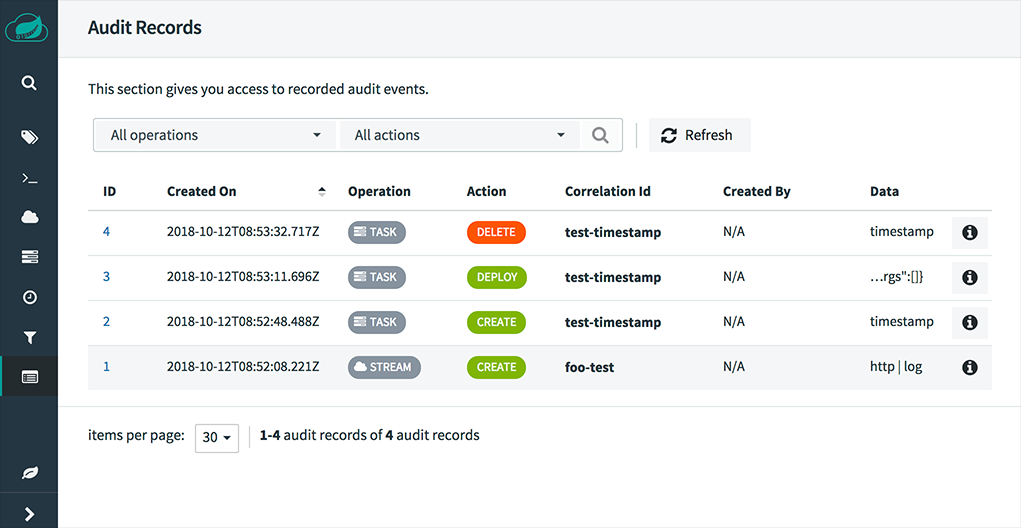

dataflow:>task execution list