Mark Fisher, Dave Syer, Oleg Zhurakousky, Anshul Mehra

3.0.8.RELEASE

Introduction

Spring Cloud Function is a project with the following high-level goals:

- Promote the implementation of business logic via functions.

- Decouple the development lifecycle of business logic from any specific runtime target so that the same code can run as a web endpoint, a stream processor, or a task.

- Support a uniform programming model across serverless providers, as well as the ability to run standalone (locally or in a PaaS).

- Enable Spring Boot features (auto-configuration, dependency injection, metrics) on serverless providers.

It abstracts away all of the transport details and infrastructure, allowing the developer to keep all the familiar tools and processes, and focus firmly on business logic.

Here’s a complete, executable, testable Spring Boot application (implementing a simple string manipulation):

@SpringBootApplication

public class Application {

@Bean

public Function<Flux<String>, Flux<String>> uppercase() {

return flux -> flux.map(value -> value.toUpperCase());

}

public static void main(String[] args) {

SpringApplication.run(Application.class, args);

}

}

It’s just a Spring Boot application, so it can be built, run and tested, locally and in a CI build, the same way as any other Spring Boot application. The Function is from java.util.function and Flux is a Reactive Streams Publisher from Project Reactor. The function can be accessed over HTTP or messaging.

In a nutshell, Spring Cloud Function provides the following features:

- Wrappers for @Beans of type Function, Consumer and Supplier, exposing them to the outside world as HTTP endpoints and/or message stream listeners/publishers with RabbitMQ, Kafka etc.

- Choice of programming styles - reactive, imperative or hybrid.

- Function composition and adaptation (e.g., composing imperative functions with reactive).

- Support for reactive functions with multiple inputs and outputs, allowing merging, joining and other complex streaming operations to be handled by functions.

- Transparent type conversion of inputs and outputs.

- Packaging functions for deployments, specific to the target platform (e.g., Project Riff, AWS Lambda and more).

- Adapters to expose functions to the outside world as HTTP endpoints etc.

- Deploying a JAR file containing such an application context with an isolated classloader, so that you can pack them together in a single JVM.

- Compiling strings which are Java function bodies into bytecode, and then turning them into @Beans that can be wrapped as above.

- Adapters for AWS Lambda, Azure, Google Cloud Functions, Apache OpenWhisk and possibly other "serverless" service providers.

| Spring Cloud is released under the non-restrictive Apache 2.0 license. If you would like to contribute to this section of the documentation or if you find an error, please find the source code and issue trackers in the project at GitHub. |

Getting Started

Build from the command line (and "install" the samples):

$ ./mvnw clean install

(If you like to YOLO add -DskipTests.)

Run one of the samples, e.g.

$ java -jar spring-cloud-function-samples/function-sample/target/*.jar

This runs the app and exposes its functions over HTTP, so you can convert a string to uppercase, like this:

$ curl -H "Content-Type: text/plain" localhost:8080/uppercase -d Hello
HELLO

You can convert multiple strings (a Flux<String>) by separating them with new lines:

$ curl -H "Content-Type: text/plain" localhost:8080/uppercase -d 'Hello
> World'
HELLOWORLD

(You can use ^Q^J, that is Ctrl+Q followed by Ctrl+J, in a terminal to insert a new line in a literal string like that.)

Programming model

Function Catalog and Flexible Function Signatures

One of the main features of Spring Cloud Function is to adapt and support a range of type signatures for user-defined functions,

while providing a consistent execution model.

That’s why all user defined functions are transformed into a canonical representation by FunctionCatalog.

While users don’t normally have to care about the FunctionCatalog at all, it is useful to know what

kind of functions are supported in user code.

It is also important to understand that Spring Cloud Function provides first-class support for the reactive API provided by Project Reactor, allowing reactive primitives such as Mono and Flux to be used as types in user-defined functions. This gives you greater flexibility when choosing a programming model for your function implementation.

The reactive programming model also enables functional support for features that would otherwise be difficult or impossible to implement using an imperative programming style. For more on this, please read the Function Arity section.

Java 8 function support

Spring Cloud Function embraces and builds on top of the 3 core functional interfaces defined by Java and available to us since Java 8.

-

Supplier<O>

-

Function<I, O>

-

Consumer<I>

Supplier

Supplier can be reactive (Supplier<Flux<T>>) or imperative (Supplier<T>). From the invocation standpoint this should make no difference to the implementor of such a Supplier. However, when used within frameworks (e.g., Spring Cloud Stream), Suppliers, especially reactive ones, are often used to represent the source of a stream. They are therefore invoked once to get the stream (e.g., Flux) to which consumers can subscribe. In other words, such suppliers represent the equivalent of an infinite stream.

However, the same reactive suppliers can also represent finite streams (e.g., a result set from polled JDBC data). In those cases such reactive suppliers must be hooked up to some polling mechanism of the underlying framework.

To assist with that Spring Cloud Function provides a marker annotation

org.springframework.cloud.function.context.PollableSupplier to signal that such supplier produces a

finite stream and may need to be polled again. That said, it is important to understand that Spring Cloud Function itself

provides no behavior for this annotation.

In addition, the PollableSupplier annotation exposes a splittable attribute to signal that the produced stream needs to be split (see Splitter EIP).

Here is an example:

@PollableSupplier(splittable = true)

public Supplier<Flux<String>> someSupplier() {

return () -> {

String v1 = String.valueOf(System.nanoTime());

String v2 = String.valueOf(System.nanoTime());

String v3 = String.valueOf(System.nanoTime());

return Flux.just(v1, v2, v3);

};

}

Function

Function can also be written in an imperative or reactive way. Unlike Supplier and Consumer, there are no special considerations for the implementor other than understanding that, when used within frameworks such as Spring Cloud Stream and others, a reactive function is invoked only once to pass a reference to the stream (Flux or Mono), while an imperative function is invoked once per event.
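The difference in invocation count can be illustrated without any framework. The following is only a sketch under stated assumptions: java.util.stream.Stream stands in for a reactive type such as Flux, and the class and method names (InvocationStyles, imperativeInvocations, streamInvocations) are hypothetical. It counts how often each style of function is applied for the same list of events.

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical illustration; Stream is an assumed stand-in for a reactive type such as Flux.
class InvocationStyles {

    // Applies an imperative function to every event and returns how often it was invoked.
    static int imperativeInvocations(List<String> events) {
        AtomicInteger calls = new AtomicInteger();
        Function<String, String> uppercase = value -> {
            calls.incrementAndGet();
            return value.toUpperCase();
        };
        for (String event : events) {
            uppercase.apply(event); // one invocation per event
        }
        return calls.get();
    }

    // Hands the whole stream to a stream-style function and returns how often it was invoked.
    static int streamInvocations(List<String> events) {
        AtomicInteger calls = new AtomicInteger();
        Function<Stream<String>, Stream<String>> uppercase = stream -> {
            calls.incrementAndGet();
            return stream.map(String::toUpperCase);
        };
        // A single invocation receives the stream; per-element work happens inside the pipeline.
        uppercase.apply(events.stream()).collect(Collectors.toList());
        return calls.get();
    }

    public static void main(String[] args) {
        List<String> events = Arrays.asList("a", "b", "c");
        System.out.println("imperative: " + imperativeInvocations(events));
        System.out.println("stream-style: " + streamInvocations(events));
    }
}
```

For three events the imperative function is applied three times, while the stream-style function is applied once and does its per-element work inside the returned pipeline.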

Consumer

Consumer is a little bit special because it has a void return type,

which implies blocking, at least potentially. Most likely you will not

need to write Consumer<Flux<?>>, but if you do need to do that,

remember to subscribe to the input flux.

Function Composition

Function Composition is a feature that allows one to compose several functions into one. The core support is based on the Function.andThen(..) method available since Java 8. On top of that, we provide a few additional features.
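As a reminder of what the JDK itself offers, here is a minimal sketch of composition with Function.andThen. The class name AndThenComposition and the two sample functions are assumptions made for illustration only.

```java
import java.util.function.Function;

// Plain JDK composition; no Spring Cloud Function classes are involved.
class AndThenComposition {

    static final Function<String, String> UPPERCASE = value -> value.toUpperCase();

    static final Function<String, String> REVERSE =
            value -> new StringBuilder(value).reverse().toString();

    // The composed function applies UPPERCASE first and REVERSE second.
    static Function<String, String> uppercaseThenReverse() {
        return UPPERCASE.andThen(REVERSE);
    }

    public static void main(String[] args) {
        System.out.println(uppercaseThenReverse().apply("hello")); // prints OLLEH
    }
}
```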

Declarative Function Composition

This feature allows you to provide composition instruction in a declarative way using | (pipe) or , (comma) delimiter

when providing spring.cloud.function.definition property.

For example

--spring.cloud.function.definition=uppercase|reverse

Here we effectively provided a definition of a single function which itself is a composition of

function uppercase and function reverse. In fact that is one of the reasons why the property name is definition and not name,

since the definition of a function can be a composition of several named functions.

And as mentioned you can use , instead of pipe (such as …definition=uppercase,reverse).
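To make the idea of a definition more concrete, the following sketch resolves a definition string against a map of named functions. It is not the framework's resolution logic: the class DefinitionSketch, its methods and the use of a plain Map as a stand-in for the function catalog are all assumptions.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical sketch; a plain Map stands in for the function catalog.
class DefinitionSketch {

    // Two named sample functions, mirroring the names used in the example definition.
    static Map<String, Function<String, String>> sampleRegistry() {
        Map<String, Function<String, String>> registry = new HashMap<>();
        registry.put("uppercase", value -> value.toUpperCase());
        registry.put("reverse", value -> new StringBuilder(value).reverse().toString());
        return registry;
    }

    // Splits the definition on '|' or ',' and chains the named functions left to right.
    static Function<String, String> resolve(String definition,
            Map<String, Function<String, String>> registry) {
        Function<String, String> composed = Function.identity();
        for (String name : definition.split("[|,]")) {
            Function<String, String> next = registry.get(name.trim());
            if (next == null) {
                throw new IllegalArgumentException("No function named '" + name.trim() + "'");
            }
            composed = composed.andThen(next);
        }
        return composed;
    }
}
```

With this sketch, resolve("uppercase|reverse", sampleRegistry()) and resolve("uppercase,reverse", sampleRegistry()) yield equivalent functions.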

Composing non-Functions

Spring Cloud Function also supports composing Supplier with Consumer or Function as well as Function with Consumer.

What’s important here is to understand the end product of such definitions.

Composing Supplier with Function still results in Supplier while composing Supplier with Consumer will effectively render Runnable.

Following the same logic composing Function with Consumer will result in Consumer.

Of course, you cannot compose combinations that do not fit together, such as Consumer with Function or Consumer with Supplier.
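The end products listed above can be sketched with plain JDK types. The helper class NonFunctionComposition and its method names are hypothetical and are not part of Spring Cloud Function; they only show why each combination yields the stated type.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.Supplier;

// Hypothetical helpers showing the result type of each supported combination.
class NonFunctionComposition {

    // Supplier + Function still produces values, so the result is a Supplier.
    static <T, R> Supplier<R> supplierThenFunction(Supplier<T> supplier, Function<T, R> function) {
        return () -> function.apply(supplier.get());
    }

    // Supplier + Consumer has neither input nor output, so the result is a Runnable.
    static <T> Runnable supplierThenConsumer(Supplier<T> supplier, Consumer<T> consumer) {
        return () -> consumer.accept(supplier.get());
    }

    // Function + Consumer accepts input but returns nothing, so the result is a Consumer.
    static <T, R> Consumer<T> functionThenConsumer(Function<T, R> function, Consumer<R> consumer) {
        return value -> consumer.accept(function.apply(value));
    }

    public static void main(String[] args) {
        Supplier<String> words = () -> "hello";
        Function<String, String> uppercase = value -> value.toUpperCase();
        List<String> sink = new ArrayList<>();
        Consumer<String> collect = sink::add;

        System.out.println(supplierThenFunction(words, uppercase).get());
        supplierThenConsumer(words, collect).run();
        functionThenConsumer(uppercase, collect).accept("world");
        System.out.println(sink);
    }
}
```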

Function Routing

Since version 2.2 Spring Cloud Function provides a routing feature that allows you to invoke a single function which acts as a router to the actual function you wish to invoke. This feature is very useful in certain FaaS environments where maintaining configurations for several functions could be cumbersome or exposing more than one function is not possible.

The RoutingFunction is registered in FunctionCatalog under the name functionRouter. For simplicity

and consistency you can also refer to RoutingFunction.FUNCTION_NAME constant.

This function has the following signature:

public class RoutingFunction implements Function<Object, Object> {

. . .

}

The routing instructions can be communicated in several ways:

Message Headers

If the input argument is of type Message<?>, you can communicate routing instruction by setting one of

spring.cloud.function.definition or spring.cloud.function.routing-expression Message headers.

For more static cases you can use spring.cloud.function.definition header which allows you to provide

the name of a single function (e.g., …definition=foo) or a composition instruction (e.g., …definition=foo|bar|baz).

For more dynamic cases you can use spring.cloud.function.routing-expression header which allows

you to use Spring Expression Language (SpEL) and provide SpEL expression that should resolve

into definition of a function (as described above).

The SpEL evaluation context’s root object is the actual input argument, so in the case of Message<?> you can construct an expression that has access to both payload and headers (e.g., spring.cloud.function.routing-expression=headers.function_name).


In specific execution environments/models the adapters are responsible to translate and communicate

spring.cloud.function.definition and/or spring.cloud.function.routing-expression via Message header.

For example, when using spring-cloud-function-web you can provide spring.cloud.function.definition as an HTTP

header and the framework will propagate it as well as other HTTP headers as Message headers.
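The header-based lookup described in this section can be sketched in plain Java. This is a simplified stand-in, not the actual RoutingFunction: a Map<String, String> replaces Message headers, the class HeaderRoutingSketch and its methods are hypothetical, and SpEL evaluation is left out entirely.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical stand-in for header-based routing; it uses neither RoutingFunction nor Message.
class HeaderRoutingSketch {

    // The header name is taken from the text above.
    static final String DEFINITION_HEADER = "spring.cloud.function.definition";

    private final Map<String, Function<String, String>> functions = new HashMap<>();

    // Registers a named function and returns this router to allow chained calls.
    HeaderRoutingSketch register(String name, Function<String, String> function) {
        functions.put(name, function);
        return this;
    }

    // Looks up the function named by the header and applies it to the payload.
    String route(Map<String, String> headers, String payload) {
        String name = headers.get(DEFINITION_HEADER);
        Function<String, String> target = functions.get(name);
        if (target == null) {
            throw new IllegalStateException("No function registered for header value: " + name);
        }
        return target.apply(payload);
    }
}
```

A caller chooses the target per request by changing only the header value, which is the property that makes a single routing entry point convenient.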

Application Properties

Routing instruction can also be communicated via spring.cloud.function.definition

or spring.cloud.function.routing-expression as application properties. The rules described in the

previous section apply here as well. The only difference is you provide these instructions as

application properties (e.g., --spring.cloud.function.definition=foo).

| When dealing with reactive inputs (e.g., Publisher), routing instructions must only be provided via Function properties. This is due to the nature of the reactive functions which are invoked only once to pass a Publisher and the rest is handled by the reactor, hence we can not access and/or rely on the routing instructions communicated via individual values (e.g., Message). |

Function Arity

There are times when a stream of data needs to be categorized and organized. For example, consider a classic big-data use case of dealing with unorganized data containing, let’s say, ‘orders’ and ‘invoices’, where you want each to go into a separate data store. This is where function arity (functions with multiple inputs and outputs) support comes into play.

Let’s look at an example of such a function (full implementation details are available here),

@Bean

public Function<Flux<Integer>, Tuple2<Flux<String>, Flux<String>>> organise() {

return flux -> ...;

}

Given that Project Reactor is a core dependency of Spring Cloud Function, we are using its Tuple library. Tuples give us a unique advantage by communicating both cardinality and type information. Both are extremely important in the context of Spring Cloud Stream. Cardinality lets us know how many input and output bindings need to be created and bound to the corresponding inputs and outputs of a function. Awareness of the type information ensures proper type conversion.

Also, this is where the ‘index’ part of the naming convention for binding

names comes into play, since, in this function, the two output binding

names are organise-out-0 and organise-out-1.
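The idea of one input feeding two indexed outputs can be sketched without Reactor. The following is a non-reactive stand-in under loud assumptions: lists replace Flux, a two-element list replaces Tuple2, the class OrganiseSketch is hypothetical, and the even/odd rule is an arbitrary choice for illustration rather than the implementation referenced above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Function;

// Hypothetical, non-reactive stand-in: lists replace Flux and a two-element list replaces Tuple2.
class OrganiseSketch {

    // Output index 0 collects even numbers and index 1 collects odd numbers (an assumed rule).
    static Function<List<Integer>, List<List<String>>> organise() {
        return numbers -> {
            List<String> even = new ArrayList<>();
            List<String> odd = new ArrayList<>();
            for (Integer number : numbers) {
                (number % 2 == 0 ? even : odd).add("value: " + number);
            }
            return Arrays.asList(even, odd);
        };
    }
}
```

In this sketch the positions 0 and 1 of the returned list play the role that the indexes in organise-out-0 and organise-out-1 play for output bindings.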

IMPORTANT: At the moment, function arity is only supported for reactive functions

(Function<TupleN<Flux<?>…>, TupleN<Flux<?>…>>) centered on Complex event processing

where evaluation and computation on confluence of events typically requires view into a

stream of events rather than single event.


Kotlin Lambda support

We also provide support for Kotlin lambdas (since v2.0). Consider the following:

@Bean

open fun kotlinSupplier(): () -> String {

return { "Hello from Kotlin" }

}

@Bean

open fun kotlinFunction(): (String) -> String {

return { it.toUpperCase() }

}

@Bean

open fun kotlinConsumer(): (String) -> Unit {

return { println(it) }

}

The above represents Kotlin lambdas configured as Spring beans. The signature of each maps to a Java equivalent of Supplier, Function and Consumer, and is therefore a signature supported and recognized by the framework.

While mechanics of Kotlin-to-Java mapping are outside of the scope of this documentation, it is important to understand that the

same rules for signature transformation outlined in "Java 8 function support" section are applied here as well.

To enable Kotlin support all you need is to add spring-cloud-function-kotlin module to your classpath which contains the appropriate

autoconfiguration and supporting classes.

Function Component Scan

Spring Cloud Function will scan for implementations of Function,

Consumer and Supplier in a package called functions if it

exists. Using this feature you can write functions that have no

dependencies on Spring - not even the @Component annotation is

needed. If you want to use a different package, you can set

spring.cloud.function.scan.packages. You can also use

spring.cloud.function.scan.enabled=false to switch off the scan

completely.
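A class of the following shape is the kind of candidate this scan is described as picking up. It is only a sketch: the class name Greeter is hypothetical, and the package declaration is shown as a comment so that the snippet compiles on its own.

```java
import java.util.function.Function;

// In a real project this class would live in the scanned package,
// for example with `package functions;` as the first line of the file.
// Note that nothing from Spring is imported or referenced.
class Greeter implements Function<String, String> {

    @Override
    public String apply(String name) {
        return "Hello " + name;
    }
}
```

Because the class depends only on java.util.function, it can be unit tested by calling apply directly.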

Standalone Web Applications

Functions can be automatically exported as HTTP endpoints.

The spring-cloud-function-web module has autoconfiguration that

activates when it is included in a Spring Boot web application (with

MVC support). There is also a spring-cloud-starter-function-web to

collect all the optional dependencies in case you just want a simple

getting started experience.

With the web configurations activated your app will have an MVC

endpoint (on "/" by default, but configurable with

spring.cloud.function.web.path) that can be used to access the

functions in the application context where function name becomes part of the URL path. The supported content types are

plain text and JSON.

| Method | Path | Request | Response | Status |
|---|---|---|---|---|
| GET | /{supplier} | - | Items from the named supplier | 200 OK |
| POST | /{consumer} | JSON object or text | Mirrors input and pushes request body into consumer | 202 Accepted |
| POST | /{consumer} | JSON array or text with new lines | Mirrors input and pushes body into consumer one by one | 202 Accepted |
| POST | /{function} | JSON object or text | The result of applying the named function | 200 OK |
| POST | /{function} | JSON array or text with new lines | The result of applying the named function | 200 OK |
| GET | /{function}/{item} | - | Convert the item into an object and return the result of applying the function | 200 OK |

As the table above shows the behaviour of the endpoint depends on the method and also the type of incoming request data. When the incoming data

is single valued, and the target function is declared as obviously single valued (i.e. not returning a collection or Flux), then the response

will also contain a single value.
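For Java clients, a POST to one of these endpoints can be built with the JDK HTTP client (Java 11 or later). The sketch below only constructs the request. The helper class FunctionRequests is hypothetical, and the base URL and function name are assumptions borrowed from the curl examples in Getting Started.

```java
import java.net.URI;
import java.net.http.HttpRequest;

// Hypothetical helper; the base URL and function name are supplied by the caller.
class FunctionRequests {

    // Builds a POST request that sends plain text to the endpoint of the named function.
    static HttpRequest postText(String baseUrl, String functionName, String body) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/" + functionName))
                .header("Content-Type", "text/plain")
                .header("Accept", "text/plain")
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }
}
```

With the application running, such a request could be sent with HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString()). Setting both Content-Type and Accept explicitly keeps content negotiation predictable.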

For multi-valued responses the client can ask for a server-sent event stream by sending "Accept: text/event-stream".

Functions and consumers that are declared with input and output in Message<?> will see the request headers on the input messages, and the output message headers will be converted to HTTP headers.

When POSTing text the response format might be different with Spring Boot 2.0 and older versions, depending on the content negotiation (provide content type and accept headers for the best results).

See Testing Functional Applications for details and an example of how to test such an application.

Function Mapping rules

If there is only a single function (consumer etc.) in the catalog, the name in the path is optional.

In other words, provided the catalog contains only the uppercase function, the following two calls are identical:

curl -H "Content-Type: text/plain" localhost:8080/uppercase -d hello

curl -H "Content-Type: text/plain" localhost:8080/ -d hello

Composite functions can be addressed using pipes or commas to separate function names (pipes are legal in URL paths, but a bit awkward to type on the command line).

For example, curl -H "Content-Type: text/plain" localhost:8080/uppercase,reverse -d hello.

For cases where there is more than a single function in the catalog, each function will be exported and mapped with the function name being part of the path (e.g., localhost:8080/uppercase).

In this scenario you can still map a specific function or function composition to the root path by providing the spring.cloud.function.definition property.

For example,

--spring.cloud.function.definition=foo|bar

The above property will compose 'foo' and 'bar' function and map the composed function to the "/" path.

Function Filtering rules

In situations where there is more than one function in the catalog, there may be a need to export only certain functions or function compositions. In that case you can use the same spring.cloud.function.definition property, listing the functions you intend to export delimited by ;.

Note that in this case nothing will be mapped to the root path, and functions that are not listed (including compositions) are not going to be exported.

For example,

--spring.cloud.function.definition=foo;bar

This will only export function foo and function bar, regardless of how many functions are available in the catalog (e.g., localhost:8080/foo).

--spring.cloud.function.definition=foo|bar;baz

This will only export function composition foo|bar and function baz, regardless of how many functions are available in the catalog (e.g., localhost:8080/foo,bar).

Standalone Streaming Applications

To send or receive messages from a broker (such as RabbitMQ or Kafka) you can leverage the spring-cloud-stream project and its integration with Spring Cloud Function.

Please refer to Spring Cloud Function section of the Spring Cloud Stream reference manual for more details and examples.

Deploying a Packaged Function

Spring Cloud Function provides a "deployer" library that allows you to launch a jar file (or exploded archive, or set of jar files) with an isolated class loader and expose the functions defined in it. This is quite a powerful tool that would allow you to, for instance, adapt a function to a range of different input-output adapters without changing the target jar file. Serverless platforms often have this kind of feature built in, so you could see it as a building block for a function invoker in such a platform (indeed the Riff Java function invoker uses this library).

The standard entry point is to add spring-cloud-function-deployer to the classpath. The deployer then kicks in and looks for configuration that tells it where to find the function jar.

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-function-deployer</artifactId>

<version>${spring.cloud.function.version}</version>

</dependency>

At a minimum the user has to provide a spring.cloud.function.location which is a URL or resource location for the archive containing the functions. It can optionally use a maven: prefix to locate the artifact via a dependency lookup (see FunctionProperties for complete details). A Spring Boot application is bootstrapped from the jar file, using the MANIFEST.MF to locate a start class, so that a standard Spring Boot fat jar works well, for example. If the target jar can be launched successfully then the result is a function registered in the main application’s FunctionCatalog. The registered function can be applied by code in the main application, even though it was created in an isolated class loader (by default).

Here is an example of deploying a JAR which contains an 'uppercase' function and invoking it.

@SpringBootApplication

public class DeployFunctionDemo {

public static void main(String[] args) {

ApplicationContext context = SpringApplication.run(DeployFunctionDemo.class,

"--spring.cloud.function.location=..../target/uppercase-0.0.1-SNAPSHOT.jar",

"--spring.cloud.function.definition=uppercase");

FunctionCatalog catalog = context.getBean(FunctionCatalog.class);

Function<String, String> function = catalog.lookup("uppercase");

System.out.println(function.apply("hello"));

}

}

Supported Packaging Scenarios

Currently Spring Cloud Function supports several packaging scenarios to give you the most flexibility when it comes to deploying functions.

Simple JAR

This packaging option implies no dependency on anything related to Spring. For example, consider that such a JAR contains the following class:

package function.example;

. . .

public class UpperCaseFunction implements Function<String, String> {

@Override

public String apply(String value) {

return value.toUpperCase();

}

}

All you need to do is specify the location and function-class properties when deploying such a package:

--spring.cloud.function.location=target/it/simplestjar/target/simplestjar-1.0.0.RELEASE.jar

--spring.cloud.function.function-class=function.example.UpperCaseFunction

It’s conceivable in some cases that you might want to package multiple functions together. For such scenarios you can use

spring.cloud.function.function-class property to list several classes delimiting them by ;.

For example,

--spring.cloud.function.function-class=function.example.UpperCaseFunction;function.example.ReverseFunction

Here we are identifying two functions to deploy, which we can now access in the function catalog by name (e.g., catalog.lookup("reverseFunction");).

For more details please reference the complete sample available here. You can also find a corresponding test in FunctionDeployerTests.
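The relationship between the function-class property and the lookup name shown above can be sketched as follows. This is a guess at the convention implied by the reverseFunction example (simple class name with a lowercase first letter), not the deployer's actual code. The class FunctionClassNames and its method are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the naming convention implied by the lookup example above.
class FunctionClassNames {

    // Splits the property value on ';' and derives a name from each simple class name.
    static List<String> catalogNames(String functionClassProperty) {
        List<String> names = new ArrayList<>();
        for (String className : functionClassProperty.split(";")) {
            String trimmed = className.trim();
            if (trimmed.isEmpty()) {
                continue; // ignore empty entries such as a trailing ';'
            }
            String simpleName = trimmed.substring(trimmed.lastIndexOf('.') + 1);
            names.add(Character.toLowerCase(simpleName.charAt(0)) + simpleName.substring(1));
        }
        return names;
    }
}
```

Under this assumed convention, the two classes from the example would be addressed as upperCaseFunction and reverseFunction. Only the latter name is confirmed by the text above.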

Spring Boot JAR

This packaging option implies there is a dependency on Spring Boot and that the JAR was generated as a Spring Boot JAR. That said, given that the deployed JAR runs in the isolated class loader, there will not be any version conflict with the Spring Boot version used by the actual deployer. For example, consider that such a JAR contains the following class (which could have some additional Spring dependencies, provided Spring/Spring Boot is on the classpath):

package function.example;

. . .

public class UpperCaseFunction implements Function<String, String> {

@Override

public String apply(String value) {

return value.toUpperCase();

}

}

As before, all you need to do is specify the location and function-class properties when deploying such a package:

--spring.cloud.function.location=target/it/simplestjar/target/simplestjar-1.0.0.RELEASE.jar

--spring.cloud.function.function-class=function.example.UpperCaseFunction

For more details please reference the complete sample available here. You can also find a corresponding test in FunctionDeployerTests.

Spring Boot Application

This packaging option implies your JAR is a complete standalone Spring Boot application with functions as managed Spring beans. As before, there is an obvious assumption that there is a dependency on Spring Boot and that the JAR was generated as a Spring Boot JAR. That said, given that the deployed JAR runs in the isolated class loader, there will not be any version conflict with the Spring Boot version used by the actual deployer. For example, consider that such a JAR contains the following class:

package function.example;

. . .

@SpringBootApplication

public class SimpleFunctionAppApplication {

public static void main(String[] args) {

SpringApplication.run(SimpleFunctionAppApplication.class, args);

}

@Bean

public Function<String, String> uppercase() {

return value -> value.toUpperCase();

}

}

Given that we’re effectively dealing with another Spring application context and that functions are Spring-managed beans, in addition to the location property we also specify the definition property instead of function-class.

--spring.cloud.function.location=target/it/bootapp/target/bootapp-1.0.0.RELEASE-exec.jar

--spring.cloud.function.definition=uppercase

For more details please reference the complete sample available here. You can also find a corresponding test in FunctionDeployerTests.

| This particular deployment option may or may not have Spring Cloud Function on its classpath. From the deployer perspective this doesn’t matter. |

Functional Bean Definitions

Spring Cloud Function supports a "functional" style of bean declarations for small apps where you need fast startup. The functional style of bean declaration was a feature of Spring Framework 5.0 with significant enhancements in 5.1.

Comparing Functional with Traditional Bean Definitions

Here’s a vanilla Spring Cloud Function application with the familiar @Configuration and @Bean declaration style:

@SpringBootApplication

public class DemoApplication {

@Bean

public Function<String, String> uppercase() {

return value -> value.toUpperCase();

}

public static void main(String[] args) {

SpringApplication.run(DemoApplication.class, args);

}

}

Now for the functional beans: the user application code can be recast into "functional" form, like this:

@SpringBootConfiguration

public class DemoApplication implements ApplicationContextInitializer<GenericApplicationContext> {

public static void main(String[] args) {

FunctionalSpringApplication.run(DemoApplication.class, args);

}

public Function<String, String> uppercase() {

return value -> value.toUpperCase();

}

@Override

public void initialize(GenericApplicationContext context) {

context.registerBean("demo", FunctionRegistration.class,

() -> new FunctionRegistration<>(uppercase())

.type(FunctionType.from(String.class).to(String.class)));

}

}

The main differences are:

- The main class is an ApplicationContextInitializer.

- The @Bean methods have been converted to calls to context.registerBean().

- The @SpringBootApplication has been replaced with @SpringBootConfiguration to signify that we are not enabling Spring Boot autoconfiguration, and yet still marking the class as an "entry point".

- The SpringApplication from Spring Boot has been replaced with a FunctionalSpringApplication from Spring Cloud Function (it’s a subclass).

The business logic beans that you register in a Spring Cloud Function app are of type FunctionRegistration.

This is a wrapper that contains both the function and information about the input and output types. In the @Bean

form of the application that information can be derived reflectively, but in a functional bean registration some of

it is lost unless we use a FunctionRegistration.
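Why type information can be lost is easy to see with plain reflection. The sketch below compares a named class with a lambda. The class TypeInfoSketch and its method are hypothetical, and the lambda behaviour described is what common JVMs such as HotSpot exhibit rather than something the language guarantees.

```java
import java.lang.reflect.ParameterizedType;
import java.lang.reflect.Type;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.function.Function;

// Hypothetical demonstration of which function shapes expose generic type arguments.
class TypeInfoSketch {

    // A named class keeps its generic signature in the class file.
    static class Uppercase implements Function<String, String> {
        @Override
        public String apply(String value) {
            return value.toUpperCase();
        }
    }

    // Returns the type arguments of the Function interface if they can be read reflectively.
    static List<Type> functionTypeArguments(Object function) {
        for (Type candidate : function.getClass().getGenericInterfaces()) {
            if (candidate instanceof ParameterizedType
                    && ((ParameterizedType) candidate).getRawType() == Function.class) {
                return Arrays.asList(((ParameterizedType) candidate).getActualTypeArguments());
            }
        }
        return Collections.emptyList(); // nothing recoverable, e.g. for a lambda
    }

    public static void main(String[] args) {
        Function<String, String> lambda = value -> value.toUpperCase();
        System.out.println("class:  " + functionTypeArguments(new Uppercase()));
        System.out.println("lambda: " + functionTypeArguments(lambda));
    }
}
```

The named class reports its two String type arguments, while the lambda typically reports none. A wrapper that carries the input and output types explicitly closes that gap.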

An alternative to using an ApplicationContextInitializer and FunctionRegistration is to make the application

itself implement Function (or Consumer or Supplier). Example (equivalent to the above):

@SpringBootConfiguration

public class DemoApplication implements Function<String, String> {

public static void main(String[] args) {

FunctionalSpringApplication.run(DemoApplication.class, args);

}

@Override

public String apply(String value) {

return value.toUpperCase();

}

}

It would also work if you add a separate, standalone class of type Function and register it with

the SpringApplication using an alternative form of the run() method. The main thing is that the generic

type information is available at runtime through the class declaration.

Suppose you have

@Component

public class CustomFunction implements Function<Flux<Foo>, Flux<Bar>> {

@Override

public Flux<Bar> apply(Flux<Foo> flux) {

return flux.map(foo -> new Bar("This is a Bar object from Foo value: " + foo.getValue()));

}

}

You register it as such:

@Override

public void initialize(GenericApplicationContext context) {

context.registerBean("function", FunctionRegistration.class,

() -> new FunctionRegistration<>(new CustomFunction()).type(CustomFunction.class));

}

Limitations of Functional Bean Declaration

Most Spring Cloud Function apps have a relatively small scope compared to the whole of Spring Boot,

so we are able to adapt it to these functional bean definitions easily. If you step outside that limited scope,

you can extend your Spring Cloud Function app by switching back to @Bean style configuration, or by using a hybrid

approach. If you want to take advantage of Spring Boot autoconfiguration for integrations with external datastores,

for example, you will need to use @EnableAutoConfiguration. Your functions can still be defined using the functional

declarations if you want (i.e. the "hybrid" style), but in that case you will need to explicitly switch off the "full

functional mode" using spring.functional.enabled=false so that Spring Boot can take back control.

Testing Functional Applications

Spring Cloud Function also has some utilities for integration testing that will be very familiar to Spring Boot users.

Suppose this is your application:

@SpringBootApplication

public class SampleFunctionApplication {

public static void main(String[] args) {

SpringApplication.run(SampleFunctionApplication.class, args);

}

@Bean

public Function<String, String> uppercase() {

return v -> v.toUpperCase();

}

}

Here is an integration test for the HTTP server wrapping this application:

@SpringBootTest(classes = SampleFunctionApplication.class,

webEnvironment = WebEnvironment.RANDOM_PORT)

public class WebFunctionTests {

@Autowired

private TestRestTemplate rest;

@Test

public void test() throws Exception {

ResponseEntity<String> result = this.rest.exchange(

RequestEntity.post(new URI("/uppercase")).body("hello"), String.class);

System.out.println(result.getBody());

}

}

Or, when the functional bean definition style is used:

@FunctionalSpringBootTest

public class WebFunctionTests {

@Autowired

private TestRestTemplate rest;

@Test

public void test() throws Exception {

ResponseEntity<String> result = this.rest.exchange(

RequestEntity.post(new URI("/uppercase")).body("hello"), String.class);

System.out.println(result.getBody());

}

}

This test is almost identical to the one you would write for the @Bean version of the same app - the only difference

is the @FunctionalSpringBootTest annotation, instead of the regular @SpringBootTest. All the other pieces,

like the @Autowired TestRestTemplate, are standard Spring Boot features.

To help with the correct dependencies, here is an excerpt from the POM:

<parent>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-parent</artifactId>

<version>2.2.2.RELEASE</version>

<relativePath/> <!-- lookup parent from repository -->

</parent>

. . . .

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-function-web</artifactId>

<version>3.0.1.BUILD-SNAPSHOT</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

<exclusions>

<exclusion>

<groupId>org.junit.vintage</groupId>

<artifactId>junit-vintage-engine</artifactId>

</exclusion>

</exclusions>

</dependency>

Or you could write a test for a non-HTTP app using just the FunctionCatalog. For example:

@RunWith(SpringRunner.class)

@FunctionalSpringBootTest

public class FunctionalTests {

@Autowired

private FunctionCatalog catalog;

@Test

public void words() throws Exception {

Function<String, String> function = catalog.lookup(Function.class,

"uppercase");

assertThat(function.apply("hello")).isEqualTo("HELLO");

}

}

Dynamic Compilation

There is a sample app that uses the function compiler to create a

function from a configuration property. The vanilla "function-sample"

also has that feature. And there are some scripts that you can run to

see the compilation happening at run time. To run these examples,

change into the scripts directory:

cd scripts

Also, start a RabbitMQ server locally (e.g. execute rabbitmq-server).

Start the Function Registry Service:

./function-registry.sh

Register a Function:

./registerFunction.sh -n uppercase -f "f->f.map(s->s.toString().toUpperCase())"

Run a REST Microservice using that Function:

./web.sh -f uppercase -p 9000

curl -H "Content-Type: text/plain" -H "Accept: text/plain" localhost:9000/uppercase -d foo

Register a Supplier:

./registerSupplier.sh -n words -f "()->Flux.just(\"foo\",\"bar\")"

Run a REST Microservice using that Supplier:

./web.sh -s words -p 9001

curl -H "Accept: application/json" localhost:9001/words

Register a Consumer:

./registerConsumer.sh -n print -t String -f "System.out::println"

Run a REST Microservice using that Consumer:

./web.sh -c print -p 9002

curl -X POST -H "Content-Type: text/plain" -d foo localhost:9002/print

Run Stream Processing Microservices:

First register a streaming words supplier:

./registerSupplier.sh -n wordstream -f "()->Flux.interval(Duration.ofMillis(1000)).map(i->\"message-\"+i)"

Then start the source (supplier), processor (function), and sink (consumer) apps (in reverse order):

./stream.sh -p 9103 -i uppercaseWords -c print

./stream.sh -p 9102 -i words -f uppercase -o uppercaseWords

./stream.sh -p 9101 -s wordstream -o words

The output will appear in the console of the sink app (one message per second, converted to uppercase):

MESSAGE-0

MESSAGE-1

MESSAGE-2

MESSAGE-3

MESSAGE-4

MESSAGE-5

MESSAGE-6

MESSAGE-7

MESSAGE-8

MESSAGE-9

...
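The three stream apps form a source, processor, and sink pipeline. As a rough, framework-free sketch of the same data flow, the following uses plain JDK types in place of Reactor's Flux and direct in-process calls in place of RabbitMQ. The class name PipelineSketch and its helper methods are hypothetical and are not part of the sample scripts.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.Supplier;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

// Hypothetical illustration only: plain JDK types stand in for the registered
// "wordstream" supplier, "uppercase" function and "print" consumer.
class PipelineSketch {

    // Source: emits a bounded batch of messages instead of one per second.
    static Supplier<List<String>> wordstream(int count) {
        return () -> IntStream.range(0, count)
                .mapToObj(i -> "message-" + i)
                .collect(Collectors.toList());
    }

    // Processor: upper-cases each message.
    static Function<String, String> uppercase() {
        return s -> s.toUpperCase();
    }

    // Wires source -> processor -> sink and returns what the sink received.
    static List<String> run(int count) {
        List<String> received = new ArrayList<>();
        Consumer<String> sink = received::add;
        wordstream(count).get().stream().map(uppercase()).forEach(sink);
        return received;
    }

    public static void main(String[] args) {
        run(3).forEach(System.out::println);
    }
}
```

In the real setup each stage runs in its own process and the stages are connected through the named destinations (words, uppercaseWords) rather than through method calls.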

Serverless Platform Adapters

As well as being able to run as a standalone process, a Spring Cloud Function application can be adapted to run on one of the existing serverless platforms. The project includes adapters for AWS Lambda, Azure, and Apache OpenWhisk. The Oracle Fn platform has its own Spring Cloud Function adapter. Riff supports Java functions, and its Java Function Invoker acts natively as an adapter for Spring Cloud Function jars.

AWS Lambda

The AWS adapter takes a Spring Cloud Function app and converts it to a form that can run in AWS Lambda.

The details of how to get started with AWS Lambda are out of scope for this document, so the expectation is that the user has some familiarity with AWS and AWS Lambda and wants to learn what additional value Spring provides.

Getting Started

One of the goals of the Spring Cloud Function framework is to provide the infrastructure elements that enable a simple function application to interact in a certain way in a particular environment. A simple function application (in the context of Spring) is an application that contains beans of type Supplier, Function or Consumer. So, with AWS it means that a simple function bean should somehow be recognised and executed in the AWS Lambda environment.

Let’s look at the example:

@SpringBootApplication

public class FunctionConfiguration {

public static void main(String[] args) {

SpringApplication.run(FunctionConfiguration.class, args);

}

@Bean

public Function<String, String> uppercase() {

return value -> value.toUpperCase();

}

}

It shows a complete Spring Boot application with a function bean defined in it. What’s interesting is that on the surface this is just another Boot app, but in the context of the AWS adapter it is also a perfectly valid AWS Lambda application. No other code or configuration is required. All you need to do is package it and deploy it, so let’s look at how we can do that.

To make things simpler we’ve provided a sample project ready to be built and deployed and you can access it here.

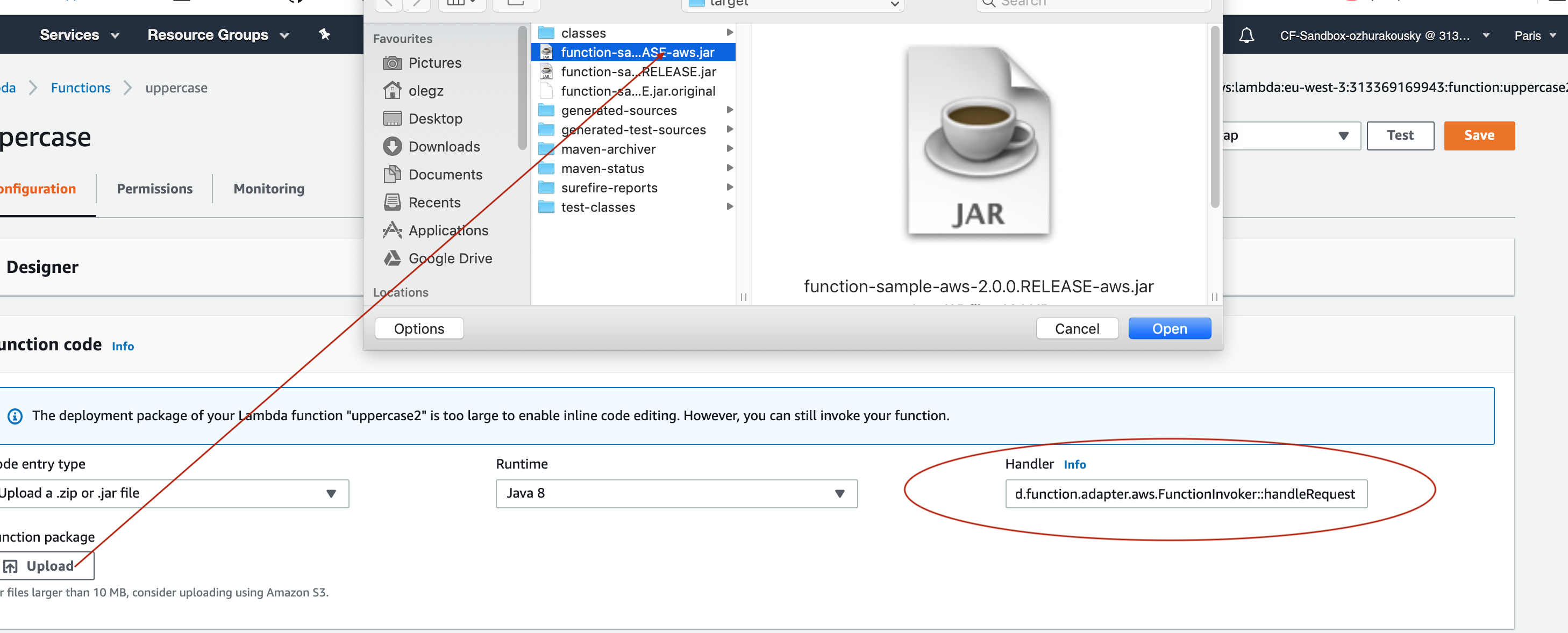

You simply execute ./mvnw clean package to generate the JAR file. All the necessary Maven plugins have already been set up to generate

the appropriate AWS-deployable JAR file. (You can read more details about the JAR layout in Notes on JAR Layout.)

Then you have to upload the JAR file (via AWS dashboard or AWS CLI) to AWS.

When asked about the handler, specify org.springframework.cloud.function.adapter.aws.FunctionInvoker::handleRequest, which is a generic request handler.

That is all. Save and execute the function with some sample data, which for this function is expected to be a String that the function will uppercase and return.

While org.springframework.cloud.function.adapter.aws.FunctionInvoker is a general-purpose implementation of AWS’s RequestHandler aimed at completely

isolating you from the specifics of the AWS Lambda API, in some cases you may want to specify which AWS RequestHandler you want

to use. The next section explains how you can accomplish just that.

AWS Request Handlers

The adapter has a couple of generic request handlers that you can use. The most generic one (and the one we used in the Getting Started section)

is org.springframework.cloud.function.adapter.aws.FunctionInvoker, which is an implementation of AWS’s RequestStreamHandler.

You don’t need to do anything other than specify it as the 'handler' on the AWS dashboard when deploying the function.

It will handle most cases, including Kinesis, streaming, and so on.
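A stream-based handler works on the raw request and response streams rather than on typed objects. The following is a minimal sketch of that idea only: it reads the request, applies a function, and writes the result. It does not use the AWS SDK interfaces and is not the adapter's actual implementation. The class StreamHandlerSketch and its methods are hypothetical names chosen for illustration.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.function.Function;

// Hypothetical illustration of a stream-in, stream-out handler.
class StreamHandlerSketch {

    // Reads the whole request, applies the function, writes the result.
    static void handle(InputStream input, OutputStream output,
            Function<String, String> function) throws IOException {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        byte[] chunk = new byte[1024];
        int read;
        while ((read = input.read(chunk)) != -1) {
            buffer.write(chunk, 0, read);
        }
        String request = new String(buffer.toByteArray(), StandardCharsets.UTF_8);
        output.write(function.apply(request).getBytes(StandardCharsets.UTF_8));
    }

    // Convenience wrapper for trying the handler with in-memory streams.
    static String invoke(String payload, Function<String, String> function) {
        try {
            ByteArrayOutputStream out = new ByteArrayOutputStream();
            handle(new ByteArrayInputStream(payload.getBytes(StandardCharsets.UTF_8)),
                    out, function);
            return new String(out.toByteArray(), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(invoke("hello", String::toUpperCase));
    }
}
```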

If your app has more than one @Bean of type Function etc., then you can choose the one to use by configuring the spring.cloud.function.definition

property or environment variable. The functions are extracted from the Spring Cloud FunctionCatalog. If you don’t specify spring.cloud.function.definition,

the framework will attempt to find a default, searching first for a Function, then a Consumer, and finally a Supplier.
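The search order described above can be sketched in plain Java as follows. This is an illustration of the stated order only, not the framework's lookup code. The class DefinitionLookupSketch, its resolve method, and the map of bean names to beans are hypothetical stand-ins for the FunctionCatalog.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.Supplier;

// Hypothetical illustration: an explicit definition wins, otherwise the
// first Function, then the first Consumer, then the first Supplier is used.
class DefinitionLookupSketch {

    // Returns the name of the bean that would be invoked.
    static String resolve(String definition, Map<String, Object> beans) {
        if (definition != null && !definition.isEmpty()) {
            return definition;
        }
        Class<?>[] searchOrder = { Function.class, Consumer.class, Supplier.class };
        for (Class<?> type : searchOrder) {
            for (Map.Entry<String, Object> bean : beans.entrySet()) {
                if (type.isInstance(bean.getValue())) {
                    return bean.getKey();
                }
            }
        }
        throw new IllegalStateException("No Function, Consumer or Supplier bean found");
    }

    // A small sample "catalog" registered in Supplier, Consumer, Function order.
    static Map<String, Object> sampleBeans() {
        Map<String, Object> beans = new LinkedHashMap<>();
        beans.put("words", (Supplier<String>) () -> "foo");
        beans.put("print", (Consumer<String>) s -> { });
        beans.put("uppercase", (Function<String, String>) s -> s.toUpperCase());
        return beans;
    }

    public static void main(String[] args) {
        System.out.println(resolve(null, sampleBeans()));
        System.out.println(resolve("print", sampleBeans()));
    }
}
```

Setting the property explicitly is the safer choice when several functional beans exist, since the default depends on which bean types happen to be present.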

Notes on JAR Layout

You don’t need the Spring Cloud Function Web or Stream adapter at runtime in Lambda, so you might

need to exclude those before you create the JAR you send to AWS. A Lambda application has to be

shaded, but a Spring Boot standalone application does not, so you can run the same app using 2

separate jars (as per the sample). The sample app creates 2 jar files, one with an aws

classifier for deploying in Lambda, and one executable (thin) jar that includes spring-cloud-function-web

at runtime. Spring Cloud Function will try and locate a "main class" for you from the JAR file

manifest, using the Start-Class attribute (which will be added for you by the Spring Boot

tooling if you use the starter parent). If there is no Start-Class in your manifest you can

use an environment variable or system property MAIN_CLASS when you deploy the function to AWS.
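The main class resolution described above can be sketched with the JDK's Manifest type. This is a sketch under stated assumptions and not the adapter's code. The class MainClassSketch and its methods are hypothetical, the settings map stands in for environment variables or system properties, and letting an explicit MAIN_CLASS win when both values are present is an assumption of this sketch, since the text does not state the precedence.

```java
import java.util.Map;
import java.util.jar.Manifest;

// Hypothetical illustration of choosing between MAIN_CLASS and Start-Class.
class MainClassSketch {

    // settings stands in for environment variables or system properties.
    static String resolve(Manifest manifest, Map<String, String> settings) {
        // Assumption: an explicitly configured MAIN_CLASS takes precedence.
        String explicit = settings.get("MAIN_CLASS");
        if (explicit != null && !explicit.isEmpty()) {
            return explicit;
        }
        String startClass = manifest.getMainAttributes().getValue("Start-Class");
        if (startClass != null) {
            return startClass;
        }
        throw new IllegalStateException("Neither MAIN_CLASS nor Start-Class is set");
    }

    // Builds an in-memory manifest, optionally with a Start-Class attribute.
    static Manifest manifestWith(String startClass) {
        Manifest manifest = new Manifest();
        if (startClass != null) {
            manifest.getMainAttributes().putValue("Start-Class", startClass);
        }
        return manifest;
    }

    public static void main(String[] args) {
        Manifest manifest = manifestWith("com.example.FunctionConfiguration");
        System.out.println(resolve(manifest, System.getenv()));
    }
}
```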

If you are not using the functional bean definitions but relying on Spring Boot’s auto-configuration, then additional transformers must be configured as part of the maven-shade-plugin execution.

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

</dependency>

</dependencies>

<configuration>

<createDependencyReducedPom>false</createDependencyReducedPom>

<shadedArtifactAttached>true</shadedArtifactAttached>

<shadedClassifierName>aws</shadedClassifierName>

<transformers>

<transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">

<resource>META-INF/spring.handlers</resource>

</transformer>

<transformer implementation="org.springframework.boot.maven.PropertiesMergingResourceTransformer">

<resource>META-INF/spring.factories</resource>

</transformer>

<transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">

<resource>META-INF/spring.schemas</resource>

</transformer>

</transformers>

</configuration>

</plugin>

Build file setup

In order to run Spring Cloud Function applications on AWS Lambda, you can leverage Maven or Gradle plugins offered by the cloud platform provider.

Maven

In order to use the adapter plugin for Maven, add the plugin dependency to your pom.xml

file:

<dependencies>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-function-adapter-aws</artifactId>

</dependency>

</dependencies>

As pointed out in the Notes on JAR Layout, you will need a shaded jar in order to upload it to AWS Lambda. You can use the Maven Shade Plugin for that. An example of the setup can be found above.

You can use the Spring Boot Maven Plugin to generate the thin jar.

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

<dependencies>

<dependency>

<groupId>org.springframework.boot.experimental</groupId>

<artifactId>spring-boot-thin-layout</artifactId>

<version>${wrapper.version}</version>

</dependency>

</dependencies>

</plugin>

You can find the entire sample pom.xml file for deploying Spring Cloud Function

applications to AWS Lambda with Maven here.

Gradle

In order to use the adapter plugin for Gradle, add the dependency to your build.gradle file:

dependencies {

compile("org.springframework.cloud:spring-cloud-function-adapter-aws:${version}")

}

As pointed out in Notes on JAR Layout, you will need a shaded jar in order to upload it to AWS Lambda. You can use the Gradle Shadow Plugin for that:

buildscript {

dependencies {

classpath "com.github.jengelman.gradle.plugins:shadow:${shadowPluginVersion}"

}

}

apply plugin: 'com.github.johnrengelman.shadow'

assemble.dependsOn = [shadowJar]

import com.github.jengelman.gradle.plugins.shadow.transformers.*

shadowJar {

classifier = 'aws'

dependencies {

exclude(

dependency("org.springframework.cloud:spring-cloud-function-web:${springCloudFunctionVersion}"))

}

// Required for Spring

mergeServiceFiles()

append 'META-INF/spring.handlers'

append 'META-INF/spring.schemas'

append 'META-INF/spring.tooling'

transform(PropertiesFileTransformer) {

paths = ['META-INF/spring.factories']

mergeStrategy = "append"

}

}

You can use the Spring Boot Gradle Plugin and Spring Boot Thin Gradle Plugin to generate the thin jar.

buildscript {

dependencies {

classpath("org.springframework.boot.experimental:spring-boot-thin-gradle-plugin:${wrapperVersion}")

classpath("org.springframework.boot:spring-boot-gradle-plugin:${springBootVersion}")

}

}

apply plugin: 'org.springframework.boot'

apply plugin: 'org.springframework.boot.experimental.thin-launcher'

assemble.dependsOn = [thinJar]

You can find the entire sample build.gradle file for deploying Spring Cloud Function

applications to AWS Lambda with Gradle here.

Upload

Build the sample under spring-cloud-function-samples/function-sample-aws and upload the -aws jar file to Lambda. The handler can be example.Handler or org.springframework.cloud.function.adapter.aws.SpringBootStreamHandler (FQN of the class, not a method reference, although Lambda does accept method references).

./mvnw -U clean package

Using the AWS command line tools it looks like this:

aws lambda create-function --function-name Uppercase --role arn:aws:iam::[USERID]:role/service-role/[ROLE] --zip-file fileb://function-sample-aws/target/function-sample-aws-2.0.0.BUILD-SNAPSHOT-aws.jar --handler org.springframework.cloud.function.adapter.aws.SpringBootStreamHandler --description "Spring Cloud Function Adapter Example" --runtime java8 --region us-east-1 --timeout 30 --memory-size 1024 --publish

The input type for the function in the AWS sample is a Foo with a single property called "value". So you would need this to test it:

{

"value": "test"

}

The AWS sample app is written in the "functional" style (as an ApplicationContextInitializer). This is much faster on startup in Lambda than the traditional @Bean style, so if you don’t need @Beans (or @EnableAutoConfiguration) it’s a good choice. Warm starts are not affected.

Type Conversion

Spring Cloud Function will attempt to transparently handle type conversion between the raw input stream and types declared by your function.

For example, if your function signature is Function<Foo, Bar>, we will attempt to convert

the incoming stream event to an instance of Foo.

If the type is not known or cannot be determined (e.g., Function<?, ?>), we will attempt to

convert the incoming stream event to a generic Map.
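To make the "type is known" versus "type cannot be determined" distinction concrete, here is a small sketch that discovers a function's declared input type through reflection and falls back to Map when none is declared. It illustrates the idea only and is not how the framework itself resolves types. InputTypeSketch and its nested classes are hypothetical.

```java
import java.lang.reflect.ParameterizedType;
import java.lang.reflect.Type;
import java.util.Map;
import java.util.function.Function;

// Hypothetical illustration: find the conversion target for a function's input.
class InputTypeSketch {

    static class Foo { }

    static class Bar { }

    // Declares concrete types, so the input type can be discovered.
    static class FooToBar implements Function<Foo, Bar> {
        @Override
        public Bar apply(Foo foo) {
            return new Bar();
        }
    }

    // Raw declaration: no type information is available at runtime.
    @SuppressWarnings("rawtypes")
    static class Untyped implements Function {
        @Override
        public Object apply(Object input) {
            return input;
        }
    }

    // Returns the declared input type, or Map.class when it is unknown.
    static Class<?> inputType(Class<?> functionClass) {
        for (Type candidate : functionClass.getGenericInterfaces()) {
            if (candidate instanceof ParameterizedType) {
                ParameterizedType parameterized = (ParameterizedType) candidate;
                if (parameterized.getRawType() == Function.class) {
                    Type input = parameterized.getActualTypeArguments()[0];
                    if (input instanceof Class) {
                        return (Class<?>) input;
                    }
                }
            }
        }
        return Map.class;
    }

    public static void main(String[] args) {
        System.out.println(inputType(FooToBar.class).getSimpleName());
        System.out.println(inputType(Untyped.class).getSimpleName());
    }
}
```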

Raw Input

There are times when you may want to have access to a raw input. In this case all you need is to declare your

function signature to accept InputStream. For example, Function<InputStream, ?>. In this case

we will not attempt any conversion and will pass the raw input directly to a function.
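As a small illustration, a function that accepts an InputStream could look like the sketch below. It simply counts the bytes of the raw payload, so nothing is parsed or converted. The class RawInputSketch and the function name rawLength are hypothetical.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.function.Function;

// Hypothetical illustration of a function that consumes the raw input.
class RawInputSketch {

    // Reads the raw stream to the end and returns the number of bytes seen.
    static Function<InputStream, Integer> rawLength() {
        return input -> {
            try {
                int total = 0;
                byte[] chunk = new byte[1024];
                int read;
                while ((read = input.read(chunk)) != -1) {
                    total += read;
                }
                return total;
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        };
    }

    public static void main(String[] args) {
        InputStream raw = new ByteArrayInputStream(
                "{\"value\": \"test\"}".getBytes(StandardCharsets.UTF_8));
        System.out.println(rawLength().apply(raw));
    }
}
```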

Microsoft Azure

The Azure adapter bootstraps a Spring Cloud Function context and channels function calls from the Azure framework into the user functions, using Spring Boot configuration where necessary. Azure Functions has a rather unique but invasive programming model, involving annotations in user code that are specific to the platform. The easiest way to use it with Spring Cloud is to extend a base class and write a method in it with the @FunctionName annotation which delegates to a base class method.

This project provides an adapter layer for a Spring Cloud Function application onto Azure.

You can write an app with a single @Bean of type Function and it will be deployable in Azure if you get the JAR file laid out right.

There is an AzureSpringBootRequestHandler which you must extend, and provide the input and output types as annotated method parameters (enabling Azure to inspect the class and create JSON bindings). The base class has two useful methods (handleRequest and handleOutput) to which you can delegate the actual function call, so mostly the function will only ever have one line.

Example:

public class FooHandler extends AzureSpringBootRequestHandler<Foo, Bar> {

@FunctionName("uppercase")

public Bar execute(@HttpTrigger(name = "req", methods = {HttpMethod.GET,

HttpMethod.POST}, authLevel = AuthorizationLevel.ANONYMOUS) HttpRequestMessage<Optional<Foo>> request,

ExecutionContext context) {

return handleRequest(request.getBody().get(), context);

}

}

This Azure handler will delegate to a Function<Foo,Bar> bean (or a Function<Publisher<Foo>,Publisher<Bar>>). Some Azure triggers (e.g. @CosmosDBTrigger) result in an input type of List, and in that case you can bind to List in the Azure handler, or String (the raw JSON). The List input delegates to a Function with input type Map<String,Object>, or Publisher or List of the same type. The output of the Function can be a List (one-for-one) or a single value (aggregation), and the output binding in the Azure declaration should match.
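The difference between a one-for-one output and an aggregated output for a List input can be sketched with plain functions. This is an illustration only and does not involve the Azure bindings. The class ListInputSketch, its methods, and the "name" field of the sample documents are hypothetical.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

// Hypothetical illustration of the two output shapes for a List input.
class ListInputSketch {

    // One-for-one: each input document produces one output value.
    static Function<List<Map<String, Object>>, List<String>> names() {
        return docs -> docs.stream()
                .map(doc -> String.valueOf(doc.get("name")))
                .collect(Collectors.toList());
    }

    // Aggregation: the whole batch collapses to a single value.
    static Function<List<Map<String, Object>>, Integer> count() {
        return List::size;
    }

    // A sample batch of two documents, each with a made-up "name" field.
    static List<Map<String, Object>> sampleBatch() {
        return Arrays.asList(
                Collections.<String, Object>singletonMap("name", "a"),
                Collections.<String, Object>singletonMap("name", "b"));
    }

    public static void main(String[] args) {
        System.out.println(names().apply(sampleBatch()));
        System.out.println(count().apply(sampleBatch()));
    }
}
```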

If your app has more than one @Bean of type Function etc. then you can choose the one to use by configuring function.name. Or if you make the @FunctionName in the Azure handler method match the function name it should work that way (also for function apps with multiple functions). The functions are extracted from the Spring Cloud FunctionCatalog so the default function names are the same as the bean names.

Accessing Azure ExecutionContext

Sometimes there is a need to access the target execution context provided by the Azure runtime in the form of com.microsoft.azure.functions.ExecutionContext.

For example, one such need is logging, so that messages can appear in the Azure console.

For that purpose Spring Cloud Function registers ExecutionContext as a bean in the application context, so it can be injected into your function.

For example:

@Bean

public Function<Foo, Bar> uppercase(ExecutionContext targetContext) {

return foo -> {

targetContext.getLogger().info("Invoking 'uppercase' on " + foo.getValue());

return new Bar(foo.getValue().toUpperCase());

};

}

Normally type-based injection should suffice; however, if you need to, you can also use the bean name under which it is registered, which is targetExecutionContext.

Notes on JAR Layout

You don’t need the Spring Cloud Function Web at runtime in Azure, so you can exclude this before you create the JAR you deploy to Azure, but it won’t be used if you include it, so it doesn’t hurt to leave it in. A function application on Azure is an archive generated by the Maven plugin. The function lives in the JAR file generated by this project. The sample creates it as an executable jar, using the thin layout, so that Azure can find the handler classes. If you prefer you can just use a regular flat JAR file. The dependencies should not be included.

Build file setup

In order to run Spring Cloud Function applications on Microsoft Azure, you can leverage the Maven plugin offered by the cloud platform provider.

In order to use the adapter plugin for Maven, add the plugin dependency to your pom.xml

file:

<dependencies>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-function-adapter-azure</artifactId>

</dependency>

</dependencies>

Then, configure the plugin. You will need to provide Azure-specific configuration for your

application, specifying the resourceGroup, appName and other optional properties, and

add the package goal execution so that the function.json file required by Azure is

generated for you. Full plugin documentation can be found in the plugin repository.

<plugin>

<groupId>com.microsoft.azure</groupId>

<artifactId>azure-functions-maven-plugin</artifactId>

<configuration>

<resourceGroup>${functionResourceGroup}</resourceGroup>

<appName>${functionAppName}</appName>

</configuration>

<executions>

<execution>

<id>package-functions</id>

<goals>

<goal>package</goal>

</goals>

</execution>

</executions>

</plugin>

You will also have to ensure that the files to be scanned by the plugin can be found in the Azure functions staging directory (see the plugin repository for more details on the staging directory and its default location).

You can find the entire sample pom.xml file for deploying Spring Cloud Function

applications to Microsoft Azure with Maven here.

As of yet, only a Maven plugin is available. A Gradle plugin has not been created by the cloud platform provider.

Build

./mvnw -U clean package

Running the sample

You can run the sample locally, just like the other Spring Cloud Function samples:

and curl -H "Content-Type: text/plain" localhost:8080/api/uppercase -d '{"value": "hello foobar"}'.

You will need the az CLI app (see https://docs.microsoft.com/en-us/azure/azure-functions/functions-create-first-java-maven for more detail). To deploy the function on Azure runtime:

$ az login

$ mvn azure-functions:deploy

On another terminal try this: curl https://<azure-function-url-from-the-log>/api/uppercase -d '{"value": "hello foobar!"}'. Please ensure that you use the right URL for the function above. Alternatively you can test the function in the Azure Dashboard UI (click on the function name, go to the right hand side and click "Test" and to the bottom right, "Run").

The input type for the function in the Azure sample is a Foo with a single property called "value". So you need something like the following to test it:

{

"value": "foobar"

}

The Azure sample app is written in the "non-functional" style (using @Bean). The functional style (with just Function or ApplicationContextInitializer) is much faster on startup in Azure than the traditional @Bean style, so if you don’t need @Beans (or @EnableAutoConfiguration) it’s a good choice. Warm starts are not affected.

Google Cloud Functions (Alpha)

The Google Cloud Functions adapter enables Spring Cloud Function apps to run on the Google Cloud Functions serverless platform. You can either run the function locally using the open source Google Functions Framework for Java or on GCP.

Project Dependencies

Start by adding the spring-cloud-function-adapter-gcp dependency to your project.

<dependencies>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-function-adapter-gcp</artifactId>

</dependency>

...

</dependencies>

In addition, add the spring-boot-maven-plugin which will build the JAR of the function to deploy.

Notice that we also reference spring-cloud-function-adapter-gcp as a dependency of the spring-boot-maven-plugin. This is necessary because it modifies the plugin to package your function in the correct JAR format for deployment on Google Cloud Functions.

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

<configuration>

<outputDirectory>target/deploy</outputDirectory>

</configuration>

<dependencies>

<dependency>

<groupId>org.springframework.cloud</groupId>

<artifactId>spring-cloud-function-adapter-gcp</artifactId>

</dependency>

</dependencies>

</plugin>

Finally, add the Maven plugin provided as part of the Google Functions Framework for Java.

This allows you to test your functions locally via mvn function:run.

The function target should always be set to org.springframework.cloud.function.adapter.gcp.GcfJarLauncher; this is an adapter class which acts as the entry point to your Spring Cloud Function from the Google Cloud Functions platform.

<plugin>

<groupId>com.google.cloud.functions</groupId>

<artifactId>function-maven-plugin</artifactId>

<version>0.9.1</version>

<configuration>

<functionTarget>org.springframework.cloud.function.adapter.gcp.GcfJarLauncher</functionTarget>

<port>8080</port>

</configuration>

</plugin>

A full example of a working pom.xml can be found in the Spring Cloud Functions GCP sample.

HTTP Functions

Google Cloud Functions supports deploying HTTP Functions, which are functions that are invoked by HTTP request. The sections below describe instructions for deploying a Spring Cloud Function as an HTTP Function.

Getting Started

Let’s start with a simple Spring Cloud Function example:

@SpringBootApplication

public class CloudFunctionMain {

public static void main(String[] args) {

SpringApplication.run(CloudFunctionMain.class, args);

}

@Bean

public Function<String, String> uppercase() {

return value -> value.toUpperCase();

}

}

Specify your configuration main class in resources/META-INF/MANIFEST.MF.

Main-Class: com.example.CloudFunctionMain

Then run the function locally.

This is provided by the Google Cloud Functions function-maven-plugin described in the project dependencies section.

mvn function:run

Invoke the HTTP function:

curl http://localhost:8080/ -d "hello"

Deploy to GCP

As of March 2020, Google Cloud Functions for Java is in Alpha. You can get on the whitelist to try it out.

Start by packaging your application.

mvn package

If you added the custom spring-boot-maven-plugin plugin defined above, you should see the resulting JAR in target/deploy directory.

This JAR is correctly formatted for deployment to Google Cloud Functions.

Next, make sure that you have the Cloud SDK CLI installed.

From the project base directory run the following command to deploy.

gcloud alpha functions deploy function-sample-gcp-http \
  --entry-point org.springframework.cloud.function.adapter.gcp.GcfJarLauncher \
  --runtime java11 \
  --trigger-http \
  --source target/deploy \
  --memory 512MB

Invoke the HTTP function:

curl https://REGION-PROJECT_ID.cloudfunctions.net/function-sample-gcp-http -d "hello"

Background Functions

Google Cloud Functions also supports deploying Background Functions which are invoked indirectly in response to an event, such as a message on a Cloud Pub/Sub topic, a change in a Cloud Storage bucket, or a Firebase event.

The spring-cloud-function-adapter-gcp allows for functions to be deployed as background functions as well.

The sections below describe the process for writing a Cloud Pub/Sub topic background function. However, there are a number of different event types that can trigger a background function to execute which are not discussed here; these are described in the Background Function triggers documentation.

Getting Started

Let’s start with a simple Spring Cloud Function which will run as a GCF background function:

@SpringBootApplication

public class BackgroundFunctionMain {

public static void main(String[] args) {

SpringApplication.run(BackgroundFunctionMain.class, args);

}

@Bean

public Consumer<PubSubMessage> pubSubFunction() {

return message -> System.out.println("The Pub/Sub message data: " + message.getData());

}

}

In addition, create a PubSubMessage class in the project with the definition below.

This class represents the Pub/Sub event structure which gets passed to your function on a Pub/Sub topic event.

public class PubSubMessage {

private String data;

private Map<String, String> attributes;

private String messageId;

private String publishTime;

public String getData() {

return data;

}

public void setData(String data) {

this.data = data;

}

public Map<String, String> getAttributes() {

return attributes;

}

public void setAttributes(Map<String, String> attributes) {

this.attributes = attributes;

}

public String getMessageId() {

return messageId;

}

public void setMessageId(String messageId) {

this.messageId = messageId;

}

public String getPublishTime() {

return publishTime;

}

public void setPublishTime(String publishTime) {

this.publishTime = publishTime;

}

}

Specify your configuration main class in resources/META-INF/MANIFEST.MF.

Main-Class: com.example.BackgroundFunctionMain

Then run the function locally.

This is provided by the Google Cloud Functions function-maven-plugin described in the project dependencies section.

mvn function:run

Invoke the HTTP function:

curl localhost:8080 -H "Content-Type: application/json" -d '{"data":"hello"}'

Verify that the function was invoked by viewing the logs.
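When the function runs on GCP, the data field of a Pub/Sub event normally arrives Base64-encoded, whereas the local curl example sends plain text. Below is a minimal sketch of decoding such a value, assuming the payload is UTF-8 text. The helper class PubSubDataSketch is hypothetical and is not part of the adapter or the sample.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper for turning Base64-encoded Pub/Sub data into text.
class PubSubDataSketch {

    // Decodes Base64 data and interprets the bytes as UTF-8 text.
    static String decode(String base64Data) {
        byte[] bytes = Base64.getDecoder().decode(base64Data);
        return new String(bytes, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // "aGVsbG8=" is the Base64 encoding of "hello".
        System.out.println(decode("aGVsbG8="));
    }
}
```

A consumer such as pubSubFunction could call a helper like this on message.getData() before logging. Note that the decoder throws IllegalArgumentException for input that is not valid Base64, such as the plain "hello" used in the local test.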

Deploy to GCP

In order to deploy your background function to GCP, first package your application.

mvn package

If you added the custom spring-boot-maven-plugin plugin defined above, you should see the resulting JAR in target/deploy directory.

This JAR is correctly formatted for deployment to Google Cloud Functions.

Next, make sure that you have the Cloud SDK CLI installed.

From the project base directory run the following command to deploy.

gcloud alpha functions deploy function-sample-gcp-background \
  --entry-point org.springframework.cloud.function.adapter.gcp.GcfJarLauncher \
  --runtime java11 \
  --trigger-topic my-functions-topic \
  --source target/deploy \
  --memory 512MB

Google Cloud Function will now invoke the function every time a message is published to the topic specified by --trigger-topic.

For a walkthrough on testing and verifying your background function, see the instructions for running the GCF Background Function sample.

Sample Functions

The project provides the following sample functions as reference:

-

The function-sample-gcp-http is an HTTP Function which you can test locally and try deploying.

-

The function-sample-gcp-background shows an example of a background function that is triggered by a message being published to a specified Pub/Sub topic.