This part of the reference documentation is concerned with data access and the interaction between the data access layer and the business or service layer.

Spring’s comprehensive transaction management support is covered in some detail, followed by thorough coverage of the various data access frameworks and technologies with which the Spring Framework integrates.

1. Transaction Management

Comprehensive transaction support is among the most compelling reasons to use the Spring Framework. The Spring Framework provides a consistent abstraction for transaction management that delivers the following benefits:

-

A consistent programming model across different transaction APIs, such as Java Transaction API (JTA), JDBC, Hibernate, and the Java Persistence API (JPA).

-

Support for declarative transaction management.

-

A simpler API for programmatic transaction management than complex transaction APIs, such as JTA.

-

Excellent integration with Spring’s data access abstractions.

The following sections describe the Spring Framework’s transaction features and technologies:

-

Advantages of the Spring Framework’s transaction support model describes why you would use the Spring Framework’s transaction abstraction instead of EJB Container-Managed Transactions (CMT) or choosing to drive local transactions through a proprietary API, such as Hibernate.

-

Understanding the Spring Framework transaction abstraction outlines the core classes and describes how to configure and obtain

DataSourceinstances from a variety of sources. -

Synchronizing resources with transactions describes how the application code ensures that resources are created, reused, and cleaned up properly.

-

Declarative transaction management describes support for declarative transaction management.

-

Programmatic transaction management covers support for programmatic (that is, explicitly coded) transaction management.

-

Transaction bound event describes how you could use application events within a transaction.

The chapter also includes discussions of best practices, application server integration, and solutions to common problems.

1.1. Advantages of the Spring Framework’s Transaction Support Model

Traditionally, Java EE developers have had two choices for transaction management: global or local transactions, both of which have profound limitations. Global and local transaction management is reviewed in the next two sections, followed by a discussion of how the Spring Framework’s transaction management support addresses the limitations of the global and local transaction models.

1.1.1. Global Transactions

Global transactions let you work with multiple transactional resources, typically

relational databases and message queues. The application server manages global

transactions through the JTA, which is a cumbersome API (partly due to its

exception model). Furthermore, a JTA UserTransaction normally needs to be sourced from

JNDI, meaning that you also need to use JNDI in order to use JTA. The use

of global transactions limits any potential reuse of application code, as JTA is

normally only available in an application server environment.

Previously, the preferred way to use global transactions was through EJB CMT (Container Managed Transaction). CMT is a form of declarative transaction management (as distinguished from programmatic transaction management). EJB CMT removes the need for transaction-related JNDI lookups, although the use of EJB itself necessitates the use of JNDI. It removes most but not all of the need to write Java code to control transactions. The significant downside is that CMT is tied to JTA and an application server environment. Also, it is only available if one chooses to implement business logic in EJBs (or at least behind a transactional EJB facade). The negatives of EJB in general are so great that this is not an attractive proposition, especially in the face of compelling alternatives for declarative transaction management.

1.1.2. Local Transactions

Local transactions are resource-specific, such as a transaction associated with a JDBC connection. Local transactions may be easier to use but have a significant disadvantage: They cannot work across multiple transactional resources. For example, code that manages transactions by using a JDBC connection cannot run within a global JTA transaction. Because the application server is not involved in transaction management, it cannot help ensure correctness across multiple resources. (It is worth noting that most applications use a single transaction resource.) Another downside is that local transactions are invasive to the programming model.

1.1.3. Spring Framework’s Consistent Programming Model

Spring resolves the disadvantages of global and local transactions. It lets application developers use a consistent programming model in any environment. You write your code once, and it can benefit from different transaction management strategies in different environments. The Spring Framework provides both declarative and programmatic transaction management. Most users prefer declarative transaction management, which we recommend in most cases.

With programmatic transaction management, developers work with the Spring Framework transaction abstraction, which can run over any underlying transaction infrastructure. With the preferred declarative model, developers typically write little or no code related to transaction management and, hence, do not depend on the Spring Framework transaction API or any other transaction API.

1.2. Understanding the Spring Framework Transaction Abstraction

The key to the Spring transaction abstraction is the notion of a transaction strategy. A

transaction strategy is defined by a TransactionManager, specifically the

org.springframework.transaction.PlatformTransactionManager interface for imperative

transaction management and the

org.springframework.transaction.ReactiveTransactionManager interface for reactive

transaction management. The following listing shows the definition of the

PlatformTransactionManager API:

public interface PlatformTransactionManager extends TransactionManager {

TransactionStatus getTransaction(TransactionDefinition definition) throws TransactionException;

void commit(TransactionStatus status) throws TransactionException;

void rollback(TransactionStatus status) throws TransactionException;

}This is primarily a service provider interface (SPI), although you can use it

programmatically from your application code. Because

PlatformTransactionManager is an interface, it can be easily mocked or stubbed as

necessary. It is not tied to a lookup strategy, such as JNDI.

PlatformTransactionManager implementations are defined like any other object (or bean)

in the Spring Framework IoC container. This benefit alone makes Spring Framework

transactions a worthwhile abstraction, even when you work with JTA. You can test

transactional code much more easily than if it used JTA directly.

Again, in keeping with Spring’s philosophy, the TransactionException that can be thrown

by any of the PlatformTransactionManager interface’s methods is unchecked (that

is, it extends the java.lang.RuntimeException class). Transaction infrastructure

failures are almost invariably fatal. In rare cases where application code can actually

recover from a transaction failure, the application developer can still choose to catch

and handle TransactionException. The salient point is that developers are not

forced to do so.

The getTransaction(..) method returns a TransactionStatus object, depending on a

TransactionDefinition parameter. The returned TransactionStatus might represent a

new transaction or can represent an existing transaction, if a matching transaction

exists in the current call stack. The implication in this latter case is that, as with

Java EE transaction contexts, a TransactionStatus is associated with a thread of

execution.

As of Spring Framework 5.2, Spring also provides a transaction management abstraction for

reactive applications that make use of reactive types or Kotlin Coroutines. The following

listing shows the transaction strategy defined by

org.springframework.transaction.ReactiveTransactionManager:

public interface ReactiveTransactionManager extends TransactionManager {

Mono<ReactiveTransaction> getReactiveTransaction(TransactionDefinition definition) throws TransactionException;

Mono<Void> commit(ReactiveTransaction status) throws TransactionException;

Mono<Void> rollback(ReactiveTransaction status) throws TransactionException;

}The reactive transaction manager is primarily a service provider interface (SPI),

although you can use it programmatically from your

application code. Because ReactiveTransactionManager is an interface, it can be easily

mocked or stubbed as necessary.

The TransactionDefinition interface specifies:

-

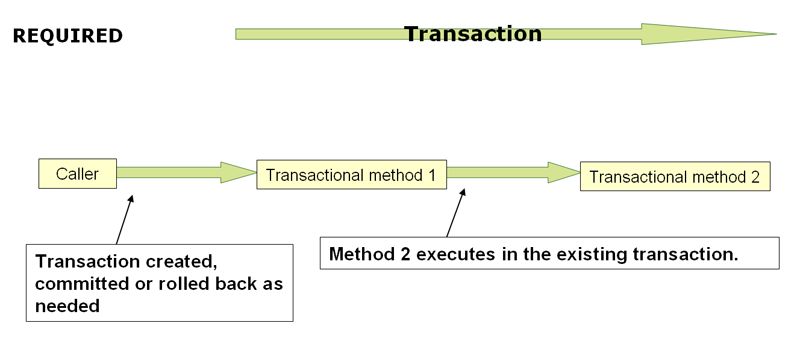

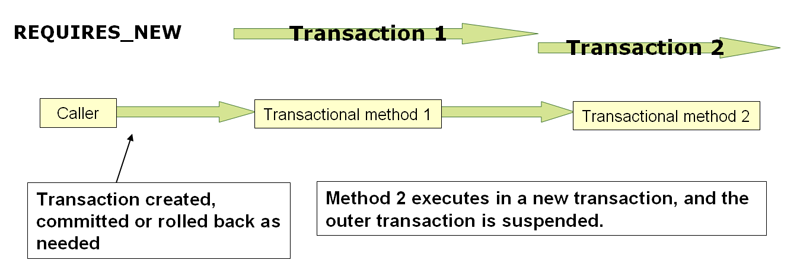

Propagation: Typically, all code within a transaction scope runs in that transaction. However, you can specify the behavior if a transactional method is run when a transaction context already exists. For example, code can continue running in the existing transaction (the common case), or the existing transaction can be suspended and a new transaction created. Spring offers all of the transaction propagation options familiar from EJB CMT. To read about the semantics of transaction propagation in Spring, see Transaction Propagation.

-

Isolation: The degree to which this transaction is isolated from the work of other transactions. For example, can this transaction see uncommitted writes from other transactions?

-

Timeout: How long this transaction runs before timing out and being automatically rolled back by the underlying transaction infrastructure.

-

Read-only status: You can use a read-only transaction when your code reads but does not modify data. Read-only transactions can be a useful optimization in some cases, such as when you use Hibernate.

These settings reflect standard transactional concepts. If necessary, refer to resources that discuss transaction isolation levels and other core transaction concepts. Understanding these concepts is essential to using the Spring Framework or any transaction management solution.

The TransactionStatus interface provides a simple way for transactional code to

control transaction execution and query transaction status. The concepts should be

familiar, as they are common to all transaction APIs. The following listing shows the

TransactionStatus interface:

public interface TransactionStatus extends TransactionExecution, SavepointManager, Flushable {

@Override

boolean isNewTransaction();

boolean hasSavepoint();

@Override

void setRollbackOnly();

@Override

boolean isRollbackOnly();

void flush();

@Override

boolean isCompleted();

}Regardless of whether you opt for declarative or programmatic transaction management in

Spring, defining the correct TransactionManager implementation is absolutely essential.

You typically define this implementation through dependency injection.

TransactionManager implementations normally require knowledge of the environment in

which they work: JDBC, JTA, Hibernate, and so on. The following examples show how you can

define a local PlatformTransactionManager implementation (in this case, with plain

JDBC.)

You can define a JDBC DataSource by creating a bean similar to the following:

<bean id="dataSource" class="org.apache.commons.dbcp.BasicDataSource" destroy-method="close">

<property name="driverClassName" value="${jdbc.driverClassName}" />

<property name="url" value="${jdbc.url}" />

<property name="username" value="${jdbc.username}" />

<property name="password" value="${jdbc.password}" />

</bean>The related PlatformTransactionManager bean definition then has a reference to the

DataSource definition. It should resemble the following example:

<bean id="txManager" class="org.springframework.jdbc.datasource.DataSourceTransactionManager">

<property name="dataSource" ref="dataSource"/>

</bean>If you use JTA in a Java EE container, then you use a container DataSource, obtained

through JNDI, in conjunction with Spring’s JtaTransactionManager. The following example

shows what the JTA and JNDI lookup version would look like:

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:jee="http://www.springframework.org/schema/jee"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/jee

https://www.springframework.org/schema/jee/spring-jee.xsd">

<jee:jndi-lookup id="dataSource" jndi-name="jdbc/jpetstore"/>

<bean id="txManager" class="org.springframework.transaction.jta.JtaTransactionManager" />

<!-- other <bean/> definitions here -->

</beans>The JtaTransactionManager does not need to know about the DataSource (or any other

specific resources) because it uses the container’s global transaction management

infrastructure.

The preceding definition of the dataSource bean uses the <jndi-lookup/> tag

from the jee namespace. For more information see

The JEE Schema.

|

| If you use JTA, your transaction manager definition should look the same, regardless of what data access technology you use, be it JDBC, Hibernate JPA, or any other supported technology. This is due to the fact that JTA transactions are global transactions, which can enlist any transactional resource. |

In all Spring transaction setups, application code does not need to change. You can change how transactions are managed merely by changing configuration, even if that change means moving from local to global transactions or vice versa.

1.2.1. Hibernate Transaction Setup

You can also easily use Hibernate local transactions, as shown in the following examples.

In this case, you need to define a Hibernate LocalSessionFactoryBean, which your

application code can use to obtain Hibernate Session instances.

The DataSource bean definition is similar to the local JDBC example shown previously

and, thus, is not shown in the following example.

If the DataSource (used by any non-JTA transaction manager) is looked up through

JNDI and managed by a Java EE container, it should be non-transactional, because the

Spring Framework (rather than the Java EE container) manages the transactions.

|

The txManager bean in this case is of the HibernateTransactionManager type. In the

same way as the DataSourceTransactionManager needs a reference to the DataSource, the

HibernateTransactionManager needs a reference to the SessionFactory. The following

example declares sessionFactory and txManager beans:

<bean id="sessionFactory" class="org.springframework.orm.hibernate5.LocalSessionFactoryBean">

<property name="dataSource" ref="dataSource"/>

<property name="mappingResources">

<list>

<value>org/springframework/samples/petclinic/hibernate/petclinic.hbm.xml</value>

</list>

</property>

<property name="hibernateProperties">

<value>

hibernate.dialect=${hibernate.dialect}

</value>

</property>

</bean>

<bean id="txManager" class="org.springframework.orm.hibernate5.HibernateTransactionManager">

<property name="sessionFactory" ref="sessionFactory"/>

</bean>If you use Hibernate and Java EE container-managed JTA transactions, you should use the

same JtaTransactionManager as in the previous JTA example for JDBC, as the following

example shows. Also, it is recommended to make Hibernate aware of JTA through its

transaction coordinator and possibly also its connection release mode configuration:

<bean id="sessionFactory" class="org.springframework.orm.hibernate5.LocalSessionFactoryBean">

<property name="dataSource" ref="dataSource"/>

<property name="mappingResources">

<list>

<value>org/springframework/samples/petclinic/hibernate/petclinic.hbm.xml</value>

</list>

</property>

<property name="hibernateProperties">

<value>

hibernate.dialect=${hibernate.dialect}

hibernate.transaction.coordinator_class=jta

hibernate.connection.handling_mode=DELAYED_ACQUISITION_AND_RELEASE_AFTER_STATEMENT

</value>

</property>

</bean>

<bean id="txManager" class="org.springframework.transaction.jta.JtaTransactionManager"/>Or alternatively, you may pass the JtaTransactionManager into your LocalSessionFactoryBean

for enforcing the same defaults:

<bean id="sessionFactory" class="org.springframework.orm.hibernate5.LocalSessionFactoryBean">

<property name="dataSource" ref="dataSource"/>

<property name="mappingResources">

<list>

<value>org/springframework/samples/petclinic/hibernate/petclinic.hbm.xml</value>

</list>

</property>

<property name="hibernateProperties">

<value>

hibernate.dialect=${hibernate.dialect}

</value>

</property>

<property name="jtaTransactionManager" ref="txManager"/>

</bean>

<bean id="txManager" class="org.springframework.transaction.jta.JtaTransactionManager"/>1.3. Synchronizing Resources with Transactions

How to create different transaction managers and how they are linked to related resources

that need to be synchronized to transactions (for example DataSourceTransactionManager

to a JDBC DataSource, HibernateTransactionManager to a Hibernate SessionFactory,

and so forth) should now be clear. This section describes how the application code

(directly or indirectly, by using a persistence API such as JDBC, Hibernate, or JPA)

ensures that these resources are created, reused, and cleaned up properly. The section

also discusses how transaction synchronization is (optionally) triggered through the

relevant TransactionManager.

1.3.1. High-level Synchronization Approach

The preferred approach is to use Spring’s highest-level template-based persistence

integration APIs or to use native ORM APIs with transaction-aware factory beans or

proxies for managing the native resource factories. These transaction-aware solutions

internally handle resource creation and reuse, cleanup, optional transaction

synchronization of the resources, and exception mapping. Thus, user data access code does

not have to address these tasks but can focus purely on non-boilerplate

persistence logic. Generally, you use the native ORM API or take a template approach

for JDBC access by using the JdbcTemplate. These solutions are detailed in subsequent

sections of this reference documentation.

1.3.2. Low-level Synchronization Approach

Classes such as DataSourceUtils (for JDBC), EntityManagerFactoryUtils (for JPA),

SessionFactoryUtils (for Hibernate), and so on exist at a lower level. When you want the

application code to deal directly with the resource types of the native persistence APIs,

you use these classes to ensure that proper Spring Framework-managed instances are obtained,

transactions are (optionally) synchronized, and exceptions that occur in the process are

properly mapped to a consistent API.

For example, in the case of JDBC, instead of the traditional JDBC approach of calling

the getConnection() method on the DataSource, you can instead use Spring’s

org.springframework.jdbc.datasource.DataSourceUtils class, as follows:

Connection conn = DataSourceUtils.getConnection(dataSource);If an existing transaction already has a connection synchronized (linked) to it, that

instance is returned. Otherwise, the method call triggers the creation of a new

connection, which is (optionally) synchronized to any existing transaction and made

available for subsequent reuse in that same transaction. As mentioned earlier, any

SQLException is wrapped in a Spring Framework CannotGetJdbcConnectionException, one

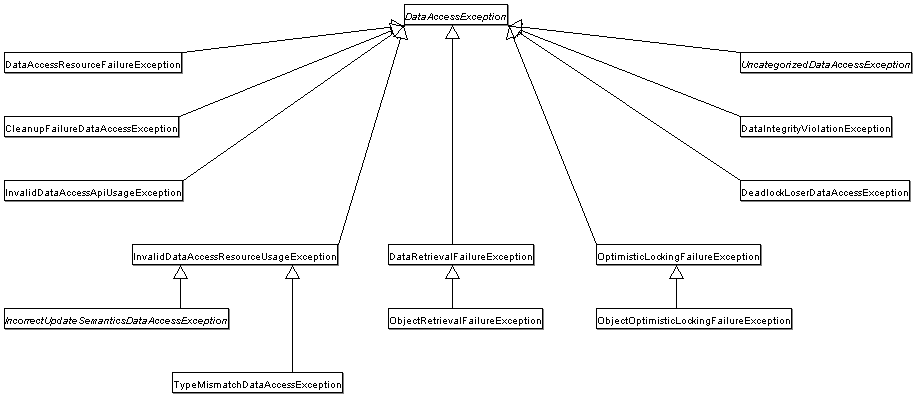

of the Spring Framework’s hierarchy of unchecked DataAccessException types. This approach

gives you more information than can be obtained easily from the SQLException and

ensures portability across databases and even across different persistence technologies.

This approach also works without Spring transaction management (transaction synchronization is optional), so you can use it whether or not you use Spring for transaction management.

Of course, once you have used Spring’s JDBC support, JPA support, or Hibernate support,

you generally prefer not to use DataSourceUtils or the other helper classes,

because you are much happier working through the Spring abstraction than directly

with the relevant APIs. For example, if you use the Spring JdbcTemplate or

jdbc.object package to simplify your use of JDBC, correct connection retrieval occurs

behind the scenes and you need not write any special code.

1.3.3. TransactionAwareDataSourceProxy

At the very lowest level exists the TransactionAwareDataSourceProxy class. This is a

proxy for a target DataSource, which wraps the target DataSource to add awareness of

Spring-managed transactions. In this respect, it is similar to a transactional JNDI

DataSource, as provided by a Java EE server.

You should almost never need or want to use this class, except when existing

code must be called and passed a standard JDBC DataSource interface implementation. In

that case, it is possible that this code is usable but is participating in Spring-managed

transactions. You can write your new code by using the higher-level

abstractions mentioned earlier.

1.4. Declarative Transaction Management

| Most Spring Framework users choose declarative transaction management. This option has the least impact on application code and, hence, is most consistent with the ideals of a non-invasive lightweight container. |

The Spring Framework’s declarative transaction management is made possible with Spring aspect-oriented programming (AOP). However, as the transactional aspects code comes with the Spring Framework distribution and may be used in a boilerplate fashion, AOP concepts do not generally have to be understood to make effective use of this code.

The Spring Framework’s declarative transaction management is similar to EJB CMT, in that

you can specify transaction behavior (or lack of it) down to the individual method level.

You can make a setRollbackOnly() call within a transaction context, if

necessary. The differences between the two types of transaction management are:

-

Unlike EJB CMT, which is tied to JTA, the Spring Framework’s declarative transaction management works in any environment. It can work with JTA transactions or local transactions by using JDBC, JPA, or Hibernate by adjusting the configuration files.

-

You can apply the Spring Framework declarative transaction management to any class, not merely special classes such as EJBs.

-

The Spring Framework offers declarative rollback rules, a feature with no EJB equivalent. Both programmatic and declarative support for rollback rules is provided.

-

The Spring Framework lets you customize transactional behavior by using AOP. For example, you can insert custom behavior in the case of transaction rollback. You can also add arbitrary advice, along with transactional advice. With EJB CMT, you cannot influence the container’s transaction management, except with

setRollbackOnly(). -

The Spring Framework does not support propagation of transaction contexts across remote calls, as high-end application servers do. If you need this feature, we recommend that you use EJB. However, consider carefully before using such a feature, because, normally, one does not want transactions to span remote calls.

The concept of rollback rules is important. They let you specify which exceptions

(and throwables) should cause automatic rollback. You can specify this declaratively, in

configuration, not in Java code. So, although you can still call setRollbackOnly() on

the TransactionStatus object to roll back the current transaction back, most often you

can specify a rule that MyApplicationException must always result in rollback. The

significant advantage to this option is that business objects do not depend on the

transaction infrastructure. For example, they typically do not need to import Spring

transaction APIs or other Spring APIs.

Although EJB container default behavior automatically rolls back the transaction on a

system exception (usually a runtime exception), EJB CMT does not roll back the

transaction automatically on an application exception (that is, a checked exception

other than java.rmi.RemoteException). While the Spring default behavior for

declarative transaction management follows EJB convention (roll back is automatic only

on unchecked exceptions), it is often useful to customize this behavior.

1.4.1. Understanding the Spring Framework’s Declarative Transaction Implementation

It is not sufficient merely to tell you to annotate your classes with the

@Transactional annotation, add @EnableTransactionManagement to your configuration,

and expect you to understand how it all works. To provide a deeper understanding, this

section explains the inner workings of the Spring Framework’s declarative transaction

infrastructure in the context of transaction-related issues.

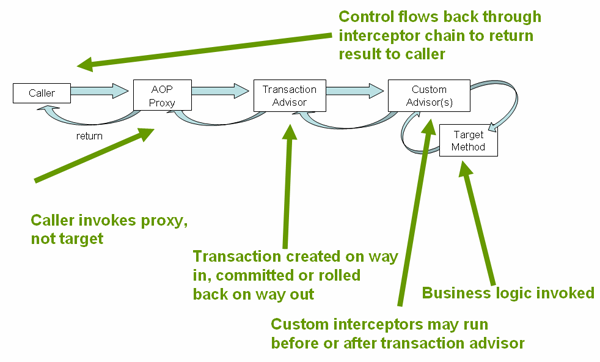

The most important concepts to grasp with regard to the Spring Framework’s declarative

transaction support are that this support is enabled

via AOP proxies and that the transactional

advice is driven by metadata (currently XML- or annotation-based). The combination of AOP

with transactional metadata yields an AOP proxy that uses a TransactionInterceptor in

conjunction with an appropriate TransactionManager implementation to drive transactions

around method invocations.

| Spring AOP is covered in the AOP section. |

Spring Framework’s TransactionInterceptor provides transaction management for

imperative and reactive programming models. The interceptor detects the desired flavor of

transaction management by inspecting the method return type. Methods returning a reactive

type such as Publisher or Kotlin Flow (or a subtype of those) qualify for reactive

transaction management. All other return types including void use the code path for

imperative transaction management.

Transaction management flavors impact which transaction manager is required. Imperative

transactions require a PlatformTransactionManager, while reactive transactions use

ReactiveTransactionManager implementations.

|

A reactive transaction managed by |

The following image shows a conceptual view of calling a method on a transactional proxy:

1.4.2. Example of Declarative Transaction Implementation

Consider the following interface and its attendant implementation. This example uses

Foo and Bar classes as placeholders so that you can concentrate on the transaction

usage without focusing on a particular domain model. For the purposes of this example,

the fact that the DefaultFooService class throws UnsupportedOperationException

instances in the body of each implemented method is good. That behavior lets you see

transactions being created and then rolled back in response to the

UnsupportedOperationException instance. The following listing shows the FooService

interface:

// the service interface that we want to make transactional

package x.y.service;

public interface FooService {

Foo getFoo(String fooName);

Foo getFoo(String fooName, String barName);

void insertFoo(Foo foo);

void updateFoo(Foo foo);

}// the service interface that we want to make transactional

package x.y.service

interface FooService {

fun getFoo(fooName: String): Foo

fun getFoo(fooName: String, barName: String): Foo

fun insertFoo(foo: Foo)

fun updateFoo(foo: Foo)

}The following example shows an implementation of the preceding interface:

package x.y.service;

public class DefaultFooService implements FooService {

@Override

public Foo getFoo(String fooName) {

// ...

}

@Override

public Foo getFoo(String fooName, String barName) {

// ...

}

@Override

public void insertFoo(Foo foo) {

// ...

}

@Override

public void updateFoo(Foo foo) {

// ...

}

}package x.y.service

class DefaultFooService : FooService {

override fun getFoo(fooName: String): Foo {

// ...

}

override fun getFoo(fooName: String, barName: String): Foo {

// ...

}

override fun insertFoo(foo: Foo) {

// ...

}

override fun updateFoo(foo: Foo) {

// ...

}

}Assume that the first two methods of the FooService interface, getFoo(String) and

getFoo(String, String), must run in the context of a transaction with read-only

semantics and that the other methods, insertFoo(Foo) and updateFoo(Foo), must

run in the context of a transaction with read-write semantics. The following

configuration is explained in detail in the next few paragraphs:

<!-- from the file 'context.xml' -->

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:aop="http://www.springframework.org/schema/aop"

xmlns:tx="http://www.springframework.org/schema/tx"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/tx

https://www.springframework.org/schema/tx/spring-tx.xsd

http://www.springframework.org/schema/aop

https://www.springframework.org/schema/aop/spring-aop.xsd">

<!-- this is the service object that we want to make transactional -->

<bean id="fooService" class="x.y.service.DefaultFooService"/>

<!-- the transactional advice (what 'happens'; see the <aop:advisor/> bean below) -->

<tx:advice id="txAdvice" transaction-manager="txManager">

<!-- the transactional semantics... -->

<tx:attributes>

<!-- all methods starting with 'get' are read-only -->

<tx:method name="get*" read-only="true"/>

<!-- other methods use the default transaction settings (see below) -->

<tx:method name="*"/>

</tx:attributes>

</tx:advice>

<!-- ensure that the above transactional advice runs for any execution

of an operation defined by the FooService interface -->

<aop:config>

<aop:pointcut id="fooServiceOperation" expression="execution(* x.y.service.FooService.*(..))"/>

<aop:advisor advice-ref="txAdvice" pointcut-ref="fooServiceOperation"/>

</aop:config>

<!-- don't forget the DataSource -->

<bean id="dataSource" class="org.apache.commons.dbcp.BasicDataSource" destroy-method="close">

<property name="driverClassName" value="oracle.jdbc.driver.OracleDriver"/>

<property name="url" value="jdbc:oracle:thin:@rj-t42:1521:elvis"/>

<property name="username" value="scott"/>

<property name="password" value="tiger"/>

</bean>

<!-- similarly, don't forget the TransactionManager -->

<bean id="txManager" class="org.springframework.jdbc.datasource.DataSourceTransactionManager">

<property name="dataSource" ref="dataSource"/>

</bean>

<!-- other <bean/> definitions here -->

</beans>Examine the preceding configuration. It assumes that you want to make a service object,

the fooService bean, transactional. The transaction semantics to apply are encapsulated

in the <tx:advice/> definition. The <tx:advice/> definition reads as "all methods

starting with get are to run in the context of a read-only transaction, and all

other methods are to run with the default transaction semantics". The

transaction-manager attribute of the <tx:advice/> tag is set to the name of the

TransactionManager bean that is going to drive the transactions (in this case, the

txManager bean).

You can omit the transaction-manager attribute in the transactional advice

(<tx:advice/>) if the bean name of the TransactionManager that you want to

wire in has the name transactionManager. If the TransactionManager bean that

you want to wire in has any other name, you must use the transaction-manager

attribute explicitly, as in the preceding example.

|

The <aop:config/> definition ensures that the transactional advice defined by the

txAdvice bean runs at the appropriate points in the program. First, you define a

pointcut that matches the execution of any operation defined in the FooService interface

(fooServiceOperation). Then you associate the pointcut with the txAdvice by using an

advisor. The result indicates that, at the execution of a fooServiceOperation,

the advice defined by txAdvice is run.

The expression defined within the <aop:pointcut/> element is an AspectJ pointcut

expression. See the AOP section for more details on pointcut

expressions in Spring.

A common requirement is to make an entire service layer transactional. The best way to do this is to change the pointcut expression to match any operation in your service layer. The following example shows how to do so:

<aop:config>

<aop:pointcut id="fooServiceMethods" expression="execution(* x.y.service.*.*(..))"/>

<aop:advisor advice-ref="txAdvice" pointcut-ref="fooServiceMethods"/>

</aop:config>

In the preceding example, it is assumed that all your service interfaces are defined

in the x.y.service package. See the AOP section for more details.

|

Now that we have analyzed the configuration, you may be asking yourself, "What does all this configuration actually do?"

The configuration shown earlier is used to create a transactional proxy around the object

that is created from the fooService bean definition. The proxy is configured with

the transactional advice so that, when an appropriate method is invoked on the proxy,

a transaction is started, suspended, marked as read-only, and so on, depending on the

transaction configuration associated with that method. Consider the following program

that test drives the configuration shown earlier:

public final class Boot {

public static void main(final String[] args) throws Exception {

ApplicationContext ctx = new ClassPathXmlApplicationContext("context.xml");

FooService fooService = ctx.getBean(FooService.class);

fooService.insertFoo(new Foo());

}

}import org.springframework.beans.factory.getBean

fun main() {

val ctx = ClassPathXmlApplicationContext("context.xml")

val fooService = ctx.getBean<FooService>("fooService")

fooService.insertFoo(Foo())

}The output from running the preceding program should resemble the following (the Log4J

output and the stack trace from the UnsupportedOperationException thrown by the

insertFoo(..) method of the DefaultFooService class have been truncated for clarity):

<!-- the Spring container is starting up... -->

[AspectJInvocationContextExposingAdvisorAutoProxyCreator] - Creating implicit proxy for bean 'fooService' with 0 common interceptors and 1 specific interceptors

<!-- the DefaultFooService is actually proxied -->

[JdkDynamicAopProxy] - Creating JDK dynamic proxy for [x.y.service.DefaultFooService]

<!-- ... the insertFoo(..) method is now being invoked on the proxy -->

[TransactionInterceptor] - Getting transaction for x.y.service.FooService.insertFoo

<!-- the transactional advice kicks in here... -->

[DataSourceTransactionManager] - Creating new transaction with name [x.y.service.FooService.insertFoo]

[DataSourceTransactionManager] - Acquired Connection [org.apache.commons.dbcp.PoolableConnection@a53de4] for JDBC transaction

<!-- the insertFoo(..) method from DefaultFooService throws an exception... -->

[RuleBasedTransactionAttribute] - Applying rules to determine whether transaction should rollback on java.lang.UnsupportedOperationException

[TransactionInterceptor] - Invoking rollback for transaction on x.y.service.FooService.insertFoo due to throwable [java.lang.UnsupportedOperationException]

<!-- and the transaction is rolled back (by default, RuntimeException instances cause rollback) -->

[DataSourceTransactionManager] - Rolling back JDBC transaction on Connection [org.apache.commons.dbcp.PoolableConnection@a53de4]

[DataSourceTransactionManager] - Releasing JDBC Connection after transaction

[DataSourceUtils] - Returning JDBC Connection to DataSource

Exception in thread "main" java.lang.UnsupportedOperationException at x.y.service.DefaultFooService.insertFoo(DefaultFooService.java:14)

<!-- AOP infrastructure stack trace elements removed for clarity -->

at $Proxy0.insertFoo(Unknown Source)

at Boot.main(Boot.java:11)To use reactive transaction management the code has to use reactive types.

Spring Framework uses the ReactiveAdapterRegistry to determine whether a method

return type is reactive.

|

The following listing shows a modified version of the previously used FooService, but

this time the code uses reactive types:

// the reactive service interface that we want to make transactional

package x.y.service;

public interface FooService {

Flux<Foo> getFoo(String fooName);

Publisher<Foo> getFoo(String fooName, String barName);

Mono<Void> insertFoo(Foo foo);

Mono<Void> updateFoo(Foo foo);

}// the reactive service interface that we want to make transactional

package x.y.service

interface FooService {

fun getFoo(fooName: String): Flow<Foo>

fun getFoo(fooName: String, barName: String): Publisher<Foo>

fun insertFoo(foo: Foo) : Mono<Void>

fun updateFoo(foo: Foo) : Mono<Void>

}The following example shows an implementation of the preceding interface:

package x.y.service;

public class DefaultFooService implements FooService {

@Override

public Flux<Foo> getFoo(String fooName) {

// ...

}

@Override

public Publisher<Foo> getFoo(String fooName, String barName) {

// ...

}

@Override

public Mono<Void> insertFoo(Foo foo) {

// ...

}

@Override

public Mono<Void> updateFoo(Foo foo) {

// ...

}

}package x.y.service

class DefaultFooService : FooService {

override fun getFoo(fooName: String): Flow<Foo> {

// ...

}

override fun getFoo(fooName: String, barName: String): Publisher<Foo> {

// ...

}

override fun insertFoo(foo: Foo): Mono<Void> {

// ...

}

override fun updateFoo(foo: Foo): Mono<Void> {

// ...

}

}Imperative and reactive transaction management share the same semantics for transaction

boundary and transaction attribute definitions. The main difference between imperative

and reactive transactions is the deferred nature of the latter. TransactionInterceptor

decorates the returned reactive type with a transactional operator to begin and clean up

the transaction. Therefore, calling a transactional reactive method defers the actual

transaction management to a subscription type that activates processing of the reactive

type.

Another aspect of reactive transaction management relates to data escaping which is a natural consequence of the programming model.

Method return values of imperative transactions are returned from transactional methods upon successful termination of a method so that partially computed results do not escape the method closure.

Reactive transaction methods return a reactive wrapper type which represents a computation sequence along with a promise to begin and complete the computation.

A Publisher can emit data while a transaction is ongoing but not necessarily completed.

Therefore, methods that depend upon successful completion of an entire transaction need

to ensure completion and buffer results in the calling code.

1.4.3. Rolling Back a Declarative Transaction

The previous section outlined the basics of how to specify transactional settings for classes, typically service layer classes, declaratively in your application. This section describes how you can control the rollback of transactions in a simple, declarative fashion.

The recommended way to indicate to the Spring Framework’s transaction infrastructure

that a transaction’s work is to be rolled back is to throw an Exception from code that

is currently executing in the context of a transaction. The Spring Framework’s

transaction infrastructure code catches any unhandled Exception as it bubbles up

the call stack and makes a determination whether to mark the transaction for rollback.

In its default configuration, the Spring Framework’s transaction infrastructure code

marks a transaction for rollback only in the case of runtime, unchecked exceptions.

That is, when the thrown exception is an instance or subclass of RuntimeException. (

Error instances also, by default, result in a rollback). Checked exceptions that are

thrown from a transactional method do not result in rollback in the default

configuration.

You can configure exactly which Exception types mark a transaction for rollback,

including checked exceptions. The following XML snippet demonstrates how you configure

rollback for a checked, application-specific Exception type:

<tx:advice id="txAdvice" transaction-manager="txManager">

<tx:attributes>

<tx:method name="get*" read-only="true" rollback-for="NoProductInStockException"/>

<tx:method name="*"/>

</tx:attributes>

</tx:advice>If you do not want a transaction rolled

back when an exception is thrown, you can also specify 'no rollback rules'. The following example tells the Spring Framework’s

transaction infrastructure to commit the attendant transaction even in the face of an

unhandled InstrumentNotFoundException:

<tx:advice id="txAdvice">

<tx:attributes>

<tx:method name="updateStock" no-rollback-for="InstrumentNotFoundException"/>

<tx:method name="*"/>

</tx:attributes>

</tx:advice>When the Spring Framework’s transaction infrastructure catches an exception and it

consults the configured rollback rules to determine whether to mark the transaction for

rollback, the strongest matching rule wins. So, in the case of the following

configuration, any exception other than an InstrumentNotFoundException results in a

rollback of the attendant transaction:

<tx:advice id="txAdvice">

<tx:attributes>

<tx:method name="*" rollback-for="Throwable" no-rollback-for="InstrumentNotFoundException"/>

</tx:attributes>

</tx:advice>You can also indicate a required rollback programmatically. Although simple, this process is quite invasive and tightly couples your code to the Spring Framework’s transaction infrastructure. The following example shows how to programmatically indicate a required rollback:

public void resolvePosition() {

try {

// some business logic...

} catch (NoProductInStockException ex) {

// trigger rollback programmatically

TransactionAspectSupport.currentTransactionStatus().setRollbackOnly();

}

}fun resolvePosition() {

try {

// some business logic...

} catch (ex: NoProductInStockException) {

// trigger rollback programmatically

TransactionAspectSupport.currentTransactionStatus().setRollbackOnly();

}

}You are strongly encouraged to use the declarative approach to rollback, if at all possible. Programmatic rollback is available should you absolutely need it, but its usage flies in the face of achieving a clean POJO-based architecture.

1.4.4. Configuring Different Transactional Semantics for Different Beans

Consider the scenario where you have a number of service layer objects, and you want to

apply a totally different transactional configuration to each of them. You can do so

by defining distinct <aop:advisor/> elements with differing pointcut and

advice-ref attribute values.

As a point of comparison, first assume that all of your service layer classes are

defined in a root x.y.service package. To make all beans that are instances of classes

defined in that package (or in subpackages) and that have names ending in Service have

the default transactional configuration, you could write the following:

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:aop="http://www.springframework.org/schema/aop"

xmlns:tx="http://www.springframework.org/schema/tx"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/tx

https://www.springframework.org/schema/tx/spring-tx.xsd

http://www.springframework.org/schema/aop

https://www.springframework.org/schema/aop/spring-aop.xsd">

<aop:config>

<aop:pointcut id="serviceOperation"

expression="execution(* x.y.service..*Service.*(..))"/>

<aop:advisor pointcut-ref="serviceOperation" advice-ref="txAdvice"/>

</aop:config>

<!-- these two beans will be transactional... -->

<bean id="fooService" class="x.y.service.DefaultFooService"/>

<bean id="barService" class="x.y.service.extras.SimpleBarService"/>

<!-- ... and these two beans won't -->

<bean id="anotherService" class="org.xyz.SomeService"/> <!-- (not in the right package) -->

<bean id="barManager" class="x.y.service.SimpleBarManager"/> <!-- (doesn't end in 'Service') -->

<tx:advice id="txAdvice">

<tx:attributes>

<tx:method name="get*" read-only="true"/>

<tx:method name="*"/>

</tx:attributes>

</tx:advice>

<!-- other transaction infrastructure beans such as a TransactionManager omitted... -->

</beans>The following example shows how to configure two distinct beans with totally different transactional settings:

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:aop="http://www.springframework.org/schema/aop"

xmlns:tx="http://www.springframework.org/schema/tx"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/tx

https://www.springframework.org/schema/tx/spring-tx.xsd

http://www.springframework.org/schema/aop

https://www.springframework.org/schema/aop/spring-aop.xsd">

<aop:config>

<aop:pointcut id="defaultServiceOperation"

expression="execution(* x.y.service.*Service.*(..))"/>

<aop:pointcut id="noTxServiceOperation"

expression="execution(* x.y.service.ddl.DefaultDdlManager.*(..))"/>

<aop:advisor pointcut-ref="defaultServiceOperation" advice-ref="defaultTxAdvice"/>

<aop:advisor pointcut-ref="noTxServiceOperation" advice-ref="noTxAdvice"/>

</aop:config>

<!-- this bean will be transactional (see the 'defaultServiceOperation' pointcut) -->

<bean id="fooService" class="x.y.service.DefaultFooService"/>

<!-- this bean will also be transactional, but with totally different transactional settings -->

<bean id="anotherFooService" class="x.y.service.ddl.DefaultDdlManager"/>

<tx:advice id="defaultTxAdvice">

<tx:attributes>

<tx:method name="get*" read-only="true"/>

<tx:method name="*"/>

</tx:attributes>

</tx:advice>

<tx:advice id="noTxAdvice">

<tx:attributes>

<tx:method name="*" propagation="NEVER"/>

</tx:attributes>

</tx:advice>

<!-- other transaction infrastructure beans such as a TransactionManager omitted... -->

</beans>1.4.5. <tx:advice/> Settings

This section summarizes the various transactional settings that you can specify by using

the <tx:advice/> tag. The default <tx:advice/> settings are:

-

The propagation setting is

REQUIRED. -

The isolation level is

DEFAULT. -

The transaction is read-write.

-

The transaction timeout defaults to the default timeout of the underlying transaction system or none if timeouts are not supported.

-

Any

RuntimeExceptiontriggers rollback, and any checkedExceptiondoes not.

You can change these default settings. The following table summarizes the various attributes of the <tx:method/> tags

that are nested within <tx:advice/> and <tx:attributes/> tags:

| Attribute | Required? | Default | Description |

|---|---|---|---|

|

Yes |

Method names with which the transaction attributes are to be associated. The

wildcard (*) character can be used to associate the same transaction attribute

settings with a number of methods (for example, |

|

|

No |

|

Transaction propagation behavior. |

|

No |

|

Transaction isolation level. Only applicable to propagation settings of |

|

No |

-1 |

Transaction timeout (seconds). Only applicable to propagation |

|

No |

false |

Read-write versus read-only transaction. Applies only to |

|

No |

Comma-delimited list of |

|

|

No |

Comma-delimited list of |

1.4.6. Using @Transactional

In addition to the XML-based declarative approach to transaction configuration, you can use an annotation-based approach. Declaring transaction semantics directly in the Java source code puts the declarations much closer to the affected code. There is not much danger of undue coupling, because code that is meant to be used transactionally is almost always deployed that way anyway.

The standard javax.transaction.Transactional annotation is also supported as a

drop-in replacement to Spring’s own annotation. Please refer to JTA 1.2 documentation

for more details.

|

The ease-of-use afforded by the use of the @Transactional annotation is best

illustrated with an example, which is explained in the text that follows.

Consider the following class definition:

// the service class that we want to make transactional

@Transactional

public class DefaultFooService implements FooService {

@Override

public Foo getFoo(String fooName) {

// ...

}

@Override

public Foo getFoo(String fooName, String barName) {

// ...

}

@Override

public void insertFoo(Foo foo) {

// ...

}

@Override

public void updateFoo(Foo foo) {

// ...

}

}// the service class that we want to make transactional

@Transactional

class DefaultFooService : FooService {

override fun getFoo(fooName: String): Foo {

// ...

}

override fun getFoo(fooName: String, barName: String): Foo {

// ...

}

override fun insertFoo(foo: Foo) {

// ...

}

override fun updateFoo(foo: Foo) {

// ...

}

}Used at the class level as above, the annotation indicates a default for all methods of

the declaring class (as well as its subclasses). Alternatively, each method can be

annotated individually. See Method visibility and @Transactional for

further details on which methods Spring considers transactional. Note that a class-level

annotation does not apply to ancestor classes up the class hierarchy; in such a scenario,

inherited methods need to be locally redeclared in order to participate in a

subclass-level annotation.

When a POJO class such as the one above is defined as a bean in a Spring context,

you can make the bean instance transactional through an @EnableTransactionManagement

annotation in a @Configuration class. See the

javadoc

for full details.

In XML configuration, the <tx:annotation-driven/> tag provides similar convenience:

<!-- from the file 'context.xml' -->

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:aop="http://www.springframework.org/schema/aop"

xmlns:tx="http://www.springframework.org/schema/tx"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/tx

https://www.springframework.org/schema/tx/spring-tx.xsd

http://www.springframework.org/schema/aop

https://www.springframework.org/schema/aop/spring-aop.xsd">

<!-- this is the service object that we want to make transactional -->

<bean id="fooService" class="x.y.service.DefaultFooService"/>

<!-- enable the configuration of transactional behavior based on annotations -->

<!-- a TransactionManager is still required -->

<tx:annotation-driven transaction-manager="txManager"/> (1)

<bean id="txManager" class="org.springframework.jdbc.datasource.DataSourceTransactionManager">

<!-- (this dependency is defined somewhere else) -->

<property name="dataSource" ref="dataSource"/>

</bean>

<!-- other <bean/> definitions here -->

</beans>| 1 | The line that makes the bean instance transactional. |

You can omit the transaction-manager attribute in the <tx:annotation-driven/>

tag if the bean name of the TransactionManager that you want to wire in has the name

transactionManager. If the TransactionManager bean that you want to dependency-inject

has any other name, you have to use the transaction-manager attribute, as in the

preceding example.

|

Reactive transactional methods use reactive return types in contrast to imperative programming arrangements as the following listing shows:

// the reactive service class that we want to make transactional

@Transactional

public class DefaultFooService implements FooService {

@Override

public Publisher<Foo> getFoo(String fooName) {

// ...

}

@Override

public Mono<Foo> getFoo(String fooName, String barName) {

// ...

}

@Override

public Mono<Void> insertFoo(Foo foo) {

// ...

}

@Override

public Mono<Void> updateFoo(Foo foo) {

// ...

}

}// the reactive service class that we want to make transactional

@Transactional

class DefaultFooService : FooService {

override fun getFoo(fooName: String): Flow<Foo> {

// ...

}

override fun getFoo(fooName: String, barName: String): Mono<Foo> {

// ...

}

override fun insertFoo(foo: Foo): Mono<Void> {

// ...

}

override fun updateFoo(foo: Foo): Mono<Void> {

// ...

}

}Note that there are special considerations for the returned Publisher with regards to

Reactive Streams cancellation signals. See the Cancel Signals section under

"Using the TransactionOperator" for more details.

|

Method visibility and

@TransactionalWhen you use transactional proxies with Spring’s standard configuration, you should apply

the When using The Spring TestContext Framework supports non-private |

You can apply the @Transactional annotation to an interface definition, a method

on an interface, a class definition, or a method on a class. However, the

mere presence of the @Transactional annotation is not enough to activate the

transactional behavior. The @Transactional annotation is merely metadata that can

be consumed by some runtime infrastructure that is @Transactional-aware and that

can use the metadata to configure the appropriate beans with transactional behavior.

In the preceding example, the <tx:annotation-driven/> element switches on the

transactional behavior.

The Spring team recommends that you annotate only concrete classes (and methods of

concrete classes) with the @Transactional annotation, as opposed to annotating interfaces.

You certainly can place the @Transactional annotation on an interface (or an interface

method), but this works only as you would expect it to if you use interface-based

proxies. The fact that Java annotations are not inherited from interfaces means that,

if you use class-based proxies (proxy-target-class="true") or the weaving-based

aspect (mode="aspectj"), the transaction settings are not recognized by the proxying

and weaving infrastructure, and the object is not wrapped in a transactional proxy.

|

In proxy mode (which is the default), only external method calls coming in through

the proxy are intercepted. This means that self-invocation (in effect, a method within

the target object calling another method of the target object) does not lead to an actual

transaction at runtime even if the invoked method is marked with @Transactional. Also,

the proxy must be fully initialized to provide the expected behavior, so you should not

rely on this feature in your initialization code — for example, in a @PostConstruct

method.

|

Consider using AspectJ mode (see the mode attribute in the following table) if you

expect self-invocations to be wrapped with transactions as well. In this case, there is

no proxy in the first place. Instead, the target class is woven (that is, its byte code

is modified) to support @Transactional runtime behavior on any kind of method.

| XML Attribute | Annotation Attribute | Default | Description |

|---|---|---|---|

|

N/A (see |

|

Name of the transaction manager to use. Required only if the name of the transaction

manager is not |

|

|

|

The default mode ( |

|

|

|

Applies to |

|

|

|

Defines the order of the transaction advice that is applied to beans annotated with

|

The default advice mode for processing @Transactional annotations is proxy,

which allows for interception of calls through the proxy only. Local calls within the

same class cannot get intercepted that way. For a more advanced mode of interception,

consider switching to aspectj mode in combination with compile-time or load-time weaving.

|

The proxy-target-class attribute controls what type of transactional proxies are

created for classes annotated with the @Transactional annotation. If

proxy-target-class is set to true, class-based proxies are created. If

proxy-target-class is false or if the attribute is omitted, standard JDK

interface-based proxies are created. (See Proxying Mechanisms

for a discussion of the different proxy types.)

|

@EnableTransactionManagement and <tx:annotation-driven/> look for

@Transactional only on beans in the same application context in which they are defined.

This means that, if you put annotation-driven configuration in a WebApplicationContext

for a DispatcherServlet, it checks for @Transactional beans only in your controllers

and not in your services. See MVC for more information.

|

The most derived location takes precedence when evaluating the transactional settings

for a method. In the case of the following example, the DefaultFooService class is

annotated at the class level with the settings for a read-only transaction, but the

@Transactional annotation on the updateFoo(Foo) method in the same class takes

precedence over the transactional settings defined at the class level.

@Transactional(readOnly = true)

public class DefaultFooService implements FooService {

public Foo getFoo(String fooName) {

// ...

}

// these settings have precedence for this method

@Transactional(readOnly = false, propagation = Propagation.REQUIRES_NEW)

public void updateFoo(Foo foo) {

// ...

}

}@Transactional(readOnly = true)

class DefaultFooService : FooService {

override fun getFoo(fooName: String): Foo {

// ...

}

// these settings have precedence for this method

@Transactional(readOnly = false, propagation = Propagation.REQUIRES_NEW)

override fun updateFoo(foo: Foo) {

// ...

}

}@Transactional Settings

The @Transactional annotation is metadata that specifies that an interface, class,

or method must have transactional semantics (for example, "start a brand new read-only

transaction when this method is invoked, suspending any existing transaction").

The default @Transactional settings are as follows:

-

The propagation setting is

PROPAGATION_REQUIRED. -

The isolation level is

ISOLATION_DEFAULT. -

The transaction is read-write.

-

The transaction timeout defaults to the default timeout of the underlying transaction system, or to none if timeouts are not supported.

-

Any

RuntimeExceptiontriggers rollback, and any checkedExceptiondoes not.

You can change these default settings. The following table summarizes the various

properties of the @Transactional annotation:

| Property | Type | Description |

|---|---|---|

|

Optional qualifier that specifies the transaction manager to be used. |

|

|

Optional propagation setting. |

|

|

|

Optional isolation level. Applies only to propagation values of |

|

|

Optional transaction timeout. Applies only to propagation values of |

|

|

Read-write versus read-only transaction. Only applicable to values of |

|

Array of |

Optional array of exception classes that must cause rollback. |

|

Array of class names. The classes must be derived from |

Optional array of names of exception classes that must cause rollback. |

|

Array of |

Optional array of exception classes that must not cause rollback. |

|

Array of |

Optional array of names of exception classes that must not cause rollback. |

|

Array of |

Labels may be evaluated by transaction managers to associate implementation-specific behavior with the actual transaction. |

Currently, you cannot have explicit control over the name of a transaction, where 'name'

means the transaction name that appears in a transaction monitor, if applicable

(for example, WebLogic’s transaction monitor), and in logging output. For declarative

transactions, the transaction name is always the fully-qualified class name + .

+ the method name of the transactionally advised class. For example, if the

handlePayment(..) method of the BusinessService class started a transaction, the

name of the transaction would be: com.example.BusinessService.handlePayment.

Multiple Transaction Managers with @Transactional

Most Spring applications need only a single transaction manager, but there may be

situations where you want multiple independent transaction managers in a single

application. You can use the value or transactionManager attribute of the

@Transactional annotation to optionally specify the identity of the

TransactionManager to be used. This can either be the bean name or the qualifier value

of the transaction manager bean. For example, using the qualifier notation, you can

combine the following Java code with the following transaction manager bean declarations

in the application context:

public class TransactionalService {

@Transactional("order")

public void setSomething(String name) { ... }

@Transactional("account")

public void doSomething() { ... }

@Transactional("reactive-account")

public Mono<Void> doSomethingReactive() { ... }

}class TransactionalService {

@Transactional("order")

fun setSomething(name: String) {

// ...

}

@Transactional("account")

fun doSomething() {

// ...

}

@Transactional("reactive-account")

fun doSomethingReactive(): Mono<Void> {

// ...

}

}The following listing shows the bean declarations:

<tx:annotation-driven/>

<bean id="transactionManager1" class="org.springframework.jdbc.datasource.DataSourceTransactionManager">

...

<qualifier value="order"/>

</bean>

<bean id="transactionManager2" class="org.springframework.jdbc.datasource.DataSourceTransactionManager">

...

<qualifier value="account"/>

</bean>

<bean id="transactionManager3" class="org.springframework.data.r2dbc.connectionfactory.R2dbcTransactionManager">

...

<qualifier value="reactive-account"/>

</bean>In this case, the individual methods on TransactionalService run under separate

transaction managers, differentiated by the order, account, and reactive-account

qualifiers. The default <tx:annotation-driven> target bean name, transactionManager,

is still used if no specifically qualified TransactionManager bean is found.

Custom Composed Annotations

If you find you repeatedly use the same attributes with @Transactional on many different

methods, Spring’s meta-annotation support lets you

define custom composed annotations for your specific use cases. For example, consider the

following annotation definitions:

@Target({ElementType.METHOD, ElementType.TYPE})

@Retention(RetentionPolicy.RUNTIME)

@Transactional(transactionManager = "order", label = "causal-consistency")

public @interface OrderTx {

}

@Target({ElementType.METHOD, ElementType.TYPE})

@Retention(RetentionPolicy.RUNTIME)

@Transactional(transactionManager = "account", label = "retryable")

public @interface AccountTx {

}@Target(AnnotationTarget.FUNCTION, AnnotationTarget.TYPE)

@Retention(AnnotationRetention.RUNTIME)

@Transactional(transactionManager = "order", label = ["causal-consistency"])

annotation class OrderTx

@Target(AnnotationTarget.FUNCTION, AnnotationTarget.TYPE)

@Retention(AnnotationRetention.RUNTIME)

@Transactional(transactionManager = "account", label = ["retryable"])

annotation class AccountTxThe preceding annotations let us write the example from the previous section as follows:

public class TransactionalService {

@OrderTx

public void setSomething(String name) {

// ...

}

@AccountTx

public void doSomething() {

// ...

}

}class TransactionalService {

@OrderTx

fun setSomething(name: String) {

// ...

}

@AccountTx

fun doSomething() {

// ...

}

}In the preceding example, we used the syntax to define the transaction manager qualifier and transactional labels, but we could also have included propagation behavior, rollback rules, timeouts, and other features.

1.4.7. Transaction Propagation

This section describes some semantics of transaction propagation in Spring. Note that this section is not a proper introduction to transaction propagation. Rather, it details some of the semantics regarding transaction propagation in Spring.

In Spring-managed transactions, be aware of the difference between physical and logical transactions, and how the propagation setting applies to this difference.

Understanding PROPAGATION_REQUIRED

PROPAGATION_REQUIRED enforces a physical transaction, either locally for the current

scope if no transaction exists yet or participating in an existing 'outer' transaction

defined for a larger scope. This is a fine default in common call stack arrangements

within the same thread (for example, a service facade that delegates to several repository methods

where all the underlying resources have to participate in the service-level transaction).

By default, a participating transaction joins the characteristics of the outer scope,

silently ignoring the local isolation level, timeout value, or read-only flag (if any).

Consider switching the validateExistingTransactions flag to true on your transaction

manager if you want isolation level declarations to be rejected when participating in

an existing transaction with a different isolation level. This non-lenient mode also

rejects read-only mismatches (that is, an inner read-write transaction that tries to participate

in a read-only outer scope).

|

When the propagation setting is PROPAGATION_REQUIRED, a logical transaction scope

is created for each method upon which the setting is applied. Each such logical

transaction scope can determine rollback-only status individually, with an outer

transaction scope being logically independent from the inner transaction scope.

In the case of standard PROPAGATION_REQUIRED behavior, all these scopes are

mapped to the same physical transaction. So a rollback-only marker set in the inner

transaction scope does affect the outer transaction’s chance to actually commit.

However, in the case where an inner transaction scope sets the rollback-only marker, the

outer transaction has not decided on the rollback itself, so the rollback (silently

triggered by the inner transaction scope) is unexpected. A corresponding

UnexpectedRollbackException is thrown at that point. This is expected behavior so

that the caller of a transaction can never be misled to assume that a commit was

performed when it really was not. So, if an inner transaction (of which the outer caller

is not aware) silently marks a transaction as rollback-only, the outer caller still

calls commit. The outer caller needs to receive an UnexpectedRollbackException to

indicate clearly that a rollback was performed instead.

Understanding PROPAGATION_REQUIRES_NEW

PROPAGATION_REQUIRES_NEW, in contrast to PROPAGATION_REQUIRED, always uses an

independent physical transaction for each affected transaction scope, never

participating in an existing transaction for an outer scope. In such an arrangement,

the underlying resource transactions are different and, hence, can commit or roll back

independently, with an outer transaction not affected by an inner transaction’s rollback

status and with an inner transaction’s locks released immediately after its completion.

Such an independent inner transaction can also declare its own isolation level, timeout,

and read-only settings and not inherit an outer transaction’s characteristics.

Understanding PROPAGATION_NESTED

PROPAGATION_NESTED uses a single physical transaction with multiple savepoints

that it can roll back to. Such partial rollbacks let an inner transaction scope

trigger a rollback for its scope, with the outer transaction being able to continue

the physical transaction despite some operations having been rolled back. This setting

is typically mapped onto JDBC savepoints, so it works only with JDBC resource

transactions. See Spring’s DataSourceTransactionManager.

1.4.8. Advising Transactional Operations

Suppose you want to run both transactional operations and some basic profiling advice.

How do you effect this in the context of <tx:annotation-driven/>?

When you invoke the updateFoo(Foo) method, you want to see the following actions:

-

The configured profiling aspect starts.

-

The transactional advice runs.

-

The method on the advised object runs.

-

The transaction commits.

-

The profiling aspect reports the exact duration of the whole transactional method invocation.

| This chapter is not concerned with explaining AOP in any great detail (except as it applies to transactions). See AOP for detailed coverage of the AOP configuration and AOP in general. |

The following code shows the simple profiling aspect discussed earlier:

package x.y;

import org.aspectj.lang.ProceedingJoinPoint;

import org.springframework.util.StopWatch;

import org.springframework.core.Ordered;

public class SimpleProfiler implements Ordered {

private int order;

// allows us to control the ordering of advice

public int getOrder() {

return this.order;

}

public void setOrder(int order) {

this.order = order;

}

// this method is the around advice

public Object profile(ProceedingJoinPoint call) throws Throwable {

Object returnValue;

StopWatch clock = new StopWatch(getClass().getName());

try {

clock.start(call.toShortString());

returnValue = call.proceed();

} finally {

clock.stop();

System.out.println(clock.prettyPrint());

}

return returnValue;

}

}class SimpleProfiler : Ordered {

private var order: Int = 0

// allows us to control the ordering of advice

override fun getOrder(): Int {

return this.order

}

fun setOrder(order: Int) {

this.order = order

}

// this method is the around advice

fun profile(call: ProceedingJoinPoint): Any {

var returnValue: Any

val clock = StopWatch(javaClass.name)

try {

clock.start(call.toShortString())

returnValue = call.proceed()

} finally {

clock.stop()

println(clock.prettyPrint())

}

return returnValue

}

}The ordering of advice

is controlled through the Ordered interface. For full details on advice ordering, see

Advice ordering.

The following configuration creates a fooService bean that has profiling and

transactional aspects applied to it in the desired order:

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:aop="http://www.springframework.org/schema/aop"

xmlns:tx="http://www.springframework.org/schema/tx"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/tx

https://www.springframework.org/schema/tx/spring-tx.xsd

http://www.springframework.org/schema/aop

https://www.springframework.org/schema/aop/spring-aop.xsd">

<bean id="fooService" class="x.y.service.DefaultFooService"/>

<!-- this is the aspect -->

<bean id="profiler" class="x.y.SimpleProfiler">

<!-- run before the transactional advice (hence the lower order number) -->

<property name="order" value="1"/>

</bean>

<tx:annotation-driven transaction-manager="txManager" order="200"/>

<aop:config>

<!-- this advice runs around the transactional advice -->

<aop:aspect id="profilingAspect" ref="profiler">

<aop:pointcut id="serviceMethodWithReturnValue"

expression="execution(!void x.y..*Service.*(..))"/>

<aop:around method="profile" pointcut-ref="serviceMethodWithReturnValue"/>

</aop:aspect>

</aop:config>

<bean id="dataSource" class="org.apache.commons.dbcp.BasicDataSource" destroy-method="close">

<property name="driverClassName" value="oracle.jdbc.driver.OracleDriver"/>

<property name="url" value="jdbc:oracle:thin:@rj-t42:1521:elvis"/>

<property name="username" value="scott"/>

<property name="password" value="tiger"/>

</bean>

<bean id="txManager" class="org.springframework.jdbc.datasource.DataSourceTransactionManager">

<property name="dataSource" ref="dataSource"/>

</bean>

</beans>You can configure any number of additional aspects in similar fashion.

The following example creates the same setup as the previous two examples but uses the purely XML declarative approach:

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns:aop="http://www.springframework.org/schema/aop"

xmlns:tx="http://www.springframework.org/schema/tx"

xsi:schemaLocation="

http://www.springframework.org/schema/beans

https://www.springframework.org/schema/beans/spring-beans.xsd

http://www.springframework.org/schema/tx

https://www.springframework.org/schema/tx/spring-tx.xsd

http://www.springframework.org/schema/aop

https://www.springframework.org/schema/aop/spring-aop.xsd">

<bean id="fooService" class="x.y.service.DefaultFooService"/>

<!-- the profiling advice -->

<bean id="profiler" class="x.y.SimpleProfiler">

<!-- run before the transactional advice (hence the lower order number) -->

<property name="order" value="1"/>

</bean>

<aop:config>

<aop:pointcut id="entryPointMethod" expression="execution(* x.y..*Service.*(..))"/>

<!-- runs after the profiling advice (cf. the order attribute) -->

<aop:advisor advice-ref="txAdvice" pointcut-ref="entryPointMethod" order="2"/>

<!-- order value is higher than the profiling aspect -->