Preface

1. About the documentation

The Spring Cloud Data Flow reference guide is available as HTML, PDF, and EPUB documents. The latest copy is available at docs.spring.io/spring-cloud-dataflow/docs/current-SNAPSHOT/reference/html/.

Copies of this document may be made for your own use and for distribution to others, provided that you do not charge any fee for such copies and further provided that each copy contains this Copyright Notice, whether distributed in print or electronically.

2. Getting help

Having trouble with Spring Cloud Data Flow? We’d like to help!

-

Ask a question: we monitor stackoverflow.com for questions tagged with spring-cloud-dataflow.

-

Report bugs with Spring Cloud Data Flow at github.com/spring-cloud/spring-cloud-dataflow/issues.

| All of Spring Cloud Data Flow is open source, including the documentation! If you find problems with the docs, or if you just want to improve them, please get involved. |

Spring Cloud Data Flow Overview

This section provides a brief overview of the Spring Cloud Data Flow reference documentation. Think of it as a map for the rest of the document. You can read this reference guide in a linear fashion, or you can skip sections if something doesn’t interest you.

3. Introducing Spring Cloud Data Flow

Spring Cloud Data Flow is a cloud-native orchestration service for composable microservice applications on modern runtimes. With Spring Cloud Data Flow, developers can create and orchestrate data pipelines for common use cases such as data ingest, real-time analytics, and data import/export.

Spring Cloud Data Flow is the cloud native redesign of Spring XD – a project that aimed to simplify development of Big Data applications. The stream and batch modules from Spring XD are refactored as Spring Boot based stream and task/batch microservice applications respectively. These applications are now autonomous deployment units and they can "natively" run in modern runtimes such as Cloud Foundry, Apache YARN, Apache Mesos, and Kubernetes.

Spring Cloud Data Flow offers a collection of patterns and best practices for microservices-based distributed streaming and task/batch data pipelines.

3.1. Features

-

Develop using DSL, REST-APIs, Dashboard, and the drag-and-drop GUI - Flo

-

Create, unit-test, troubleshoot and manage microservice applications in isolation

-

Build data pipelines rapidly using the out-of-the-box stream and task/batch applications

-

Consume microservice applications as Maven or Docker artifacts

-

Scale data pipelines without interrupting data flows

-

Orchestrate data-centric applications on a variety of modern runtime platforms including Cloud Foundry, Apache YARN, Apache Mesos, and Kubernetes

-

Take advantage of metrics, health checks, and the remote management of each microservice application

Architecture

4. Introduction

Spring Cloud Data Flow simplifies the development and deployment of applications focused on data processing use-cases. The major concepts of the architecture are Applications, the Data Flow Server, and the target runtime.

Applications come in two flavors:

-

Long lived Stream applications where an unbounded amount of data is consumed or produced via messaging middleware.

-

Short lived Task applications that process a finite set of data and then terminate.

Depending on the runtime, applications can be packaged in two ways:

-

Spring Boot uber-jar that is hosted in a Maven repository, file, HTTP, or any other Spring resource implementation.

-

Docker

The runtime is the place where applications execute. The target runtimes for applications are platforms that you may already be using for other application deployments.

The supported runtimes are:

-

Cloud Foundry

-

Apache YARN

-

Kubernetes

-

Apache Mesos

-

Local Server for development

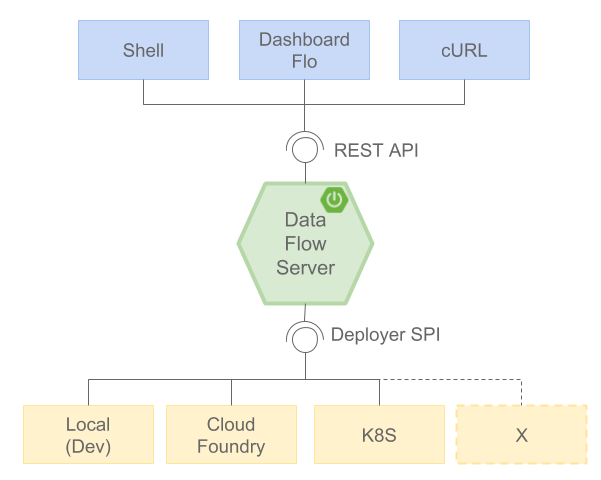

There is a deployer Service Provider Interface (SPI) that enables you to extend Data Flow to deploy onto other runtimes, for example to support Docker Swarm. Community implementations for HashiCorp’s Nomad and Red Hat OpenShift are available. We look forward to working with the community for further contributions!

The component that is responsible for deploying applications to a runtime is the Data Flow Server. There is a Data Flow Server executable jar provided for each of the target runtimes. The Data Flow server is responsible for interpreting:

-

A stream DSL that describes the logical flow of data through multiple applications.

-

A deployment manifest that describes the mapping of applications onto the runtime. For example, to set the initial number of instances, memory requirements, and data partitioning.

As an example, the DSL to describe the flow of data from an http source to an Apache Cassandra sink would be written as “http | cassandra”. These names in the DSL are registered with the Data Flow Server and map onto application artifacts that can be hosted in Maven or Docker repositories. Many source, processor, and sink applications for common use-cases (e.g., jdbc, hdfs, http, router) are provided by the Spring Cloud Data Flow team. The pipe symbol represents the communication between the two applications via messaging middleware. The two messaging middleware brokers that are supported are:

-

Apache Kafka

-

RabbitMQ

In the case of Kafka, when deploying the stream, the Data Flow server is responsible for creating the topics that correspond to each pipe symbol and for configuring each application to produce to or consume from those topics so that the desired flow of data is achieved.
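The mapping from pipe symbols to destinations can be illustrated with a minimal sketch. It is not the Data Flow Server's parser: the class and method names are hypothetical, the naming pattern `<stream name>.<producing application>` is an assumption made for illustration, and splitting on the pipe character ignores quoting, which a real DSL parser has to handle.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative only: a simplified view of how a linear stream definition maps to destinations. */
final class StreamDefinitionSketch {

    /** Splits a linear definition such as "http | cassandra" into application names. */
    static List<String> appNames(String definition) {
        List<String> names = new ArrayList<>();
        for (String segment : definition.split("\\|")) {
            // Keep only the application name and drop any "--key=value" options after it.
            names.add(segment.trim().split("\\s+")[0]);
        }
        return names;
    }

    /** Returns one destination per pipe symbol, named "<stream>.<producer>" (assumed convention). */
    static List<String> destinations(String streamName, String definition) {
        List<String> names = appNames(definition);
        List<String> result = new ArrayList<>();
        // The last application only consumes, so it contributes no destination of its own.
        for (int i = 0; i < names.size() - 1; i++) {
            result.add(streamName + "." + names.get(i));
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(destinations("ingest", "http | transformer | cassandra"));
    }
}
```

A definition with three applications has two pipe symbols and therefore yields two destinations in this sketch; each application in the middle of the stream consumes from one destination and produces to the next.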

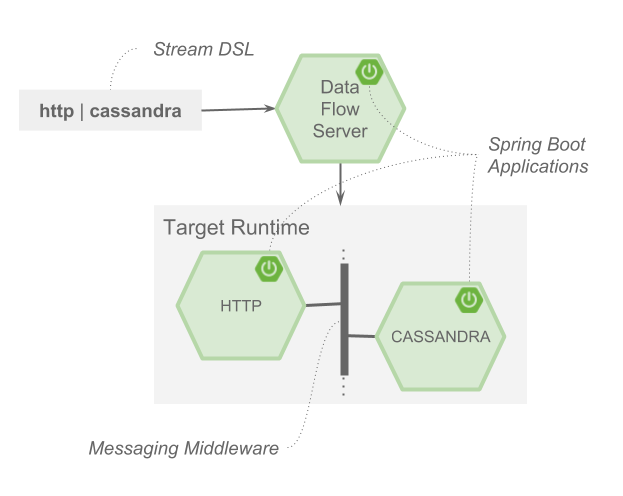

The interaction of the main components is shown below

In this diagram a DSL description of a stream is POSTed to the Data Flow Server. Based on the mapping of DSL application names to Maven and Docker artifacts, the http-source and cassandra-sink applications are deployed on the target runtime.

5. Microservice Architectural Style

The Data Flow Server deploys applications onto the target runtime that conform to the microservice architectural style. For example, a stream represents a high-level application that consists of multiple small microservice applications, each running in its own process. Each microservice application can be scaled up or down independently of the others, and each has its own versioning lifecycle.

Both Streaming and Task based microservice applications build upon Spring Boot as the foundational library. This gives all microservice applications functionality such as health checks, security, configurable logging, monitoring and management functionality, as well as executable JAR packaging.

It is important to emphasise that these microservice applications are ‘just apps’ that you can run by yourself using ‘java -jar’ and passing in appropriate configuration properties. We provide many microservice applications for common operations so you don’t have to start from scratch when addressing common use-cases. These applications build upon the rich ecosystem of Spring projects, e.g., Spring Integration, Spring Data, Spring Hadoop, and Spring Batch. Creating your own microservice application is similar to creating other Spring Boot applications: you can start by using the Spring Initializr web site or the UI to create the basic scaffolding of either a Stream or Task based microservice.

In addition to passing in the appropriate configuration to the applications, the Data Flow server is responsible for preparing the target platform’s infrastructure so that the application can be deployed. For example, in Cloud Foundry it would be binding specified services to the applications and executing the ‘cf push’ command for each application. For Kubernetes it would be creating the replication controller, service, and load balancer.

The Data Flow Server helps simplify the deployment of multiple applications onto a target runtime, but one could also opt to deploy each of the microservice applications manually and not use Data Flow at all. This approach might be more appropriate to start out with for small scale deployments, gradually adopting the convenience and consistency of Data Flow as you develop more applications. Manual deployment of Stream and Task based microservices is also a useful educational exercise that will help you better understand some of the automatic applications configuration and platform targeting steps that the Data Flow Server provides.

5.1. Comparison to other Platform architectures

Spring Cloud Data Flow’s architectural style is different from that of other Stream and Batch processing platforms. For example, in Apache Spark, Apache Flink, and Google Cloud Dataflow, applications run on a dedicated compute engine cluster. The nature of the compute engine gives these platforms a richer environment for performing complex calculations on the data as compared to Spring Cloud Data Flow, but it introduces the complexity of another execution environment that is often not needed when creating data-centric applications. That doesn’t mean you cannot do real-time data computations when using Spring Cloud Data Flow. Refer to the analytics section, which describes the integration of Redis to handle common counting-based use-cases as well as the RxJava integration for functional API driven analytics use-cases, such as time-sliding-window and moving-average among others.

Similarly, Apache Storm, Hortonworks DataFlow, and Spring Cloud Data Flow’s predecessor, Spring XD, use a dedicated application execution cluster, unique to each product, that determines where your code should execute on the cluster and performs health checks to ensure that long-lived applications are restarted if they fail. Often, framework-specific interfaces must be used in order to correctly “plug in” to the cluster’s execution framework.

As we discovered during the evolution of Spring XD, the rise of multiple container frameworks in 2015 made creating our own runtime a duplication of efforts. There is no reason to build your own resource management mechanics, when there are multiple runtime platforms that offer this functionality already. Taking these considerations into account is what made us shift to the current architecture where we delegate the execution to popular runtimes, runtimes that you may already be using for other purposes. This is an advantage in that it reduces the cognitive distance for creating and managing data centric applications as many of the same skills used for deploying other end-user/web applications are applicable.

6. Streaming Applications

While Spring Boot provides the foundation for creating DevOps friendly microservice applications, other libraries in the Spring ecosystem help create Stream based microservice applications. The most important of these is Spring Cloud Stream.

The essence of the Spring Cloud Stream programming model is to provide an easy way to describe multiple inputs and outputs of an application that communicate over messaging middleware. These inputs and outputs map onto Kafka topics or Rabbit exchanges and queues. Common application configuration for a Source that generates data, a Processor that consumes and produces data, and a Sink that consumes data is provided as part of the library.

6.1. Imperative Programming Model

Spring Cloud Stream is most closely integrated with Spring Integration’s imperative "event at a time" programming model. This means you write code that handles a single event callback. For example,

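    import org.springframework.cloud.stream.annotation.EnableBinding;
    import org.springframework.cloud.stream.annotation.StreamListener;
    import org.springframework.cloud.stream.messaging.Sink;

    // Binds this class to the single input channel defined by Sink.
    @EnableBinding(Sink.class)
    public class LoggingSink {

        // Invoked once for each message that arrives on the input channel.
        @StreamListener(Sink.INPUT)
        public void log(String message) {
            System.out.println(message);
        }
    }

In this case, the String payload of a message arriving on the input channel is handed to the log method. The @EnableBinding annotation ties the input channel to the external middleware.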

6.2. Functional Programming Model

However, Spring Cloud Stream can support other programming styles. One is the use of reactive APIs, where incoming and outgoing data is handled as continuous data flows and the code defines how each individual message should be handled. You can also use operators that describe functional transformations from inbound to outbound data flows. Upcoming versions will support Apache Kafka’s KStream API in the programming model.

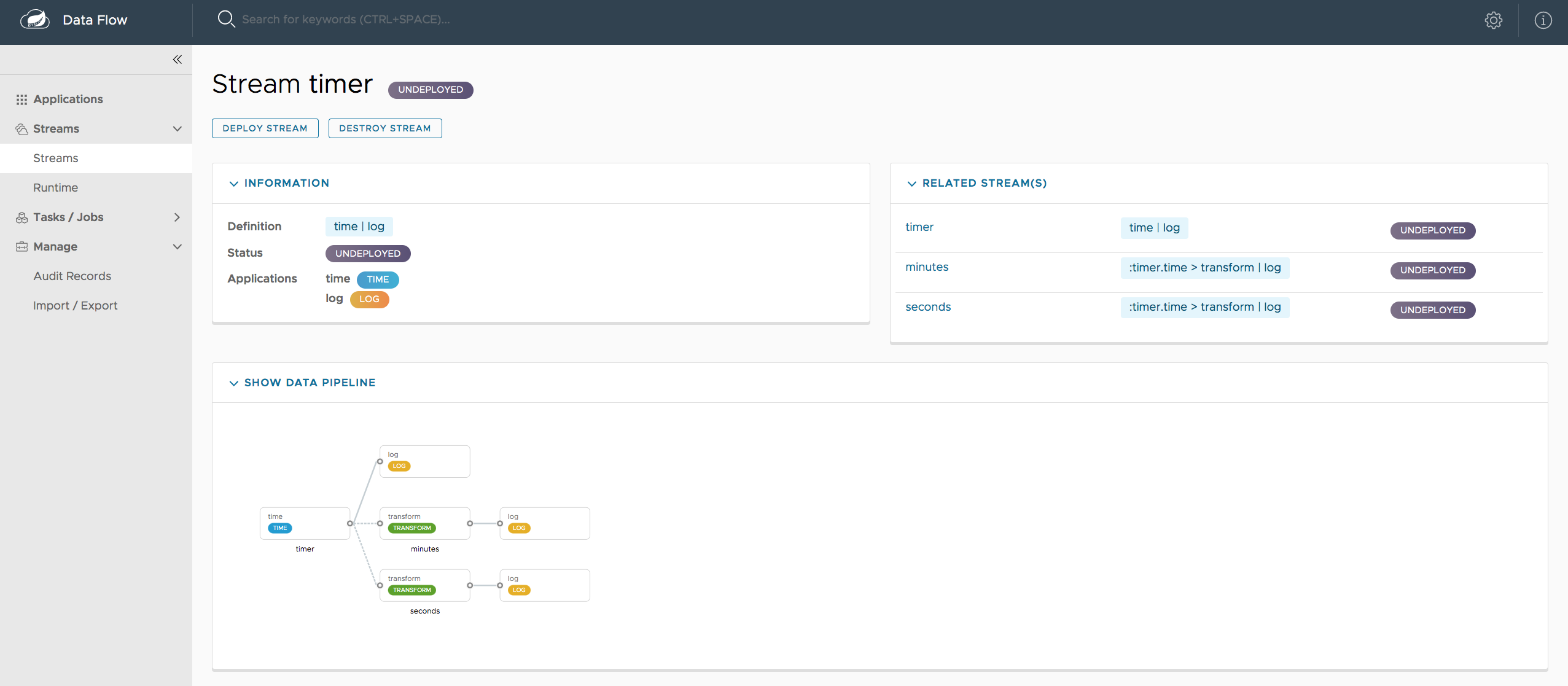

7. Streams

7.1. Topologies

The Stream DSL describes linear sequences of data flowing through the system. For example, in the stream definition http | transformer | cassandra, each pipe symbol connects the application on the left to the one on the right. Named channels can be used for routing and to fan out data to multiple messaging destinations.

Taps can be used to ‘listen in’ to the data that is flowing across any of the pipe symbols. Taps can be used as sources for new streams with an independent life cycle.

7.2. Concurrency

For an application that will consume events, Spring Cloud Stream exposes a concurrency setting that controls the size of a thread pool used for dispatching incoming messages. See the Consumer properties documentation for more information.

7.3. Partitioning

A common pattern in stream processing is to partition the data as it moves from one application to the next. Partitioning is a critical concept in stateful processing, for either performance or consistency reasons, to ensure that all related data is processed together. For example, in a time-windowed average calculation, it is important that all measurements from any given sensor are processed by the same application instance. Alternatively, you may want to cache some data related to the incoming events so that it can be enriched without making a remote procedure call to retrieve the related data.

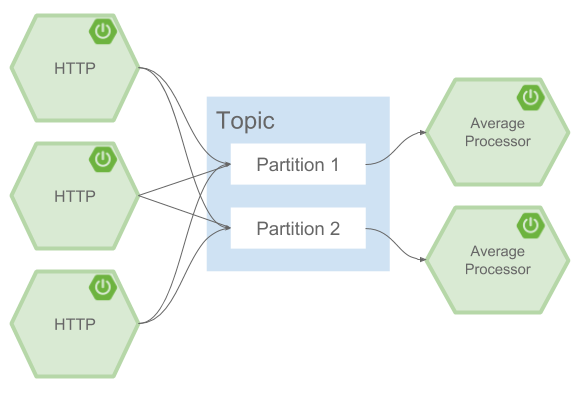

Spring Cloud Data Flow supports partitioning by configuring Spring Cloud Stream’s output and input bindings. Spring Cloud Stream provides a common abstraction for implementing partitioned processing use cases in a uniform fashion across different types of middleware. Partitioning can thus be used whether the broker itself is naturally partitioned (e.g., Kafka topics) or not (e.g., RabbitMQ). The following image shows how data could be partitioned into two buckets, such that each instance of the average processor application consumes a unique set of data.

To use a simple partitioning strategy in Spring Cloud Data Flow, you only need to set the instance count for each application in the stream and a partitionKeyExpression producer property when deploying the stream. The partitionKeyExpression identifies what part of the message will be used as the key to partition data in the underlying middleware. An ingest stream can be defined as http | averageprocessor | cassandra (note that the Cassandra sink isn’t shown in the diagram above). Suppose the payload being sent to the http source is in JSON format and has a field called sensorId. Deploy the stream with the shell command stream deploy ingest --propertiesFile ingestStream.properties, where the contents of the file ingestStream.properties are:

    app.http.count=3
    app.averageprocessor.count=2
    app.http.producer.partitionKeyExpression=payload.sensorId

This deploys the stream such that all the input and output destinations are configured for data to flow through the applications, and it also ensures that a unique set of data is always delivered to each averageprocessor instance. In this case, the default algorithm is to evaluate payload.sensorId % partitionCount, where the partitionCount is the application count in the case of RabbitMQ and the partition count of the topic in the case of Kafka.
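The key-modulo-count rule described above can be sketched in a few lines. This is an illustration rather than the binder's actual code: the class and method names are hypothetical, and the use of the key's hash code with Math.floorMod is an assumption chosen so that any key type, including negative numbers, maps to a valid partition index.

```java
/** Illustrative only: picks a partition index from an extracted key such as payload.sensorId. */
final class PartitionSketch {

    /**
     * Maps a key to a partition in the range [0, partitionCount).
     * For Integer keys the hash code is the value itself, so this reduces to key % partitionCount
     * for non-negative keys.
     */
    static int selectPartition(Object key, int partitionCount) {
        if (partitionCount <= 0) {
            throw new IllegalArgumentException("partitionCount must be positive");
        }
        // floorMod keeps the result non-negative even when the hash code is negative.
        return Math.floorMod(key.hashCode(), partitionCount);
    }

    public static void main(String[] args) {
        // With two averageprocessor instances, sensors 4 and 6 share one instance and sensor 7 uses the other.
        for (int sensorId : new int[] {4, 6, 7}) {
            System.out.println("sensorId " + sensorId + " -> partition " + selectPartition(sensorId, 2));
        }
    }
}
```

Because the result depends only on the key and the partition count, every message with the same sensorId reaches the same instance for as long as the partition count stays fixed.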

Please refer to Passing stream partition properties during stream deployment for additional strategies to partition streams during deployment and how they map onto the underlying Spring Cloud Stream Partitioning properties.

Also note that you cannot currently scale partitioned streams. Read the section Scaling at runtime for more information.

7.4. Message Delivery Guarantees

Streams are composed of applications that use the Spring Cloud Stream library as the basis for communicating with the underlying messaging middleware product. Spring Cloud Stream also provides an opinionated configuration of middleware from several vendors, in particular providing persistent publish-subscribe semantics.

The Binder abstraction in Spring Cloud Stream is what connects the application to the middleware. There are several configuration properties of the binder that are portable across all binder implementations and some that are specific to the middleware.

For consumer applications there is a retry policy for exceptions generated during message handling. The retry policy is configured using the common consumer properties maxAttempts, backOffInitialInterval, backOffMaxInterval, and backOffMultiplier. The default values of these properties will retry the callback method invocation 3 times and wait one second for the first retry. A backoff multiplier of 2 is used for the second and third attempts.
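To make the interaction of these four properties concrete, the following sketch computes the wait before each retry. It is not Spring Cloud Stream's implementation: the class and method names are hypothetical, and it assumes that maxAttempts counts the first invocation as well as the retries and that each wait is capped at backOffMaxInterval.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative only: derives the wait times implied by an exponential backoff retry policy. */
final class RetryBackoffSketch {

    /**
     * Returns the wait in milliseconds before each retry.
     * Assumes maxAttempts includes the first invocation, so there are maxAttempts - 1 waits.
     */
    static List<Long> waits(int maxAttempts, long backOffInitialInterval,
                            double backOffMultiplier, long backOffMaxInterval) {
        List<Long> waits = new ArrayList<>();
        double next = backOffInitialInterval;
        for (int attempt = 2; attempt <= maxAttempts; attempt++) {
            // Never wait longer than the configured maximum interval.
            waits.add(Math.min((long) next, backOffMaxInterval));
            next *= backOffMultiplier;
        }
        return waits;
    }

    public static void main(String[] args) {
        // Values matching the behaviour described above; the 10000 ms cap is an assumed value.
        System.out.println(waits(3, 1000L, 2.0, 10000L));
    }
}
```

Under these assumptions, a one-second initial interval and a multiplier of 2 give a one-second wait followed by a two-second wait; raising maxAttempts extends the sequence until the cap is reached.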

When the number of retry attempts has exceeded the maxAttempts value, the exception and the failed message will become the payload of a message and be sent to the application’s error channel. By default, the message handler for this error channel logs the message. You can change the default behavior in your application by creating your own message handler that subscribes to the error channel.

Spring Cloud Stream also supports a configuration option for both Kafka and RabbitMQ binder implementations that will send the failed message and stack trace to a dead letter queue. The dead letter queue is a destination and its nature depends on the messaging middleware (e.g., in the case of Kafka it is a dedicated topic). To enable this for RabbitMQ, set the consumer properties republishToDlq and autoBindDlq and the producer property autoBindDlq to true when deploying the stream. To always apply these producer and consumer properties when deploying streams, configure them as common application properties when starting the Data Flow server.

Additional messaging delivery guarantees are those provided by the underlying messaging middleware that is chosen for the application for both producing and consuming applications. Refer to the Kafka Consumer and Producer and Rabbit Consumer and Producer documentation for more details. You will find extensive declarative support for all the native QoS options.

8. Analytics

Spring Cloud Data Flow is aware of certain Sink applications that will write counter data to Redis and provides a REST endpoint to read counter data. The types of counters supported are:

-

Counter - Counts the number of messages it receives, optionally storing counts in a separate store such as Redis.

-

Field Value Counter - Counts occurrences of unique values for a named field in a message payload.

-

Aggregate Counter - Stores total counts but also retains the total count values for each minute, hour, day, and month.

It is important to note that the timestamp that is used in the aggregate counter can come from a field in the message itself so that out of order messages are properly accounted.
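The effect of taking the timestamp from the message can be shown with a small in-memory sketch. It is not the Redis-backed implementation: the class and its methods are hypothetical, buckets are kept in hash maps, all times are treated as UTC, and only minute and hour resolutions are modelled for brevity.

```java
import java.time.Instant;
import java.time.temporal.ChronoUnit;
import java.util.HashMap;
import java.util.Map;

/** Illustrative only: an in-memory aggregate counter bucketed by event time. */
final class AggregateCounterSketch {

    private long total;
    private final Map<Instant, Long> perMinute = new HashMap<>();
    private final Map<Instant, Long> perHour = new HashMap<>();

    /** Counts one event in the buckets that contain its own timestamp, not the arrival time. */
    void increment(Instant eventTime) {
        total++;
        perMinute.merge(eventTime.truncatedTo(ChronoUnit.MINUTES), 1L, Long::sum);
        perHour.merge(eventTime.truncatedTo(ChronoUnit.HOURS), 1L, Long::sum);
    }

    long total() {
        return total;
    }

    long countForMinute(Instant anyTimeInMinute) {
        return perMinute.getOrDefault(anyTimeInMinute.truncatedTo(ChronoUnit.MINUTES), 0L);
    }

    long countForHour(Instant anyTimeInHour) {
        return perHour.getOrDefault(anyTimeInHour.truncatedTo(ChronoUnit.HOURS), 0L);
    }
}
```

Because each event is filed under its own timestamp, a message that arrives late still lands in the minute in which it was produced, which is what keeps the per-interval totals correct for out-of-order data.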

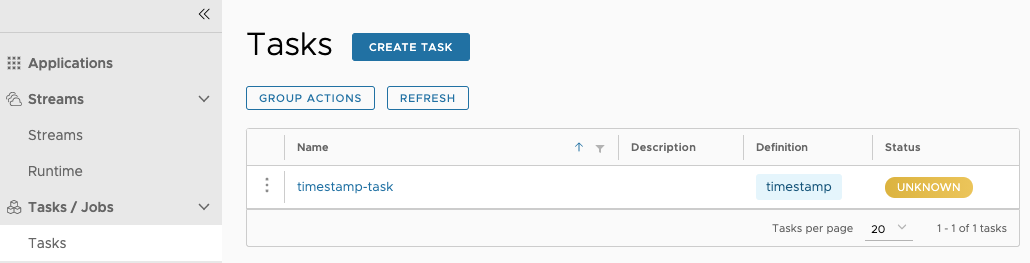

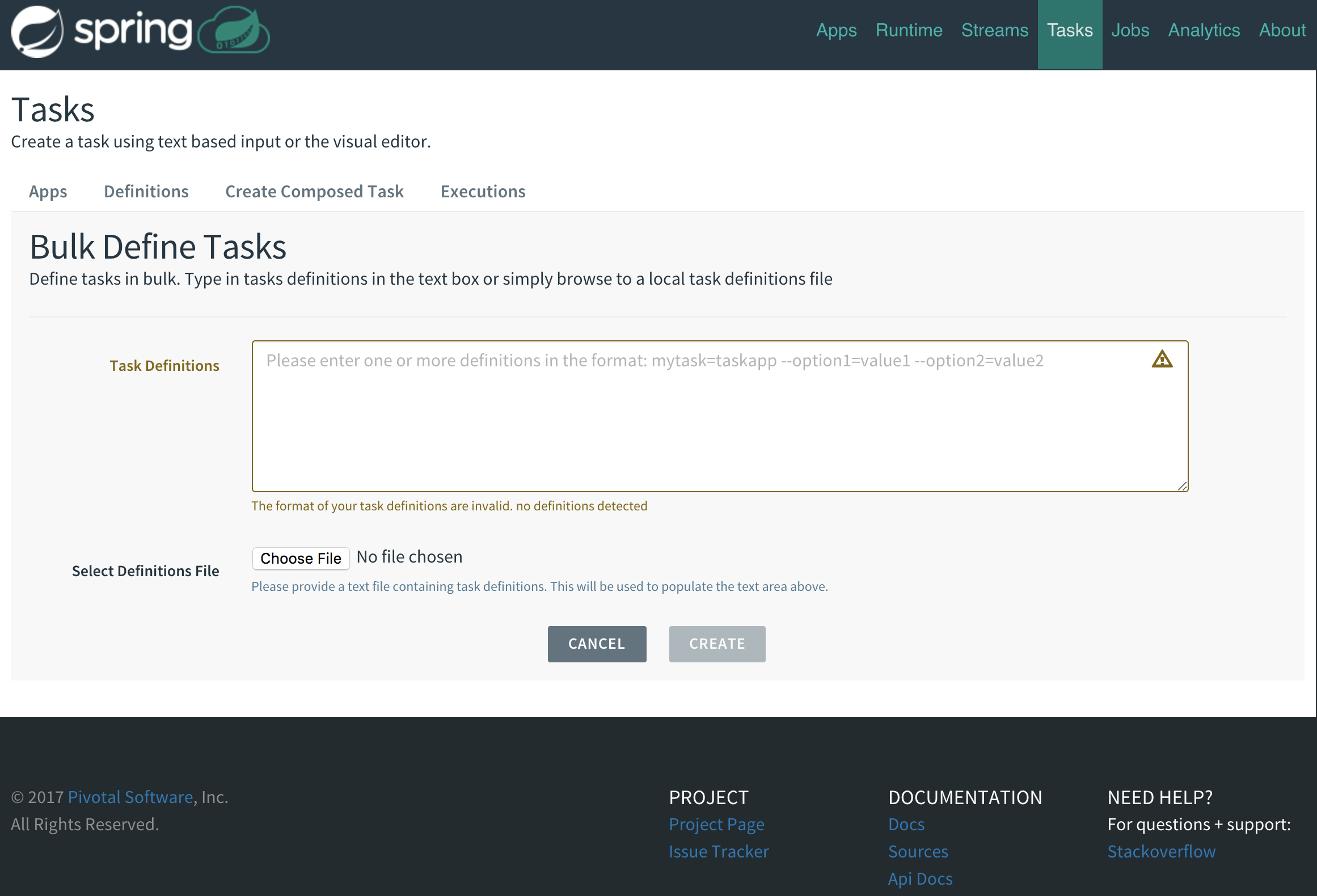

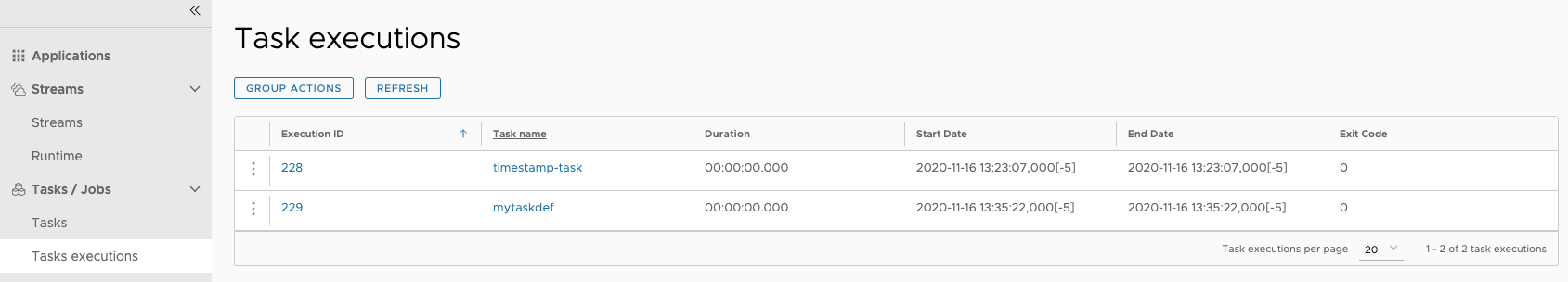

9. Task Applications

The Spring Cloud Task programming model provides:

-

Persistence of the Task’s lifecycle events and exit code status.

-

Lifecycle hooks to execute code before or after a task execution.

-

The ability to emit task events to a stream (as a source) during the task lifecycle.

-

Integration with Spring Batch Jobs.

10. Data Flow Server

10.1. Endpoints

The Data Flow Server uses an embedded servlet container and exposes REST endpoints for creating, deploying, undeploying, and destroying streams and tasks, querying runtime state, analytics, and the like. The Data Flow Server is implemented using Spring’s MVC framework and the Spring HATEOAS library to create REST representations that follow the HATEOAS principle.

10.2. Customization

We provide a Data Flow Server executable jar for each target runtime. Each executable jar targets a single runtime by delegating to the implementation of the deployer Service Provider Interface found on the classpath. In the current version, there are no endpoints specific to a target runtime, but they may be available in future releases as a convenience to access runtime-specific features.

While we provide a server executable for each of the target runtimes, you can also create your own customized server application using Spring Initializr. This lets you add or remove functionality relative to the executable jar we provide, for example, adding additional security implementations or custom endpoints, or removing Task or Analytics REST endpoints. You can also enable or disable some features through the use of feature toggles.

10.3. Security

The Data Flow Server executable jars support basic HTTP, LDAP(S), file-based, and OAuth 2.0 authentication to access their endpoints. Refer to the security section for more information.

Authorization via groups is planned for a future release.

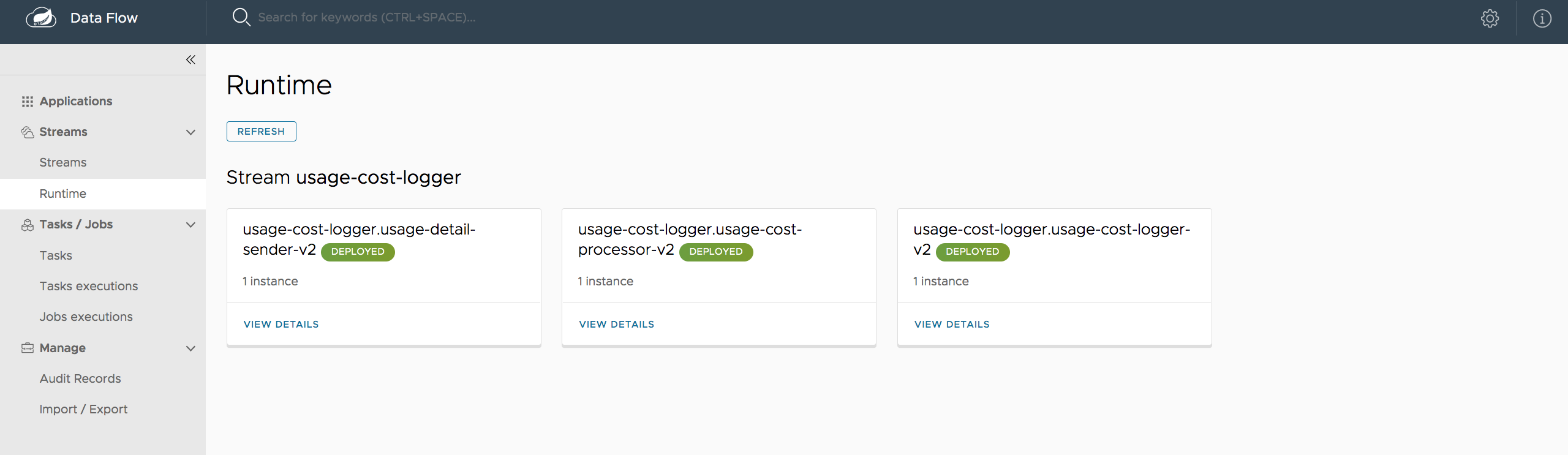

11. Runtime

11.1. Fault Tolerance

The target runtimes supported by Data Flow all have the ability to restart a long lived application should it fail. Spring Cloud Data Flow sets up whatever health probe is required by the runtime environment when deploying the application.

The collective state of all applications that comprise the stream is used to determine the state of the stream. If an application fails, the state of the stream will change from ‘deployed’ to ‘partial’.
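The aggregation rule can be sketched as follows. Only the ‘deployed’ and ‘partial’ states come from the text above; the class, the enum names, and the FAILED state for a stream in which every application has failed are assumptions added for illustration.

```java
import java.util.Collection;

/** Illustrative only: derives a stream state from the states of its applications. */
final class StreamStateSketch {

    enum AppState { DEPLOYED, FAILED }

    // FAILED is an assumption; the text above only names 'deployed' and 'partial'.
    enum StreamState { DEPLOYED, PARTIAL, FAILED }

    static StreamState aggregate(Collection<AppState> appStates) {
        long failed = appStates.stream().filter(state -> state == AppState.FAILED).count();
        if (failed == 0) {
            return StreamState.DEPLOYED; // every application is running
        }
        if (failed == appStates.size()) {
            return StreamState.FAILED; // assumed: nothing is running
        }
        return StreamState.PARTIAL; // some, but not all, applications are running
    }
}
```

For a stream such as http | log, one failed application out of two moves the stream from DEPLOYED to PARTIAL in this sketch.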

11.2. Resource Management

Each target runtime lets you control the amount of memory, disk, and CPU that is allocated to each application. These are passed as properties in the deployment manifest using key names that are unique to each runtime. Refer to each platform’s server documentation for more information.

11.3. Scaling at runtime

When deploying a stream, you can set the instance count for each individual application that comprises the stream. Once the stream is deployed, each target runtime lets you control the target number of instances for each individual application. Using the APIs, UIs, or command line tools for each runtime, you can scale up or down the number of instances as required. Future work will provide a portable command in the Data Flow Server to perform this operation.

Currently, this is not supported with the Kafka binder (based on the 0.8 simple consumer at the time of the release) or with partitioned streams; the suggested workaround is to redeploy the stream with an updated number of instances. Both cases require a static consumer setup based on information about the total instance count and current instance index, a limitation intended to be addressed in future releases. For example, Kafka 0.9 and higher provides good infrastructure for scaling applications dynamically and will be available as an alternative to the current Kafka 0.8 based binder in the near future. One specific concern regarding scaling partitioned streams is the handling of local state, which is typically reshuffled as the number of instances is changed. This is also intended to be addressed in future versions, by providing first-class support for local state management.

11.4. Application Versioning

Application versioning, that is, upgrading or downgrading an application from one version to another, is not directly supported by Spring Cloud Data Flow. You must rely on specific target runtime features to perform these operational tasks.

The roadmap for Spring Cloud Data Flow includes deploying applications that are compatible with Spinnaker to manage the complete application lifecycle. This also includes automated canary analysis backed by application metrics. Portable commands in the Data Flow server to trigger pipelines in Spinnaker are also planned.

Getting started

If you’re just getting started with Spring Cloud Data Flow, this is the section for you! Here we answer the basic “what?”, “how?” and “why?” questions. You’ll find a gentle introduction to Spring Cloud Data Flow along with installation instructions. We’ll then build our first Spring Cloud Data Flow application, discussing some core principles as we go.

12. System Requirements

You need Java installed (Java 8 or later), and to build, you need to have Maven installed as well.

You need to have an RDBMS for storing stream, task, and app states in the database. The local Data Flow server by default uses an embedded H2 database for this.

You also need to have Redis running if you are running any streams that involve analytics applications. Redis may also be required to run the unit/integration tests.

13. Deploying Spring Cloud Data Flow Local Server

-

Download the Spring Cloud Data Flow Server and Shell apps:

    wget http://repo.spring.io/release/org/springframework/cloud/spring-cloud-dataflow-server-local/1.1.2.RELEASE/spring-cloud-dataflow-server-local-1.1.2.RELEASE.jar
    wget http://repo.spring.io/release/org/springframework/cloud/spring-cloud-dataflow-shell/1.1.2.RELEASE/spring-cloud-dataflow-shell-1.1.2.RELEASE.jar

-

Launch the Data Flow Server. Since the Data Flow Server is a Spring Boot application, you can run it just by using java -jar:

    $ java -jar spring-cloud-dataflow-server-local-1.1.2.RELEASE.jar

-

Launch the shell:

    $ java -jar spring-cloud-dataflow-shell-1.1.2.RELEASE.jar

If the Data Flow Server and shell are not running on the same host, point the shell to the Data Flow server URL:

    server-unknown:>dataflow config server http://198.51.100.0
    Successfully targeted http://198.51.100.0
    dataflow:>

By default, the application registry is empty. To register all out-of-the-box stream applications built with the Kafka binder in bulk, use the following command. For more details, review how to register applications.

    dataflow:>app import --uri http://bit.ly/Avogadro-SR1-stream-applications-kafka-10-maven

Depending on your environment, you may need to configure the Data Flow Server to point to a custom Maven repository location or configure proxy settings. See Maven Configuration for more information.

-

You can now use the shell commands to list available applications (source/processors/sink) and create streams. For example:

    dataflow:> stream create --name httptest --definition "http --server.port=9000 | log"
    dataflow:> stream deploy --name httptest --properties "app.http.security.basic.enabled=false"

You will need to wait a little while until the apps are actually deployed successfully before posting data. Look in the log file of the Data Flow server for the location of the log files for the http and log applications. Tail the log file for each application to verify that the application has started. The security is disabled due to a small bug in the http source that secures all endpoints, not only Spring Boot Actuator endpoints, by default.

Now post some data:

    dataflow:> http post --target http://localhost:9000 --data "hello world"

Check whether hello world ended up in the log files for the log application.

|

When deploying locally, each app (and each app instance, in case of |

|

In case you encounter unexpected errors when executing shell commands, you can

retrieve more detailed error information by setting the exception logging level

to |

13.1. Maven Configuration

If you want to override specific Maven configuration properties (remote repositories, proxies, etc.) and/or run the Data Flow Server behind a proxy, you need to specify those properties as command line arguments when starting the Data Flow Server. For example:

    $ java -jar spring-cloud-dataflow-server-local-1.1.2.RELEASE.jar --maven.localRepository=mylocal \
        --maven.remote-repositories.repo1.url=https://repo1 \
        --maven.remote-repositories.repo1.auth.username=user1 \
        --maven.remote-repositories.repo1.auth.password=pass1 \
        --maven.remote-repositories.repo2.url=https://repo2 --maven.proxy.host=proxy1 \
        --maven.proxy.port=9010 --maven.proxy.auth.username=proxyuser1 \
        --maven.proxy.auth.password=proxypass1

By default, the protocol is set to http. You can omit the auth properties if the proxy doesn’t need a username and password.

By default, the Maven localRepository is set to ${user.home}/.m2/repository/, and repo.spring.io/libs-snapshot will be the only remote repository. As in the example above, the remote repositories can be specified along with their authentication (if needed). If the remote repositories are behind a proxy, then the proxy properties can be specified as above.

If you want to pass these properties as environment properties, then you need to use SPRING_APPLICATION_JSON to set these properties and pass SPRING_APPLICATION_JSON as an environment variable, as below:

    $ SPRING_APPLICATION_JSON='{ "maven": { "local-repository": null,
      "remote-repositories": { "repo1": { "url": "https://repo1", "auth": { "username": "repo1user", "password": "repo1pass" } }, "repo2": { "url": "https://repo2" } },
      "proxy": { "host": "proxyhost", "port": 9018, "auth": { "username": "proxyuser", "password": "proxypass" } } } }' java -jar spring-cloud-dataflow-server-local-{project-version}.jar

14. Application Configuration

You can use the following configuration properties of the Data Flow server to customize how applications are deployed.

    spring.cloud.deployer.local.workingDirectoriesRoot=java.io.tmpdir # Directory in which all created processes will run and create log files.
    spring.cloud.deployer.local.deleteFilesOnExit=true # Whether to delete created files and directories on JVM exit.
    spring.cloud.deployer.local.envVarsToInherit=TMP,LANG,LANGUAGE,"LC_.*. # Array of regular expression patterns for environment variables that will be passed to launched applications.
    spring.cloud.deployer.local.javaCmd=java # Command to run java.
    spring.cloud.deployer.local.shutdownTimeout=30 # Max number of seconds to wait for app shutdown.
    spring.cloud.deployer.local.javaOpts= # The Java options to pass to the JVM

When deploying the application, you can also set deployer properties prefixed with app.<name of application>. For example, to set Java options for the time application in the ticktock stream, use the following stream deployment properties:

    dataflow:> stream create --name ticktock --definition "time --server.port=9000 | log"
    dataflow:> stream deploy --name ticktock --properties "app.time.spring.cloud.deployer.local.javaOpts=-Xmx2048m -Dtest=foo"

As a convenience, you can set the property spring.cloud.deployer.local.memory to set the Java option -Xmx. For example:

    dataflow:> stream deploy --name ticktock --properties "app.time.spring.cloud.deployer.local.memory=2048m"

At deployment time, if you specify an -Xmx option in the javaOpts property in addition to a value of the memory option, the value in the javaOpts property has precedence. Also, the javaOpts property set when deploying the application has precedence over the Data Flow server’s javaOpts property.
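The precedence rules can be summarised in a short sketch. It is not the local deployer's code: the class and method names are hypothetical, and it assumes that a deployment-level javaOpts value replaces the server-level value as a whole rather than being merged with it.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Illustrative only: resolves the JVM options that result from javaOpts and memory settings. */
final class JavaOptsSketch {

    static List<String> effectiveOptions(String serverJavaOpts, String deploymentJavaOpts, String memory) {
        // Deployment-level javaOpts take precedence over the server-level javaOpts (assumed: full replacement).
        String chosen = isBlank(deploymentJavaOpts) ? serverJavaOpts : deploymentJavaOpts;

        List<String> options = new ArrayList<>();
        if (!isBlank(chosen)) {
            options.addAll(Arrays.asList(chosen.trim().split("\\s+")));
        }

        // The memory property only contributes -Xmx when javaOpts does not already set it.
        boolean hasXmx = options.stream().anyMatch(option -> option.startsWith("-Xmx"));
        if (!hasXmx && !isBlank(memory)) {
            options.add(0, "-Xmx" + memory);
        }
        return options;
    }

    private static boolean isBlank(String value) {
        return value == null || value.trim().isEmpty();
    }

    public static void main(String[] args) {
        System.out.println(effectiveOptions(null, "-Xmx1024m -Dtest=foo", "2048m"));
    }
}
```

In the usage shown in main, the -Xmx1024m value from javaOpts is kept and the memory value of 2048m is ignored, which mirrors the precedence described above.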

Server Configuration

In this section you will learn how to configure Spring Cloud Data Flow server’s features such as the relational database to use and security.

15. Feature Toggles

The Data Flow server offers a specific set of features that can be enabled or disabled when launching. These features include all the lifecycle operations and REST endpoints (server and client implementations, including the Shell and the UI) for:

-

Streams

-

Tasks

-

Analytics

You can enable or disable these features by setting the following boolean properties when launching the Data Flow server:

-

spring.cloud.dataflow.features.streams-enabled

-

spring.cloud.dataflow.features.tasks-enabled

-

spring.cloud.dataflow.features.analytics-enabled

By default, all the features are enabled.

Note: Since the analytics feature is enabled by default, the Data Flow server is expected to have a valid Redis store available as the analytics repository, because the default implementation of analytics is based on Redis.

This also means that the Data Flow server’s health depends on the availability of the Redis store.

If you do not want to enable HTTP endpoints to read analytics data written to Redis, disable the analytics feature using the property mentioned above.

The REST endpoint /features provides information on the features enabled/disabled.

16. Database Configuration

Spring Cloud Data Flow provides schemas for H2, HSQLDB, MySQL, Oracle, PostgreSQL, DB2, and SQL Server that are created automatically when the server starts.

The JDBC drivers for MySQL (via the MariaDB driver), HSQLDB, and PostgreSQL, along with embedded H2, are available out of the box. If you are using any other database, the corresponding JDBC driver jar needs to be on the classpath of the server.

The database properties can be passed as command-line arguments to the Data Flow Server.

For instance, if you are using MySQL:

java -jar spring-cloud-dataflow-server-local/target/spring-cloud-dataflow-server-local-1.0.0.BUILD-SNAPSHOT.jar \
    --spring.datasource.url=jdbc:mysql:<db-info> \
    --spring.datasource.username=<user> \
    --spring.datasource.password=<password> \
    --spring.datasource.driver-class-name=org.mariadb.jdbc.Driver &

For PostgreSQL:

java -jar spring-cloud-dataflow-server-local/target/spring-cloud-dataflow-server-local-1.0.0.BUILD-SNAPSHOT.jar \
    --spring.datasource.url=jdbc:postgresql:<db-info> \
    --spring.datasource.username=<user> \
    --spring.datasource.password=<password> \
    --spring.datasource.driver-class-name=org.postgresql.Driver &

For HSQLDB:

java -jar spring-cloud-dataflow-server-local/target/spring-cloud-dataflow-server-local-1.0.0.BUILD-SNAPSHOT.jar \
    --spring.datasource.url=jdbc:hsqldb:<db-info> \
    --spring.datasource.username=SA \
    --spring.datasource.driver-class-name=org.hsqldb.jdbc.JDBCDriver &

| There is a schema update to the Spring Cloud Data Flow datastore when upgrading from version 1.0.x to 1.1.x. Migration scripts for specific database types can be found here. |

| If you wish to use an external H2 database instance instead of the one embedded with Spring Cloud Data Flow, set the spring.dataflow.embedded.database.enabled property to false. If spring.dataflow.embedded.database.enabled is set to false, or a database other than H2 is specified as the datasource, the embedded database will not start. |

17. Security

By default, the Data Flow server is unsecured and runs on an unencrypted HTTP connection. You can secure your REST endpoints, as well as the Data Flow Dashboard, by enabling HTTPS and requiring clients to authenticate using either:

-

Basic Authentication

-

OAuth 2.0 Authentication

NOTE: By default, the REST endpoints (administration, management, and health), as well as the Dashboard UI, do not require authenticated access.

17.1. Enabling HTTPS

By default, the dashboard, management, and health endpoints use HTTP as a transport.

You can switch to HTTPS by adding a certificate to your configuration in application.yml.

server:
  port: 8443 (1)
  ssl:
    key-alias: yourKeyAlias (2)
    key-store: path/to/keystore (3)
    key-store-password: yourKeyStorePassword (4)
    key-password: yourKeyPassword (5)
    trust-store: path/to/trust-store (6)
    trust-store-password: yourTrustStorePassword (7)

| 1 | As the default port is 9393, you may choose to change the port to a more typical HTTPS port. |

| 2 | The alias (or name) under which the key is stored in the keystore. |

| 3 | The path to the keystore file. Classpath resources may also be specified, by using the classpath prefix: classpath:path/to/keystore |

| 4 | The password of the keystore. |

| 5 | The password of the key. |

| 6 | The path to the truststore file. Classpath resources may also be specified, by using the classpath prefix: classpath:path/to/trust-store |

| 7 | The password of the trust store. |

| If HTTPS is enabled, it will completely replace HTTP as the protocol over which the REST endpoints and the Data Flow Dashboard interact. Plain HTTP requests will fail - therefore, make sure that you configure your Shell accordingly. |

17.1.1. Using Self-Signed Certificates

For testing purposes or during development, it might be convenient to create self-signed certificates. To get started, execute the following command to create a certificate:

$ keytool -genkey -alias dataflow -keyalg RSA -keystore dataflow.keystore \
    -validity 3650 -storetype JKS \
    -dname "CN=localhost, OU=Spring, O=Pivotal, L=Kailua-Kona, ST=HI, C=US" \ (1)
    -keypass dataflow -storepass dataflow

| 1 | CN is the only important parameter here. It should match the domain you are trying to access, e.g. localhost. |

Then add the following to your application.yml file:

server:
  port: 8443
  ssl:
    enabled: true
    key-alias: dataflow
    key-store: "/your/path/to/dataflow.keystore"
    key-store-type: jks
    key-store-password: dataflow
    key-password: dataflow

This is all that’s needed for the Data Flow Server. Once you start the server, you should be able to access it via https://localhost:8443/. As this is a self-signed certificate, your browser will show a warning, which you need to ignore.

17.1.2. Self-Signed Certificates and the Shell

By default, self-signed certificates are an issue for the Shell, and additional steps are necessary to make the Shell work with them. Two options are available:

-

Add the self-signed certificate to the JVM truststore

-

Skip certificate validation

Add the self-signed certificate to the JVM truststore

In order to use the JVM truststore option, we need to export the previously created certificate from the keystore:

$ keytool -export -alias dataflow -keystore dataflow.keystore -file dataflow_cert -storepass dataflow

Next, we need to create a truststore which the Shell will use:

$ keytool -importcert -keystore dataflow.truststore -alias dataflow -storepass dataflow -file dataflow_cert -noprompt

Now, you are ready to launch the Data Flow Shell using the following JVM arguments:

$ java -Djavax.net.ssl.trustStorePassword=dataflow \
    -Djavax.net.ssl.trustStore=/path/to/dataflow.truststore \
    -Djavax.net.ssl.trustStoreType=jks \
    -jar spring-cloud-dataflow-shell-1.1.2.RELEASE.jar

| In case you run into trouble establishing a connection via SSL, you can enable additional logging by using and setting the |

Don’t forget to target the Data Flow Server with:

dataflow:> dataflow config server https://localhost:8443/

Skip Certificate Validation

Alternatively, you can bypass certificate validation by providing the optional command-line parameter --dataflow.skip-ssl-validation=true.

Using this command-line parameter, the shell will accept any (self-signed) SSL certificate.

| If possible, you should avoid using this option. Disabling the trust manager defeats the purpose of SSL and makes you vulnerable to man-in-the-middle attacks. |

17.2. Basic Authentication

Basic Authentication can be enabled by adding the following to application.yml or via environment variables:

security:
  basic:
    enabled: true (1)
    realm: Spring Cloud Data Flow (2)

| 1 | Enables basic authentication. Must be set to true for security to be enabled. |

| 2 | (Optional) The realm for Basic authentication. Will default to Spring if not explicitly set. |

| Current versions of Chrome do not display the realm. Please see the following Chromium issue ticket for more information. |

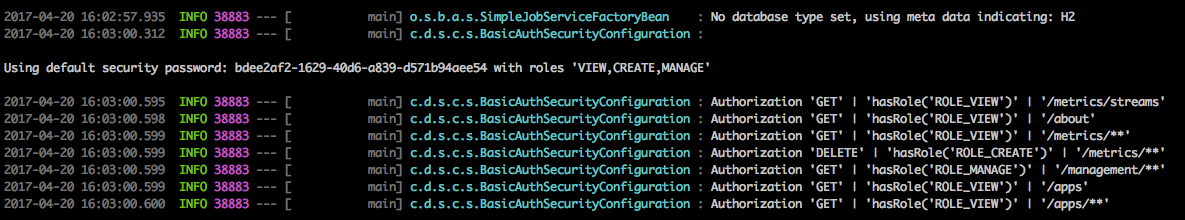

In this use-case, the underlying Spring Boot will auto-create a user called user with an auto-generated password which will be printed out to the console upon startup.

| Please be aware of inherent issues of Basic Authentication and logging out, since the credentials are cached by the browser and simply browsing back to application pages will log you back in. |
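For clients other than a browser, it can help to see what Basic Authentication actually sends. The following Python sketch builds the Authorization header value as defined by the HTTP Basic scheme (RFC 7617). The helper name basic_auth_header and the credentials shown are placeholders, not values produced by Data Flow.

```python
import base64


def basic_auth_header(username, password):
    """Build the Authorization header value for HTTP Basic authentication."""
    # The scheme is "Basic " followed by base64("username:password").
    credentials = "{}:{}".format(username, password).encode("utf-8")
    return "Basic " + base64.b64encode(credentials).decode("ascii")


# Placeholder credentials; with the default setup the user is called "user"
# and the password is the one printed to the console at startup.
header = basic_auth_header("user", "generated-password")
print("Authorization: " + header)
```

Because the credentials are only base64 encoded and not encrypted, this header should be sent over HTTPS only.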

If you need to define more than one file-based user account, please take a look at File based authentication.

17.2.1. File based authentication

By default, Spring Boot allows you to specify only a single user. Spring Cloud Data Flow also supports listing more than one user in a configuration file, as described below. Each user must be assigned a password and one or more roles:

security:
  basic:
    enabled: true
    realm: Spring Cloud Data Flow
dataflow:
  security:
    authentication:
      file:
        enabled: true (1)
        users: (2)
          bob: bobspassword, ROLE_ADMIN (3)
          alice: alicepwd, ROLE_VIEW, ROLE_CREATE

| 1 | Enables file based authentication |

| 2 | This is a YAML map of username to password |

| 3 | Each map value is made of a corresponding password and role(s), comma separated |

| As of Spring Cloud Data Flow 1.1, roles are not yet supported (specified roles are ignored). Due to an issue in Spring Security, though, at least one role must be provided. |

17.2.2. LDAP Authentication

Spring Cloud Data Flow also supports authentication against an LDAP server (Lightweight Directory Access Protocol), providing support for the following 2 modes:

-

Direct bind

-

Search and bind

When the LDAP authentication option is activated, the default single user mode is turned off.

In direct bind mode, a pattern is defined for the user’s distinguished name (DN), using a placeholder for the username. The authentication process derives the distinguished name of the user by replacing the placeholder and uses it, along with the supplied password, to authenticate the user against the LDAP server. You can set up LDAP direct bind as follows:

security:
  basic:
    enabled: true
    realm: Spring Cloud Data Flow
dataflow:
  security:
    authentication:
      ldap:
        enabled: true (1)
        url: ldap://ldap.example.com:3309 (2)
        userDnPattern: uid={0},ou=people,dc=example,dc=com (3)

| 1 | Enables LDAP authentication |

| 2 | The URL for the LDAP server |

| 3 | The distinguished name (DN) pattern for authenticating against the server |
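To make the placeholder substitution in direct bind mode concrete, here is a minimal Python sketch of how a user DN could be derived from the pattern. It is an illustration only and not the code used by Spring Security. The function name derive_user_dn is hypothetical, and the escaping covers only a simplified subset of the rules in RFC 4514 (leading or trailing spaces and a leading '#' are not handled).

```python
# Characters that must be escaped inside a DN attribute value
# (simplified subset of RFC 4514).
DN_SPECIAL_CHARACTERS = '\\,+"<>;'


def derive_user_dn(user_dn_pattern, username):
    """Replace the {0} placeholder in a DN pattern with the escaped username."""
    escaped = "".join(
        "\\" + ch if ch in DN_SPECIAL_CHARACTERS else ch for ch in username
    )
    return user_dn_pattern.replace("{0}", escaped)


print(derive_user_dn("uid={0},ou=people,dc=example,dc=com", "bob"))
```

The resulting DN and the password supplied by the user are then used for the bind against the LDAP server. Escaping matters because a username containing a comma would otherwise change the structure of the DN.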

The search and bind mode involves connecting to an LDAP server, either anonymously or with a fixed account, and searching for the distinguished name of the authenticating user based on its username, and then using the resulting value and the supplied password for binding to the LDAP server. This option is configured as follows:

security:
  basic:
    enabled: true
    realm: Spring Cloud Data Flow
dataflow:
  security:
    authentication:
      ldap:
        enabled: true (1)
        url: ldap://localhost:10389 (2)
        managerDn: uid=admin,ou=system (3)
        managerPassword: secret (4)
        userSearchBase: ou=otherpeople,dc=example,dc=com (5)
        userSearchFilter: uid={0} (6)

| 1 | Enables LDAP integration |

| 2 | The URL of the LDAP server |

| 3 | A DN to authenticate to the LDAP server, if anonymous searches are not supported (optional, required together with next option) |

| 4 | A password to authenticate to the LDAP server, if anonymous searches are not supported (optional, required together with previous option) |

| 5 | The base for searching the DN of the authenticating user (serves to restrict the scope of the search) |

| 6 | The search filter for the DN of the authenticating user |

| For more information, please also see the chapter LDAP Authentication of the Spring Security reference guide. |

LDAP Transport Security

When connecting to an LDAP server, you typically have two options for establishing the connection securely:

-

LDAP over SSL (LDAPs)

-

Start Transport Layer Security (Start TLS is defined in RFC2830)

As of Spring Cloud Data Flow 1.1.0, only LDAPs is supported out-of-the-box. When using official certificates, no special configuration is necessary in order to connect to an LDAP server via LDAPs. Just change the URL format to ldaps, e.g. ldaps://localhost:636.

When using self-signed certificates, the setup for your Spring Cloud Data Flow server becomes slightly more complex. The setup is very similar to Using Self-Signed Certificates (please read that section first), and Spring Cloud Data Flow needs to reference a trustStore in order to work with your self-signed certificates.

| While useful during development and testing, please never use self-signed certificates in production! |

Ultimately you have to provide a set of system properties to reference the trustStore and its credentials when starting the server:

$ java -Djavax.net.ssl.trustStorePassword=dataflow \
    -Djavax.net.ssl.trustStore=/path/to/dataflow.truststore \
    -Djavax.net.ssl.trustStoreType=jks \
    -jar spring-cloud-starter-dataflow-server-local-1.1.2.RELEASE.jar

As mentioned above, another option to connect to an LDAP server securely is via Start TLS. In the LDAP world, LDAPs is technically even considered deprecated in favor of Start TLS. However, this option is currently not supported out-of-the-box by Spring Cloud Data Flow.

Please follow the following issue tracker ticket to track its implementation. You may also want to look at the Spring LDAP reference documentation chapter on Custom DirContext Authentication Processing for further details.

17.3. OAuth 2.0

OAuth 2.0 allows you to integrate Spring Cloud Data Flow into Single Sign On (SSO) environments. The following 2 OAuth2 Grant Types will be used:

-

Authorization Code - Used for the GUI (Browser) integration. You will be redirected to your OAuth Service for authentication

-

Password - Used by the shell (and the REST integration), so you can log in using a username and password

The REST endpoints are secured via Basic Authentication but will use the Password Grant Type under the covers to authenticate with your OAuth2 service.

| When authentication is set up, it is strongly recommended to enable HTTPS as well, especially in production environments. |

You can turn on OAuth2 authentication by adding the following to application.yml or via environment variables:

security:
  basic:
    enabled: true (1)
    realm: Spring Cloud Data Flow (2)
  oauth2: (3)
    client:
      client-id: myclient
      client-secret: mysecret
      access-token-uri: http://127.0.0.1:9999/oauth/token
      user-authorization-uri: http://127.0.0.1:9999/oauth/authorize
    resource:
      user-info-uri: http://127.0.0.1:9999/me

| 1 | Must be set to true for security to be enabled. |

| 2 | The realm for Basic authentication |

| 3 | OAuth configuration section. If you leave off the OAuth2 section, Basic Authentication will be enabled instead. |

| As of version 1.0 Spring Cloud Data Flow does not provide finer-grained authorization. Thus, once you are logged in, you have full access to all functionality. |

You can verify that basic authentication is working properly using curl:

$ curl -u myusername:mypassword http://localhost:9393/

As a result, you should see a list of available REST endpoints.

17.3.1. Authentication using the Spring Cloud Data Flow Shell

If your OAuth2 provider supports the Password Grant Type you can start the Data Flow Shell with:

$ java -jar spring-cloud-dataflow-shell-1.1.2.RELEASE.jar \
    --dataflow.uri=http://localhost:9393 \
    --dataflow.username=my_username --dataflow.password=my_password

| Keep in mind that when authentication for Spring Cloud Data Flow is enabled, the underlying OAuth2 provider must support the Password OAuth2 Grant Type, if you want to use the Shell. |

From within the Data Flow Shell you can also provide credentials using:

dataflow config server --uri http://localhost:9393 --username my_username --password my_password

Once successfully targeted, you should see the following output:

dataflow:>dataflow config info
dataflow config info

╔═══════════╤═══════════════════════════════════════╗
║Credentials│[username='my_username, password=****']║
╠═══════════╪═══════════════════════════════════════╣
║Result     │                                       ║
║Target     │http://localhost:9393                  ║
╚═══════════╧═══════════════════════════════════════╝

17.3.2. OAuth2 Authentication Examples

Local OAuth2 Server

With Spring Security OAuth you can easily create your own OAuth2 Server with the following 2 simple annotations:

-

@EnableResourceServer

-

@EnableAuthorizationServer

A working example application can be found at:

Simply clone the project, then build and start it. Furthermore, configure Spring Cloud Data Flow with the respective Client Id and Client Secret.

Authentication using GitHub

If you would rather use an existing OAuth2 provider, here is an example for GitHub. First, you need to register a new application under your GitHub account at:

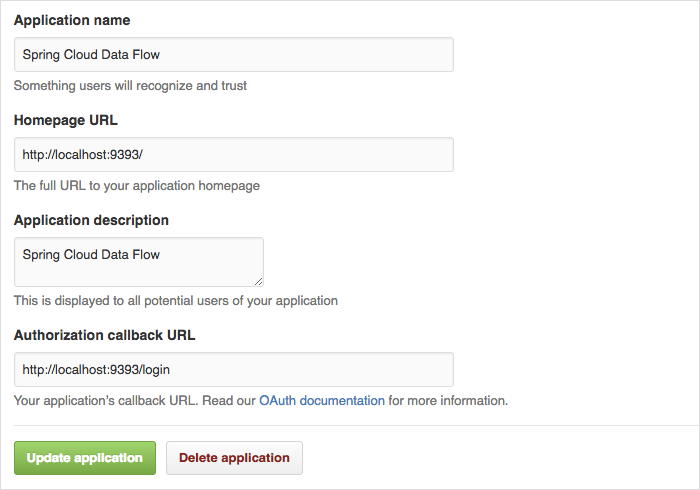

When running a default version of Spring Cloud Data Flow locally, your GitHub configuration should look like the following:

| For the Authorization callback URL you will enter Spring Cloud Data Flow’s Login URL, e.g. localhost:9393/login. |

Configure Spring Cloud Data Flow with the relevant GitHub Client Id and Secret:

security:
  basic:
    enabled: true
  oauth2:
    client:
      client-id: your-github-client-id
      client-secret: your-github-client-secret
      access-token-uri: https://github.com/login/oauth/access_token
      user-authorization-uri: https://github.com/login/oauth/authorize
    resource:
      user-info-uri: https://api.github.com/user

| GitHub does not support the OAuth2 password grant type. As such you cannot use the Spring Cloud Data Flow Shell in conjunction with GitHub. |

17.4. Securing the Spring Boot Management Endpoints

When enabling security, please also make sure that the Spring Boot HTTP Management Endpoints are secured as well. You can enable security for the management endpoints by adding the following to application.yml:

management:
  contextPath: /management
  security:
    enabled: true

| If you don’t explicitly enable security for the management endpoints, you may end up having unsecured REST endpoints, despite security.basic.enabled being set to true. |

18. Monitoring and Management

The Spring Cloud Data Flow server is a Spring Boot application that includes the Actuator library, which adds several production ready features to help you monitor and manage your application.

The Actuator library adds HTTP endpoints under the context path /management, which is also a discovery page for available endpoints. For example, there is a health endpoint that shows application health information and an env endpoint that lists properties from Spring’s ConfigurableEnvironment. By default, only the health and application info endpoints are accessible. The other endpoints are considered to be sensitive and need to be enabled explicitly via configuration. If you are enabling sensitive endpoints, then you should also secure the Data Flow server’s endpoints so that information is not inadvertently exposed to unauthenticated users. The local Data Flow server has security disabled by default, so all actuator endpoints are available.

The Data Flow server requires a relational database and, if the feature toggle for analytics is enabled, a Redis server as well. The Data Flow server will autoconfigure the DataSourceHealthIndicator and RedisHealthIndicator if needed. The health of these two services is incorporated into the overall health status of the server through the health endpoint.

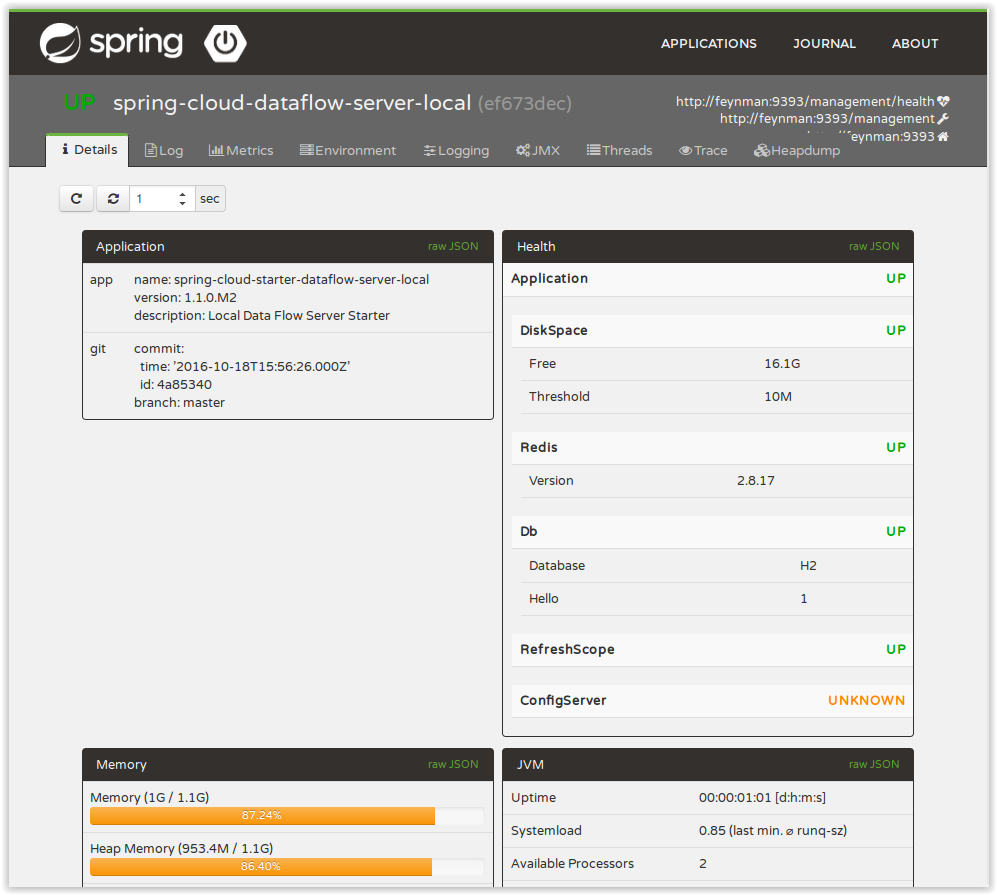

18.1. Spring Boot Admin

A nice way to visualize and interact with actuator endpoints is to incorporate the Spring Boot Admin client library into the Spring Cloud Data Flow server. You can create the Spring Boot Admin application by following a few simple steps.

An easy way to include the client library into the Data Flow server is to create a new Data Flow Server project from start.spring.io. Type 'flow' in the "Search for dependencies" text box and select the server runtime you want to customize. A simple way to have the Spring Cloud Data Flow server be a client to the Spring Boot Admin Server is by adding a dependency to the Data Flow server’s pom and an additional configuration property as documented in Registering Client Applications.

This will result in a UI with tabs for each of the actuator endpoints.

Additional configuration is required to interact with JMX beans and logging levels. Refer to the Spring Boot Admin documentation for more information. As only the info and health endpoints are available to unauthenticated users, you should enable security on the Data Flow Server and also configure the Spring Boot Admin server’s security so that it can securely access the actuator endpoints.

18.2. Monitoring Deployed Applications

The applications that are deployed by Spring Cloud Data Flow are based on Spring Boot which contains several features for monitoring your application in production. Each deployed application contains several web endpoints for monitoring and interacting with Stream and Task applications.

In particular, the metrics endpoint contains counters and gauges for HTTP requests, System Metrics (such as JVM stats), DataSource Metrics and Message Channel Metrics (such as message rates). In turn, these metrics can be exported periodically to various application monitoring tools via MetricWriter implementations. You can control how often and which Spring Boot metrics are exported through the use of include and exclude name filters.

The project Spring Cloud Data Flow Metrics provides the foundation for exporting Spring Boot metrics. The main project provides Spring Boot’s AutoConfiguration to set up the exporting process and common functionality, such as defining a metric name prefix appropriate for your environment. For example, you may want to include the region where the application is running in addition to the application’s name and the stream/task to which it belongs.

The main project also includes a LogMetricWriter so that metrics can be stored in the log file. While very simple in approach, log files are often ingested into application monitoring tools (such as Splunk) where they can be further processed to create dashboards of an application’s performance.

The project Spring Cloud Data Flow Metrics Datadog Metrics provides integration to export Spring Boot metrics to Datadog.

To make use of this functionality, you will need to add additional dependencies into your Stream and Task applications. To customize the "out of the box" Task and Stream applications you can use the Data Flow Initializr to generate a project and then add to the generated Maven pom file the MetricWriter implementation you want to use. The documentation on the Data Flow Metrics project pages provides the additional information you need to get started.

Streams

In this section you will learn all about Streams and how to use them with Spring Cloud Data Flow.

19. Introduction

In Spring Cloud Data Flow, a basic stream defines the ingestion of event data from a source to a sink that passes through any number of processors. Streams are composed of Spring Cloud Stream applications and the deployment of stream definitions is done via the Data Flow Server (REST API). The Getting Started section shows you how to start the server and how to start and use the Spring Cloud Data Flow shell.

A high level DSL is used to create stream definitions. The DSL to define a stream that has an http source and a file sink (with no processors) is shown below:

http | file

The DSL mimics UNIX pipes and filters syntax. Default values for ports and filenames are used in this example but can be overridden using -- options, such as:

http --server.port=8091 | file --directory=/tmp/httpdata/

To create these stream definitions, you use the shell or make an HTTP POST request to the Spring Cloud Data Flow Server. For more information on making HTTP requests directly to the server, consult the REST API Guide.
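To illustrate the shape of such a definition, the following Python sketch splits a simple stream definition into application names and their -- options. It is a deliberately naive illustration and not the Data Flow DSL parser: the function name parse_stream_definition is hypothetical, and the sketch assumes that no option value contains a pipe symbol.

```python
import shlex


def parse_stream_definition(definition):
    """Split a simple stream definition into (app name, properties) pairs."""
    apps = []
    # Assumption: "|" only ever separates applications in this simplified form.
    for segment in definition.split("|"):
        tokens = shlex.split(segment)
        if not tokens:
            raise ValueError("empty application in definition: " + definition)
        name, options = tokens[0], tokens[1:]
        properties = {}
        for option in options:
            if not option.startswith("--"):
                raise ValueError("expected a -- option, got: " + option)
            key, _, value = option[2:].partition("=")
            properties[key] = value
        apps.append((name, properties))
    return apps


for app_name, app_properties in parse_stream_definition(
    "http --server.port=8091 | file --directory=/tmp/httpdata/"
):
    print(app_name, app_properties)
```

The first element of the result is the source and the last one is the sink. Anything in between would be a processor.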

20. Stream DSL

In the example above, we connected a source to a sink using the pipe symbol |. You can also pass properties to the source and sink configurations. The property names depend on the individual app implementations but, as an example, the http source app exposes a server.port setting that allows you to change the data ingestion port from the default value. To create the stream using port 8000, we would use:

dataflow:> stream create --definition "http --server.port=8000 | log" --name myhttpstream

The shell provides tab completion for application properties, and the shell command app info <appType>:<appName> provides additional documentation for all the supported properties.

| Supported Stream <appType>'s are: source, processor, and sink |

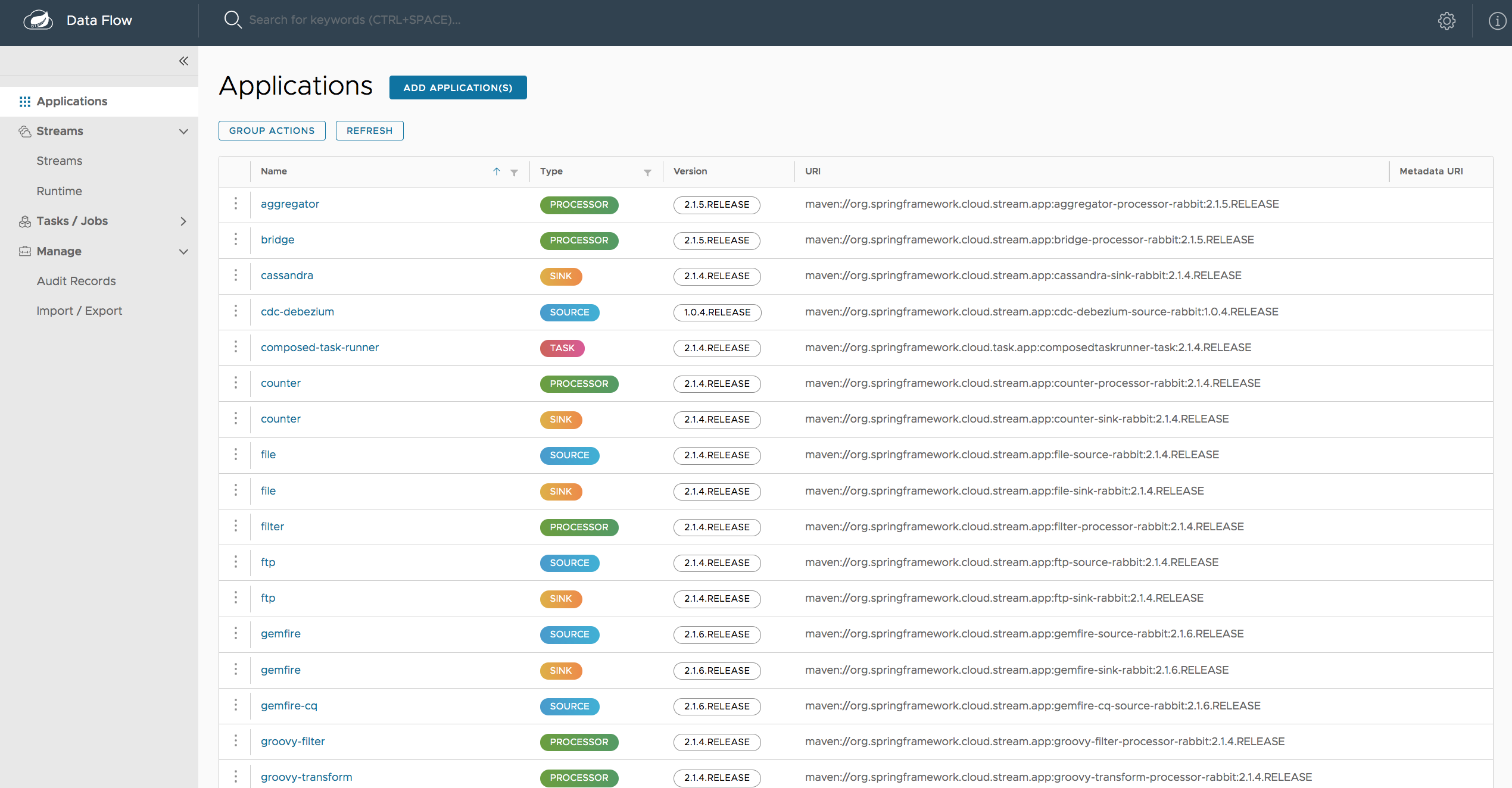

21. Register a Stream App

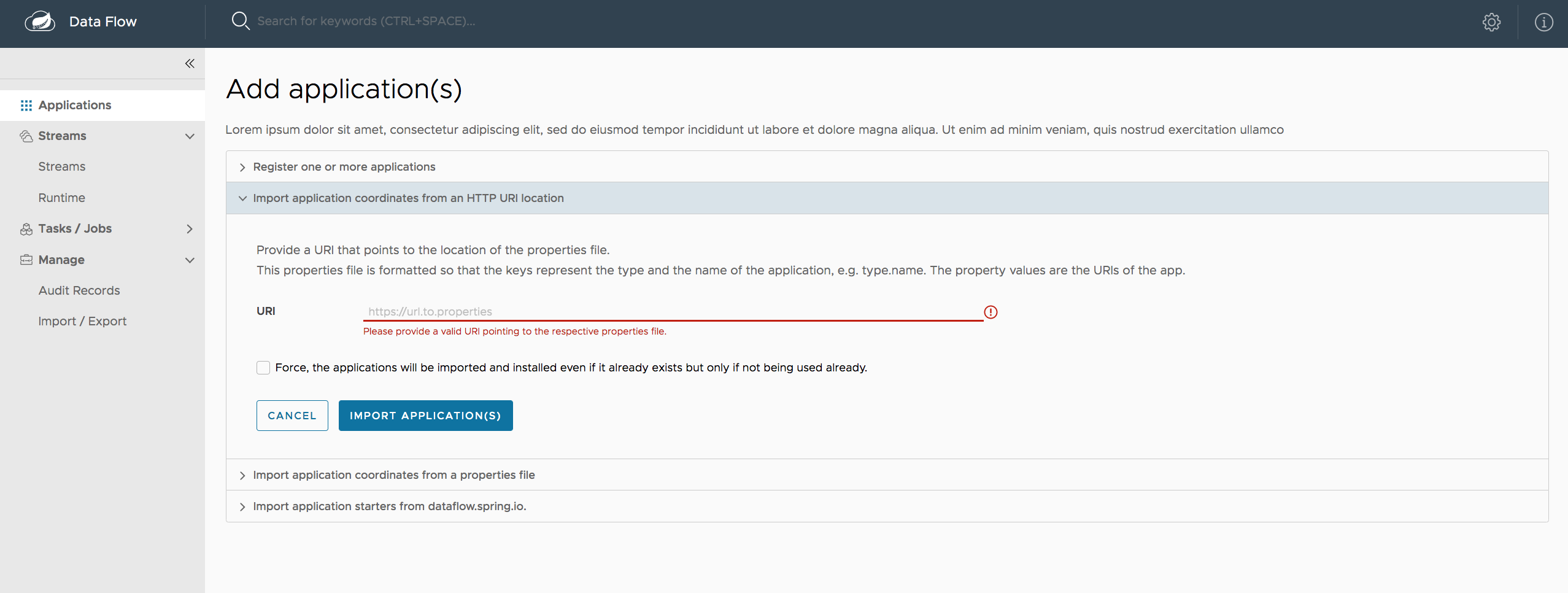

Register a Stream App with the App Registry using the Spring Cloud Data Flow Shell app register command. You must provide a unique name, an application type, and a URI that can be resolved to the app artifact. For the type, specify "source", "processor", or "sink".

Here are a few examples:

dataflow:>app register --name mysource --type source --uri maven://com.example:mysource:0.0.1-SNAPSHOT
dataflow:>app register --name myprocessor --type processor --uri file:///Users/example/myprocessor-1.2.3.jar
dataflow:>app register --name mysink --type sink --uri http://example.com/mysink-2.0.1.jar

When providing a URI with the maven scheme, the format should conform to the following:

maven://<groupId>:<artifactId>[:<extension>[:<classifier>]]:<version>

For example, if you would like to register the snapshot versions of the http and log applications built with the RabbitMQ binder, you could do the following:

dataflow:>app register --name http --type source --uri maven://org.springframework.cloud.stream.app:http-source-rabbit:1.1.2.BUILD-SNAPSHOT
dataflow:>app register --name log --type sink --uri maven://org.springframework.cloud.stream.app:log-sink-rabbit:1.1.2.BUILD-SNAPSHOT

If you would like to register multiple apps at one time, you can store them in a properties file where the keys are formatted as <type>.<name> and the values are the URIs.

For example, if you would like to register the snapshot versions of the http and log applications built with the RabbitMQ binder, you could have the following in a properties file (e.g. stream-apps.properties):

source.http=maven://org.springframework.cloud.stream.app:http-source-rabbit:1.1.2.BUILD-SNAPSHOT
sink.log=maven://org.springframework.cloud.stream.app:log-sink-rabbit:1.1.2.BUILD-SNAPSHOT

Then, to import the apps in bulk, use the app import command and provide the location of the properties file via --uri:

dataflow:>app import --uri file:///<YOUR_FILE_LOCATION>/stream-apps.properties

For convenience, static files with application URIs (for both Maven and Docker) are available for all the out-of-the-box stream and task/batch app starters. You can point to such a file and import all the application URIs in bulk. Otherwise, as explained in the previous paragraphs, you can register them individually or have your own custom property file with only the required application URIs in it. It is recommended, however, to have a "focused" list of desired application URIs in a custom property file.
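A "focused" properties file of this kind can also be generated rather than written by hand. The following Python sketch renders a list of apps in the <type>.<name>=<uri> layout used by app import. The function name app_import_properties is hypothetical, and the sketch only accepts the three stream app types named in this section.

```python
STREAM_APP_TYPES = ("source", "processor", "sink")


def app_import_properties(apps):
    """Render (type, name, uri) triples as <type>.<name>=<uri> lines."""
    lines = []
    for app_type, name, uri in apps:
        if app_type not in STREAM_APP_TYPES:
            raise ValueError("unsupported stream app type: " + app_type)
        lines.append("{}.{}={}".format(app_type, name, uri))
    return "\n".join(lines) + "\n"


content = app_import_properties([
    ("source", "http", "maven://org.springframework.cloud.stream.app:http-source-rabbit:1.1.2.BUILD-SNAPSHOT"),
    ("sink", "log", "maven://org.springframework.cloud.stream.app:log-sink-rabbit:1.1.2.BUILD-SNAPSHOT"),
])
print(content, end="")
```

Writing the returned text to a file such as stream-apps.properties gives a file that can be passed to app import via --uri.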

List of available Stream Application Starters:

| Artifact Type | Stable Release | SNAPSHOT Release |
|---|---|---|
| RabbitMQ + Maven | | http://bit.ly/Bacon-BUILD-SNAPSHOT-stream-applications-rabbit-maven |
| RabbitMQ + Docker | http://bit.ly/Avogadro-SR1-stream-applications-rabbit-docker | N/A |
| Kafka 0.9 + Maven | http://bit.ly/Avogadro-SR1-stream-applications-kafka-09-maven | http://bit.ly/Bacon-BUILD-SNAPSHOT-stream-applications-kafka-09-maven |
| Kafka 0.9 + Docker | http://bit.ly/Avogadro-SR1-stream-applications-kafka-09-docker | N/A |
| Kafka 0.10 + Maven | http://bit.ly/Avogadro-SR1-stream-applications-kafka-10-maven | http://bit.ly/Bacon-BUILD-SNAPSHOT-stream-applications-kafka-10-maven |
| Kafka 0.10 + Docker | http://bit.ly/Avogadro-SR1-stream-applications-kafka-10-docker | N/A |

List of available Task Application Starters:

| Artifact Type | Stable Release | SNAPSHOT Release |
|---|---|---|
| Maven | | http://bit.ly/Belmont-BUILD-SNAPSHOT-task-applications-maven |
| Docker | | N/A |

For more information about the available task starters, see the Task App Starters Project Page and related reference documentation. For more information about the available stream starters, see the Stream App Starters Project Page and related reference documentation.

As an example, if you would like to register all out-of-the-box stream applications built with the RabbitMQ binder in bulk, you can do so with the following command.

dataflow:>app import --uri http://bit.ly/Avogadro-SR1-stream-applications-rabbit-maven

You can also pass the --local option (which is TRUE by default) to indicate whether the properties file location should be resolved within the shell process itself. If the location should be resolved from the Data Flow Server process, specify --local false.

When using either app register or app import, if a stream app is already registered with the provided name and type, it will not be overridden by default. If you would like to override the pre-existing stream app, then include the --force option.

| In some cases the Resource is resolved on the server side, whereas in others the URI will be passed to a runtime container instance where it is resolved. Consult the specific documentation of each Data Flow Server for more detail. |
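The maven URI format described in this section, maven://<groupId>:<artifactId>[:<extension>[:<classifier>]]:<version>, can be checked before registering an app. The following Python sketch splits such a URI into its coordinates. The function name parse_maven_uri is hypothetical, and this is not the resolver used by the Data Flow Server.

```python
def parse_maven_uri(uri):
    """Parse maven://<groupId>:<artifactId>[:<extension>[:<classifier>]]:<version>."""
    prefix = "maven://"
    if not uri.startswith(prefix):
        raise ValueError("not a maven URI: " + uri)
    parts = uri[len(prefix):].split(":")
    if not 3 <= len(parts) <= 5:
        raise ValueError("expected 3 to 5 colon-separated coordinates: " + uri)
    coordinates = {
        "groupId": parts[0],
        "artifactId": parts[1],
        "extension": None,
        "classifier": None,
        "version": parts[-1],  # the version is always the last coordinate
    }
    if len(parts) >= 4:
        coordinates["extension"] = parts[2]
    if len(parts) == 5:
        coordinates["classifier"] = parts[3]
    return coordinates


print(parse_maven_uri(
    "maven://org.springframework.cloud.stream.app:http-source-rabbit:1.1.2.BUILD-SNAPSHOT"
))
```

Note that a classifier can only be given together with an extension, which is why four coordinates are read as groupId, artifactId, extension, and version.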

21.1. Whitelisting application properties

Stream applications are Spring Boot applications which are aware of many Common application properties, e.g. server.port but also families of properties such as those with the prefix spring.jmx and logging. When creating your own application it is desirable to whitelist properties so that the shell and the UI can display them first as primary properties when presenting options via TAB completion or in drop-down boxes.

To whitelist application properties, create a file named spring-configuration-metadata-whitelist.properties in the META-INF resource directory. There are two property keys that can be used inside this file. The first key is named configuration-properties.classes. The value is a comma-separated list of fully qualified @ConfigurationProperties class names. The second key is configuration-properties.names, whose value is a comma-separated list of property names. This can contain the full name of a property, such as server.port, or a partial name to whitelist a category of property names, e.g. spring.jmx.

The Spring Cloud Stream application starters are a good place to look for examples of usage. Here is a simple example of the file sink’s spring-configuration-metadata-whitelist.properties file:

configuration-properties.classes=org.springframework.cloud.stream.app.file.sink.FileSinkProperties

If we also wanted to whitelist server.port, the file would look like this:

configuration-properties.classes=org.springframework.cloud.stream.app.file.sink.FileSinkProperties
configuration-properties.names=server.port

| Make sure to add 'spring-boot-configuration-processor' as an optional dependency to generate the configuration metadata file for the properties. |
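The difference between a full property name and a partial name in configuration-properties.names can be pictured with a small Python sketch. The matching rule shown here (exact match, or the entry followed by a dot) is an assumption made for illustration and is not taken from the Data Flow implementation. The function name is_whitelisted is hypothetical.

```python
def is_whitelisted(property_name, whitelisted_names):
    """Return True if the property matches a full name or a whitelisted category."""
    for entry in whitelisted_names:
        # Assumed rule: an entry matches itself, or any property nested under it.
        if property_name == entry or property_name.startswith(entry + "."):
            return True
    return False


names = ["server.port", "spring.jmx"]
for candidate in ("server.port", "spring.jmx.enabled", "logging.level"):
    print(candidate, is_whitelisted(candidate, names))
```

Under this assumed rule, server.port matches as a full name, spring.jmx.enabled matches through the spring.jmx category, and logging.level does not match.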

22. Creating custom applications

While out-of-the-box source, processor, and sink applications are available, you can extend these applications or write a custom Spring Cloud Stream application.

The process of creating Spring Cloud Stream applications via Spring Initializr is detailed in the Spring Cloud Stream documentation. It is possible to include multiple binders in an application. If doing so, refer to the instructions in Passing Spring Cloud Stream properties for the application on how to configure them.

To support property whitelisting, Spring Cloud Stream applications running in Spring Cloud Data Flow may include the Spring Boot configuration-processor as an optional dependency, as in the following example.

<dependencies>
  <!-- other dependencies -->
  <dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-configuration-processor</artifactId>
    <optional>true</optional>
  </dependency>
</dependencies>

| Make sure that the |

Once a custom application has been created, it can be registered as described in Register a Stream App.

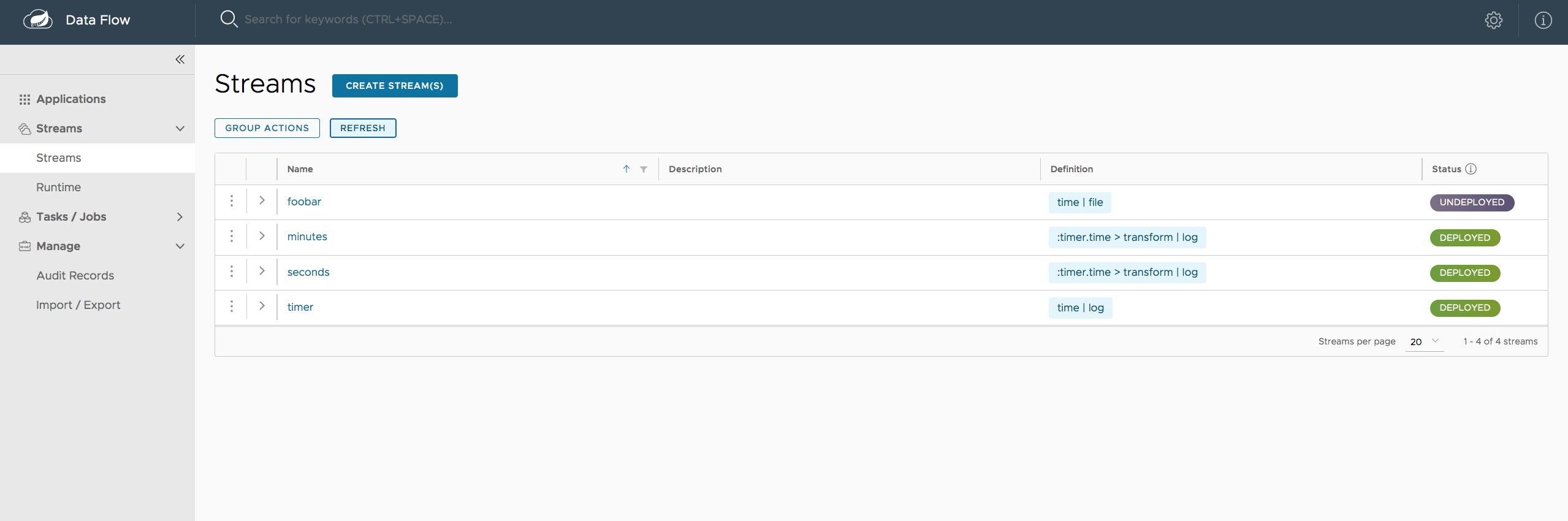

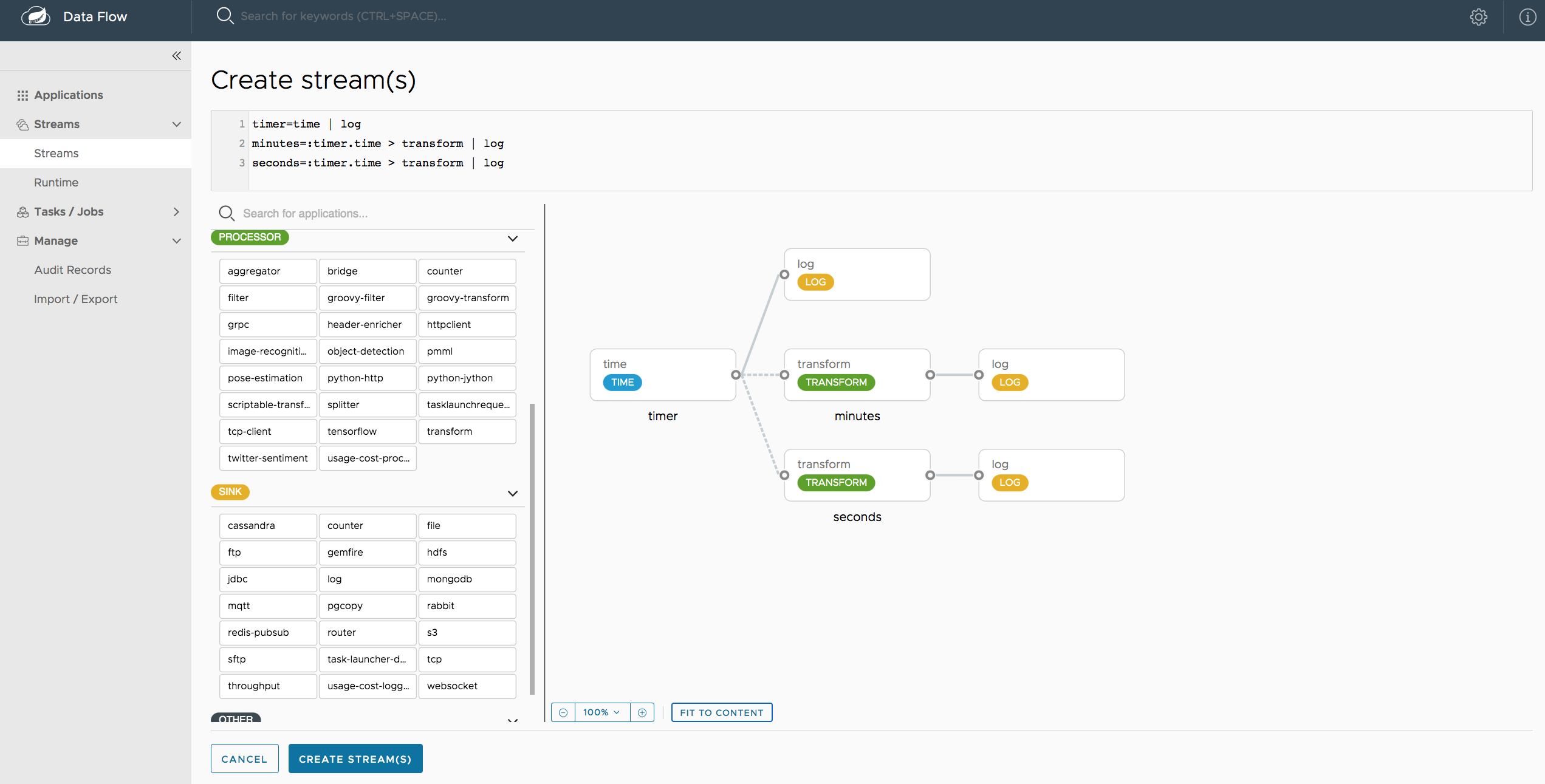

23. Creating a Stream

The Spring Cloud Data Flow Server exposes a full RESTful API for managing the lifecycle of stream definitions, but the easiest way to use is it is via the Spring Cloud Data Flow shell. Start the shell as described in the Getting Started section.

New streams are created with the help of stream definitions. The definitions are built from a simple DSL. For example, let’s walk through what happens if we execute the following shell command:

dataflow:> stream create --definition "time | log" --name ticktock

This defines a stream named ticktock based on the DSL expression time | log. The DSL uses the "pipe" symbol, |, to connect a source to a sink.

Then, to deploy the stream, execute the following shell command (alternatively, add the --deploy flag when creating the stream so that this step is not needed):

dataflow:> stream deploy --name ticktock

The Data Flow Server resolves time and log to Maven coordinates and uses those to launch the time and log applications of the stream.

2016-06-01 09:41:21.728 INFO 79016 --- [nio-9393-exec-6] o.s.c.d.spi.local.LocalAppDeployer : deploying app ticktock.log instance 0
Logs will be in /var/folders/wn/8jxm_tbd1vj28c8vj37n900m0000gn/T/spring-cloud-dataflow-912434582726479179/ticktock-1464788481708/ticktock.log
2016-06-01 09:41:21.914 INFO 79016 --- [nio-9393-exec-6] o.s.c.d.spi.local.LocalAppDeployer : deploying app ticktock.time instance 0
Logs will be in /var/folders/wn/8jxm_tbd1vj28c8vj37n900m0000gn/T/spring-cloud-dataflow-912434582726479179/ticktock-1464788481910/ticktock.time

In this example, the time source simply sends the current time as a message each second, and the log sink outputs it using the logging framework.

You can tail the stdout log (which has an "_<instance>" suffix). The log files are located within the directory displayed in the Data Flow Server’s log output, as shown above.

$ tail -f /var/folders/wn/8jxm_tbd1vj28c8vj37n900m0000gn/T/spring-cloud-dataflow-912434582726479179/ticktock-1464788481708/ticktock.log/stdout_0.log
2016-06-01 09:45:11.250 INFO 79194 --- [ kafka-binder-] log.sink : 06/01/16 09:45:11
2016-06-01 09:45:12.250 INFO 79194 --- [ kafka-binder-] log.sink : 06/01/16 09:45:12
2016-06-01 09:45:13.251 INFO 79194 --- [ kafka-binder-] log.sink : 06/01/16 09:45:13

23.1. Application properties

Application properties are the properties associated with each application in the stream. When the application is deployed, the application properties are applied to the application via command line arguments or environment variables based on the underlying deployment implementation.

23.1.1. Passing application properties when creating a stream

The following stream

dataflow:> stream create --definition "time | log" --name ticktock

can have application properties defined at the time of stream creation.

The shell command app info <appType>:<appName> displays the white-listed application properties for the application.

For more information on property whitelisting, refer to Whitelisting application properties.

Below are the white listed properties for the app time:

dataflow:> app info source:time
╔══════════════════════════════╤══════════════════════════════╤══════════════════════════════╤══════════════════════════════╗
║         Option Name          │         Description          │           Default            │             Type             ║
╠══════════════════════════════╪══════════════════════════════╪══════════════════════════════╪══════════════════════════════╣
║trigger.time-unit             │The TimeUnit to apply to delay│<none>                        │java.util.concurrent.TimeUnit ║
║                              │values.                       │                              │                              ║
║trigger.fixed-delay           │Fixed delay for periodic      │1                             │java.lang.Integer             ║
║                              │triggers.                     │                              │                              ║
║trigger.cron                  │Cron expression value for the │<none>                        │java.lang.String              ║
║                              │Cron Trigger.                 │                              │                              ║
║trigger.initial-delay         │Initial delay for periodic    │0                             │java.lang.Integer             ║
║                              │triggers.                     │                              │                              ║
║trigger.max-messages          │Maximum messages per poll, -1 │1                             │java.lang.Long                ║
║                              │means infinity.               │                              │                              ║
║trigger.date-format           │Format for the date value.    │<none>                        │java.lang.String              ║
╚══════════════════════════════╧══════════════════════════════╧══════════════════════════════╧══════════════════════════════╝

Below are the white listed properties for the app log:

dataflow:> app info sink:log
╔══════════════════════════════╤══════════════════════════════╤══════════════════════════════╤══════════════════════════════╗
║         Option Name          │         Description          │           Default            │             Type             ║
╠══════════════════════════════╪══════════════════════════════╪══════════════════════════════╪══════════════════════════════╣
║log.name                      │The name of the logger to use.│<none>                        │java.lang.String              ║
║log.level                     │The level at which to log     │<none>                        │org.springframework.integratio║
║                              │messages.                     │                              │n.handler.LoggingHandler$Level║
║log.expression                │A SpEL expression (against the│payload                       │java.lang.String              ║
║                              │incoming message) to evaluate │                              │                              ║
║                              │as the logged message.        │                              │                              ║
╚══════════════════════════════╧══════════════════════════════╧══════════════════════════════╧══════════════════════════════╝

The application properties for the time and log apps can be specified at the time of stream creation as follows:

dataflow:> stream create --definition "time --fixed-delay=5 | log --level=WARN" --name ticktock

Note that the properties fixed-delay and level defined above for the apps time and log are the 'short-form' property names provided by the shell completion.

These 'short-form' property names are applicable only for the white-listed properties and in all other cases, only fully qualified property names should be used.
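The relationship between 'short-form' and fully qualified names can be pictured with a small sketch. It is only an illustration under stated assumptions: the class ShortFormNames and its resolve method are hypothetical, and the suffix-matching rule is a simplified model, not the Data Flow implementation. The property names are taken from the app info source:time output above.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Optional;
import java.util.stream.Collectors;

// Hypothetical illustration only; not part of Spring Cloud Data Flow.
final class ShortFormNames {

    // Resolves a property name against the white-listed, fully qualified names.
    // Assumed rule: a name is accepted if it is white-listed as-is, or if
    // exactly one white-listed name ends with ".<name>".
    static Optional<String> resolve(String name, List<String> whitelisted) {
        if (whitelisted.contains(name)) {
            return Optional.of(name);
        }
        List<String> matches = whitelisted.stream()
                .filter(p -> p.endsWith("." + name))
                .collect(Collectors.toList());
        return matches.size() == 1 ? Optional.of(matches.get(0)) : Optional.empty();
    }

    public static void main(String[] args) {
        List<String> timeProps = Arrays.asList(
                "trigger.time-unit", "trigger.fixed-delay", "trigger.cron",
                "trigger.initial-delay", "trigger.max-messages", "trigger.date-format");
        // A white-listed short-form name maps to its fully qualified form.
        System.out.println(resolve("fixed-delay", timeProps));
        // A name that is not white-listed has no short form in this model.
        System.out.println(resolve("port", timeProps));
    }
}
```

In this model, a property that is not white-listed (for example server.port, unless it has been added to the whitelist) has to be written in its fully qualified form.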

23.2. Deployment properties

When deploying the stream, properties that control the deployment of the apps into the target platform are known as deployment properties.

For instance, one can specify how many instances need to be deployed for the specific application defined in the stream using the deployment property called count.

23.2.1. Passing instance count as deployment property

If you would like to have multiple instances of an application in the stream, you can include a property with the deploy command:

dataflow:> stream deploy --name ticktock --properties "app.time.count=3"

Note that count is the reserved property name used by the underlying deployer. Hence, if the application also has a custom property named count, it is not supported when specified in 'short-form' during stream deployment, as it could conflict with the instance count deployer property. Instead, count as a custom application property can be specified in its fully qualified form (example: app.foo.bar.count) during stream deployment, or it can be specified using the 'short-form' or fully qualified form during stream creation, where it is considered an app property.

23.2.2. Inline vs file reference properties

When using the Spring Cloud Data Flow Shell, there are two ways to provide deployment properties: either inline or via a file reference. Those two ways are exclusive and documented below:

- Inline properties

use the --properties shell option and list properties as a comma separated list of key=value pairs, like so:

stream deploy foo --properties "app.transform.count=2,app.transform.producer.partitionKeyExpression=payload"

- Using a file reference

use the --propertiesFile option and point it to a local Java .properties file or a .yaml or .yml file. The file should be on the file system of the machine running the shell. If using a .properties file, normal rules apply (ISO 8859-1 encoding; =, <space>, or : delimiter; etc.), although we recommend using = as the key-value pair delimiter for consistency.

stream deploy foo --propertiesFile myprops.properties

where myprops.properties contains:

app.transform.count=2
app.transform.producer.partitionKeyExpression=payload

Both of the above properties are passed as deployment properties for the stream foo.
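To make the equivalence between the two forms concrete, the following minimal sketch parses .properties text with the standard java.util.Properties class and renders it as the comma separated key=value list used by the inline form. The class DeploymentProperties and its toInline method are hypothetical names introduced for illustration; they are not part of the shell. The sketch does no escaping, so it assumes values that contain no commas.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Map;
import java.util.Properties;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical illustration only; not part of the Data Flow shell.
final class DeploymentProperties {

    // Parses .properties text and renders it as a comma separated
    // list of key=value pairs.
    static String toInline(String propertiesText) {
        Properties props = new Properties();
        try {
            // Properties.load accepts '=', ':' or whitespace as the delimiter.
            props.load(new StringReader(propertiesText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        // Sort the keys so that the output order is deterministic.
        Map<String, String> sorted = new TreeMap<>();
        for (String key : props.stringPropertyNames()) {
            sorted.put(key, props.getProperty(key));
        }
        return sorted.entrySet().stream()
                .map(e -> e.getKey() + "=" + e.getValue())
                .collect(Collectors.joining(","));
    }

    public static void main(String[] args) {
        String file = "app.transform.count=2\n"
                + "app.transform.producer.partitionKeyExpression: payload\n";
        System.out.println("stream deploy foo --properties \"" + toInline(file) + "\"");
    }
}
```

The second line of the sample input deliberately uses the : delimiter to show that both delimiters yield the same key-value pair.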

23.2.3. Passing application properties when deploying a stream

The application properties can also be specified when deploying a stream. When specified during deployment, these application properties can either be specified as 'short-form' property names (applicable for white-listed properties) or fully qualified property names. The application properties should have the prefix "app.<appName/label>".

For example, the stream

dataflow:> stream create --definition "time | log" --name ticktock

can be deployed with application properties using the 'short-form' property names:

dataflow:> stream deploy ticktock --properties "app.time.fixed-delay=5,app.log.level=ERROR"

When using the app label,

stream create ticktock --definition "a: time | b: log"

the application properties can be defined as:

stream deploy ticktock --properties "app.a.fixed-delay=4,app.b.level=ERROR"

23.2.4. Passing Spring Cloud Stream properties for the application

Spring Cloud Data Flow sets the required Spring Cloud Stream properties for the applications inside the stream. Most importantly, the spring.cloud.stream.bindings.<input/output>.destination is set internally for the apps to bind.

To override any of the Spring Cloud Stream properties, set them via deployment properties.

For example, for the below stream

dataflow:> stream create --definition "http | transform --expression=payload.getValue('hello').toUpperCase() | log" --name ticktock

if there are multiple binders available in the classpath for each of the applications and a binder is chosen for each deployment, then the stream can be deployed with the specific Spring Cloud Stream properties as:

dataflow:> stream deploy ticktock --properties "app.http.spring.cloud.stream.bindings.output.binder=kafka,app.transform.spring.cloud.stream.bindings.input.binder=kafka,app.transform.spring.cloud.stream.bindings.output.binder=rabbit,app.log.spring.cloud.stream.bindings.input.binder=rabbit"

| Overriding the destination names is not recommended as Spring Cloud Data Flow takes care of setting this internally. |

23.2.5. Passing per-binding producer consumer properties

A Spring Cloud Stream application can have producer and consumer properties set on a per-binding basis.

While Spring Cloud Data Flow supports specifying short-hand notation for per binding producer properties such as partitionKeyExpression, partitionKeyExtractorClass as described in Passing stream partition properties during stream deployment, all the supported Spring Cloud Stream producer/consumer properties can be set as Spring Cloud Stream properties for the app directly as well.

The consumer properties can be set for the inbound channel name with the prefix app.[app/label name].spring.cloud.stream.bindings.<channelName>.consumer. and the producer properties can be set for the outbound channel name with the prefix app.[app/label name].spring.cloud.stream.bindings.<channelName>.producer..
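The prefix scheme described above can be sketched as a small helper that assembles the deployment property keys. The class BindingProperties and its methods are hypothetical names introduced for illustration; only the key layout comes from the text above.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical illustration only; not part of Spring Cloud Data Flow.
final class BindingProperties {

    // Builds app.<app>.spring.cloud.stream.bindings.<channel>.<kind>.<name>,
    // where kind is "consumer" or "producer".
    private static String key(String app, String channel, String kind, String name) {
        return "app." + app + ".spring.cloud.stream.bindings." + channel + "." + kind + "." + name;
    }

    // Key for a consumer property on an inbound channel.
    static String consumer(String app, String channel, String name) {
        return key(app, channel, "consumer", name);
    }

    // Key for a producer property on an outbound channel.
    static String producer(String app, String channel, String name) {
        return key(app, channel, "producer", name);
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the properties in insertion order.
        Map<String, String> props = new LinkedHashMap<>();
        props.put(producer("time", "output", "requiredGroups"), "myGroup");
        props.put(consumer("log", "input", "concurrency"), "3");
        String inline = props.entrySet().stream()
                .map(e -> e.getKey() + "=" + e.getValue())
                .collect(Collectors.joining(","));
        System.out.println("stream deploy ticktock --properties \"" + inline + "\"");
    }
}
```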

For example, the stream

dataflow:> stream create --definition "time | log" --name ticktock

can be deployed with producer/consumer properties as:

dataflow:> stream deploy ticktock --properties "app.time.spring.cloud.stream.bindings.output.producer.requiredGroups=myGroup,app.time.spring.cloud.stream.bindings.output.producer.headerMode=raw,app.log.spring.cloud.stream.bindings.input.consumer.concurrency=3,app.log.spring.cloud.stream.bindings.input.consumer.maxAttempts=5"

The binder specific producer/consumer properties can also be specified in a similar way.

For instance

dataflow:> stream deploy ticktock --properties "app.time.spring.cloud.stream.rabbit.bindings.output.producer.autoBindDlq=true,app.log.spring.cloud.stream.rabbit.bindings.input.consumer.transacted=true"

23.2.6. Passing stream partition properties during stream deployment

A common pattern in stream processing is to partition the data as it is streamed. This entails deploying multiple instances of a message consuming app and using content-based routing so that messages with a given key (as determined at runtime) are always routed to the same app instance. You can pass the partition properties during stream deployment to declaratively configure a partitioning strategy to route each message to a specific consumer instance.
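The routing idea can be illustrated with a minimal sketch. It assumes a simple rule, the key's hash code modulo the number of consumer instances, which is an assumption made for illustration and not necessarily the strategy a given binder applies. The class PartitionSketch and its method are hypothetical.

```java
// Hypothetical illustration only; not part of Spring Cloud Data Flow.
final class PartitionSketch {

    // Maps a partition key to one of instanceCount consumer instances.
    // floorMod keeps the result in [0, instanceCount) even when the
    // hash code is negative.
    static int selectInstance(Object key, int instanceCount) {
        return Math.floorMod(key.hashCode(), instanceCount);
    }

    public static void main(String[] args) {
        int instanceCount = 3;
        String[] keys = {"alpha", "beta", "alpha", "gamma", "beta"};
        for (String key : keys) {
            // Messages with equal keys always land on the same instance.
            System.out.println(key + " -> instance " + selectInstance(key, instanceCount));
        }
    }
}
```

Because the mapping depends only on the key and the instance count, all messages with a given key reach the same instance for as long as the instance count stays the same.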

See below for examples of deploying partitioned streams:

- app.[app/label name].producer.partitionKeyExtractorClass

The class name of a PartitionKeyExtractorStrategy (default null)

- app.[app/label name].producer.partitionKeyExpression

A SpEL expression, evaluated against the message, to determine the partition key; only applies if partitionKeyExtractorClass is null. If both are null, the app is not partitioned (default null)

- app.[app/label name].producer.partitionSelectorClass

The class name of a PartitionSelectorStrategy (default null)

- app.[app/label name].producer.partitionSelectorExpression

-