AWS Lambda

The AWS adapter takes a Spring Cloud Function app and converts it to a form that can run in AWS Lambda.

In general, there are two ways to run Spring applications on AWS Lambda:

- Use the AWS Lambda adapter via Spring Cloud Function to implement a functional approach as outlined below. This is a good fit for single-responsibility APIs and event- and messaging-based systems, such as handling messages from an Amazon SQS or Amazon MQ queue, an Apache Kafka stream, or reacting to file uploads in Amazon S3.

- Run a Spring Boot Web application on AWS Lambda via the Serverless Java container project. This is a good fit for migrations of existing Spring applications to AWS Lambda or if you build sophisticated APIs with multiple API endpoints and want to maintain the familiar RestController approach. This approach is outlined in more detail in Serverless Java container for Spring Boot Web.

The following guide expects that you have a basic understanding of AWS and AWS Lambda and focuses on the additional value that Spring provides. The details on how to get started with AWS Lambda are out of scope of this document. If you want to learn more, you can navigate to basic AWS Lambda concepts or a complete Java on AWS overview.

Getting Started

One of the goals of Spring Cloud Function framework is to provide the necessary infrastructure elements to enable a simple functional application to be compatible with a particular environment (such as AWS Lambda).

In the context of Spring, a simple functional application contains beans of type Supplier, Function or Consumer.

Let’s look at the example:

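import java.util.function.Function;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;

@SpringBootApplication
public class FunctionConfiguration {

    public static void main(String[] args) {
        SpringApplication.run(FunctionConfiguration.class, args);
    }

    // Exposed to AWS Lambda through the adapter under the bean name "uppercase"
    @Bean
    public Function<String, String> uppercase() {
        return value -> value.toUpperCase();
    }
}

You can see a complete Spring Boot application with a function bean defined in it. On the surface this is just another Spring Boot app. However, once you add the Spring Cloud Function AWS Adapter to the project, it becomes a perfectly valid AWS Lambda application: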

<dependencies>
    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-function-adapter-aws</artifactId>
    </dependency>
</dependencies>

No other code or configuration is required. We’ve provided a sample project ready to be built and deployed. You can access it in the official Spring Cloud Function example repository.

You simply execute mvn clean package to generate the JAR file. All the necessary Maven plugins have already been set up to generate an appropriate AWS deployable JAR file. (You can read more details about the JAR layout in Notes on JAR Layout.)

AWS Lambda Function Handler

In contrast to traditional web applications that expose their functionality via a listener on a given HTTP port (80, 443), AWS Lambda functions are invoked at a predefined entry point, called the Lambda function handler.

We recommend using the built-in org.springframework.cloud.function.adapter.aws.FunctionInvoker handler to streamline the integration with AWS Lambda. It provides advanced features such as multi-function routing, decoupling from AWS specifics, and POJO serialization out of the box. Please refer to the AWS Request Handlers and AWS Function Routing sections to learn more.

Deployment

After building the application, you can deploy the JAR file either manually via the AWS console, the AWS Command Line Interface (CLI), or Infrastructure as Code (IaC) tools such as AWS Serverless Application Model (AWS SAM), AWS Cloud Development Kit (AWS CDK), AWS CloudFormation, or Terraform.

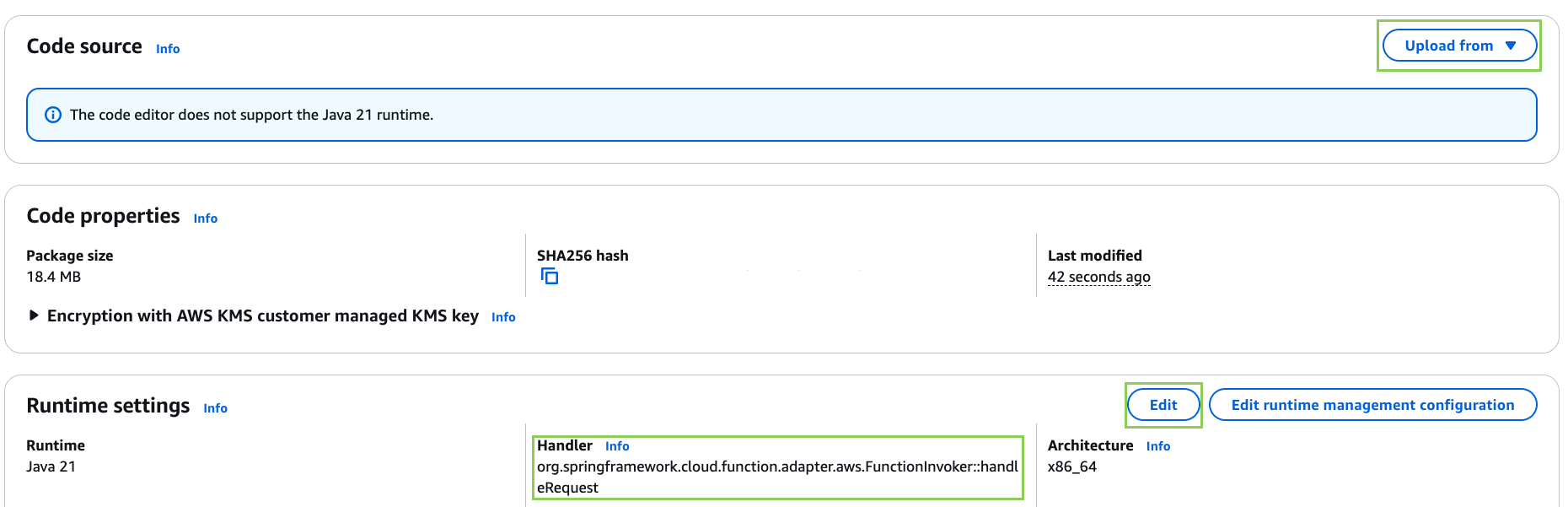

To create a Hello world Lambda function with the AWS console:

- Open the Functions page of the Lambda console.

- Choose Create function.

- Select Author from scratch.

- For Function name, enter MySpringLambdaFunction.

- For Runtime, choose Java 21.

- Choose Create function.

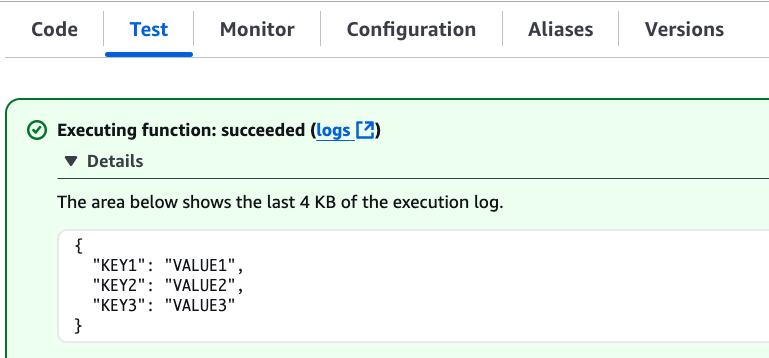

To upload your code and test the function:

- Upload the previously created JAR file, for example target/function-sample-aws-0.0.1-SNAPSHOT-aws.jar.

- Provide the entry handler method org.springframework.cloud.function.adapter.aws.FunctionInvoker::handleRequest.

- Navigate to the "Test" tab and click the "Test" button. The function should return the provided JSON payload in uppercase.

To automate your deployment with Infrastructure as Code (IaC) tools please refer to the official AWS documentation.

AWS Request Handlers

As discussed in the getting started section, AWS Lambda functions are invoked at a predefined entry point, called the Lambda function handler. In its simplest form this can be a Java method reference. In the above example that would be com.my.package.FunctionConfiguration::uppercase. This configuration is needed to advise AWS Lambda which Java method to call in the provided JAR.

When a Lambda function is invoked, it passes an additional request payload and context object to this handler method. The request payload varies based on the AWS service (Amazon API Gateway, Amazon S3, Amazon SQS, Apache Kafka etc.) that triggered the function. The context object provides additional information about the Lambda function, the invocation and the environment, for example a unique request id (see also Java context in the official documentation).

AWS provides predefined handler interfaces (called RequestHandler or RequestStreamHandler) to deal with payload and context objects via the aws-lambda-java-events and aws-lambda-java-core libraries.

Spring Cloud Function already implements these interfaces and provides an org.springframework.cloud.function.adapter.aws.FunctionInvoker to completely abstract your function code from the specifics of AWS Lambda. This allows you to just switch the entry point depending on which platform you run your functions on.

However, for some use cases you may want to integrate deeply with the AWS environment. For example, when your function is triggered by an Amazon S3 file upload, you might want to access specific Amazon S3 properties, or you might want to return a partial batch response when processing items from an Amazon SQS queue. In that case you can still leverage the generic org.springframework.cloud.function.adapter.aws.FunctionInvoker, but you work with the dedicated AWS objects from within your function code:

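@Bean
public Function<S3Event, String> processS3Event() {
    // Access S3-specific properties, here the key of the first uploaded object
    return event -> event.getRecords().get(0).getS3().getObject().getKey();
}

@Bean
public Function<SQSEvent, SQSBatchResponse> processSQSEvent() {
    // Report no failed items; add BatchItemFailure entries for messages that fail
    return event -> new SQSBatchResponse(List.of());
}

Type Conversion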

Another benefit of leveraging the built-in FunctionInvoker is that Spring Cloud Function will attempt to transparently handle type conversion between the raw input stream and types declared by your function.

For example, if your function signature is Function<Foo, Bar> it will attempt to convert the incoming stream event to an instance of Foo. This is especially helpful in API-triggered Lambda functions where the request body represents a business object and is not tied to AWS specifics.

If the event type is not known or cannot be determined (e.g., Function<?, ?>), Spring Cloud Function will attempt to convert an incoming stream event to a generic Map.

Raw Input

There are times when you may want to have access to the raw input. In this case all you need is to declare your function signature to accept InputStream, for example Function<InputStream, ?>.

If specified, Spring Cloud Function will not attempt any conversion and will pass the raw input directly to the function.
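As a minimal sketch of such a function, the following plain Java example declares a Function<InputStream, String> that decodes the untouched bytes itself. It leaves out the Spring wiring, and the class name RawInputExample and the uppercase logic are illustrative assumptions, not part of the adapter:

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.Locale;
import java.util.function.Function;

// Hypothetical example class; in a real project the function would be exposed as a @Bean.
class RawInputExample {

    // Receives the raw stream and decodes it manually instead of relying on type conversion.
    static Function<InputStream, String> rawUppercase() {
        return input -> {
            try {
                String body = new String(input.readAllBytes(), StandardCharsets.UTF_8);
                return body.toUpperCase(Locale.ROOT);
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        };
    }

    public static void main(String[] args) {
        // Simulates a raw JSON string event as it would arrive on the stream.
        InputStream event = new ByteArrayInputStream("\"hello\"".getBytes(StandardCharsets.UTF_8));
        System.out.println(rawUppercase().apply(event));
    }
}
```

Because the function sees the bytes exactly as delivered, any JSON quoting or envelope structure in the event stays in the payload and has to be handled by your own code.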

AWS Function Routing

One of the core features of Spring Cloud Function is routing. This capability allows you to have one special Java method (acting as a Lambda function handler) to delegate to other internal methods. You have already seen this in action when the generic FunctionInvoker automatically routed the requests to your uppercase function in the Getting Started section.

By default, if your app has more than one @Bean of type Function, Consumer, or Supplier, they are extracted from the Spring Cloud FunctionCatalog and the framework attempts to find a default by searching first for Function, then Consumer, and finally Supplier. These default routing capabilities are needed because FunctionInvoker cannot determine which function to bind, so it defaults internally to RoutingFunction. We recommend providing additional routing instructions using one of several mechanisms (see sample for more details).
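The search order described above can be illustrated with a small plain Java sketch. This is a simplified model, not the framework's FunctionCatalog code: the class DefaultFunctionLookup, the findDefault method, and the map of named beans are all hypothetical stand-ins.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.Supplier;

// Hypothetical model of the default lookup; the real logic lives inside Spring Cloud Function.
class DefaultFunctionLookup {

    // Search order from the text: Function first, then Consumer, finally Supplier.
    private static final List<Class<?>> SEARCH_ORDER =
            List.of(Function.class, Consumer.class, Supplier.class);

    // Returns the name of the first bean matching the highest-priority type.
    static Optional<String> findDefault(Map<String, Object> beans) {
        for (Class<?> type : SEARCH_ORDER) {
            for (Map.Entry<String, Object> entry : beans.entrySet()) {
                if (type.isInstance(entry.getValue())) {
                    return Optional.of(entry.getKey());
                }
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        Map<String, Object> beans = new LinkedHashMap<>();
        beans.put("log", (Consumer<String>) System.out::println);
        beans.put("uppercase", (Function<String, String>) String::toUpperCase);
        // The Function wins even though the Consumer was registered first.
        System.out.println(findDefault(beans).orElse("none"));
    }
}
```

Relying on this implicit default makes the deployed behavior depend on which beans happen to exist, which is one reason explicit routing instructions are preferable.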

The right routing mechanism depends on your preference to deploy your Spring Cloud Function project as a single or multiple Lambda functions.

Single Function vs. Multiple Functions

If you implement multiple Java methods in the same Spring Cloud Function project, for example uppercase and lowercase, you either deploy two separate Lambda functions with static routing information or you provide a dynamic routing method that decides which method to call during runtime. Let’s look at both approaches.

- Deploying two separate AWS Lambda functions makes sense if you have different scaling, configuration or permission requirements per function. For example, if you create two Java methods readObjectFromAmazonS3 and writeToAmazonDynamoDB in the same Spring Cloud Function project, you might want to create two separate Lambda functions. This is because they need different permissions to talk to either S3 or DynamoDB, or because their load patterns and memory configurations vary widely. In general, this approach is also recommended for messaging-based applications where you read from a stream or a queue, since you have a dedicated configuration per Lambda event source mapping.

- A single Lambda function is a valid approach when multiple Java methods share the same permission set or provide a cohesive business functionality. For example, a CRUD-based Spring Cloud Function project with createPet, updatePet, readPet and deletePet methods that all talk to the same DynamoDB table and have a similar usage pattern. Using a single Lambda function will improve deployment simplicity, cohesion and code reuse for shared classes (PetEntity). In addition, it can reduce cold starts between sequential invocations because a readPet followed by an updatePet will most likely hit an already running Lambda execution environment. When you build more sophisticated APIs, however, or you want to leverage a @RestController approach, you may also want to evaluate the Serverless Java container for Spring Boot Web option.

If you favor the first approach you can also create two separate Spring Cloud Function projects and deploy them individually. This can be beneficial if different teams are responsible for maintaining and deploying the functions. However, in that case you need to deal with sharing cross-cutting concerns such as helper methods or entity classes between them. In general, we advise applying the same software modularity principles to your functional projects as you do for traditional web-based applications. For additional information on how to choose the right approach you can refer to Comparing design approaches for serverless microservices.

After the decision has been made you can benefit from the following routing mechanisms.

Routing for multiple Lambda functions

If you have decided to deploy your single Spring Cloud Function project (JAR) to multiple Lambda functions you need to provide a hint on which specific method to call, for example uppercase or lowercase. You can use AWS Lambda environment variables to provide the routing instructions.

Note that AWS does not allow dots . and/or hyphens - in the name of the environment variable. You can benefit from Spring Boot support and simply substitute dots with underscores and hyphens with camel case. So for example spring.cloud.function.definition becomes spring_cloud_function_definition and spring.cloud.function.routing-expression becomes spring_cloud_function_routingExpression.
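The naming rule above can be sketched as a small helper. The class EnvVarNames and the method toEnvVarName are hypothetical names introduced for illustration. The sketch only covers the two substitutions mentioned here (dots to underscores, hyphens to camel case) and is not Spring Boot's own binding logic:

```java
// Hypothetical helper that derives a Lambda-compatible environment variable name
// from a Spring property name, following the substitution rule described above.
class EnvVarNames {

    static String toEnvVarName(String property) {
        StringBuilder out = new StringBuilder();
        boolean upperNext = false;
        for (char c : property.toCharArray()) {
            if (c == '.') {
                out.append('_');            // dots become underscores
            } else if (c == '-') {
                upperNext = true;           // drop the hyphen, capitalize the next letter
            } else if (upperNext) {
                out.append(Character.toUpperCase(c));
                upperNext = false;
            } else {
                out.append(c);
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(toEnvVarName("spring.cloud.function.definition"));
        System.out.println(toEnvVarName("spring.cloud.function.routing-expression"));
    }
}
```

Running the example prints spring_cloud_function_definition and spring_cloud_function_routingExpression, matching the two names given above.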

Therefore, a configuration for a single Spring Cloud project with two methods deployed to separate AWS Lambda functions can look like this:

@SpringBootApplication
public class FunctionConfiguration {

    public static void main(String[] args) {
        SpringApplication.run(FunctionConfiguration.class, args);
    }

    @Bean
    public Function<String, String> uppercase() {
        return value -> value.toUpperCase();
    }

    @Bean
    public Function<String, String> lowercase() {
        return value -> value.toLowerCase();
    }

}

AWSTemplateFormatVersion: '2010-09-09'
Transform: AWS::Serverless-2016-10-31

Resources:
  MyUpperCaseLambda:
    Type: AWS::Serverless::Function
    Properties:
      Handler: org.springframework.cloud.function.adapter.aws.FunctionInvoker
      Runtime: java21
      MemorySize: 512
      CodeUri: target/function-sample-aws-0.0.1-SNAPSHOT-aws.jar
      Environment:
        Variables:
          spring_cloud_function_definition: uppercase

  MyLowerCaseLambda:
    Type: AWS::Serverless::Function
    Properties:
      Handler: org.springframework.cloud.function.adapter.aws.FunctionInvoker
      Runtime: java21
      MemorySize: 512
      CodeUri: target/function-sample-aws-0.0.1-SNAPSHOT-aws.jar
      Environment:
        Variables:
          spring_cloud_function_definition: lowercase

You may ask: why not use the Lambda function handler and point the entry method directly to uppercase and lowercase? In a Spring Cloud Function project it is recommended to use the built-in FunctionInvoker as outlined in AWS Request Handlers. Therefore, we provide the routing definition via the environment variables.

Routing within a single Lambda function

If you have decided to deploy your Spring Cloud Function project with multiple methods (uppercase or lowercase) to a single Lambda function you need a more dynamic routing approach. Since application.properties and environment variables are defined at build or deployment time you can’t use them for a single function scenario. In this case you can leverage MessagingRoutingCallback or Message Headers as outlined in the Spring Cloud Function Routing section.
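To illustrate the idea of per-request routing, here is a plain Java sketch that picks the target function from a header on each invocation. It does not use the actual MessageRoutingCallback API. The class HeaderRoutingSketch, the route method, and the use of a plain Map for headers are assumptions made for this example, and the header name is assumed to mirror the spring.cloud.function.definition property:

```java
import java.util.Locale;
import java.util.Map;
import java.util.function.Function;

// Hypothetical model of header-based routing inside a single Lambda function.
class HeaderRoutingSketch {

    // Assumption: the routing header is named like the corresponding property.
    static final String ROUTING_HEADER = "spring.cloud.function.definition";

    static final Map<String, Function<String, String>> FUNCTIONS = Map.of(
            "uppercase", value -> value.toUpperCase(Locale.ROOT),
            "lowercase", value -> value.toLowerCase(Locale.ROOT));

    // Decides at invocation time which function handles the payload.
    static String route(Map<String, String> headers, String payload) {
        String name = headers.get(ROUTING_HEADER);
        Function<String, String> target = (name == null) ? null : FUNCTIONS.get(name);
        if (target == null) {
            throw new IllegalArgumentException("No function for header value: " + name);
        }
        return target.apply(payload);
    }

    public static void main(String[] args) {
        System.out.println(route(Map.of(ROUTING_HEADER, "uppercase"), "Hello"));
        System.out.println(route(Map.of(ROUTING_HEADER, "lowercase"), "Hello"));
    }
}
```

The point of the sketch is that the decision is taken per invocation from request data, which is what static properties and environment variables cannot provide.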

More details are available in the provided sample.

Performance considerations

A core characteristic of Serverless Functions is the ability to scale to zero and handle sudden traffic spikes. To handle requests AWS Lambda spins up new execution environments. These environments need to be initialized, your code needs to be downloaded and a JVM + your application needs to start. This is also known as a cold-start. To reduce this cold-start time you can rely on the following mechanisms to optimize performance.

- Leverage AWS Lambda SnapStart to start your Lambda function from pre-initialized snapshots.

- Tune the memory configuration via AWS Lambda Power Tuning to find the best tradeoff between performance and cost.

- Follow AWS SDK best practices such as defining SDK clients outside the handler code, or leverage more advanced priming techniques.

- Implement additional Spring mechanisms to reduce Spring startup and initialization time, such as functional bean registration.

Please refer to the official guidance for more information.
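The advice to define SDK clients outside the handler code can be sketched in plain Java as follows. ExpensiveClient is a hypothetical stand-in for a real SDK client and handle stands in for the handler method. The counter exists only to make the effect visible:

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical sketch: expensive resources are created once per execution
// environment (at class initialization), not on every invocation.
class InitOutsideHandler {

    static final AtomicInteger CLIENTS_CREATED = new AtomicInteger();

    // Stand-in for an SDK client whose construction is costly.
    static final class ExpensiveClient {
        ExpensiveClient() {
            CLIENTS_CREATED.incrementAndGet();
        }

        String call(String input) {
            return "processed:" + input;
        }
    }

    // Defined outside the handler: initialized during the cold start only.
    private static final ExpensiveClient CLIENT = new ExpensiveClient();

    // Stand-in for the handler method: reuses the shared client on warm invocations.
    static String handle(String event) {
        return CLIENT.call(event);
    }

    public static void main(String[] args) {
        handle("a");
        handle("b");
        System.out.println("clients created: " + CLIENTS_CREATED.get());
    }
}
```

Moving the construction into handle would create a new client per request and repeat the initialization cost on every warm invocation.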

GraalVM Native Image

Spring Cloud Function provides GraalVM Native Image support for functions running on AWS Lambda. Since GraalVM native images do not run on a traditional Java Virtual Machine (JVM) you must deploy your native Spring Cloud Function to an AWS Lambda custom runtime. The most notable difference is that you no longer provide a JAR file but the native-image and a bootstrap file with starting instructions bundled in a zip package:

lambda-custom-runtime.zip
  |-- bootstrap
  |-- function-sample-aws-native

Bootstrap file:

#!/bin/sh

cd ${LAMBDA_TASK_ROOT:-.}

./function-sample-aws-native

You can find a full GraalVM native-image example with Spring Cloud Function on GitHub. For a deep dive you can also refer to the GraalVM modules of the Java on AWS Lambda workshop.

Custom Runtime

Lambda focuses on providing stable long-term support (LTS) Java runtime versions. The official Lambda runtimes are built around a combination of operating system, programming language, and software libraries that are subject to maintenance and security updates. For example, the Lambda runtime for Java supports the LTS versions such as Java 17 Corretto and Java 21 Corretto. You can find the full list here. There is no provided runtime for non-LTS versions like Java 22, Java 23 or Java 24.

To use other language versions, JVMs or GraalVM native-images, Lambda allows you to create custom runtimes. Custom runtimes allow you to provide and configure your own runtime for running your application code. Spring Cloud Function provides all the necessary components to make this easy.

From the code perspective the application should not look different from any other Spring Cloud Function application.

The only thing you need to do is to provide a bootstrap script in the root of your ZIP/JAR that runs the Spring Boot application, and select "Custom Runtime" when creating a function in AWS.

Here is an example 'bootstrap' file:

#!/bin/sh

cd ${LAMBDA_TASK_ROOT:-.}

java -Dspring.main.web-application-type=none -Dspring.jmx.enabled=false \
  -noverify -XX:TieredStopAtLevel=1 -Xss256K -XX:MaxMetaspaceSize=128M \
  -Djava.security.egd=file:/dev/./urandom \
  -cp .:`echo lib/*.jar | tr ' ' :` com.example.LambdaApplication

The com.example.LambdaApplication class represents your application, which contains the function beans.

Set the handler name in AWS to the name of your function. You can use function composition here as well (e.g., uppercase|reverse).
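To make the composition syntax concrete, the following plain Java sketch resolves a definition such as uppercase|reverse into a chain of functions. It models the idea only. CompositionSketch, compose, and the lookup map are hypothetical, and the framework's own resolution is more general:

```java
import java.util.Locale;
import java.util.Map;
import java.util.function.Function;

// Hypothetical model of resolving a piped function definition into one composed function.
class CompositionSketch {

    static final Map<String, Function<String, String>> FUNCTIONS = Map.of(
            "uppercase", value -> value.toUpperCase(Locale.ROOT),
            "reverse", value -> new StringBuilder(value).reverse().toString());

    // Splits the definition on '|' and chains the functions from left to right.
    static Function<String, String> compose(String definition) {
        Function<String, String> result = Function.identity();
        for (String name : definition.split("\\|")) {
            Function<String, String> next = FUNCTIONS.get(name.trim());
            if (next == null) {
                throw new IllegalArgumentException("Unknown function: " + name);
            }
            result = result.andThen(next);
        }
        return result;
    }

    public static void main(String[] args) {
        // uppercase runs first, then reverse: "hello" -> "HELLO" -> "OLLEH"
        System.out.println(compose("uppercase|reverse").apply("hello"));
    }
}
```

The output of each function becomes the input of the next, so the order of names in the handler value matters.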

Once you upload your ZIP/ JAR to AWS your function will run in a custom runtime.

We provide a sample project where you can also see how to configure your POM to properly generate the ZIP file.

The functional bean definition style works for custom runtimes as well, and is faster than the @Bean style. A custom runtime can start up much quicker even than a functional bean implementation of a Java lambda; it depends mostly on the number of classes you need to load at runtime. Spring doesn’t do very much here, so you can reduce the cold start time by only using primitive types in your function, for instance, and not doing any work in custom @PostConstruct initializers.

AWS Function Routing with Custom Runtime

When using a custom runtime, function routing works the same way. All you need to do is specify functionRouter as the AWS handler, the same way you would use the name of the function as the handler.

Deploying Lambda functions as container images

In contrast to JAR or ZIP based deployments you can also deploy your Lambda functions as a container image via an image registry. For additional details please refer to the official AWS Lambda documentation.

When deploying container images in a way similar to the one described here, it is important to remember to set an environment variable DEFAULT_HANDLER with the name of the function.

For example, for the function bean shown below the DEFAULT_HANDLER value would be readMessageFromSQS.

@Bean
public Consumer<Message<SQSMessageEvent>> readMessageFromSQS() {
    return incomingMessage -> {
        // ...
    };
}

Also, remember to ensure that spring_cloud_function_web_export_enabled is set to false. It is true by default.

Notes on JAR Layout

You don’t need the Spring Cloud Function Web or Stream adapter at runtime in Lambda, so you might need to exclude those before you create the JAR you send to AWS. A Lambda application has to be shaded, but a Spring Boot standalone application does not, so you can run the same app using 2 separate jars (as per the sample). The sample app creates 2 jar files, one with an aws classifier for deploying in Lambda, and one executable (thin) jar that includes spring-cloud-function-web at runtime. Spring Cloud Function will try to locate a "main class" for you from the JAR file manifest, using the Start-Class attribute (which will be added for you by the Spring Boot tooling if you use the starter parent). If there is no Start-Class in your manifest you can use an environment variable or system property MAIN_CLASS when you deploy the function to AWS.

If you are not using the functional bean definitions but relying on Spring Boot’s auto-configuration, and are not depending on spring-boot-starter-parent, then additional transformers must be configured as part of the maven-shade-plugin execution.

<plugin>
    <groupId>org.apache.maven.plugins</groupId>
    <artifactId>maven-shade-plugin</artifactId>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-maven-plugin</artifactId>
            <version>3.4.2</version>
        </dependency>
    </dependencies>
    <executions>
        <execution>
            <goals>
                <goal>shade</goal>
            </goals>
            <configuration>
                <createDependencyReducedPom>false</createDependencyReducedPom>
                <shadedArtifactAttached>true</shadedArtifactAttached>
                <shadedClassifierName>aws</shadedClassifierName>
                <transformers>
                    <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                        <resource>META-INF/spring.handlers</resource>
                    </transformer>
                    <transformer implementation="org.springframework.boot.maven.PropertiesMergingResourceTransformer">
                        <resource>META-INF/spring.factories</resource>
                    </transformer>
                    <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                        <resource>META-INF/spring/org.springframework.boot.autoconfigure.AutoConfiguration.imports</resource>
                    </transformer>
                    <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                        <resource>META-INF/spring/org.springframework.boot.actuate.autoconfigure.web.ManagementContextConfiguration.imports</resource>
                    </transformer>
                    <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                        <resource>META-INF/spring.schemas</resource>
                    </transformer>
                    <transformer implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                        <resource>META-INF/spring.components</resource>
                    </transformer>
                </transformers>
            </configuration>
        </execution>
    </executions>
</plugin>

Build file setup

In order to run Spring Cloud Function applications on AWS Lambda, you can leverage Maven or Gradle plugins.

Maven

In order to use the adapter plugin for Maven, add the plugin dependency to your pom.xml file:

<dependencies>
    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-function-adapter-aws</artifactId>
    </dependency>
</dependencies>

As pointed out in the Notes on JAR Layout, you will need a shaded jar in order to upload it to AWS Lambda. You can use the Maven Shade Plugin for that. An example of the setup can be found above.

You can use the Spring Boot Maven Plugin to generate the thin jar.

<plugin>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-maven-plugin</artifactId>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot.experimental</groupId>
            <artifactId>spring-boot-thin-layout</artifactId>
            <version>${wrapper.version}</version>
        </dependency>
    </dependencies>
</plugin>

You can find the entire sample pom.xml file for deploying Spring Cloud Function applications to AWS Lambda with Maven here.

Gradle

In order to use the adapter plugin for Gradle, add the dependency to your build.gradle file:

dependencies {
    implementation("org.springframework.cloud:spring-cloud-function-adapter-aws:${version}")
}

As pointed out in Notes on JAR Layout, you will need a shaded jar in order to upload it to AWS Lambda. You can use the Gradle Shadow Plugin for that.

You can use the Spring Boot Gradle Plugin and Spring Boot Thin Gradle Plugin to generate the thin jar.

Below is a complete build.gradle file:

plugins {
    id 'java'
    id 'org.springframework.boot' version '3.4.2'
    id 'io.spring.dependency-management' version '1.1.3'
    id 'com.github.johnrengelman.shadow' version '8.1.1'
    id 'maven-publish'
    id 'org.springframework.boot.experimental.thin-launcher' version "1.0.31.RELEASE"
}

group = 'com.example'
version = '0.0.1-SNAPSHOT'

java {
    sourceCompatibility = '17'
}

repositories {
    mavenCentral()
    mavenLocal()
    maven { url 'https://repo.spring.io/milestone' }
}

ext {
    set('springCloudVersion', "2024.0.0")
}

assemble.dependsOn = [thinJar, shadowJar]

publishing {
    publications {
        maven(MavenPublication) {
            from components.java
            versionMapping {
                usage('java-api') {
                    fromResolutionOf('runtimeClasspath')
                }
                usage('java-runtime') {
                    fromResolutionResult()
                }
            }
        }
    }
}

shadowJar.mustRunAfter thinJar

import com.github.jengelman.gradle.plugins.shadow.transformers.*

shadowJar {
    archiveClassifier = 'aws'
    manifest {
        inheritFrom(project.tasks.thinJar.manifest)
    }
    // Required for Spring
    mergeServiceFiles()
    append 'META-INF/spring.handlers'
    append 'META-INF/spring.schemas'
    append 'META-INF/spring.tooling'
    append 'META-INF/spring/org.springframework.boot.autoconfigure.AutoConfiguration.imports'
    append 'META-INF/spring/org.springframework.boot.actuate.autoconfigure.web.ManagementContextConfiguration.imports'
    transform(PropertiesFileTransformer) {
        paths = ['META-INF/spring.factories']
        mergeStrategy = "append"
    }
}

dependencies {
    implementation 'org.springframework.boot:spring-boot-starter'
    implementation 'org.springframework.cloud:spring-cloud-function-adapter-aws'
    implementation 'org.springframework.cloud:spring-cloud-function-context'
    testImplementation 'org.springframework.boot:spring-boot-starter-test'
}

dependencyManagement {
    imports {
        mavenBom "org.springframework.cloud:spring-cloud-dependencies:${springCloudVersion}"
    }
}

tasks.named('test') {
    useJUnitPlatform()
}

You can find the entire sample build.gradle file for deploying Spring Cloud Function applications to AWS Lambda with Gradle here.

Serverless Java container for Spring Boot Web

You can use the aws-serverless-java-container library to run Spring Boot 3 applications in AWS Lambda. This is a good fit for migrations of existing Spring applications to AWS Lambda or if you build sophisticated APIs with multiple API endpoints and want to maintain the familiar RestController approach. The following section provides a high-level overview of the process. Please refer to the official sample code for additional information.

- Import the Serverless Java Container library to your existing Spring Boot 3 web app:

  <dependency>
      <groupId>com.amazonaws.serverless</groupId>
      <artifactId>aws-serverless-java-container-springboot3</artifactId>
      <version>2.1.2</version>
  </dependency>

- Use the built-in Lambda function handler that serves as an entrypoint:

  com.amazonaws.serverless.proxy.spring.SpringDelegatingLambdaContainerHandler

- Configure an environment variable named MAIN_CLASS to let the generic handler know where to find your original application main class. Usually that is the class annotated with @SpringBootApplication.

  MAIN_CLASS = com.my.package.MySpringBootApplication

Below you can see an example deployment configuration:

AWSTemplateFormatVersion: '2010-09-09'
Transform: AWS::Serverless-2016-10-31

Resources:
  MySpringBootLambdaFunction:
    Type: AWS::Serverless::Function
    Properties:
      Handler: com.amazonaws.serverless.proxy.spring.SpringDelegatingLambdaContainerHandler
      Runtime: java21
      MemorySize: 1024
      CodeUri: target/lambda-spring-boot-app-0.0.1-SNAPSHOT.jar # Must be a shaded JAR
      Environment:
        Variables:
          MAIN_CLASS: com.amazonaws.serverless.sample.springboot3.Application # Class annotated with @SpringBootApplication

Please find all the examples including GraalVM native-image here.