Bedrock Anthropic 2 Chat


Following Amazon Bedrock’s recommendations, Spring AI is transitioning to the Amazon Bedrock Converse API for all of its chat conversation implementations.

While the existing


| The Anthropic 2 Chat API is deprecated and replaced by the new Anthropic Claude 3 Message API. Please use the Anthropic Claude 3 Message API for new projects. |

Anthropic’s Claude is an AI assistant based on Anthropic’s research into training helpful, honest, and harmless AI systems. The Claude model has the following high-level features:

- 200k Token Context Window: Claude boasts a generous token capacity of 200,000, making it ideal for handling extensive information in applications like technical documentation, codebases, and literary works.
- Supported Tasks: Claude’s versatility spans tasks such as summarization, Q&A, trend forecasting, and document comparisons, enabling a wide range of applications from dialogues to content generation.
- AI Safety Features: Built on Anthropic’s safety research, Claude prioritizes helpfulness, honesty, and harmlessness in its interactions, reducing brand risk and ensuring responsible AI behavior.

The AWS Bedrock Anthropic Model Page and the Amazon Bedrock User Guide contain detailed information on how to use the AWS-hosted model.

| Anthropic’s Claude 2 and 3 models are also available directly on Anthropic’s own cloud platform. Spring AI provides a dedicated Anthropic Claude client to access it. |

Prerequisites

Refer to the Spring AI documentation on Amazon Bedrock for setting up API access.

Add Repositories and BOM

Spring AI artifacts are published in Spring Milestone and Snapshot repositories. Refer to the Repositories section to add these repositories to your build system.

To help with dependency management, Spring AI provides a BOM (bill of materials) to ensure that a consistent version of Spring AI is used throughout the entire project. Refer to the Dependency Management section to add the Spring AI BOM to your build system.

Auto-configuration

Add the spring-ai-bedrock-ai-spring-boot-starter dependency to your project’s Maven pom.xml file:

```xml
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-bedrock-ai-spring-boot-starter</artifactId>
</dependency>
```

or to your Gradle build.gradle build file:

```groovy
dependencies {
    implementation 'org.springframework.ai:spring-ai-bedrock-ai-spring-boot-starter'
}
```

| Refer to the Dependency Management section to add the Spring AI BOM to your build file. |

Enable Anthropic Chat

By default the Anthropic model is disabled.

To enable it, set the spring.ai.bedrock.anthropic.chat.enabled property to true.
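Spring Boot can also bind this property from an environment variable. Its relaxed-binding rules derive the variable name from the property name by replacing dots with underscores, removing dashes, and converting to uppercase. The minimal sketch below applies those rules; EnvVarNames and toEnvVar are hypothetical helpers, not part of Spring AI or Spring Boot, and list indices such as [0] are not handled.

```java
import java.util.Locale;

// Hypothetical helper applying Spring Boot's documented rules for turning a
// canonical property name into an environment variable name.
// List indices such as "[0]" are not handled in this sketch.
final class EnvVarNames {

    private EnvVarNames() {
    }

    static String toEnvVar(String propertyName) {
        return propertyName
                .replace('.', '_')          // dots become underscores
                .replace("-", "")           // dashes are removed
                .toUpperCase(Locale.ROOT);  // everything is upper-cased
    }
}
```

For example, spring.ai.bedrock.anthropic.chat.enabled maps to SPRING_AI_BEDROCK_ANTHROPIC_CHAT_ENABLED, and spring.ai.bedrock.aws.access-key maps to SPRING_AI_BEDROCK_AWS_ACCESSKEY.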

Exporting an environment variable is one way to set this configuration property:

```shell
export SPRING_AI_BEDROCK_ANTHROPIC_CHAT_ENABLED=true
```

Chat Properties

The prefix spring.ai.bedrock.aws is the property prefix to configure the connection to AWS Bedrock.

| Property | Description | Default |
|---|---|---|
| spring.ai.bedrock.aws.region | AWS region to use. | us-east-1 |
| spring.ai.bedrock.aws.timeout | AWS timeout to use. | 5m |
| spring.ai.bedrock.aws.access-key | AWS access key. | - |
| spring.ai.bedrock.aws.secret-key | AWS secret key. | - |
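Assuming spring.ai.bedrock.aws.timeout is bound to a java.time.Duration, as its 5m default suggests, it accepts Spring Boot's simple duration format: a whole number followed by an optional unit suffix (ns, us, ms, s, m, h, d), with milliseconds used by default when the suffix is omitted. The hypothetical SimpleDurations parser below mirrors that format to show how values such as 5m or 1000ms are interpreted; Spring Boot's own converter additionally accepts ISO-8601 values such as PT5M.

```java
import java.time.Duration;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical, simplified parser for Spring Boot's "simple" duration style,
// e.g. "5m" or "1000ms". ISO-8601 values such as "PT5M" are not handled here.
final class SimpleDurations {

    // A whole number followed by an optional unit suffix.
    private static final Pattern SIMPLE = Pattern.compile("^(\\d+)(ns|us|ms|s|m|h|d)?$");

    private SimpleDurations() {
    }

    static Duration parse(String value) {
        Matcher matcher = SIMPLE.matcher(value.trim());
        if (!matcher.matches()) {
            throw new IllegalArgumentException("Unsupported duration: " + value);
        }
        long amount = Long.parseLong(matcher.group(1));
        // Without a suffix, milliseconds are assumed (Spring Boot's default unit).
        String unit = matcher.group(2) == null ? "ms" : matcher.group(2);
        return switch (unit) {
            case "ns" -> Duration.ofNanos(amount);
            case "us" -> Duration.ofNanos(Math.multiplyExact(amount, 1_000L));
            case "ms" -> Duration.ofMillis(amount);
            case "s" -> Duration.ofSeconds(amount);
            case "m" -> Duration.ofMinutes(amount);
            case "h" -> Duration.ofHours(amount);
            case "d" -> Duration.ofDays(amount);
            default -> throw new IllegalArgumentException("Unknown unit: " + unit);
        };
    }
}
```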

The prefix spring.ai.bedrock.anthropic.chat is the property prefix that configures the chat model implementation for Claude.

| Property | Description | Default |
|---|---|---|
| spring.ai.bedrock.anthropic.chat.enabled | Enable the Bedrock Anthropic chat model. Disabled by default. | false |
| spring.ai.bedrock.anthropic.chat.model | The model ID to use. See AnthropicChatBedrockApi.AnthropicModel for the supported models. | anthropic.claude-v2 |
| spring.ai.bedrock.anthropic.chat.options.temperature | Controls the randomness of the output. Values can range over [0.0,1.0]. | 0.8 |
| spring.ai.bedrock.anthropic.chat.options.topP | The maximum cumulative probability of tokens to consider when sampling. | AWS Bedrock default |
| spring.ai.bedrock.anthropic.chat.options.topK | The number of token choices the model considers when generating the next token. | AWS Bedrock default |
| spring.ai.bedrock.anthropic.chat.options.stopSequences | Up to four sequences that the model recognizes. After a stop sequence, the model stops generating further tokens. The returned text doesn’t contain the stop sequence. | 10 |
| spring.ai.bedrock.anthropic.chat.options.anthropicVersion | The Anthropic API version to use. | bedrock-2023-05-31 |
| spring.ai.bedrock.anthropic.chat.options.maxTokensToSample | The maximum number of tokens to use in the generated response. Note that the models may stop before reaching this maximum. This parameter only specifies the absolute maximum number of tokens to generate. We recommend a limit of 4,000 tokens for optimal performance. | 500 |
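The stopSequences behavior described in the table can be illustrated with a small simulation: generation halts at the earliest stop sequence, and the returned text excludes that sequence. The hypothetical StopSequences.truncate helper below applies the rule to an already generated string. On Bedrock the model itself stops generating, so this is only a sketch of the semantics, not something an application needs to call.

```java
import java.util.List;

// Hypothetical simulation of the stopSequences rule: keep the text before the
// earliest stop sequence and drop the stop sequence itself.
final class StopSequences {

    private StopSequences() {
    }

    static String truncate(String text, List<String> stopSequences) {
        int cut = text.length();
        for (String stop : stopSequences) {
            if (stop.isEmpty()) {
                continue; // an empty sequence would match everywhere, so ignore it
            }
            int index = text.indexOf(stop);
            if (index >= 0 && index < cut) {
                cut = index; // remember the earliest match
            }
        }
        return text.substring(0, cut);
    }
}
```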

See AnthropicChatBedrockApi.AnthropicModel for other model IDs.

Supported values are: anthropic.claude-instant-v1, anthropic.claude-v2, and anthropic.claude-v2:1.

Model ID values can also be found in the AWS Bedrock documentation for base model IDs.
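Before deploying, it can help to check configured values against the constraints listed above: a supported model ID, a temperature within [0.0, 1.0], and at most four stop sequences. ClaudeSettings below is a hypothetical record mirroring a few of these properties; it is a minimal sketch, and Spring AI may or may not perform the same checks itself.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

// Hypothetical holder for a few spring.ai.bedrock.anthropic.chat.* values.
record ClaudeSettings(String model, double temperature, List<String> stopSequences) {

    // Model IDs listed as supported on this page.
    static final Set<String> SUPPORTED_MODELS =
            Set.of("anthropic.claude-instant-v1", "anthropic.claude-v2", "anthropic.claude-v2:1");

    // Returns human-readable problems; an empty list means every check passed.
    List<String> problems() {
        List<String> problems = new ArrayList<>();
        if (!SUPPORTED_MODELS.contains(model)) {
            problems.add("unsupported model id: " + model);
        }
        if (temperature < 0.0 || temperature > 1.0) {
            problems.add("temperature must be within [0.0, 1.0]: " + temperature);
        }
        if (stopSequences.size() > 4) {
            problems.add("at most four stop sequences are allowed, got " + stopSequences.size());
        }
        return problems;
    }
}
```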

All properties prefixed with spring.ai.bedrock.anthropic.chat.options can be overridden at runtime by adding request-specific Runtime Options to the Prompt call.


Runtime Options

The AnthropicChatOptions.java provides model configurations, such as temperature, topK, topP, etc.

On start-up, the default options can be configured with the BedrockAnthropicChatModel(api, options) constructor or the spring.ai.bedrock.anthropic.chat.options.* properties.

At runtime, you can override the default options by adding new, request-specific options to the Prompt call.

For example, to override the default temperature for a specific request:

```java
ChatResponse response = chatModel.call(
    new Prompt(
        "Generate the names of 5 famous pirates.",
        AnthropicChatOptions.builder()
            .temperature(0.4)
            .build()
    ));
```

| In addition to the model-specific AnthropicChatOptions, you can use a portable ChatOptions instance, created with ChatOptionsBuilder#builder(). |
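Conceptually, a request-specific option wins whenever it is set, and the start-up default applies otherwise. The sketch below illustrates that precedence with a hypothetical OptionsSketch record in which null means "not set"; it illustrates the override rule only and is not Spring AI's internal merge code.

```java
// Hypothetical options holder; a null component means "not set".
record OptionsSketch(Double temperature, Integer topK, Double topP, Integer maxTokensToSample) {

    // Runtime values take precedence; defaults fill in whatever the request leaves unset.
    OptionsSketch overriddenBy(OptionsSketch runtime) {
        return new OptionsSketch(
                pick(runtime.temperature(), temperature),
                pick(runtime.topK(), topK),
                pick(runtime.topP(), topP),
                pick(runtime.maxTokensToSample(), maxTokensToSample));
    }

    private static <T> T pick(T runtimeValue, T defaultValue) {
        return runtimeValue != null ? runtimeValue : defaultValue;
    }
}
```

With defaults of temperature 0.8, topK 10, and maxTokensToSample 500, a request that sets only temperature 0.4 ends up with temperature 0.4, topK 10, and maxTokensToSample 500.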

Sample Controller

Create a new Spring Boot project and add the spring-ai-bedrock-ai-spring-boot-starter to your pom (or gradle) dependencies.

Add an application.properties file under the src/main/resources directory to enable and configure the Anthropic chat model:

```properties
spring.ai.bedrock.aws.region=eu-central-1
spring.ai.bedrock.aws.timeout=1000ms
spring.ai.bedrock.aws.access-key=${AWS_ACCESS_KEY_ID}
spring.ai.bedrock.aws.secret-key=${AWS_SECRET_ACCESS_KEY}
spring.ai.bedrock.anthropic.chat.enabled=true
spring.ai.bedrock.anthropic.chat.options.temperature=0.8
spring.ai.bedrock.anthropic.chat.options.top-k=15
```

Replace the region, access-key, and secret-key values with your own AWS region and credentials.
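The ${AWS_ACCESS_KEY_ID} and ${AWS_SECRET_ACCESS_KEY} values are property placeholders, which Spring resolves from its property sources, including environment variables, so the credentials never need to be written into the file. The simplified, hypothetical Placeholders.resolve helper below shows the idea with a Map standing in for the environment; it does not support Spring extras such as ${NAME:default} fallbacks or nested placeholders.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical, simplified placeholder resolver: replaces each ${NAME} with env.get(NAME).
final class Placeholders {

    private static final Pattern PLACEHOLDER = Pattern.compile("\\$\\{([A-Za-z0-9_.]+)\\}");

    private Placeholders() {
    }

    static String resolve(String value, Map<String, String> env) {
        Matcher matcher = PLACEHOLDER.matcher(value);
        StringBuilder out = new StringBuilder();
        while (matcher.find()) {
            String name = matcher.group(1);
            String replacement = env.get(name);
            if (replacement == null) {
                // Fail fast, similar in spirit to Spring's "Could not resolve placeholder" error.
                throw new IllegalArgumentException("Could not resolve placeholder '" + name + "'");
            }
            // quoteReplacement keeps '$' or '\' in secrets from being treated as group references.
            matcher.appendReplacement(out, Matcher.quoteReplacement(replacement));
        }
        matcher.appendTail(out);
        return out.toString();
    }
}
```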


With this configuration in place, Spring Boot creates a BedrockAnthropicChatModel implementation that you can inject into your classes.

Here is an example of a simple @RestController class that uses the chat model for text generation.

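```java
import java.util.Map;

import reactor.core.publisher.Flux;

import org.springframework.ai.bedrock.anthropic.BedrockAnthropicChatModel;
import org.springframework.ai.chat.messages.UserMessage;
import org.springframework.ai.chat.model.ChatResponse;
import org.springframework.ai.chat.prompt.Prompt;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class ChatController {

    private final BedrockAnthropicChatModel chatModel;

    @Autowired
    public ChatController(BedrockAnthropicChatModel chatModel) {
        this.chatModel = chatModel;
    }

    // Blocking call: returns the whole generation as {"generation": "..."}
    @GetMapping("/ai/generate")
    public Map<String, String> generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
        return Map.of("generation", this.chatModel.call(message));
    }

    // Streaming call: emits ChatResponse chunks as they arrive
    @GetMapping("/ai/generateStream")
    public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
        Prompt prompt = new Prompt(new UserMessage(message));
        return this.chatModel.stream(prompt);
    }
}
```

Manual Configuration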

The BedrockAnthropicChatModel implements the ChatModel and StreamingChatModel interfaces and uses the low-level AnthropicChatBedrockApi client to connect to the Bedrock Anthropic service.

Add the spring-ai-bedrock dependency to your project’s Maven pom.xml file:

```xml
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-bedrock</artifactId>
</dependency>
```

or to your Gradle build.gradle build file:

```groovy
dependencies {
    implementation 'org.springframework.ai:spring-ai-bedrock'
}
```

| Refer to the Dependency Management section to add the Spring AI BOM to your build file. |

Next, create a BedrockAnthropicChatModel and use it for text generation:

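```java
import java.time.Duration;

import com.fasterxml.jackson.databind.ObjectMapper;
import reactor.core.publisher.Flux;
import software.amazon.awssdk.auth.credentials.EnvironmentVariableCredentialsProvider;
import software.amazon.awssdk.regions.Region;

import org.springframework.ai.bedrock.anthropic.AnthropicChatOptions;
import org.springframework.ai.bedrock.anthropic.BedrockAnthropicChatModel;
import org.springframework.ai.bedrock.anthropic.api.AnthropicChatBedrockApi;
import org.springframework.ai.chat.model.ChatResponse;
import org.springframework.ai.chat.prompt.Prompt;

// Low-level client: Claude v2, credentials from environment variables, us-east-1, 1s timeout
AnthropicChatBedrockApi anthropicApi = new AnthropicChatBedrockApi(
        AnthropicChatBedrockApi.AnthropicModel.CLAUDE_V2.id(),
        EnvironmentVariableCredentialsProvider.create(),
        Region.US_EAST_1.id(),
        new ObjectMapper(),
        Duration.ofMillis(1000L));

// Chat model with default options applied to every request
BedrockAnthropicChatModel chatModel = new BedrockAnthropicChatModel(anthropicApi,
        AnthropicChatOptions.builder()
                .temperature(0.6)
                .topK(10)
                .topP(0.8)
                .maxTokensToSample(100)
                .anthropicVersion(AnthropicChatBedrockApi.DEFAULT_ANTHROPIC_VERSION)
                .build());

ChatResponse response = chatModel.call(
        new Prompt("Generate the names of 5 famous pirates."));

// Or with streaming responses
Flux<ChatResponse> responseStream = chatModel.stream(
        new Prompt("Generate the names of 5 famous pirates."));
```

Low-level AnthropicChatBedrockApi Client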

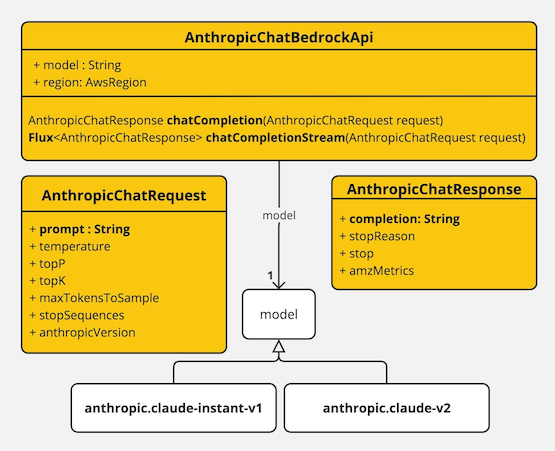

The AnthropicChatBedrockApi provides is lightweight Java client on top of AWS Bedrock Anthropic Claude models.

Following class diagram illustrates the AnthropicChatBedrockApi interface and building blocks:

The client supports the anthropic.claude-instant-v1, anthropic.claude-v2, and anthropic.claude-v2:1 models for both synchronous (e.g. chatCompletion()) and streaming (e.g. chatCompletionStream()) responses.
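With streaming, the response arrives as a sequence of chunks rather than as one object. Assuming each chunk carries the next fragment of the completion text and the final chunk carries the stop reason, the full answer is the fragments joined in arrival order. Chunk and StreamAggregation below are hypothetical stand-ins that sketch this step with plain Java collections; they are not the AnthropicChatResponse API.

```java
import java.util.List;
import java.util.Objects;
import java.util.stream.Collectors;

// Hypothetical stand-in for one streamed response chunk.
record Chunk(String completion, String stopReason) {
}

final class StreamAggregation {

    private StreamAggregation() {
    }

    // Joins the text fragments in arrival order, skipping chunks without text.
    static String fullText(List<Chunk> chunks) {
        return chunks.stream()
                .map(Chunk::completion)
                .filter(Objects::nonNull)
                .collect(Collectors.joining());
    }

    // Assumes the stop reason is reported on the last chunk.
    static String stopReason(List<Chunk> chunks) {
        return chunks.isEmpty() ? null : chunks.get(chunks.size() - 1).stopReason();
    }
}
```

The plain-list version keeps the sketch dependency-free; in a reactive pipeline the same joining would typically be done on the Flux itself rather than after blocking.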

Here is a simple snippet showing how to use the API programmatically:

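```java
import java.time.Duration;
import java.util.List;

import reactor.core.publisher.Flux;
import software.amazon.awssdk.regions.Region;

import org.springframework.ai.bedrock.anthropic.api.AnthropicChatBedrockApi;
import org.springframework.ai.bedrock.anthropic.api.AnthropicChatBedrockApi.AnthropicChatRequest;
import org.springframework.ai.bedrock.anthropic.api.AnthropicChatBedrockApi.AnthropicChatResponse;
import org.springframework.ai.bedrock.anthropic.api.AnthropicChatBedrockApi.AnthropicModel;

AnthropicChatBedrockApi anthropicChatApi = new AnthropicChatBedrockApi(
        AnthropicModel.CLAUDE_V2.id(), Region.US_EAST_1.id(), Duration.ofMillis(1000L));

AnthropicChatRequest request = AnthropicChatRequest
        .builder(String.format(AnthropicChatBedrockApi.PROMPT_TEMPLATE, "Name 3 famous pirates"))
        .temperature(0.8)
        .maxTokensToSample(300)
        .topK(10)
        .build();

// Sync request
AnthropicChatResponse response = anthropicChatApi.chatCompletion(request);

// Streaming request
Flux<AnthropicChatResponse> responseStream = anthropicChatApi.chatCompletionStream(request);

List<AnthropicChatResponse> responses = responseStream.collectList().block();
```

See the AnthropicChatBedrockApi.java JavaDoc for further information.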
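Claude 2's text completion format expects the prompt to alternate \n\nHuman: and \n\nAssistant: turns and to end with an open \n\nAssistant: turn, which is the role AnthropicChatBedrockApi.PROMPT_TEMPLATE plays in the low-level snippet. The sketch below builds such prompts by hand; ClaudeV2Prompts and its TEMPLATE constant are hypothetical, and the exact spacing of the real PROMPT_TEMPLATE may differ.

```java
import java.util.List;

// Hypothetical helpers producing Claude 2 text-completion prompts.
final class ClaudeV2Prompts {

    // Assumed single-turn template; the real PROMPT_TEMPLATE may differ in spacing.
    static final String TEMPLATE = "\n\nHuman: %s\n\nAssistant:";

    private ClaudeV2Prompts() {
    }

    static String singleTurn(String userText) {
        return String.format(TEMPLATE, userText);
    }

    // Turns alternate Human, Assistant, Human, ... and must end on a Human turn,
    // so that the prompt can close with an open Assistant turn.
    static String conversation(List<String> turns) {
        if (turns.size() % 2 == 0) {
            throw new IllegalArgumentException("Expected an odd number of turns, starting and ending with Human");
        }
        StringBuilder prompt = new StringBuilder();
        for (int i = 0; i < turns.size(); i++) {
            String speaker = (i % 2 == 0) ? "Human" : "Assistant";
            prompt.append("\n\n").append(speaker).append(": ").append(turns.get(i));
        }
        return prompt.append("\n\nAssistant:").toString();
    }
}
```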