Titan Chat

Following the Bedrock recommendations, Spring AI is transitioning to Amazon Bedrock’s Converse API for all chat conversation implementations.

While the existing


Amazon Titan foundation models (FMs) provide customers with a breadth of high-performing image, multimodal embeddings, and text model choices, via a fully managed API. Amazon Titan models are created by AWS and pretrained on large datasets, making them powerful, general-purpose models built to support a variety of use cases, while also supporting the responsible use of AI. Use them as is or privately customize them with your own data.

The AWS Bedrock Titan Model Page and the Amazon Bedrock User Guide contain detailed information on how to use the AWS-hosted model.

Prerequisites

Refer to the Spring AI documentation on Amazon Bedrock for setting up API access.

Add Repositories and BOM

Spring AI artifacts are published in Spring Milestone and Snapshot repositories. Refer to the Repositories section to add these repositories to your build system.

To help with dependency management, Spring AI provides a BOM (bill of materials) to ensure that a consistent version of Spring AI is used throughout the entire project. Refer to the Dependency Management section to add the Spring AI BOM to your build system.

Auto-configuration

Add the spring-ai-bedrock-ai-spring-boot-starter dependency to your project’s Maven pom.xml file:

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-bedrock-ai-spring-boot-starter</artifactId>

</dependency>

or to your Gradle build.gradle build file:

dependencies {

implementation 'org.springframework.ai:spring-ai-bedrock-ai-spring-boot-starter'

}

Refer to the Dependency Management section to add the Spring AI BOM to your build file.

Enable Titan Chat

By default the Titan model is disabled.

To enable it, set the spring.ai.bedrock.titan.chat.enabled property to true.

Exporting an environment variable is one way to set this configuration property:

export SPRING_AI_BEDROCK_TITAN_CHAT_ENABLED=true

Chat Properties
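The properties listed below can be supplied as environment variables in the same way as SPRING_AI_BEDROCK_TITAN_CHAT_ENABLED. The following is a minimal sketch of the naming convention Spring Boot documents for this (replace dots with underscores, remove dashes, convert to upper case). The class and method names are hypothetical and exist only for illustration; Spring Boot performs this binding itself.

```java
import java.util.Locale;

// Hypothetical helper, not part of Spring AI or Spring Boot.
class EnvNameSketch {

    // Dots become underscores, dashes are removed, the result is upper-cased.
    static String toEnvName(String propertyName) {
        return propertyName
                .replace('.', '_')
                .replace("-", "")
                .toUpperCase(Locale.ROOT);
    }

    public static void main(String[] args) {
        System.out.println(toEnvName("spring.ai.bedrock.titan.chat.enabled"));
        System.out.println(toEnvName("spring.ai.bedrock.aws.access-key"));
    }
}
```

Note that dashes are dropped rather than converted, so access-key becomes ACCESSKEY.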

The prefix spring.ai.bedrock.aws is the property prefix to configure the connection to AWS Bedrock.

| Property | Description | Default |
|---|---|---|
| spring.ai.bedrock.aws.region | AWS region to use. | us-east-1 |
| spring.ai.bedrock.aws.timeout | AWS timeout to use. | 5m |
| spring.ai.bedrock.aws.access-key | AWS access key. | - |
| spring.ai.bedrock.aws.secret-key | AWS secret key. | - |
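The timeout values used on this page (5m as the default, 1000ms in the sample configuration) look like Spring Boot's simple duration format, in which a number is followed by an optional unit suffix and a bare number is read as milliseconds. Assuming the timeout property is bound to a java.time.Duration in that format, the sketch below shows how such values would be interpreted. The parser is a hypothetical illustration, not Spring Boot's own code, and it ignores signs and ISO-8601 values.

```java
import java.time.Duration;
import java.util.Locale;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical parser for values such as "5m" or "1000ms".
class SimpleDurationSketch {

    private static final Pattern SIMPLE = Pattern.compile("^(\\d+)(ns|us|ms|s|m|h|d)?$");

    static Duration parse(String value) {
        Matcher matcher = SIMPLE.matcher(value.trim().toLowerCase(Locale.ROOT));
        if (!matcher.matches()) {
            throw new IllegalArgumentException("Not a simple duration: " + value);
        }
        long amount = Long.parseLong(matcher.group(1));
        // A missing suffix is treated as milliseconds.
        String unit = matcher.group(2) == null ? "ms" : matcher.group(2);
        switch (unit) {
            case "ns": return Duration.ofNanos(amount);
            case "us": return Duration.ofNanos(amount * 1_000L);
            case "ms": return Duration.ofMillis(amount);
            case "s": return Duration.ofSeconds(amount);
            case "m": return Duration.ofMinutes(amount);
            case "h": return Duration.ofHours(amount);
            default: return Duration.ofDays(amount); // "d"
        }
    }

    public static void main(String[] args) {
        System.out.println(parse("5m"));
        System.out.println(parse("1000ms"));
    }
}
```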

The prefix spring.ai.bedrock.titan.chat is the property prefix that configures the chat model implementation for Titan.

| Property | Description | Default |
|---|---|---|
| spring.ai.bedrock.titan.chat.enabled | Enable the Bedrock Titan chat model. Disabled by default. | false |
| spring.ai.bedrock.titan.chat.model | The model ID to use. See TitanChatBedrockApi#TitanChatModel for the supported models. | amazon.titan-text-lite-v1 |
| spring.ai.bedrock.titan.chat.options.temperature | Controls the randomness of the output. Values range over [0.0, 1.0]. | 0.7 |
| spring.ai.bedrock.titan.chat.options.topP | The maximum cumulative probability of tokens to consider when sampling. | AWS Bedrock default |
| spring.ai.bedrock.titan.chat.options.stopSequences | Configure up to four sequences that the model recognizes. After a stop sequence, the model stops generating further tokens. The returned text doesn’t contain the stop sequence. | AWS Bedrock default |
| spring.ai.bedrock.titan.chat.options.maxTokenCount | Specify the maximum number of tokens to use in the generated response. Note that the models may stop before reaching this maximum. This parameter only specifies the absolute maximum number of tokens to generate. We recommend a limit of 4,000 tokens for optimal performance. | AWS Bedrock default |
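The table states two explicit constraints: temperature lies in [0.0, 1.0] and at most four stop sequences may be configured. A minimal sketch of checking option values against those constraints before sending a request might look like the following. The class, the method, and the extra rule that maxTokenCount must be positive are assumptions made for illustration; they are not part of Spring AI.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical pre-flight check; null means "use the AWS Bedrock default".
class TitanOptionsCheckSketch {

    static List<String> validate(Double temperature, List<String> stopSequences, Integer maxTokenCount) {
        List<String> problems = new ArrayList<>();
        if (temperature != null && (temperature < 0.0 || temperature > 1.0)) {
            problems.add("temperature must be within [0.0, 1.0]");
        }
        if (stopSequences != null && stopSequences.size() > 4) {
            problems.add("at most four stop sequences are allowed");
        }
        // Assumption: a non-positive token limit is never meaningful.
        if (maxTokenCount != null && maxTokenCount <= 0) {
            problems.add("maxTokenCount must be positive");
        }
        return problems;
    }

    public static void main(String[] args) {
        System.out.println(validate(0.7, List.of("|"), 100));
        System.out.println(validate(1.5, List.of("a", "b", "c", "d", "e"), 100));
    }
}
```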

Look at the TitanChatBedrockApi#TitanChatModel for other model IDs.

Supported values are: amazon.titan-text-lite-v1, amazon.titan-text-express-v1 and amazon.titan-text-premier-v1:0.

Model ID values can also be found in the AWS Bedrock documentation for base model IDs.
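To make the relationship between the constants and the model ID strings concrete, here is a small stand-in enum built only from the three IDs listed above. It is a hypothetical mirror for illustration: the real type is TitanChatBedrockApi#TitanChatModel, and the constant names and the fromId helper shown here are assumptions.

```java
import java.util.Arrays;
import java.util.Optional;

// Hypothetical stand-in for TitanChatBedrockApi#TitanChatModel.
class TitanModelIdSketch {

    enum Model {
        TITAN_TEXT_LITE_V1("amazon.titan-text-lite-v1"),
        TITAN_TEXT_EXPRESS_V1("amazon.titan-text-express-v1"),
        TITAN_TEXT_PREMIER_V1("amazon.titan-text-premier-v1:0");

        private final String id;

        Model(String id) {
            this.id = id;
        }

        String id() {
            return this.id;
        }
    }

    // Resolves a configured model ID string, or returns empty if it is not listed.
    static Optional<Model> fromId(String id) {
        return Arrays.stream(Model.values())
                .filter(model -> model.id().equals(id))
                .findFirst();
    }

    public static void main(String[] args) {
        System.out.println(fromId("amazon.titan-text-express-v1"));
        System.out.println(fromId("not-a-model"));
    }
}
```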

All properties prefixed with spring.ai.bedrock.titan.chat.options can be overridden at runtime by adding request-specific Runtime Options to the Prompt call.

Runtime Options

BedrockTitanChatOptions.java provides model configuration options such as temperature and topP.

On start-up, the default options can be configured with the BedrockTitanChatModel(api, options) constructor or the spring.ai.bedrock.titan.chat.options.* properties.

At runtime, you can override the default options by adding new, request-specific options to the Prompt call.

For example, to override the default temperature for a specific request:

ChatResponse response = chatModel.call(
    new Prompt(
        "Generate the names of 5 famous pirates.",
        BedrockTitanChatOptions.builder()
            .temperature(0.4)
            .build()
    ));

In addition to the model-specific BedrockTitanChatOptions, you can use a portable ChatOptions instance, created with ChatOptionsBuilder#builder().
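The override behaviour described in this section can be pictured as a field-by-field merge in which any value set on the request wins and unset values fall back to the start-up defaults. The sketch below illustrates that idea with a hypothetical Options record; it is not Spring AI's actual merge code, and the record and method names are assumptions.

```java
// Hypothetical illustration of "request options override defaults".
class OptionsMergeSketch {

    // null means "not set on this level".
    record Options(Double temperature, Double topP, Integer maxTokenCount) {}

    static Options merge(Options defaults, Options request) {
        if (request == null) {
            return defaults;
        }
        return new Options(
                request.temperature() != null ? request.temperature() : defaults.temperature(),
                request.topP() != null ? request.topP() : defaults.topP(),
                request.maxTokenCount() != null ? request.maxTokenCount() : defaults.maxTokenCount());
    }

    public static void main(String[] args) {
        Options defaults = new Options(0.7, 0.9, 200);
        Options request = new Options(0.4, null, null);
        // Only temperature is replaced; topP and maxTokenCount keep their defaults.
        System.out.println(merge(defaults, request));
    }
}
```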

Sample Controller

Create a new Spring Boot project and add the spring-ai-bedrock-ai-spring-boot-starter to your pom (or gradle) dependencies.

Add an application.properties file under the src/main/resources directory to enable and configure the Titan chat model:

spring.ai.bedrock.aws.region=eu-central-1

spring.ai.bedrock.aws.timeout=1000ms

spring.ai.bedrock.aws.access-key=${AWS_ACCESS_KEY_ID}

spring.ai.bedrock.aws.secret-key=${AWS_SECRET_ACCESS_KEY}

spring.ai.bedrock.titan.chat.enabled=true

spring.ai.bedrock.titan.chat.options.temperature=0.8

Replace the region, access-key, and secret-key values with your own AWS region and credentials.

This will create a BedrockTitanChatModel implementation that you can inject into your class.

Here is an example of a simple @RestController class that uses the chat model for text generation.

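import java.util.Map;

import org.springframework.ai.bedrock.titan.BedrockTitanChatModel;
import org.springframework.ai.chat.messages.UserMessage;
import org.springframework.ai.chat.model.ChatResponse;
import org.springframework.ai.chat.prompt.Prompt;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;
import reactor.core.publisher.Flux;

@RestController
public class ChatController {

    private final BedrockTitanChatModel chatModel;

    @Autowired
    public ChatController(BedrockTitanChatModel chatModel) {
        this.chatModel = chatModel;
    }

    // Returns the complete generation as a single JSON object
    @GetMapping("/ai/generate")
    public Map<String, String> generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
        return Map.of("generation", this.chatModel.call(message));
    }

    // Streams the response as it is produced
    @GetMapping("/ai/generateStream")
    public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
        Prompt prompt = new Prompt(new UserMessage(message));
        return this.chatModel.stream(prompt);
    }
}

Manual Configuration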

The BedrockTitanChatModel implements the ChatModel and StreamingChatModel interfaces and uses the Low-level TitanChatBedrockApi Client to connect to the Bedrock Titan service.

Add the spring-ai-bedrock dependency to your project’s Maven pom.xml file:

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-bedrock</artifactId>

</dependency>

or to your Gradle build.gradle build file:

dependencies {

implementation 'org.springframework.ai:spring-ai-bedrock'

}

Refer to the Dependency Management section to add the Spring AI BOM to your build file.

Next, create a BedrockTitanChatModel and use it for text generation:

TitanChatBedrockApi titanApi = new TitanChatBedrockApi(
        TitanChatModel.TITAN_TEXT_EXPRESS_V1.id(),
        EnvironmentVariableCredentialsProvider.create(),
        Region.US_EAST_1.id(),
        new ObjectMapper(),
        Duration.ofMillis(1000L));

// Default options applied to every request made through this model
BedrockTitanChatModel chatModel = new BedrockTitanChatModel(titanApi,
        BedrockTitanChatOptions.builder()
                .temperature(0.6)
                .topP(0.8)
                .maxTokenCount(100)
                .build());

ChatResponse response = chatModel.call(
        new Prompt("Generate the names of 5 famous pirates."));

// Or with streaming responses
Flux<ChatResponse> streamResponse = chatModel.stream(
        new Prompt("Generate the names of 5 famous pirates."));

Low-level TitanChatBedrockApi Client

The TitanChatBedrockApi provides is lightweight Java client on top of AWS Bedrock Bedrock Titan models.

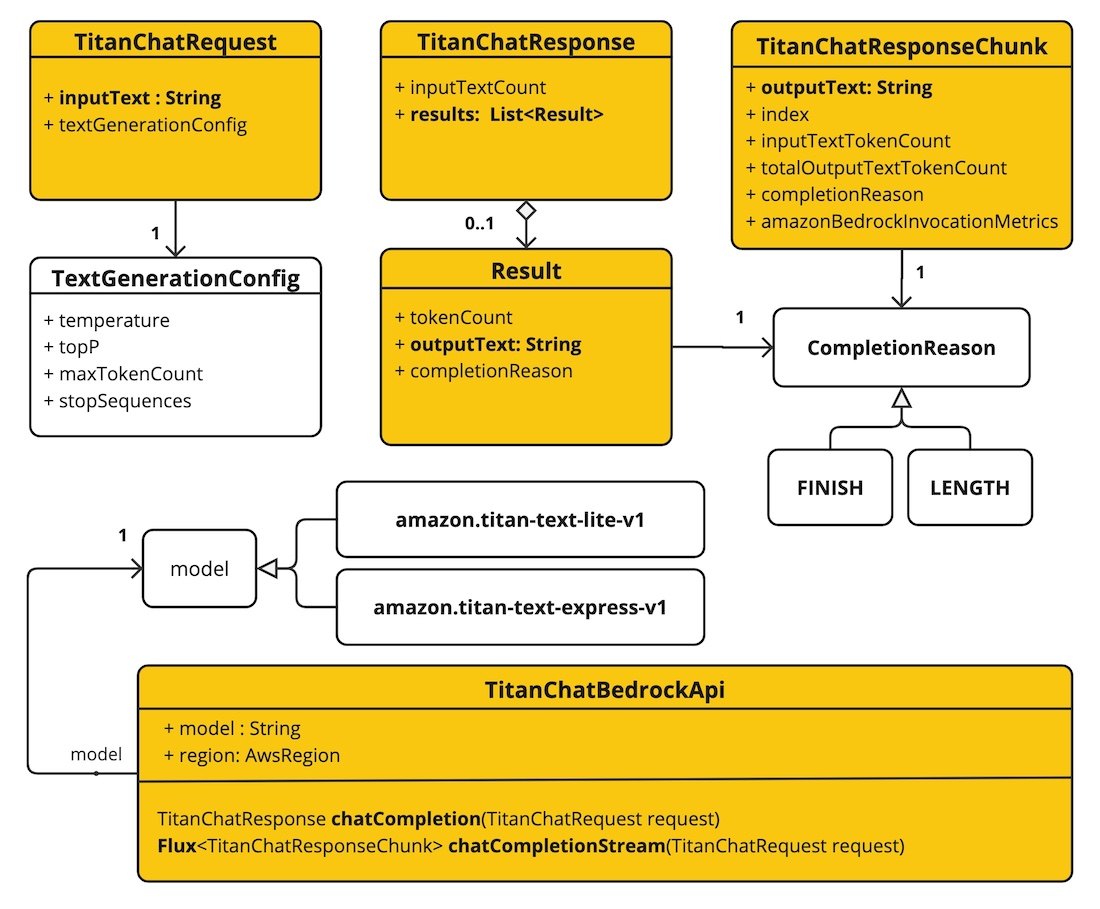

Following class diagram illustrates the TitanChatBedrockApi interface and building blocks:

The client supports the amazon.titan-text-lite-v1 and amazon.titan-text-express-v1 models for both synchronous (e.g. chatCompletion()) and streaming (e.g. chatCompletionStream()) responses.

Here is a simple snippet showing how to use the API programmatically:

TitanChatBedrockApi titanBedrockApi = new TitanChatBedrockApi(
        TitanChatModel.TITAN_TEXT_EXPRESS_V1.id(),
        Region.US_EAST_1.id(),
        Duration.ofMillis(1000L));

TitanChatRequest titanChatRequest = TitanChatRequest.builder("Give me the names of 3 famous pirates?")
        .temperature(0.5)
        .topP(0.9)
        .maxTokenCount(100)
        .stopSequences(List.of("|"))
        .build();

// Synchronous request
TitanChatResponse response = titanBedrockApi.chatCompletion(titanChatRequest);

// Streaming request
Flux<TitanChatResponseChunk> streamResponse = titanBedrockApi.chatCompletionStream(titanChatRequest);

// Block until the stream completes and collect all chunks
List<TitanChatResponseChunk> results = streamResponse.collectList().block();

See the TitanChatBedrockApi JavaDoc for further information.
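The collectList().block() call in the snippet above yields the streamed chunks as an ordered list, which usually still has to be stitched into one string. The sketch below shows one way that could be done. It uses a hypothetical Chunk record with an outputText component as a stand-in for TitanChatResponseChunk; the accessor name on the real type is an assumption, so check the JavaDoc before relying on it.

```java
import java.util.List;
import java.util.Objects;
import java.util.stream.Collectors;

// Hypothetical stand-in types; not the Spring AI classes.
class ChunkJoinSketch {

    record Chunk(String outputText) {}

    // Concatenates chunk texts in arrival order, skipping chunks without text.
    static String joinText(List<Chunk> chunks) {
        return chunks.stream()
                .map(Chunk::outputText)
                .filter(Objects::nonNull)
                .collect(Collectors.joining());
    }

    public static void main(String[] args) {
        List<Chunk> chunks = List.of(new Chunk("Black"), new Chunk(null), new Chunk("beard"));
        System.out.println(joinText(chunks));
    }
}
```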